by Charlotte Johnson | Dec 19, 2024 | Artificial Intelligence, Blog, Latest Post |

Measuring how well AI systems work is essential to their success. Sound evaluation and well-chosen AI performance metrics help improve efficiency and ensure that systems meet their goals. Data scientists use performance metrics and standard data sets to understand their models better. This understanding helps them adjust and enhance their solutions for various uses.

This blog post explores AI performance metrics in several areas as part of a comprehensive AI service strategy. It explains why these metrics matter, how to use them, and best practices to follow. We will review the key metrics for classification, regression, clustering, and some special AI areas. We will also talk about how to choose the right metrics for your project.

Key Highlights

- Read expert advice on measuring AI performance in this helpful blog.

- Learn key metrics to check AI model performance.

- See why performance metrics matter for connecting AI development to business goals.

- Understand metrics for classification, regression, and clustering in several AI tasks.

- Discover special metrics like the BLEU score for NLP and IoU for object detection.

- Get tips on picking the right metrics for your AI project and how to avoid common mistakes.

Understanding AI Performance Metrics

AI performance metrics show how good a machine learning model is. They tell us how well the AI system works and give us ideas to improve it. The main metrics we pay attention to are:

- Precision: This tells us how many positive identifications were correct.

- Recall: This measures how well the model can find actual positive cases.

- F1 Score: This combines precision and recall into a single score.

Data scientists use these methods and others that match the needs of the project. This ensures good performance and continued progress.

The Importance of Performance Metrics in AI Development

AI performance metrics are pivotal for:

Model Selection and Optimization:

- We use metrics to pick the best model.

- They also help us change settings during training.

Business Alignment:

- Metrics help ensure AI models reach business goals.

- For instance, a fraud detection system focuses on high recall. This way, it can catch most fraud cases, even if that means flagging some legitimate transactions as fraud (false positives).

Tracking Model Performance Over Time:

- Regular checks can spot issues like data drift.

- Metrics help us retrain models quickly to keep their performance strong.

Data Quality Assessment:

- Metrics can reveal data issues like class imbalances or outliers.

- This leads to better data preparation and cleaner datasets.

Key Categories of AI Performance Metrics

Different AI tasks call for different metrics. Here’s a list by type:

1. Classification Metrics

- Classification models sort data into specific groups.

- Common ways to measure their performance include:

- Accuracy: This shows how correct the results are. However, it can be misleading with data that is unbalanced.

- Precision and Recall: These help us understand the trade-offs in model performance.

- F1 Score: This is a balanced measure to use when both precision and recall are very important.

2. Regression Metrics

- Regression models forecast continuous values, such as prices or temperatures.

- Mean Absolute Error (MAE): This shows the average size of the errors.

- Root Mean Squared Error (RMSE): This highlights larger errors by squaring them.

- R-Squared: This describes how well the model fits the data.

3. Clustering Metrics

- Clustering metrics help to measure how good the groups are in unsupervised learning.

- Silhouette Score: This score helps us see how well the items in a cluster fit together. It also shows how far apart the clusters are from one another.

- Davies-Bouldin Index: This index checks how alike or different the clusters are. A lower score means better results.

Exploring Classification Metrics

Classification models are very important in AI. To see how well they work, we need to consider more than just accuracy.
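To see why accuracy alone can mislead, here is a minimal sketch in plain Python. The data set and the always-negative "model" are made up for illustration; the point is only that a high accuracy can hide a model that never finds a positive case.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

# Hypothetical imbalanced data: 95 negatives (0) and 5 positives (1).
y_true = [0] * 95 + [1] * 5
# A trivial "model" that always predicts the majority class.
y_pred = [0] * 100

print(accuracy(y_true, y_pred))  # 0.95, yet not one positive case is found
```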

Precision and Recall: Finding the Balance

- Precision: This tells us how many positive predictions are correct. High precision matters a lot for tasks like spam detection. It stops real emails from being incorrectly marked as spam.

- Recall: This checks how many true positives are found. High recall is crucial in areas like medical diagnoses. Missing true positives can cause serious issues.

Choosing between precision and recall depends on what you need the most.
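As a rough illustration, both metrics can be computed from counts of true positives (TP), false positives (FP), and false negatives (FN). The function name and the sample labels below are assumptions made for this sketch.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Return (precision, recall) for the given positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    # Guard against division by zero when nothing is predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Hypothetical labels: 4 actual positives, 3 predicted positives.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

precision, recall = precision_recall(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here the model is right about two of its three positive predictions but finds only two of the four actual positives, so precision is higher than recall.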

F1 Score: A Balanced Approach

The F1 score is a way to balance precision and recall. It treats both of them equally.

- It is the harmonic mean of precision and recall, so a low value in either one pulls the score down.

- It is useful when you need to balance false positives and false negatives.

The F1 score matters in information retrieval systems. It helps find all the relevant documents. At the same time, it reduces the number of unrelated ones.
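The F1 score is conventionally defined as the harmonic mean, F1 = 2PR / (P + R). The short sketch below, with made-up precision and recall values, compares it with a plain arithmetic average to show how F1 penalizes an imbalance between the two.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical model: very precise, but it misses most positives.
precision, recall = 0.9, 0.1

arithmetic_mean = (precision + recall) / 2
f1 = f1_score(precision, recall)

print(round(arithmetic_mean, 2))  # 0.5
print(round(f1, 2))               # 0.18
```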

Understanding Regression Metrics

Regression models help predict continuous values. To do this, we need certain methods to check how well they are performing.

Mean Absolute Error (MAE)

- Simplicity: Calculates the average of the absolute prediction errors.

- Use Case: Useful when outliers should not dominate the score or when the direction of the error is not important.
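A minimal sketch of MAE in plain Python follows. The sample values are invented for illustration.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of the absolute differences between truth and prediction."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical true values and predictions.
y_true = [3.0, 5.0, 2.5, 7.0]
y_pred = [2.5, 5.0, 4.0, 8.0]

print(mean_absolute_error(y_true, y_pred))  # 0.75
```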

Root Mean Squared Error (RMSE)

- Emphasizes Large Errors: Squares each error before averaging, then takes the square root. This makes bigger mistakes count more.

- Use Case: This approach works well for tasks where large errors are especially costly.
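The sketch below, using made-up numbers, compares RMSE with MAE on two sets of predictions that have the same total absolute error. RMSE rises when that error is concentrated in one large miss, while MAE stays the same.

```python
import math

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Square root of the mean of the squared errors."""
    mse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)
    return math.sqrt(mse)

y_true = [10.0, 10.0, 10.0, 10.0]
small_errors = [11.0, 9.0, 11.0, 9.0]      # four errors of size 1
one_big_error = [10.0, 10.0, 10.0, 14.0]   # a single error of size 4

print(mae(y_true, small_errors), rmse(y_true, small_errors))    # 1.0 1.0
print(mae(y_true, one_big_error), rmse(y_true, one_big_error))  # 1.0 2.0
```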

R-Squared

- Explains Fit: It shows how much of the variation in the data the model explains.

- Use Case: It helps to check the overall quality of the model in tasks that involve regression.
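R-squared is commonly computed as 1 minus the ratio of the residual sum of squares to the total sum of squares. Here is a small sketch under that definition, with invented sample values.

```python
def r_squared(y_true, y_pred):
    """1 - SS_res / SS_tot. Undefined when all true values are identical."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

# Hypothetical true values and two sets of predictions.
y_true = [1.0, 2.0, 3.0, 4.0]
close_fit = [1.1, 1.9, 3.2, 3.8]
always_mean = [2.5, 2.5, 2.5, 2.5]

print(round(r_squared(y_true, close_fit), 2))  # 0.98
print(r_squared(y_true, always_mean))          # 0.0
```

A model that always predicts the mean scores 0, which is why R-squared is often read as "improvement over the simplest baseline."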

Clustering Metrics: Evaluating Unsupervised Models

Unsupervised learning often depends on clustering, where tools like the Silhouette Score and Davies-Bouldin Index are key AI performance metrics for evaluating the effectiveness of the clusters.

Silhouette Coefficient

- Measures Cohesion and Separation: The values range from -1 to 1. A higher value shows that points sit close to their own cluster and far from other clusters.

- Use Case: This helps to see if the groups are clear and separate from one another.
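For each point, the silhouette value is (b - a) / max(a, b), where a is the mean distance to the other points in its own cluster and b is the smallest mean distance to the points of any other cluster. The sketch below is a plain-Python version under those definitions, assuming Euclidean distance and at least two clusters. The points and labels are made up.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette value over all points (needs at least 2 clusters)."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)

    scores = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1:
            scores.append(0.0)  # common convention for one-point clusters
            continue
        # a: mean distance to the other members of the same cluster
        # (the distance from the point to itself contributes 0 to the sum).
        a = sum(math.dist(point, q) for q in own) / (len(own) - 1)
        # b: smallest mean distance to the members of any other cluster.
        b = min(
            sum(math.dist(point, q) for q in other) / len(other)
            for other_label, other in clusters.items()
            if other_label != label
        )
        scores.append((b - a) / max(a, b))
    return sum(scores) / len(scores)

points = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = silhouette_score(points, [0, 0, 1, 1])  # groups nearby points
bad = silhouette_score(points, [0, 1, 0, 1])   # groups distant points

print(f"good={good:.2f} bad={bad:.2f}")
```

The sensible grouping scores close to 1, while the grouping that pairs distant points scores below 0.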

Davies-Bouldin Index

- Checks How Similar Clusters Are: A lower number shows better grouping.

- Use Case: It’s simple to grasp, making it a great choice for initial clustering checks.
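As a sketch of how the index is usually computed: for each cluster, take the worst-case ratio of combined within-cluster scatter to the distance between centroids, then average those ratios. The code below assumes Euclidean distance and at least two clusters, and the sample points are invented.

```python
import math

def davies_bouldin(points, labels):
    """Davies-Bouldin index; lower values mean tighter, better-separated clusters."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)

    # Centroid of each cluster: the coordinate-wise mean of its points.
    centroids = {
        label: tuple(sum(coord) / len(pts) for coord in zip(*pts))
        for label, pts in clusters.items()
    }
    # Scatter: mean distance from each point to its cluster centroid.
    scatter = {
        label: sum(math.dist(p, centroids[label]) for p in pts) / len(pts)
        for label, pts in clusters.items()
    }

    total = 0.0
    for i in clusters:
        total += max(
            (scatter[i] + scatter[j]) / math.dist(centroids[i], centroids[j])
            for j in clusters
            if j != i
        )
    return total / len(clusters)

labels = [0, 0, 1, 1]
tight = davies_bouldin([(0, 0), (0, 1), (10, 0), (10, 1)], labels)
loose = davies_bouldin([(0, 0), (0, 4), (5, 0), (5, 4)], labels)

print(f"tight={tight:.2f} loose={loose:.2f}")
```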

Navigating Specialized Metrics for Niche Applications

Fields like NLP and computer vision face distinct challenges, so they need specialized AI performance metrics to gauge success.

BLEU Score in NLP

- Checks Text Similarity: This is helpful for tasks like translating text. It measures how closely the generated text matches one or more reference texts.

- Limitations: It mainly counts overlapping words and phrases (n-grams). This can overlook deeper meanings in the language.
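The building block of BLEU is a clipped n-gram precision. The sketch below computes only that piece for a single reference. It is a simplification, not full BLEU, which also combines several n-gram sizes and applies a brevity penalty. The sentences and function names are made up for illustration.

```python
from collections import Counter

def ngrams(tokens, n):
    """All consecutive n-token tuples in a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(candidate, reference, n):
    """Share of candidate n-grams that also appear in the reference (clipped)."""
    cand_counts = Counter(ngrams(candidate, n))
    ref_counts = Counter(ngrams(reference, n))
    # Clip each candidate count by how often the n-gram occurs in the reference.
    overlap = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
    total = sum(cand_counts.values())
    return overlap / total if total else 0.0

reference = "the cat is on the mat".split()
candidate = "the cat sat on the mat".split()

p1 = modified_precision(candidate, reference, 1)  # 5 of 6 words match
p2 = modified_precision(candidate, reference, 2)  # 3 of 5 word pairs match
print(f"unigram={p1:.2f} bigram={p2:.2f}")
```

Because the score only counts matching word sequences, a correct paraphrase that uses different words would score poorly, which is the limitation noted above.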

Intersection Over Union (IoU) in Object Detection

- Measures Overlap Accuracy: This checks how well predicted bounding boxes fit with the real ones in object detection tasks.

- Use Case: It is very important for areas like self-driving cars and surveillance systems.
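IoU is the area where two boxes overlap divided by the area they cover together. Here is a minimal sketch for axis-aligned boxes, assuming each box is given as (x_min, y_min, x_max, y_max). That box format and the sample coordinates are assumptions for this example.

```python
def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x_min, y_min, x_max, y_max)."""
    # Corners of the overlapping rectangle, if there is one.
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])

    # Width and height are clamped at zero when the boxes do not overlap.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union else 0.0

ground_truth = (0, 0, 10, 10)
prediction = (5, 5, 15, 15)

print(round(iou(ground_truth, prediction), 3))  # 0.143
```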

Advanced Metrics for Enhanced Model Evaluation

Using advanced tools helps to achieve a comprehensive evaluation through precise AI performance metrics.

AUC-ROC for Binary Classification

- Overview: Examines how a model performs across different classification thresholds.

- Benefit: Provides one clear score (AUC) to indicate how well the model can distinguish between classes.
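One way to read AUC is as the probability that a randomly chosen positive example gets a higher score than a randomly chosen negative one. The sketch below computes it directly from that pairwise view, counting ties as half. It is fine for small data but slow for large sets, and the labels and scores are invented.

```python
def roc_auc(y_true, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(positives) * len(negatives))

# Hypothetical labels and model scores.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]

print(roc_auc(y_true, scores))  # 0.75
```

A score of 0.5 corresponds to random ranking and 1.0 to perfect separation of the two classes.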

GAN Evaluation Challenges

- Special Metrics Needed: The Inception Score and Fréchet Inception Distance are important. They help us see the quality and range of the data created.

Selecting the Right Metrics for Your AI Project

Aligning our metrics with project goals helps us assess our work properly. This way, we can gain valuable insights through the effective use of AI performance metrics.

Matching Metrics to Goals

- Example 1: When dealing with a customer service chatbot, focus on customer satisfaction scores and how effectively issues are addressed.

- Example 2: For fraud detection, consider precision, recall, and the F1-score. This can help lower the number of false negatives.

Avoiding Common Pitfalls

- Use several metrics together to see the full picture.

- Address data issues, like class imbalance, by using the appropriate techniques.

Conclusion

AI performance metrics are important for checking and improving models in various AI initiatives. Choosing the right metrics helps match models with business goals. This choice also improves model performance and helps with ongoing development while meeting specific requirements. As AI grows, being aware of new metrics and ethical issues will help data scientists and companies use AI in a responsible way. This knowledge can help unlock the full potential of AI.

Frequently Asked Questions

What is the Significance of Precision and Recall in AI?

Precision and recall matter a lot in classification problems. Precision shows how correct the positive predictions are: it is the share of predicted positives that are true positives. Recall focuses on identifying all actual positive cases: it is the share of actual positives that the model finds, also called the true positive rate. Pushing recall higher often means accepting a few more false positives.

How Do Regression Metrics Differ from Classification Metrics?

Regression metrics tell us how well we can predict continuous values. Classification metrics, on the other hand, measure how good we are at sorting data into specific groups, for example in text classification. One valuable classification tool is the ROC curve, which shows performance across decision thresholds. The evaluation process for each type uses different metrics that suit their goals.

Can You Explain the Importance of Clustering Metrics in AI?

Clustering metrics help us check how well unsupervised learning models work. These models put similar data points together. The metrics focus on two key things: how closely data points stay together in each cluster and how clearly the clusters are separated from each other.

Cluster cohesion tells us how similar the data points are within a cluster.

Separation shows how different the clusters are from each other.

How to Choose the Right Performance Metric for My AI Model?

Choosing the right way to measure performance of the model depends on a few things. It includes the goals of your AI model and the data you are using. Business leaders should pay close attention to customer satisfaction. They should also look at metrics that fit with their overall business objectives.

by Arthur Williams | Dec 18, 2024 | Artificial Intelligence, Blog, Latest Post |

As artificial intelligence (AI) becomes a more significant part of our daily lives, we must consider its ethics. This blog post shares why we need to have rules for AI ethics and provides essential guidelines to follow in AI development services. These include ensuring data privacy by protecting user information, promoting fairness by avoiding biases in AI systems, maintaining transparency by clearly explaining how AI operates, and incorporating human oversight to prevent misuse or errors. By adhering to these AI ethics guidelines and addressing key ethical issues, we can benefit from AI while minimizing potential risks.

Key Highlights

- It is important to develop artificial intelligence (AI) in a responsible way. This way, AI can benefit everyone.

- Some important ideas for AI ethics include human agency, transparency, fairness, and data privacy.

- Organizations need to establish rules, watch for ethical risks, and promote responsible AI use.

- Trustworthy AI systems must follow applicable laws and regulations, practice ethics, and work correctly.

- Policymakers play a key role in creating rules and standards for the ethical development and use of AI.

- Ethical considerations, guided by AI Ethics Guidelines, are crucial in the development and use of AI to ensure it benefits society while minimizing risks.

Understanding the Fundamentals of AI Ethics

AI ethics is about building and using artificial intelligence in a way that respects people. The European Commission points out how important this is. We need to think about people’s rights and stand by our shared values. The main goal is to protect everyone’s well-being. To reach this goal, we should focus on important ideas like fairness, accountability, transparency, and privacy. We must also consider how AI affects individuals, communities, and society in general.

AI principles focus on the need to protect civil liberties and avoid harm. We must ensure that AI systems do not create or increase biases and treat everyone fairly. By making ethics a priority in designing, developing, and using AI, we can build systems that people can trust. This way of doing things will help everyone.

The Importance of Ethical Guidelines in AI Development

Ethical guidelines are important for developers, policymakers, and organizations. They help everyone understand AI ethics better. These AI Ethics Guidelines provide clear steps to manage risks and ensure that AI is created and used responsibly. The European Commission’s expert group published its Ethics Guidelines for Trustworthy AI on 8 April 2019, and they emphasize the importance of ethical practices in AI development. This is key to building trustworthy artificial intelligence systems. When stakeholders follow these rules, they can develop dependable AI that adheres to ethical standards. Trustworthy artificial intelligence, guided by AI Ethics Guidelines, can help society and reduce harm.

Technical robustness is very important for ethical AI. It involves building systems that work well, are safe, and make fewer mistakes. Good data governance is also essential for creating ethical AI. This means we must collect, store, and use data properly in the AI process. It is crucial to get consent, protect data privacy, and clearly explain how we use the data.

When developers follow strict ethical standards and focus on data governance, they create trust in AI systems. This trust can lead to more people using AI, which benefits society.

Key Principles Guiding Ethical AI

Ethical development of AI needs to focus on people’s rights and keeping human control. People should stay in control to avoid biased or unfair results from AI. It is also important to explain how AI systems are built and how they make decisions. Doing this helps create trust and responsibility.

Here are some main ideas to consider:

- Human Agency and Oversight: AI should help people make decisions. It needs to let humans take charge when needed. This way, individuals can keep their freedom and not rely only on machines.

- Transparency and Explainability: It is important to be clear about how AI works. We need to give understandable reasons for AI’s choices. This builds trust and helps stakeholders see and fix any problems or biases.

- Fairness and Non-discrimination: AI must be created and trained to treat everyone fairly. It should not have biases that cause unfair treatment or discrimination.

By following these rules and adhering to AI Ethics Guidelines, developers can ensure that AI is used safely and in a fair way.

1. Fairness and Avoiding Bias

Why It Matters:

AI systems learn from past data, which is often shaped by societal biases linked to race, gender, age, or wealth. By not adhering to AI Ethics Guidelines, these systems might accidentally repeat or even amplify such biases, leading to unfair outcomes for certain groups of people.

Guideline:

- Use diverse training data: Include all important groups in the data.

- Check algorithms often: Test AI systems regularly for fairness and bias.

- Measure fairness: Use fairness metrics to find and fix bias in AI predictions or suggestions.

Best Practice:

- Test your AI models carefully with different types of data.

- This helps ensure they work well for all users.
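One simple way to measure fairness is to compare how often each group receives a positive prediction, sometimes called the demographic parity difference. The sketch below is only one of several possible fairness checks, and the predictions and group names are made up for illustration.

```python
def demographic_parity_difference(y_pred, groups):
    """Return (gap, rates): the largest gap in positive-prediction rate between groups."""
    by_group = {}
    for pred, group in zip(y_pred, groups):
        by_group.setdefault(group, []).append(pred)
    # Positive-prediction rate for each group.
    rates = {group: sum(preds) / len(preds) for group, preds in by_group.items()}
    return max(rates.values()) - min(rates.values()), rates

# Hypothetical loan approvals (1 = approved) for two applicant groups.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap, rates = demographic_parity_difference(y_pred, groups)
print(rates)  # {'a': 0.75, 'b': 0.25}
print(gap)    # 0.5
```

A large gap does not prove discrimination on its own, but it signals that the model's behavior across groups deserves a closer look.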

2. Transparency and Explainability

Why It Matters:

AI decision-making can feel confusing. This lack of clarity makes it difficult for users and stakeholders to understand how choices are made. When there is not enough transparency, trust in AI systems can drop. This issue is very important in fields like healthcare, finance, and criminal justice.

Guideline:

- Make AI systems easy to understand: Build models that show clear outcomes. This helps users know how decisions are made.

- Provide simple documentation: Give easy-to-follow explanations about how your AI models work, the data they use, and how they make choices.

Best Practice:

- Use tools like LIME or SHAP.

- These tools explain machine learning models that can be difficult to understand.

- They help make these models clearer for people.

3. Privacy and Data Protection

Why It Matters:

AI systems often need a lot of data, which can include private personal information. Without following AI Ethics Guidelines, mishandling this data can lead to serious problems, such as privacy breaches, security risks, and a loss of trust among users.

Guideline:

- Follow privacy laws: Make sure your AI system follows data protection laws like GDPR (General Data Protection Regulation) and CCPA (California Consumer Privacy Act).

- Reduce data collection: Only collect and keep the data that your AI system needs.

- Use strong security: Keep data safe by encrypting it. Ensure that your AI systems are secure from online threats.

Best Practice:

- Let people manage their data through clear consent options.

- Provide clear information on how their data will be used.

- Being open and honest is key to this process.

4. Accountability and Responsibility

Why It Matters:

When AI systems make mistakes, it is important to know who is responsible. If no one is accountable, fixing the errors becomes difficult. This also makes it hard to assist the people affected by the decisions AI makes.

Guideline:

- Define roles clearly: Make sure specific people or teams take charge of creating, deploying, and monitoring AI systems.

- Establish safety protocols: Design methods for humans to review AI decisions and take action if those choices could hurt anyone.

- Implement a complaint system: Provide users with a way to raise concerns about AI decisions and get responses.

Best Practice:

- Make a simple plan for who is responsible for the AI system.

- Identify who leads the AI system at each step.

- The steps include designing the system, launching it, and reviewing it once it is running.

5. AI for Social Good

Why It Matters:

AI can help solve major issues in the world, such as supporting climate change efforts, improving healthcare access, and reducing poverty. However, adhering to AI Ethics Guidelines is crucial to ensure AI is used to benefit society as a whole, rather than solely prioritizing profit.

Guideline:

- Make AI development fit community values: Use AI to solve important social and environmental issues.

- Collaborate with different groups: Work with policymakers, ethicists, and social scientists to ensure AI helps everyone.

- Promote equal access to AI: Do not make AI systems that only help a few people; instead, work to benefit all of society.

Best Practice:

- Support AI projects that assist people.

- Examples include health screening or support during natural disasters.

- This way, we can create a positive impact.

6. Continuous Monitoring and Evaluation

Why It Matters:

AI technologies are always changing, and a system that worked fine before might face problems later. This often happens due to shifts in data, the environment, or how people use AI, which can lead to unexpected issues. Following AI Ethics Guidelines and conducting regular checks are crucial to ensure ethical standards remain high and systems adapt effectively to these changes.

Guideline:

- Do regular checks: Review AI systems often to ensure they behave ethically.

- Stay updated on AI ethics research: Keep up with new studies in AI ethics. This helps you prepare for future challenges.

- Get opinions from the public: Ask users and stakeholders what they think about AI ethics.

Best Practice:

- Look at your AI systems regularly.

- Have outside experts check them for any ethical problems.

Conclusion

In conclusion, AI ethics are very important when we create and use artificial intelligence and its tools. Sticking to AI Ethics Guidelines helps organizations use AI responsibly. Key ideas like transparency, accountability, and fairness, as outlined in these guidelines, form the foundation of good AI practices. By following these rules, we can gain trust from stakeholders and lower ethical issues. As we advance in the rapidly changing world of AI, putting a focus on AI Ethics Guidelines is crucial for building a future that is fair and sustainable.

Frequently Asked Questions

What Are the Core Components of AI Ethics?

The main ideas about AI ethics are found in guidelines like the AI HLEG's Assessment List for Trustworthy AI (ALTAI). These guidelines help check that AI systems follow the law. They address several important issues. These include human oversight, technical robustness, data governance, and the ethical impact of AI algorithms. This is similar to the policies established in June 2019.

How Can Organizations Implement AI Ethics Guidelines Effectively?

Organizations can create rules for AI ethics. They need to identify any ethical risks that may exist. It is important to encourage teamwork between developers and ethicists. When data is sensitive, such as audio recordings in healthcare, organizations can follow guidelines such as IBM's AI ethics principles or consider EU laws.

by Hannah Rivera | Dec 10, 2024 | Artificial Intelligence, Blog, Latest Post |

The world of artificial intelligence is changing quickly. AI services are driving exciting new developments, such as AutoGPT. This new app gives a peek into the future of AI. In this future, autonomous agents powered by advanced AI services will understand natural language. They can also perform complex tasks with little help from humans. Let’s look at the key ideas and uses of AutoGPT. AutoGPT examples highlight the amazing potential of this tool and its integration with AI services. These advancements can transform how we work and significantly boost productivity.

Key Highlights

- AutoGPT uses artificial intelligence to handle tasks and improve workflows.

- It is an open-source application that relies on OpenAI’s GPT-4 large language model.

- AutoGPT is more than just a chatbot. Users can set big goals instead of just giving simple commands.

- AutoGPT examples include tasks like coding, market research, making content, and automating business processes.

- To start using AutoGPT, you need some technical skills and a paid OpenAI account.

Exploring the Basics of AutoGPT

At its center, AutoGPT relies on generative AI, natural language processing, and text generation skills. These features allow it to grasp and follow instructions in everyday language. This new tool uses a large language model known as GPT-4. It can write text, translate languages, and, most importantly, automate complex tasks in various fields.

AutoGPT is different from older AI systems. It does not need detailed programming steps. Instead, it gets the main goals. Then, it finds the steps by itself to achieve those goals.

This shift in artificial intelligence opens up many ways to automate jobs that were once too tough for machines. For example, AutoGPT can help with writing creative content and doing market research. These examples show how AI can make a big difference in several areas of our lives. AutoGPT proves its wide range of uses, whether it is for creating content or conducting market research.

What is AutoGPT?

AutoGPT is an AI agent that uses OpenAI’s GPT-4 large language model. You can see it as an autonomous AI agent. It helps you take your big goals and split them into smaller tasks. Then, it uses its own smart thinking and the OpenAI API to find the best ways to get those tasks done while keeping your main goal clear.

AutoGPT is unique because it can operate by itself. Unlike chatbots, which require ongoing help from users, AutoGPT can create its own prompts. It gathers information and makes decisions without needing assistance. This results in a truly independent way of working. It helps automate tasks and projects that used to need a lot of human effort.
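The idea of splitting a goal into tasks and working through them can be sketched as a simple loop. This is a toy illustration, not AutoGPT's actual code: `plan` and `execute` are hypothetical stand-ins for the language model calls a real agent would make, and the fixed three-step plan is invented.

```python
from collections import deque

def plan(goal):
    """Hypothetical stand-in for a model call that splits a goal into subtasks."""
    return [f"Research: {goal}", f"Draft: {goal}", f"Review: {goal}"]

def execute(task):
    """Hypothetical stand-in for running one task with tools or a model."""
    return f"done -> {task}"

def run_agent(goal, max_steps=10):
    """Work through the planned tasks in order, up to a step limit."""
    queue = deque(plan(goal))
    results = []
    # The step limit keeps an autonomous loop from running without bound.
    while queue and len(results) < max_steps:
        task = queue.popleft()
        results.append(execute(task))
    return results

for line in run_agent("market trends for electric vehicles"):
    print(line)
```

The step limit reflects a practical concern with any autonomous agent: without a cap, a loop that keeps generating new tasks could run, and spend API credits, indefinitely.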

AutoGPT Examples

1. AutoGPT for Market Research

A new company wants to study market trends for electric vehicles (EVs).

Steps AutoGPT Performs:

- Gathers the newest market reports and news about EVs.

- Highlights important points like sales trends, new competitors, and what consumers want.

- Offers practical plans for the company, like focusing on eco-friendly customers.

- Outcome: Saves weeks of hard research and provides insights for better planning.

2. AutoGPT for Content Creation

The content creator needs support to write blog posts about “The Future of Remote Work.”

Steps AutoGPT Performs:

- Gathers information on remote work trends, tools, and new policies.

- Creates an outline for the blog with parts like “Benefits of Remote Work” and “Technological Innovations.”

- Writes a 1,500-word draft designed for SEO, including a list of important keywords.

- Outcome: The creator gets a full first draft ready for editing, which makes work easier.

3. AutoGPT for Coding Assistance

A developer wants to create a Python script. This script will collect weather data.

Steps AutoGPT Performs:

- Creates a Python script to get weather info from public APIs.

- Fixes the script to make sure it runs smoothly without problems.

- Adds comments and instructions to explain the code.

- Result: A working script is ready to use, helping the developer save time.

4. AutoGPT for Business Process Automation

An e-commerce business wants to write product descriptions automatically. Automating this work saves time and money while still giving shoppers clear, detailed descriptions. Good product descriptions attract more customers, so the goal is to improve sales and growth for the e-commerce site.

Steps AutoGPT Performs:

- Pulls product information such as features, sizes, and specs from inventory databases.

- Creates interesting and SEO-friendly descriptions for each item.

- Saves the descriptions in a format ready for the e-commerce site.

- Result: Automates a repetitive job, allowing employees to focus on more important tasks.

5. AutoGPT for Financial Planning

A financial advisor wants to recommend investment choices that match a client’s comfort with risk. High-risk options can bring more rewards but can also lead to greater losses, low-risk options tend to be safer but may have lower returns, and middle-ground choices balance the two. A strong plan aligns with the client’s goals and needs, and the portfolio needs regular checks because adjustments might be needed as markets change.

Steps AutoGPT Performs:

- Looks at the client’s money data and goals.

- Checks different investment choices, like stocks, ETFs, and mutual funds.

- Suggests a mix of investments, explaining the risks and possible gains.

- Result: The advisor gets custom suggestions, making clients happier.

6. AutoGPT for Lead Generation

- A SaaS company is looking for leads.

- They want to focus on the healthcare sector.

Steps AutoGPT Performs:

- Finds healthcare companies that can gain from their software.

- Writes custom cold emails to reach decision-makers in those companies.

- Automates email sending and keeps track of replies for follow-up.

- Result: Leads are generated quickly with little manual work.

The Evolution and Importance of AutoGPT in AI

Large language models like GPT-3 are good at understanding and using language. AutoGPT builds on such models and adds more independence and problem-solving ability, which some see as a step toward artificial general intelligence.

This change from narrow AI, which focuses on specific tasks, to a more flexible AI is very important. It allows machines to do many different jobs. They can learn by themselves and adapt to new problems. AutoGPT examples, like help with coding and financial planning, show its skill in handling different challenges easily.

Preparing for AutoGPT: What You Need to Get Started

Before you use AutoGPT, it’s important to understand how it works and what you need. First, you need to have an OpenAI account. You should feel comfortable using command-line tools because AutoGPT mainly works in that space. You can also find the source code in the AutoGPT GitHub repository. It’s essential to know the task or project you want to automate. Understanding these details will help you set clear goals and get good results.

Essential Resources and Tools

To begin using AutoGPT, you need some important resources. First, get an API key from OpenAI. This key is crucial because it lets you access their language models and input data properly. Remember to keep your API key safe. You should add it to your AutoGPT environment.
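One common way to keep an API key safe is to read it from an environment variable instead of writing it into code. The sketch below assumes the variable is named `OPENAI_API_KEY`, and the helper names are made up. Check the AutoGPT installation instructions for the exact setup it expects.

```python
import os

def load_api_key(env_var="OPENAI_API_KEY"):
    """Read the key from the environment; the variable name is an assumption."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first.")
    return key

def mask(key, visible=4):
    """Hide all but the last few characters so the key is safe to log."""
    return "*" * max(len(key) - visible, 0) + key[-visible:]

try:
    print("Loaded key:", mask(load_api_key()))
except RuntimeError as error:
    print(error)
```

Keeping the key out of source files also means it will not end up in version control by accident.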

Steps to Implement AutoGPT

Here is a simple guide to using AutoGPT well:

Step 1: Understanding AutoGPT’s Capabilities

Get to know what AutoGPT can do. It can help with tasks that are the same over and over. It can also create content, write code, and help with research. Understanding what it can do and what it cannot do will help you set realistic goals and use it better.

Step 2: Setting Up Your Environment

- Get an OpenAI API Key: Start by creating an OpenAI account. Then, make your API key so you can use GPT-4.

- Install Software: You need to set up Python, Git, and Docker on your computer to run AutoGPT.

- Download AutoGPT: Clone the AutoGPT repository from GitHub to your machine. Follow the installation instructions to complete the setup.
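Before installing, it can help to confirm that the required tools are on your PATH. The sketch below uses Python's standard `shutil.which` for that check. The command names in `REQUIRED_TOOLS` are assumptions and may differ on your system (for example, `python` instead of `python3` on Windows).

```python
import shutil

# Assumed command names for the tools listed above; adjust for your system.
REQUIRED_TOOLS = ["python3", "git", "docker"]

def missing_tools(tools=REQUIRED_TOOLS):
    """Return the tools that cannot be found on the PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]

missing = missing_tools()
if missing:
    print("Install these before continuing:", ", ".join(missing))
else:
    print("All required tools were found.")
```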

Step 3: Running AutoGPT

- Use command-line tools to start AutoGPT.

- Set your goal, and AutoGPT will divide it into smaller tasks.

- Let AutoGPT do these tasks on its own by creating and following its prompts.

Step 4: Optimizing and Iterating

- Check the results and make changes to task descriptions or API settings as needed.

- Use plugins to improve functionality, like connecting AutoGPT with your CRM or email systems.

- Keep the tool updated for new features and better performance.

Table: AutoGPT Use Cases and Benefits

| Use Case | Steps AutoGPT Performs | Outcome |
| --- | --- | --- |
| Market Research | Scrapes reports, summarizes insights, suggests strategies. | Delivers actionable insights for strategic planning. |
| Content Creation | Gathers data, creates outlines, writes drafts. | Produces first drafts for blogs or articles, saving time. |
| Coding Assistance | Writes, debugs, and documents scripts. | Provides functional, error-free code ready for use. |
| Business Process Automation | Generates SEO-friendly product descriptions from databases. | Automates repetitive tasks, improving efficiency. |
| Lead Generation | Identifies potential customers, drafts emails, and schedules follow-ups. | Streamlines the sales funnel with automated lead qualification. |
| Financial Planning | Analyzes data, researches options, suggests diversified portfolios. | Enhances decision-making with personalized investment recommendations. |

Creative AutoGPT Examples

Enhancing Content Creation with AutoGPT

Content creators get a lot from AutoGPT. It helps with writing a blog post, drafting social media captions, or planning a podcast. AutoGPT does the hard work. For instance, when you use AutoGPT to come up with ideas or create outlines, you can save time. This way, creators can focus more on improving their work.

Streamlining Business Processes Using AutoGPT

Businesses can use AutoGPT for several tasks. They can use it for lead generation, customer support, or to automate repetitive data entry. By automating these tasks, companies can free their human workers for more important roles. For example, AutoGPT can automate market research. This process can save weeks of work and provide useful reports in just a few hours.

Conclusion

AutoGPT is a major development for people who want to use the power of AI. It can help with making content, coding support, and automated business tasks. AutoGPT examples show how flexible it is and how it can complete tasks that improve workflows. By learning what it can do, choosing the best setup, and using it wisely, you can gain a lot of productivity.

As AI technology changes, AutoGPT is an important development. It helps users complete complex tasks with minimal effort, supporting human intelligence. Start using it today. This tool can transform your projects in new ways.

Frequently Asked Questions

- What are the limitations of AutoGPT?

AutoGPT is still in development. This means it might have some of the same limits as other large language models. It can sometimes provide wrong information, which we call hallucinations. It may also struggle with complex reasoning that requires a better understanding of specific tasks or detailed contexts.

- How does AutoGPT differ from other AI models?

AutoGPT is not like other AI models, such as ChatGPT. ChatGPT needs constant user input to operate. In contrast, AutoGPT can work on its own. This is especially useful in a production environment. It makes its own prompts to reach bigger goals. Because of this, AutoGPT can handle complex tasks with less human intervention. This different method helps AutoGPT stand out from normal AI models.

- Can AutoGPT be used by beginners without coding experience?

Right now, AutoGPT requires some technical skills to set up and use properly. However, it takes user input in natural language, which makes it easier for people. There are helpful tutorials available too. This means anyone willing to learn can understand this AI agent well.

by Hannah Rivera | Dec 6, 2024 | Artificial Intelligence, Blog, Latest Post |

The world of artificial intelligence (AI) is always changing. One notable part of this change is the development of AI agents. These smart systems, often used in modern AI services, use Natural Language Processing (NLP) and machine learning to automate repetitive tasks and to understand and interact with their surroundings. Unlike regular AI models, AI agents can work on their own. They can make choices, complete tasks, and learn from their experiences. Some even use the internet to gather more information, so they don’t always need human intervention.

Key Highlights

- Check how AI agents have changed and what they do today in technology.

- Learn how AI agents function and their main parts.

- Discover the different types of AI agents, like reflex, goal-based, utility-based, and learning agents.

- See how AI agents are impacting areas like customer service and healthcare.

- Understand the challenges AI agents deal with, such as data privacy, ethics, and tech problems.

- Apply best practices for AI agents by focusing on data accuracy, continuous learning, and changing strategies.

Deciphering AI Agents in Modern Technology

In our tech-driven world, AI agents and home automation systems are changing how we work. They make life easier by taking care of many tasks. For example, chatbots offer quick customer support. Advanced systems can also manage complex tasks in businesses.

There are simple agents that handle basic jobs. There are also smart agents that can learn and adjust to new situations. The options seem endless. As AI improves, we will see AI agents become more skilled. This will blur the line between what humans and machines can do.

The Evolution of AI Agents

The development of AI agents has changed a lot over time. In the beginning, AI agents were simple. They just followed certain rules. They could only do basic tasks that were given to them. But as time passed, research improved, and development moved forward. This helped AI agents learn to handle more complex tasks. They got better at adapting and solving different problems.

A big change began with open source machine learning algorithms. These algorithms help AI agents learn from data. They can discover patterns and get better over time. This development opened a new era for AI agent skills. It played an important role in creating the smart AI agents we have now.

Ongoing research in deep learning and reinforcement learning will help make AI agents better. This work will lead to systems that are smarter, more independent, and can adapt well in the future.

Defining the Role of AI Agents Today

Today, AI agents play a big role in many areas and support a variety of use cases. They fit into our everyday life and change how businesses work, especially with systems like CRM. They can take care of specific tasks and analyze large amounts of enterprise data. This skill helps them give important insights, making them valuable tools for us.

In customer service, AI chatbots and virtual assistants, such as Google Assistant, are everywhere. They respond quickly and give answers that match business goals. These agents understand customer questions, solve problems, and even offer personalized product recommendations.

AI agents are helpful in fields like finance, healthcare, and manufacturing. They can automate tasks, make processes better, and assist in decision-making with AI systems. The ability and flexibility of AI agents are important in today’s technology world.

The Fundamentals of AI Agent Functionality

To understand AI agents, we need to know how they work. This helps us see their true abilities. These smart systems operate in three main steps. They are perception, decision, and action.

AI agents begin by noticing what is around them. They use various sensors or data sources to do this. After that, they review the information they collect. They then make decisions based on their programming or past experiences. Lastly, they take action to reach their goals. This cycle of seeing, deciding, and acting allows AI agents to work on their own and adapt to new situations.
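The perceive, decide, act cycle described above can be sketched in a few lines of Python. This is only an illustration: the `ThermostatAgent` class, the `run` helper, and the dictionary used as the environment are hypothetical names invented for this example, not part of any agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatAgent:
    """Hypothetical agent that keeps a room near a target temperature."""
    target: float = 21.0
    history: list = field(default_factory=list)

    def perceive(self, environment: dict) -> float:
        # Perception: read the current state from a data source.
        return environment["temperature"]

    def decide(self, temperature: float) -> str:
        # Decision: a simple rule that stands in for the agent's programming.
        if temperature < self.target - 0.5:
            return "heat"
        if temperature > self.target + 0.5:
            return "cool"
        return "idle"

    def act(self, action: str, environment: dict) -> None:
        # Action: change the environment and remember what was done.
        delta = {"heat": 1.0, "cool": -1.0, "idle": 0.0}[action]
        environment["temperature"] += delta
        self.history.append(action)


def run(agent: ThermostatAgent, environment: dict, steps: int) -> dict:
    # One pass through the loop is one perceive -> decide -> act cycle.
    for _ in range(steps):
        reading = agent.perceive(environment)
        action = agent.decide(reading)
        agent.act(action, environment)
    return environment


room = {"temperature": 18.0}
agent = ThermostatAgent()
run(agent, room, steps=5)
print(agent.history, room["temperature"])
```

A real agent would replace the fixed rule in `decide` with a planner or a learned model, but the loop itself usually keeps this shape.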

Understanding Agent Functions and Programs

The key parts of any AI agent are its agent function and its software program. A good program manages the agent’s actions and actuators. It has a clear goal: it states what the agent wants to do and provides the rules and steps to get there.

The agent function acts like a guide. It describes how the agent gathers information, and how it decides and acts to complete tasks. The strong link between the program and its function is what makes functional agents different from simple software.

The agent’s program does much more than just handle actions. It helps the agent learn and update its plan of action, eliminating the dependence on pre-defined strategies. As the agent connects with the world and collects information, the program uses this data to improve its choices. Over time, the agent gets better at reaching its goals.

The Architecture of AI Agents

Behind every smart AI agent, there is a strong system. This system helps the agent perform well. It is the base for all the agent’s actions. It provides the key parts needed for seeing, thinking, and acting.

An agent builder is important for making this system. It can be a no-code platform or a builder that relies on programming languages. Developers use agent builders to set goals for the agent. They also choose how the agent will make decisions. Additionally, they provide it with tools to interact with the world.

The AI agent’s system is flexible. It changes as the agent learns. When the agent faces new situations or gets new information, the system adjusts to help improve. This lets the agent do its tasks better over time.

Understanding AI Agents: The Diverse Types

The world of AI agents is vast and varied, encompassing different types designed for specific tasks and challenges. Each type has unique features that influence how they learn, make decisions, and achieve their goals. By understanding AI agents, you can select the right type for your needs. Let’s explore the key types of AI agents and what sets them apart.

1. Reactive Agents

- What They Do: Respond to the current environment without relying on memory or past experiences.

- Key Features:

- Simple and fast.

- No memory or learning capability.

- Example: Chatbots that provide predefined responses based on immediate inputs.

2. Deliberative Agents

- What They Do: Use stored information and logical reasoning to plan and achieve goals.

- Key Features:

- Depend on systematic decision-making.

- Effective for solving complex problems.

- Example: Navigation apps like Google Maps, which analyze data to calculate optimal routes.

3. Learning Agents

- What They Do: Adapt and improve their decision-making abilities by learning from data or feedback over time.

- Key Features:

- Use machine learning to refine performance.

- Continuously improve based on new information.

- Example: Recommendation systems like Netflix or Spotify that suggest personalized content based on user behavior.

4. Collaborative Agents

- What They Do: Work alongside humans or other agents to accomplish shared objectives.

- Key Features:

- Enhance collaboration and efficiency.

- Facilitate teamwork in problem-solving.

- Example: Tools like GitHub Copilot that assist developers by providing intelligent coding suggestions.

5. Hybrid Agents

- What They Do: Combine elements of reactive, deliberative, and learning agents for greater adaptability.

- Key Features:

- Versatile and capable of managing complex scenarios.

- Leverage multiple approaches for decision-making.

- Example: Self-driving cars that navigate challenging environments by reacting to real-time data, planning routes, and learning from experiences.

By understanding AI agents, you can better appreciate how each type functions and identify the most suitable one for your specific tasks. From simple reactive agents to sophisticated hybrid agents, these technologies are shaping the future of AI across industries.
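To make the difference between the first two types concrete, here is a small Python sketch. It assumes a keyword-matching chatbot for the reactive agent and a breadth-first route planner for the deliberative agent. The replies, the road map, and the function names are all made up for illustration, and real navigation apps use far richer data and algorithms.

```python
from collections import deque

# Reactive agent: maps the current input straight to a response, with no memory.
CANNED_REPLIES = {
    "hours": "We are open 9am to 5pm.",
    "refund": "Refunds are processed within 5 days.",
}


def reactive_reply(message: str) -> str:
    for keyword, reply in CANNED_REPLIES.items():
        if keyword in message.lower():
            return reply
    return "Sorry, I did not understand that."


# Deliberative agent: uses a stored model of the world (a road map) to plan ahead.
ROAD_MAP = {
    "home": ["park", "mall"],
    "park": ["office"],
    "mall": ["station"],
    "station": ["office"],
    "office": [],
}


def plan_route(start: str, goal: str, road_map: dict):
    """Breadth-first search: return a route with the fewest stops, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for next_stop in road_map.get(path[-1], []):
            if next_stop not in visited:
                visited.add(next_stop)
                queue.append(path + [next_stop])
    return None


print(reactive_reply("What are your hours?"))
print(plan_route("home", "office", ROAD_MAP))
```

The reactive function never looks beyond the current message, while the planner searches its stored map before committing to a route. A hybrid agent would combine both styles.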

How AI Agents Transform Industries

AI agents are found in more than just research labs and tech companies. They are changing different industries and making a significant impact through what is being referred to as “agentic AI”. They can perform tasks automatically, analyze data, and communicate with people. This makes them useful in many different areas.

AI agents help improve customer service and healthcare by providing up-to-date information. They are also changing how we make products and improving our financial services. These AI agents are transforming various industries. They make processes easier, reduce costs, and create new opportunities for growth.

- Healthcare: Virtual health assistants providing medical advice.

- Finance: Fraud detection systems and algorithmic trading bots.

- E-commerce: Chatbots and personalized product recommendations.

- Robotics: Autonomous robots in manufacturing and logistics.

- Gaming: Non-player characters (NPCs) with adaptive behaviors.

Navigating the Challenges of AI Agents

AI agents can change our lives a lot. But they also come with challenges. Like other technologies that use a lot of data and affect people, AI agents raise important questions. These questions relate to ethics and tech problems. We need to think about these issues carefully.

It is important to think about issues like data privacy. We need to make sure our decisions are ethical. We also have to reduce bias in AI agents to use them responsibly. We must tackle technical challenges, too. This involves building, training, and fitting these complex systems into how we work now. Doing this will help more people adopt AI.

- Ethics and Bias: Ensuring agents make unbiased and fair decisions.

- Scalability: Managing the increasing complexity of tasks and data.

- Security: Protecting AI agents from hacking or malicious misuse.

- Reliability: Ensuring consistent and accurate performance in dynamic environments.

Best Practices for Implementing AI Agents

Using AI agents the right way is not just about understanding how they work. You must practice good methods at each step. This includes planning, building, launching, and managing them with your sales team. Doing this is important. It helps make sure they work well, act ethically, and succeed over time.

You should pay attention to the quality and trustworthiness of data. It’s also important to support continuous learning and adapt to changes in the workflow. A key goal should be to ensure human oversight and teamwork. Following these steps can help organizations make the most of AI agents while reducing risks.

Ensuring Data Accuracy and Integrity

The success of an AI agent depends a lot on the quality of its data. It is crucial that the data is accurate. This means the information given to the AI must be correct and trustworthy. If the data is wrong or old, it can cause poor decisions and unfair results. This can hurt how well the AI agent performs.

Data integrity is very important. It means we should keep data reliable and consistent all through its life. We need clear rules to manage data, check its quality, and protect it. This helps stop data from being changed or accessed by the wrong people. This is especially true when we talk about sensitive enterprise data.

To keep our data accurate and trustworthy, we need to review our data sources regularly. It is important to do data quality checks. We must also ensure that everything is labeled and organized correctly. These steps will help our AI agent work better.
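As a rough illustration of the checks described above, the following Python sketch flags records with missing fields, duplicates, and stale entries. The field names (`text`, `label`, `updated`), the one-year age limit, and the `check_records` helper are assumptions made for this example. A real pipeline would use rules that fit its own data.

```python
from datetime import date


def check_records(records, required_fields, max_age_days, today):
    """Return a dict that maps each issue name to a list of record indexes."""
    issues = {"missing_field": [], "duplicate": [], "stale": []}
    seen = set()
    for index, record in enumerate(records):
        # Accuracy: every required field must be present and non-empty.
        if any(record.get(name) in (None, "") for name in required_fields):
            issues["missing_field"].append(index)
        # Consistency: the same content should not appear twice.
        key = tuple(record.get(name) for name in required_fields)
        if key in seen:
            issues["duplicate"].append(index)
        seen.add(key)
        # Freshness: old records may no longer reflect reality.
        updated = record.get("updated")
        if updated is not None and (today - updated).days > max_age_days:
            issues["stale"].append(index)
    return issues


records = [
    {"text": "Great product", "label": "positive", "updated": date(2024, 11, 1)},
    {"text": "Great product", "label": "positive", "updated": date(2024, 11, 2)},
    {"text": "Arrived late", "label": "", "updated": date(2024, 10, 20)},
    {"text": "Okay", "label": "neutral", "updated": date(2022, 1, 5)},
]
report = check_records(
    records, ["text", "label"], max_age_days=365, today=date(2024, 12, 1)
)
print(report)
```

Running a report like this on a schedule is one simple way to review data sources regularly before the data reaches the agent.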

Continuous Learning and Adaptation Strategies

In the fast-changing world of AI, continuous learning is essential throughout the AI development lifecycle, especially when working with large language models (LLMs). AI agents need to adapt to new data, improve their models, and learn from user feedback. This is key for their success as time goes on.

To help AI agents keep learning, especially in the early stages, good adaptation strategies are important. These strategies should collect feedback from users, monitor how the agent performs in real situations, and include plans to improve the model using new data and knowledge.

Organizations can keep their AI agents up to date by focusing on continuous learning and sound adaptation strategies. This helps the AI agents stay accurate and manage changes in tasks effectively.

Understanding AI Agents: AI Assistants vs. AI Agents

| Aspect | AI Assistant | AI Agent |
| --- | --- | --- |
| Definition | A tool designed to assist users by performing tasks or providing information. | An autonomous system that proactively acts and makes decisions to achieve specific goals. |
| Core Purpose | Assists users with predefined tasks, usually in response to commands or queries. | Operates independently to solve problems or complete tasks aligned with its goals. |
| Interactivity | Relies on user inputs to function, offering responses or executing commands. | Functions autonomously, often requiring little to no user interaction once set up. |
| Autonomy | Limited autonomy, requiring guidance from the user for most actions. | High autonomy, capable of learning, adapting, and acting without ongoing user involvement. |
| Memory | Typically has minimal or no memory of past interactions (e.g., Siri, Alexa). | Can use memory to store context, learn patterns, and improve decision-making. |
| Learning Capability | Learns from user preferences or past interactions in a basic way. | Employs advanced learning techniques like machine learning or reinforcement learning. |
| Example Tasks | Answering questions, managing schedules, setting alarms, or playing music. | Autonomous navigation, optimizing supply chains, or handling stock trading. |
| Complexity | Best for simple, predefined tasks or queries. | Handles dynamic, complex environments that require reasoning, planning, or adaptation. |
| Examples | Voice assistants (e.g., Siri, Alexa, Google Assistant). | Self-driving cars, warehouse robotics, or AI managing trading portfolios. |
| Use Case Scope | Focused on aiding users in daily activities and productivity. | Broad range of use cases, including independent operation and human-agent collaboration. |

When understanding AI agents, the distinction becomes clear: while AI Assistants are built for direct interaction and specific tasks, AI Agents operate autonomously, tackling more complex challenges and adapting to dynamic situations.

Future of AI Agents

As AI continues to grow, AI agents are becoming smarter and more independent. They are now better at working with people to achieve a desired outcome. New methods like multi-agent systems and general AI help these agents work together on complex tasks in an effective way.

AI agents are not just tools. They are like friends in our digital world. They help us finish tasks more easily and quickly, including in cloud environments such as AWS. To use their full potential, it’s key to understand how they work.

Conclusion

AI agents are changing many industries, especially in marketing campaigns. They help us improve customer service, change healthcare, and bring us closer to a future with learning agents. However, there are some challenges, like data privacy, security, and ethics. Yet, using AI agents that focus on accurate data and ongoing learning can lead to big improvements. It’s important to understand how AI agents have developed and how they work. This understanding helps us get the most out of their ability for innovation and efficiency. We should follow best practices when using AI agents. By doing this, we can fully enjoy the benefits they bring to our technology world.

Frequently Asked Questions

- What Are the Core Functions of AI Agents?

The main job of AI agents is to observe their surroundings. They use the information they find to make decisions. After that, they act to finish specific tasks. This helps automate simple tasks as well as complex tasks. In the end, this helps us to get the results we want.

- How Do AI Agents Learn Over Time?

Learning agents use machine learning and feedback mechanisms to change what they do. They keep adjusting and studying new information. This helps them improve their AI model, making it more accurate and effective.

- Can AI Agents Make Decisions Independently?

AI agents can make decisions on their own, based on their programming and how they understand the world. However, we should keep in mind that their ability to do this is limited by ethical rules and human intervention. Many times, these systems require human oversight, especially when it comes to big decisions.

by Arthur Williams | Dec 5, 2024 | Artificial Intelligence, Blog, Latest Post |

Large Language Models (LLMs) are changing how we see natural language processing (NLP). They know a lot but might not always perform well on specific tasks. This is where LLM fine-tuning, reinforcement learning, and LLM testing services help improve the model’s performance. LLM fine-tuning makes these strong pre-trained LLMs better, helping them excel in certain areas or tasks. By focusing on specific data or activities, LLM fine-tuning ensures these models give accurate, efficient, and useful answers in the field of natural language processing. Additionally, LLM testing services ensure that the fine-tuned models perform optimally and meet the required standards for real-world applications.

Key Highlights

- Custom Performance: Changing pre-trained LLMs can help them do certain tasks better. This can make them more accurate and effective.

- Money-Saving: You can save money and time by using strong existing models instead of starting a new training process.

- Field Specialization: You can adjust LLMs to fit the specific language and details of your industry, which can lead to better results.

- Data Safety: You can train using your own data while keeping privacy and confidentiality rules in mind.

- Small Data Help: Fine-tuning can be effective even with smaller, focused datasets, getting the most out of your data.

Why LLM Fine Tuning is Essential

- Fine-tuning changes an LLM so that it performs better on your tasks.

- Provide targeted responses.

- Improve accuracy in certain areas.

- Make the model more useful for specific tasks.

- Adapt the model for unique needs of the organization.

- Improve Accuracy: Make predictions more precise by using data from your own domain.

- Boost Relevance: Tailor the model’s answers to match your audience more closely.

- Enhance Performance: Reduce errors or fuzzy answers for specific situations.

- Personalize Responses: Use words, style, or choices that are specific to your business.

The Essence of LLM Fine Tuning for Modern AI Applications

Imagine you have a strong engine, but it doesn’t fit the vehicle you need. Fine-tuning is like adjusting that engine so it works better in your machine. This is how we work with LLMs. Instead of building a new model from the ground up, which takes a lot of time and money, we take an existing LLM. We then improve it with a smaller set of data that is focused on our target task.

This process is similar to sending a general language model to a training camp. At this camp, the model can practice and improve its skills. This practice helps with tasks like sentiment analysis and question answering. Fine-tuning the model makes it stronger. It also lets us use the power of language models while adjusting them for specific needs. This leads to better creativity and efficiency when working on various tasks in natural language processing.

Defining Fine Tuning in the Realm of Large Language Models

In natural language processing, adjusting pre-trained models for specific tasks in deep learning is very important. This process is called fine-tuning. Fine-tuning means taking a pre-trained language model and training it more with a data set that is meant for a specific task. Often, this requires a smaller amount of data. You can think of it as turning a general language model into a tool that can accurately solve certain problems.

Fine-tuning is more than just boosting general knowledge from large amounts of data. It helps the model develop specific skills in a particular domain. Just like a skilled chef uses their cooking talent to perfect one type of food, fine-tuning lets language models take their broad understanding of language and concentrate on tasks like sentiment analysis, question answering, or even creative writing.

By providing the model with specific training data, we adjust its parameters. This allows it to perform better on that specific task. This approach reveals the full potential of language models. It makes them very useful in several industries and research areas.

The Significance of Tailoring Pre-Trained Models to Specific Needs

In natural language processing (NLP) and machine learning, a “one size fits all” method does not usually work. Each situation needs a special approach. The model must understand the details of the specific task. This can include named entity recognition and improving customer interactions. Fine-tuning the model is very helpful in these cases.

We improve large language models (LLMs) that are already trained. This combines general language skills with specific knowledge. It helps with a wide range of tasks. For example, we can translate legal documents, analyze financial reports, or create effective marketing text. Fine-tuning allows LLMs to learn the details and skills they need to do well.

Think about what happens when a model analyzes medical records without the right training. A model that learns only from news articles won’t do well. But if we train that model using real medical texts, it can learn medical language better. With this knowledge, it can spot patterns in patient data and help make better healthcare choices.

Common Fine-Tuning Use Cases

- Customer Support Chatbots: Train models to respond to common questions and scenarios.

- Content Generation: Modify models for writing tasks in marketing or publishing.

- Sentiment Analysis: Adapt the model to understand customer feedback in areas like retail or entertainment.

- Healthcare: Create models to assist with diagnosis or summarize research findings.

- Legal/Financial: Teach models to read contracts and legal documents, or to make financial forecasts.

Preparing for Fine Tuning: A Prerequisite Checklist

Before you start fine-tuning, you must set up a strong base for success. Begin with careful planning and getting ready. It’s like getting ready for a big construction project. A clear plan helps everything go smoothly.

Here’s a checklist to follow:

- Define your goal simply: What exact task do you want the model to perform well?

- Collect and organize your data: A high-quality dataset that is relevant is key.

- Select the right model: Choose a pre-trained LLM that matches your specific task.

Selecting the Right Model and Dataset for Your Project

Choosing the right pre-trained model is as important as finding a strong base for a building. Each model has its own strengths based on its training data and design, and the same is true of the datasets you can find on hubs such as Hugging Face. For instance, Codex is trained on a large dataset of code, which makes it great for code generation. In contrast, GPT-3 is trained on a large amount of text, so it is better for text generation or summarizing text.

Think about what you want to do. Are you focused on text generation, translation, question answering, or something else? The model’s design matters a lot too. Some designs are better for specific tasks. For instance, transformer-based models are excellent for many NLP tasks.

It’s important to look at the good and bad points of different pretrained models. You should keep the details of your project in mind as well.

Understanding the Role of Data Quality and Quantity

The phrase “garbage in, garbage out” fits machine learning perfectly. The quality and amount of your training data are very important. Good data can make your model better.

Good data is clean and relevant. It should show what you want the model to learn. For example, if you are fine-tuning a model for sentiment analysis of customer reviews, your data needs to have many reviews. Each review must have the right label, such as positive, negative, or neutral.

The size of your dataset is very important. Generally, more data helps the model do a better job. Still, how much data you need depends on how hard the task is and what the model can handle. You need to find a good balance. If you have too little data, the model might not learn well. On the other hand, if you have too much data, it can cost a lot to manage and may not really improve performance.

Operationalizing LLM Fine Tuning

It is important to know the basics of fine-tuning. However, to use that knowledge well, you need a good plan. Think of it like having all the ingredients for a tasty meal. Without a recipe or a clear plan, you may not create the dish you want. A step-by-step approach is the best way to achieve great results.

Let’s break the fine-tuning process into easy steps. This will give us a clear guide to follow. It will help us reach our goals.

Steps to Fine-Tune LLMs

1. Data Collection and Preparation

- Get Key Information: Collect examples that connect to the topic.

- Clean and Label: Remove any extra information or errors. Label the data for tasks such as classification or summarization.

2. Choose the Right LLM

- Choosing a Model: Start with a model that suits your needs. For example, use GPT-3 for creative work or BERT for classification tasks.

- Check Size and Skills: Consider your available computing power and the difficulty of the task.

3. Fine-Tuning Frameworks and Tools

- Use libraries like Hugging Face Transformers, TensorFlow, or PyTorch to modify models that are already trained. These tools simplify the process and offer good APIs for various LLMs.

4. Training the Model

- Set Parameters: Pick key hyperparameters such as the learning rate, the batch size, and the number of training epochs.

- Supervised Training: Enhance the model with example data that has the right answers for certain tasks.

- Instruction Tuning: Train the model on prompts paired with the desired responses so that it learns to follow instructions.

5. Evaluate Performance

- Check how well the model works by using these measures:

- Accuracy: This is key for tasks that classify items.

- BLEU/ROUGE: Use these when you work on text generation or summarizing text.

- F1-Score: This helps for datasets that are not balanced.

6. Iterative Optimization

- Check the results.

- Change the settings.

- Train again to get better performance.

Model Initialization and Evaluation Metrics

Model initialization starts the process by giving initial values to the model’s parameters. It’s a bit like getting ready for a play. A good start can help the model learn more effectively. When training from scratch, choosing these values randomly is common practice. In fine-tuning, the model starts from pre-trained weights instead, which makes training quicker.

Evaluation metrics help us see how good our model is. They show how well our model works on new data. Some key metrics are accuracy, precision, recall, and F1-score. These metrics give clear details about what the model does right and where it can improve.

| Metric | Description |
| --- | --- |
| Accuracy | The ratio of correctly classified instances to the total instances. |
| Precision | The ratio of correctly classified positive instances to the total predicted positive instances. |
| Recall | The ratio of correctly classified positive instances to all actual positive instances. |
| F1-score | The harmonic mean of precision and recall, providing a balanced measure of performance. |
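The definitions in the table translate directly into code. The sketch below computes all four metrics for a binary task from lists of true and predicted labels. The `binary_metrics` function and the sample labels are hypothetical and exist only for this example. In practice, a library such as scikit-learn offers tested implementations.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Made-up labels: 4 actual positives, 6 actual negatives.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

Here the model finds three of the four positives and raises one false alarm, so precision and recall are both 0.75 while accuracy is 0.8.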

Choosing the right training arguments is important for the training process. This includes things like learning rate and batch size. It’s like how a director helps actors practice to make their performance better.

Employing the Trainer Method for Fine-Tuning Execution

Imagine having someone to guide you while you train a neural network. That is what the ‘trainer method’ does. It makes adjusting the model easier. This way, we can focus on the overall goal instead of getting lost in tiny details.

The trainer approach is widely used in machine learning tools, such as the Trainer class in Hugging Face’s Transformers library. It manages the training process and exposes a wide range of training options for different tasks. It feeds data to the model, calculates the gradients, updates the parameters, and checks the performance. Overall, it makes the training process easier.
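To show what a trainer takes off your hands, here is a hand-written training loop in plain Python. It is not the Hugging Face API. It is a minimal stand-in that fits a one-variable linear model, and the `train` and `evaluate` functions, the toy dataset, and the default settings are all assumptions chosen for illustration. The four jobs named above (feeding batches, computing gradients, updating parameters, and checking performance) each appear as a commented step.

```python
import random


def train(data, epochs=500, batch_size=2, learning_rate=0.05, seed=0):
    """Fit y = w * x + b with mini-batch gradient descent on squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    data = list(data)
    for _ in range(epochs):
        rng.shuffle(data)
        # 1. Feed the data to the model one batch at a time.
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # 2. Compute gradients of the mean squared error over the batch.
            grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2 * (w * x + b - y) for x, y in batch) / len(batch)
            # 3. Update the parameters.
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
    return w, b


def evaluate(w, b, data):
    # 4. Check performance: mean squared error on the given examples.
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


train_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # y = 2x + 1
w, b = train(train_data)
print(round(w, 3), round(b, 3), evaluate(w, b, train_data))
```

A trainer class wraps this same loop and adds conveniences such as checkpointing, logging, and evaluation schedules, so you configure the run instead of writing it.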

This simpler approach is really helpful. It allows people, even those who aren’t experts in neural network design, to work with large language models (LLMs) more easily. Now, more developers can use powerful AI techniques. They can make new and interesting applications.

Best Practices for Successful LLM Fine Tuning

Fine-tuning LLMs is similar to learning a new skill. You get better with practice and by having good habits. These habits assist us in getting strong and steady results. When we know how to use these habits in our work, we can boost our success. This allows us to reach the full potential of fine-tuned LLMs.

No matter your experience level, these best practices can help you get better results when fine-tuning. Whether you are just starting or have been doing this for a while, these tips can be useful for everyone.

Navigating Hyperparameter Tuning and Optimization

Hyperparameter tuning is a lot like changing the settings on a camera to take a good photo. It means trying different hyperparameter values, such as learning rate, batch size, and the number of training epochs while training. The aim is to find the best mix that results in the highest model performance.

It’s a delicate balance. If the learning rate is too high, the model could skip the best solution. If it is too low, the training will take a lot of time. You need patience and a good plan to find the right balance.
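A tiny experiment makes this balance easy to see. The sketch below runs gradient descent on a simple made-up function, (x - 3)², with three learning rates. The function and the specific values are assumptions chosen for illustration, not settings for a real LLM.

```python
def gradient_descent(learning_rate, steps=50, start=0.0, target=3.0):
    """Minimize (x - target)^2 and return the final distance from the minimum."""
    x = start
    for _ in range(steps):
        gradient = 2.0 * (x - target)  # derivative of (x - target)^2
        x -= learning_rate * gradient
    return abs(x - target)


error_too_high = gradient_descent(1.1)    # overshoots more with every step
error_too_low = gradient_descent(0.001)   # barely moves in 50 steps
error_balanced = gradient_descent(0.1)    # lands very close to the minimum

print(f"too high: {error_too_high:.1f}")
print(f"too low:  {error_too_low:.3f}")
print(f"balanced: {error_balanced:.6f}")
```

With the high rate, each step jumps past the best solution and ends up farther away than it started. With the low rate, the model is still far from the answer when training stops.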

Methods like grid search and random search can automate this testing. Grid search tries every combination from a fixed list of hyperparameter values. Random search samples combinations at random from a range. In both cases, the goal is to improve our chosen evaluation metric. This metric could be accuracy, precision, recall, or anything else related to the task.
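Here is a short sketch of both search methods. The function `validation_score` is a hypothetical stand-in for "train the model with these settings and measure the metric". In a real project that step would be a full training run, and the shape of the score would be unknown in advance.

```python
import itertools
import math
import random


def validation_score(learning_rate, batch_size):
    """Hypothetical metric that peaks at learning_rate=0.01, batch_size=32."""
    lr_penalty = 0.1 * (math.log10(learning_rate) + 2.0) ** 2
    batch_penalty = 0.05 * abs(math.log2(batch_size) - 5.0)
    return 1.0 - lr_penalty - batch_penalty


def grid_search(learning_rates, batch_sizes):
    """Try every combination and keep the best-scoring one."""
    combos = itertools.product(learning_rates, batch_sizes)
    return max(combos, key=lambda combo: validation_score(*combo))


def random_search(trials, rng):
    """Sample random combinations and keep the best-scoring one."""
    candidates = [
        # Sample the learning rate on a log scale between 1e-4 and 1e-1.
        (10 ** rng.uniform(-4, -1), rng.choice([8, 16, 32, 64]))
        for _ in range(trials)
    ]
    return max(candidates, key=lambda combo: validation_score(*combo))


best_grid = grid_search([1e-4, 1e-3, 1e-2, 1e-1], [8, 16, 32, 64])
best_random = random_search(20, random.Random(0))

print("grid search best:  ", best_grid)
print("random search best:", best_random)
```

Grid search is thorough but grows quickly as you add more hyperparameters. Random search covers a wide range with a fixed budget of trials.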

Regular Evaluation for Continuous Improvement

In the fast-moving world of machine learning, we can’t let our guard down. We should check our work regularly to keep getting better. Just like a captain watches the ship’s path, we need to keep an eye on how our model does. We must see where it works well and where there is room for improvement.

If we create a model for sentiment analysis, it may do well with positive and negative reviews. However, it might have a hard time with neutral reviews. Knowing this helps us decide what to do next. We can either gather more data for neutral sentiments or adjust the model to recognize those tiny details better.
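A per-class breakdown is a simple way to spot this kind of weak point. The sketch below uses made-up labels and predictions, and the function name `per_class_recall` is only illustrative.

```python
from collections import Counter


def per_class_recall(y_true, y_pred):
    """Return the share of each class that the model labeled correctly."""
    total = Counter(y_true)
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: correct[label] / total[label] for label in total}


# Hypothetical evaluation set: four reviews per sentiment class.
y_true = ["positive"] * 4 + ["negative"] * 4 + ["neutral"] * 4
y_pred = (
    ["positive"] * 4
    + ["negative"] * 3 + ["positive"]
    + ["neutral", "positive", "negative", "positive"]
)

recall_by_class = per_class_recall(y_true, y_pred)
weakest = min(recall_by_class, key=recall_by_class.get)

for label, score in recall_by_class.items():
    print(f"{label}: {score:.2f}")
print("needs attention:", weakest)
```

An overall accuracy number would hide the problem here. Splitting the score by class shows that neutral reviews are where the model struggles.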

Regular checks are not only for finding out what goes wrong. They also help us build a habit of continuous improvement. When we evaluate our models often, study their results, and adjust based on what we learn, we keep them strong, flexible, and in line with our needs as things change.

Overcoming Common Fine-Tuning Challenges

Fine-tuning can be very helpful. But it has some challenges too. One challenge is overfitting. This occurs when the model learns the training data too well. Then, it struggles with new examples. Another issue is underfitting. This happens when the model cannot find the important patterns. By learning about these problems, we can avoid them and fine-tune our LLMs better.

Just like a good sailor has to deal with tough waters, improving LLMs means knowing the issues and finding solutions. Let’s look at some common troubles.

Strategies to Prevent Overfitting

Overfitting is like learning answers by heart for a test without knowing the topic. This occurs when our model pays too much attention to the ‘training dataset.’ It performs well with this data but struggles with new and unseen data. This failure to generalize is a problem many people working in machine learning face.

There are several ways to reduce overfitting. One way is through regularization. This method adds a penalty to the training loss when the model’s weights grow too large. It pushes the model toward simpler solutions. Another method is dropout. With dropout, a random subset of neurons is switched off at each training step. This prevents the model from relying too much on any one feature.
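Both ideas fit in a few lines. This is a simplified sketch in plain Python, assuming an L2 penalty for regularization and the common "inverted" form of dropout. Deep learning frameworks ship their own versions, and the function names here are made up for the example.

```python
import random


def l2_penalty(weights, strength):
    """Regularization term added to the loss: strength * sum of squared weights."""
    return strength * sum(w * w for w in weights)


def dropout(activations, drop_prob, rng, training=True):
    """Zero out activations at random during training (inverted dropout)."""
    if not training or drop_prob == 0.0:
        # At evaluation time every neuron stays active.
        return list(activations)
    keep_prob = 1.0 - drop_prob
    # Kept values are scaled up so the expected total stays the same.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


penalty = l2_penalty([1.0, -2.0], strength=0.1)
train_out = dropout([1.0] * 10, drop_prob=0.5, rng=random.Random(0))
eval_out = dropout([1.0] * 10, drop_prob=0.5, rng=random.Random(0), training=False)

print("penalty:", penalty)
print("training:", train_out)
print("evaluation:", eval_out)
```

The penalty grows with the size of the weights, so the optimizer has a reason to keep them small. Dropout only applies during training, which is why the evaluation output is unchanged.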

Data augmentation is important. It involves making new versions of the training data we already have. We can switch up sentences or use different words. This helps make our training set bigger and more varied. When we enhance our data, we support the model in handling new examples better. It helps the model learn to understand different language styles easily.
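One simple form of text augmentation is swapping words for synonyms. The sketch below assumes a tiny hand-written synonym table. A real project would use a much larger word list or a paraphrasing model, and would check that the new sentence keeps its original label.

```python
import random

# Hypothetical synonym table, kept tiny for illustration.
SYNONYMS = {
    "good": ["great", "excellent"],
    "bad": ["poor", "terrible"],
    "movie": ["film"],
}


def augment(sentence, rng, replace_prob=0.5):
    """Return a copy of the sentence with some words replaced by synonyms."""
    new_words = []
    for word in sentence.split():
        options = SYNONYMS.get(word.lower())
        if options and rng.random() < replace_prob:
            new_words.append(rng.choice(options))
        else:
            new_words.append(word)
    return " ".join(new_words)


rng = random.Random(0)
original = "the movie was good"
variants = [augment(original, rng, replace_prob=1.0) for _ in range(3)]
unchanged = augment(original, rng, replace_prob=0.0)

for variant in variants:
    print(variant)
```

Each variant keeps the meaning and the label of the original sentence while giving the model slightly different wording to learn from.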

Challenges in Fine-Tuning LLMs

- Overfitting: This happens when the model focuses too much on the training data. It can lose its ability to perform well with new data.

- Data Scarcity: High-quality, labeled data for the target domain is often hard to find.

- High Computational Cost: Fine-tuning requires a lot of computing power, especially for larger models.

- Bias Amplification: There is a chance of making any bias in the training data even stronger during fine-tuning.

Comparing Fine-Tuning and Retrieval-Augmented Generation (RAG)

Fine-tuning and Retrieval-Augmented Generation (RAG) are two ways to adapt a language model to a specific need.

- Fine-tuning changes a language model that has already learned many things. You use a small amount of new data to improve it for a specific task, which usually leads to higher accuracy on that task.

- RAG, on the other hand, pulls in relevant documents while it creates text. This gives the model extra context that it did not learn during training.

Both ways have their own strengths. You can choose one based on what you need to do.

Deciding When to Use Fine-Tuning vs. RAG

Choosing between fine-tuning and retrieval-augmented generation (RAG) is like picking the right tool for a task. Each method has its own advantages and disadvantages. The best choice really depends on your specific use case and the job you need to do.

Fine-tuning works well when we want our LLM to concentrate on a specific area or task. It makes direct changes to the model’s parameters. This way, the model learns the important information and language details needed for that task. However, fine-tuning needs labeled data for the target task, and finding or collecting enough of it can be difficult.

RAG is most useful when we need up-to-date information or when we don’t have enough labeled data for training. It links the model to a knowledge base and retrieves fresh, relevant passages to support its answers. This works even for questions that were not part of the training. Because of this, RAG is great for tasks like question answering, checking facts, or summarizing news, where real-time information is very important.
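The retrieval step can be sketched in a few lines. This toy version ranks documents by how many words they share with the question and places the best match in the prompt. Production RAG systems usually rely on embeddings and a vector database instead, and the documents and function names below are invented for the example.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Return the documents that share the most words with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Put the retrieved context in front of the question for the LLM."""
    context = "\n".join(retrieve(question, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"


# Hypothetical knowledge base.
documents = [
    "The refund window for online orders is 30 days.",
    "Support is available by chat from 9am to 5pm.",
    "Shipping to Europe takes five business days.",
]

question = "How many days is the refund window?"
top_docs = retrieve(question, documents)
prompt = build_prompt(question, documents)
print(prompt)
```

The model never needs retraining when the knowledge base changes. Updating the documents is enough to change what ends up in the prompt.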

Future of Fine-Tuning

New methods like parameter-efficient fine-tuning (PEFT), such as LoRA and adapters, aim to save money. They do this by sharply reducing the number of trainable parameters compared to the original model. Instead of updating every weight, they keep the original weights frozen and train only a small set of added parameters. Also, prompt engineering can improve an LLM’s output without any fine-tuning, and reinforcement learning from human feedback (RLHF) can bring a model’s behavior closer to what people prefer.
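A quick calculation shows where the savings come from. LoRA leaves a weight matrix of size d × k frozen and trains two small matrices of size d × r and r × k instead. The sizes below (a 4096 × 4096 layer with rank 8) are example values chosen for illustration, not figures from a specific model.

```python
def full_finetune_params(d, k):
    """Trainable parameters when the whole d x k weight matrix is updated."""
    return d * k


def lora_params(d, k, rank):
    """Trainable parameters for LoRA: one d x rank and one rank x k matrix."""
    return rank * (d + k)


d, k, rank = 4096, 4096, 8  # example sizes, assumed for illustration
full = full_finetune_params(d, k)
lora = lora_params(d, k, rank)
share = lora / full

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}):  {lora:,} trainable parameters")
print(f"LoRA trains {share:.2%} of the layer")
```

For this layer, LoRA trains well under one percent of the parameters that full fine-tuning would update, which is why it needs far less memory.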

Conclusion

Fine-tuning Large Language Models (LLMs) is important for improving AI applications. You can get the best results by adjusting models that are already trained to meet specific needs. To fine-tune LLMs well, choosing the right model and dataset is crucial. Good data preparation makes a difference too. You can use several methods, such as supervised learning, few-shot learning, transfer learning, and special techniques for specific areas. It is important to adjust hyperparameters and regularly check your progress. You also have to deal with common issues like overfitting and underfitting. Knowing when to use fine-tuning instead of Retrieval-Augmented Generation (RAG) is essential. By following best practices and staying updated with new information, you can successfully fine-tune LLMs, making your AI projects much better.

Frequently Asked Questions

- What differentiates fine-tuning from training a model from scratch?

Fine-tuning begins with a pretrained model that already knows some things. Then, it adjusts its settings using a smaller and more specific training dataset.

Training from scratch means building a new model with no prior knowledge. This process requires much more data and computing power to reach a performance level like the one fine-tuning provides.

- How can one avoid common pitfalls in LLM fine-tuning?

To prevent mistakes when fine-tuning, use methods like regularization and data augmentation. They can help stop overfitting. It’s good to include human feedback in your work. Make sure you evaluate your model regularly and adjust the hyperparameters if you need to. This will help you achieve the best performance.

- What types of data are most effective for fine-tuning efforts?

Effective data for fine-tuning should be high quality and relate well to your target task. You need a labeled dataset specific to your task. It is important that the data is clean and accurate. Additionally, it should have a good variety of examples that clearly show the target task.

- In what scenarios is RAG preferred over direct fine-tuning?

Retrieval-augmented generation (RAG) is a good choice when you need information beyond what the LLM learned during training. It uses information retrieval methods to bring in relevant documents. This is helpful for things like question answering or tasks that need the latest information.

by Anika Chakraborty | Nov 19, 2024 | Artificial Intelligence, Blog, Latest Post |

Artificial Intelligence (AI) has transformed how we live, work, and interact with technology. From virtual assistants to advanced robotics, AI is all about speed, logic, and efficiency. Yet, one thing it lacks is the ability to connect emotionally.

Enter Artificial Empathy, a groundbreaking idea that teaches machines to understand human emotions and respond in ways that feel more personal and caring. Imagine a healthcare bot that notices your anxiety before a procedure or a customer service chatbot that senses a customer’s frustration and adapts its tone.

While both AI and Artificial Empathy involve advanced algorithms, they differ in purpose, functionality, and potential impact. Let’s explore what sets them apart and how they complement each other.

Key Highlights:

- AI excels in data-driven tasks but often misses the emotional depth humans bring.

- Artificial Empathy enables machines to recognize and respond to emotions, making interactions more human-like.

- Applications of empathetic AI include healthcare, customer service, education, and more.

- Ethical concerns like privacy and bias must be addressed for responsible development.

- A balanced approach can unlock the full potential of AI and Artificial Empathy.

What Is Artificial Intelligence (AI)?

AI refers to computer systems that can perform tasks requiring human-like intelligence. These tasks include decision-making, problem-solving, and pattern recognition. AI uses various techniques, such as:

- Natural Language Processing (NLP): Understanding and generating human language.

- Machine Learning: Learning from data to make predictions or decisions.

- Computer Vision: Recognizing and interpreting visual information.

Examples of AI in action include Google Maps predicting traffic, Netflix recommending shows, and facial recognition unlocking your smartphone.

However, AI’s logical approach often feels cold and detached, especially in scenarios requiring emotional sensitivity, like customer support or therapy.

What Is Artificial Empathy?

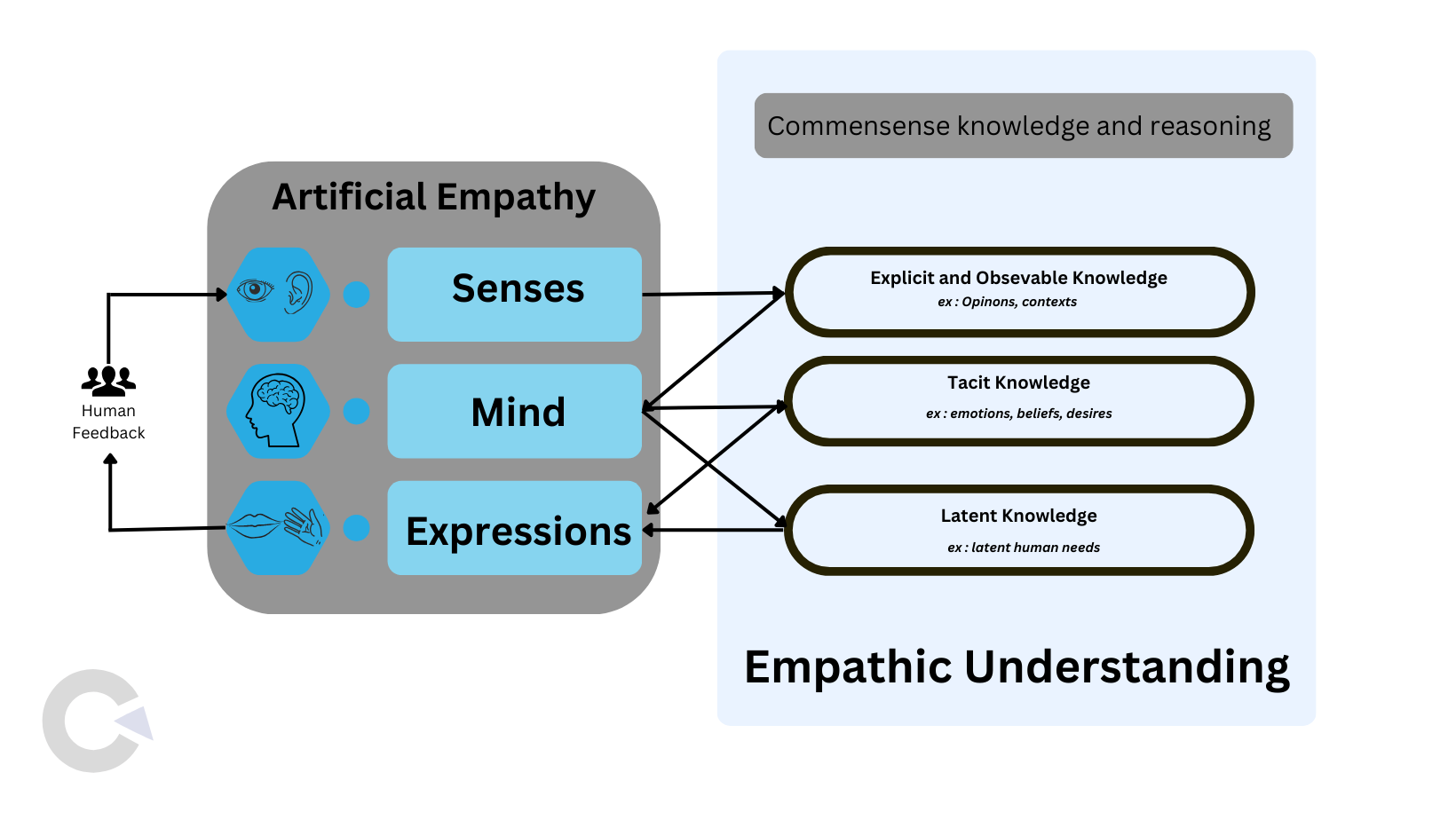

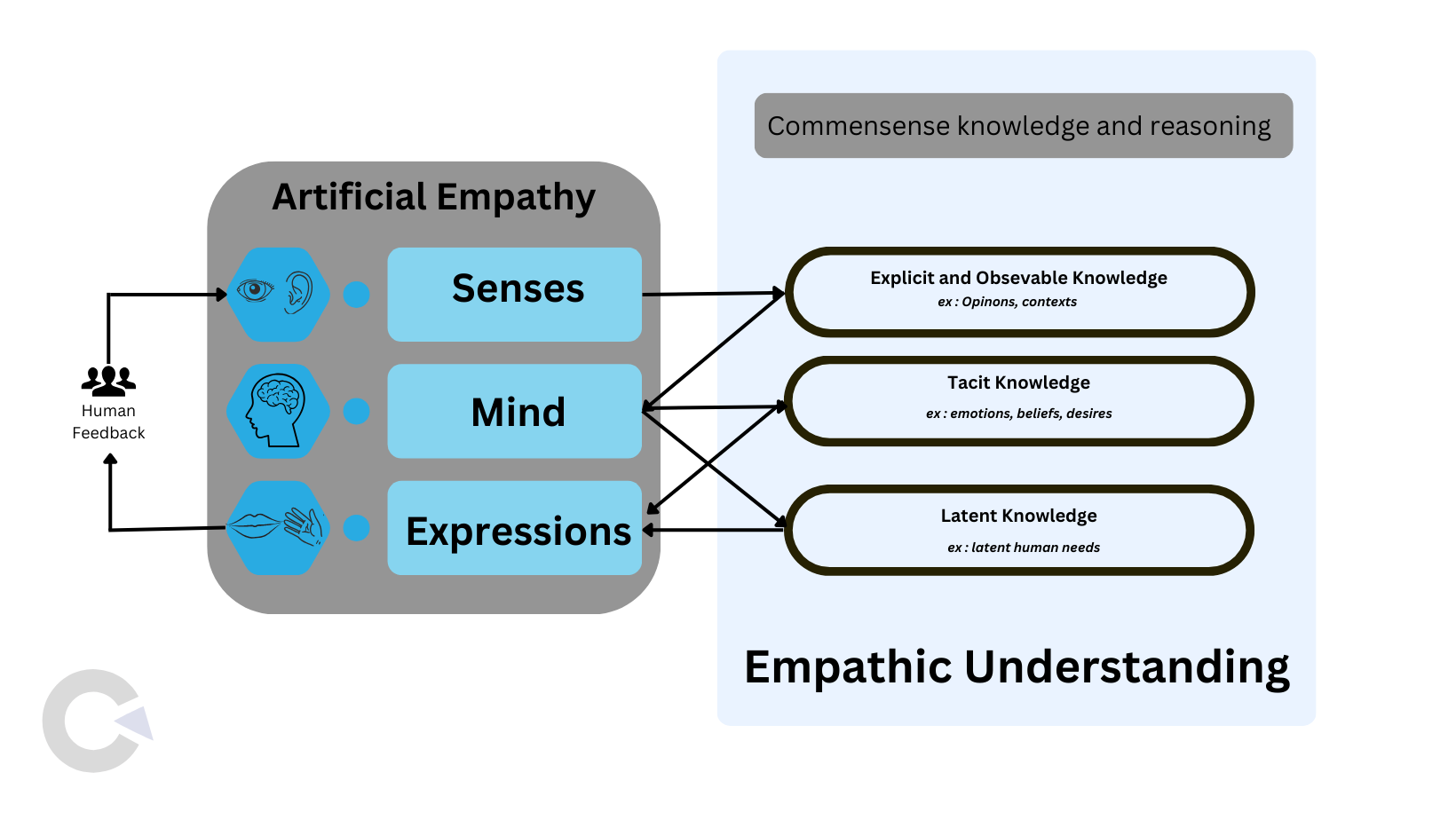

Artificial Empathy aims to bridge the emotional gap in human-machine interactions. By using techniques like tone analysis, facial expression recognition, and sentiment analysis, AI systems can detect and simulate emotional understanding.

For example:

- Healthcare: A virtual assistant notices stress in a patient’s voice and offers calming suggestions.

- Customer Service: A chatbot detects frustration and responds with empathy, saying, “I understand this is frustrating; let me help you right away.”

While Artificial Empathy doesn’t replicate genuine human emotions, it mimics them well enough to make interactions smoother and more human-like.
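The customer service example can be sketched with a very simple rule. The code below looks for frustration cues in a message and changes the reply to match. The keyword list is a hypothetical stand-in for a trained sentiment model, which would pick up on far subtler signals than a handful of words.

```python
import re

# Hypothetical cue words; a real system would use a trained sentiment model.
FRUSTRATION_CUES = {
    "frustrated", "frustrating", "annoyed", "angry",
    "ridiculous", "useless", "terrible",
}


def detect_frustration(message):
    """Return True if the message contains any frustration cue word."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    return bool(words & FRUSTRATION_CUES)


def respond(message):
    """Pick a reply whose tone matches the detected emotion."""
    if detect_frustration(message):
        return "I understand this is frustrating; let me help you right away."
    return "Thanks for reaching out. How can I help?"


upset_reply = respond("This is ridiculous, my order is late again!")
calm_reply = respond("Hi, where can I find my invoice?")
print(upset_reply)
print(calm_reply)
```

The machine does not feel anything here. It only recognizes a pattern and selects a response that sounds more caring, which is the mimicry described above.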

Key Differences Between Artificial Intelligence and Artificial Empathy

| Feature | Artificial Intelligence | Artificial Empathy |
| --- | --- | --- |
| Purpose | Solves logical problems and performs tasks. | Enhances emotional understanding in interactions. |
| Core Functionality | Data-driven decision-making and problem-solving. | Emotion-driven responses using pattern recognition. |
| Applications | Autonomous cars, predictive analytics, etc. | Therapy bots, empathetic chatbots, etc. |
| Human Connection | Minimal emotional engagement. | Focused on improving emotional engagement. |
| Learning Source | Large datasets of facts and logic. | Emotional cues from voice, text, and expressions. |
| Depth of Understanding | Lacks emotional depth. | Mimics emotions but doesn’t truly feel them. |

The Evolution of Artificial Empathy

AI started as a rule-based system focused purely on logic. Over time, researchers realized that true human-AI collaboration required more than just efficiency—it needed emotional intelligence.

Here’s how empathy in AI has evolved:

- Rule-Based Systems: Early AI followed strict commands and couldn’t adapt to emotions.

- Introduction of NLP: Natural Language Processing enabled AI to interpret human language and tone.

- Deep Learning Revolution: With deep learning, AI started recognizing complex patterns in emotions.