by admin | Jan 19, 2022 | Automation Testing, Blog, Latest Post |

Now that the digital landscape is continuously gaining momentum, your website mustn’t fall behind, especially since consumer behavior is trickier now than ever.

If there’s anything in your business worth investing in, it’s a website that represents your brand well. This is because it’s not only you who’ll be using it; your customers will be there to learn more about your brand, products, and services. So if your web development and design fall short, it may affect your customers’ user experience.

A fantastic way to achieve a strong and effective website is through testing, code review, and site audits. With that said, it’s worth paying attention to automated testing, which helps reduce risk and serves as a guide throughout the development stage.

For this reason, it’s best to partner up with credible automation testing companies to ensure that you’re conducting the right tests to improve your website’s performance.

Reasons You Should Consider Automated Testing for Web Development

Working with automation testing companies can provide you with a plethora of benefits. Here are some reasons you should consider automated testing right now:

Reason #1: It’s Incredibly Time-Efficient

When you integrate automated testing, you get to conduct a huge number of tests and run them at the same time. If you do this manually, it could take far longer, holding up your development and design stages. With automation, you get to speed up the deployment of new features and updates and deliver projects on time.
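To give a feel for what running tests at the same time can look like, here is a minimal sketch using Python’s standard library. The three check functions are hypothetical stand-ins for real browser or API checks, and the short sleep only imitates a slow step; treat it as an illustration rather than a full test runner.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def check_login():
    time.sleep(0.1)  # stands in for a slow browser interaction
    return True


def check_search():
    time.sleep(0.1)
    return True


def check_checkout():
    time.sleep(0.1)
    return True


def run_in_parallel(checks):
    """Run every check on its own worker thread and map check name -> result."""
    with ThreadPoolExecutor(max_workers=len(checks)) as pool:
        futures = {check.__name__: pool.submit(check) for check in checks}
        return {name: future.result() for name, future in futures.items()}


results = run_in_parallel([check_login, check_search, check_checkout])
print(results)
```

Because the checks wait at the same time instead of one after another, the whole batch finishes in roughly the time of the slowest check.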

Reason #2: You Can Reuse the Same Tests

With automated testing, you’re able to reuse the same tests and run them multiple times as you make the necessary adjustments to your code and design. This is because established, ready-made scripts are pretty handy and can help with different scenarios, especially when delivering different projects.

Reason #3: You Get to Test in Different Browsers, Devices, and Scenarios

Perhaps one of the best things about automated testing is that you get to execute cross-device and cross-browser testing to see if your website will work seamlessly on different platforms. With automated testing, you’re able to benefit from broader test coverage across more scenarios, reducing risks and preparing you for different situations.

Reason #4: Utilize Regression Testing

Thanks to automated testing, you can reuse your existing tests for regression testing. Rerunning them after each change, especially to complex features and updates, confirms that the change hasn’t broken anything that used to work, and you can repeat the same tests on different platforms as well.
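As a rough illustration of a reusable regression test, the sketch below pins down the current behaviour of a small function so that a later change which alters it shows up as a failure. The `cart_total` function and its expected values are hypothetical and exist only for this example.

```python
import unittest


def cart_total(prices, discount_rate=0.0):
    """Hypothetical function under test: sum prices, then apply a discount."""
    subtotal = sum(prices)
    return round(subtotal * (1 - discount_rate), 2)


class CartTotalRegressionTests(unittest.TestCase):
    """Checks that record existing behaviour so later changes cannot silently break it."""

    def test_total_without_discount(self):
        self.assertEqual(cart_total([10.0, 5.5]), 15.5)

    def test_total_with_discount(self):
        self.assertEqual(cart_total([100.0], discount_rate=0.2), 80.0)

    def test_empty_cart(self):
        self.assertEqual(cart_total([]), 0)
```

Saved to a file, these tests can be rerun after every code change with `python -m unittest`, which is exactly the kind of reuse described above.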

Reason #5: You Get High Accuracy and Better Results

Manual tests are monotonous and repetitive, which makes it easy to miss a bug or forget a certain step. With automated testing, every run executes the same steps in the same way, so you get to test frequently and get more consistent results with far less room for human error.

The Bottom Line: If You Want to Improve Your Website, It’s Best to Integrate Automated Testing

There’s no doubt that businesses are even more competitive now, especially since more businesses are claiming digital real estate. Because of this, it’s safe to say that you should produce a strong and effective website for your consumers to enjoy. Testing is a vital component of this, so it’s worth working with a credible automation testing company to ensure that your site is up to speed at all times!

How Can We Help You?

Codoid is an industry leader in QA, leading and guiding communities and clients with our passion for development and innovation. Our team of highly skilled engineers can help your team through software testing meetup groups, software quality assurance events, automation testing, and more.

Are you looking for automation testing companies? If so, reach out to us today!

by admin | Jan 19, 2022 | Automation Testing, Blog, Latest Post |

Most firms in the business software industry are moving towards accelerated and agile methodologies. One of the essential specializations in these companies is automation testing, replacing manual testing. Automation is also key to the business success of the software industry.

A professional who performs automation testing has many essential skills, which their managers must know before making a particular person head of automation testing. A few of these skills are listed below:

1. The Ability to Manage and Prioritize Tests

Managers want to know that their automation testers can handle multiple assignments simultaneously. As a professional, you must know how to prioritize, which is an essential skill for a successful automation tester. Allocate the tests so that the most important ones are executed first. For example, the tests that were left incomplete during the last sprint should be worked on first.
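One simple way to picture this kind of prioritization is a sort with two keys: work carried over from the last sprint first, then everything else by importance. The sketch below is only an illustration; the `PlannedTest` class, its fields, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PlannedTest:
    name: str
    priority: int        # 1 = most important
    carried_over: bool   # True if left incomplete in the previous sprint


def execution_order(tests):
    """Return carried-over tests first, then the rest by ascending priority number."""
    return sorted(tests, key=lambda t: (not t.carried_over, t.priority))


tests = [
    PlannedTest("checkout flow", priority=1, carried_over=False),
    PlannedTest("profile page", priority=3, carried_over=True),
    PlannedTest("search filters", priority=2, carried_over=False),
]

for test in execution_order(tests):
    print(test.name)
```

Here the carried-over "profile page" test runs first even though its priority number is the lowest of the three.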

2. The Ability to Identify and Resolve Bugs

Managers should know that a successful automation tester can identify and resolve bugs. Only when a tester can identify the bugs and see them through to a fix can the company ensure that the product will be ready for production. They should be able to write automation scripts that expose bugs and confirm that the fixes hold.

3. The Ability to Identify and Track Bugs

Managers want to know that their automation testers can identify and track new bugs in software. The testers must track bugs effectively to resolve issues as soon as possible.

4. The Ability to Identify and Understand the Business Requirements

Managers want to know that their automation testers have an in-depth understanding of the business requirements. This is important to create practical tests and meet the business goals.

5. The Ability to Write Automation Scripts

Managers want to know that their automation testers can write scripts ready for execution. A good automation tester must have the skill of writing precise, efficient, and effective automation scripts.

6. The Ability to Investigate Bugs

Managers want to know that their automation testers can investigate the cause of an issue. As a professional in the industry, you must master the art of investigating bugs. The managers want to find someone who can create tests and resolve issues.

7. The Ability to Write Test Scenarios

Managers want to know that their automation testers can write test scenarios clearly and effectively. Automation testers should be able to write compelling and thorough test scenarios.

8. The Ability to Use Automation Tools

Managers want to know that their automation testers can use QTP and Selenium effectively. QTP, Selenium, and other types of automation tools are used to test a software package, and you must be aware of the importance of these tools.

Conclusion

Automation testing is a niche specialization in the business software industry. It is one of the essential specializations because it helps a software company save time and money. Automation testing is a huge industry, and it is growing worldwide.

Therefore, automation testing is an essential specialization that the software industry employs today. The software industry’s future depends on automation testing, which is why managers must know the skills of a qualified automation tester before offering them a job.

Codoid is an industry leader in QA. We don’t say this just to brag–we say this because it is our passion to help guide and lead the Quality Assurance community. Codoid does it all: web, mobile, desktop, gaming, car infotainment systems, and mixed reality applications. Our automation testing services will help you to test your applications across multiple platforms, devices, browsers, and wearable devices. If you need test automation services in the United States, get in touch with us now! Let us know how we can help.

by admin | Jan 6, 2022 | Automation Testing, Blog, Latest Post |

As per a recent report, the global automation testing market is expected to grow from an already high $20.7 Billion to a mammoth $49.9 Billion by the year 2026. So in such a fast-growing domain, you might lose out on a lot of potential if you fail to keep up with the current trends in automation testing. Being a leading QA company that provides top-of-the-line automation testing services to all our clients, we have created this list of current trends in automation testing based on real-world scenarios and not assumptions. So let’s take a look at automation testing trends to keep an eye on for 2022.

Automation Tools Powered by AI and ML

Artificial Intelligence and Machine Learning are two technologies that have the potential to take automation to the next level as they ease everything from test creation to test maintenance. With the help of AI and ML, we will be able to automate unit tests, validate UI changes, ease regression testing, and detect bugs earlier than before. So we will be able to achieve seamless continuous testing with the least amount of effort and resources. Though such capabilities aren’t fully possible now, the prospect of being able to achieve such a feat doesn’t seem to be far away.

Codeless Automation Solutions

Such growth in AI and ML will also drive the rise of codeless automation solutions that are slowly gaining popularity. With such solutions, the learning curve can be greatly reduced and a lot of time can be saved. If you’re wondering whether codeless automation sounds a little too similar to Selenium testing, make sure to read our blog about codeless automation testing to know its advantages and to understand how it is different from Selenium testing. As an experienced automation testing company, we have to say that codeless automation will not replace scripted automation. But it will definitely come in handy.

Cloud-Based Solutions

Be it testing or collaboration tools, cloud-based solutions are already flying high and there seems to be no slowing them down in the near future either. They have so many advantages to offer such as cost-effective infrastructure, reduced execution time with parallel testing, better test coverage, bug management, and so on. With the never-ending pandemic raging on, the implementation of such solutions will definitely be high.

The Emergence of IoT Testing

We are slowly seeing IoT being implemented in more and more avenues like smart speakers and smart home appliances such as thermostats, lights, and so on. It goes without saying that all these products would have to be tested. And since almost all information sharing across these devices is achieved through APIs, the need for API testing will definitely be on the rise, making it an undeniable entry in our list of current trends in automation testing.

Security Testing

Security breaches were also on the rise during the pandemic, and privacy concerns with regard to user data also became a talking point. So you will no longer be able to use real-world data to test security. Instead, you’d have to use masking or synthetic test data generation tools to perform security testing, and then verify the results with the relevant compliance tools as well.
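To make the idea of masking a little more concrete, here is a minimal sketch that replaces the sensitive parts of a record while keeping its overall shape usable for tests. The field names, the salt, and the sample customer are all made up, and a salted hash like this is only a simple illustration, not a substitute for a dedicated masking or compliance tool.

```python
import hashlib


def mask_email(email, salt="demo-salt"):
    """Replace the local part of an email with a short, repeatable hash."""
    local, _, domain = email.partition("@")
    digest = hashlib.sha256((salt + local).encode("utf-8")).hexdigest()[:10]
    return f"user_{digest}@{domain}"


def mask_phone(phone):
    """Hide every digit except the last two."""
    digits = [ch for ch in phone if ch.isdigit()]
    hidden = max(len(digits) - 2, 0)
    return "*" * hidden + "".join(digits[-2:])


def mask_record(record):
    """Return a copy of the record with its email and phone fields masked."""
    masked = dict(record)
    if "email" in masked:
        masked["email"] = mask_email(masked["email"])
    if "phone" in masked:
        masked["phone"] = mask_phone(masked["phone"])
    return masked


# Made-up sample data, used only to demonstrate the masking
customer = {"name": "Sample User", "email": "sample.user@example.com", "phone": "555-0142"}
print(mask_record(customer))
```

Since the same input always produces the same masked value, tests that depend on matching records across tables can still work on the masked data.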

Conclusion

Pretty much every single point we have seen in this list of the current trends in automation testing is about the various tools that will be useful. But we should also make sure to focus on our automation skills and self-improvement to make use of the state-of-the-art tools and methods to achieve continuous testing.

by admin | Dec 24, 2021 | Automation Testing, Blog, Latest Post |

As the name suggests, Endtest.io is a great option if you are looking to automate your end-to-end and regression testing. The best part about Endtest.io is that you can do all this for both mobile and web apps without having to code. The end result is a quicker evaluation of quality for your products, since it is instrumental in overcoming the bottlenecks that come with traditional testing. Being one of the best automation testing companies, we are always on the lookout for the best tools that can streamline our automation testing process. Endtest.io is one such tool that we have found to be very resourceful. So in this blog, we will be exploring why you should consider Endtest.io, and help you get started with it as well.

Why is end-to-end testing (E2E testing) Important?

E2E testing is crucial as it helps to thoroughly test the entire software by modeling and validating real-world scenarios. Since it is executed from the standpoint of an end-user, it helps establish system dependencies and verify that all features and integrations function as intended. So the chances of a system failure due to the failure of any of the subsystems become much lower.

Why Endtest.io?

If you have done your own research on test automation solutions, you might have come across Selenium, which is a very popular open-source tool. But Selenium on its own doesn’t come with features such as native video recording of tests, built-in integrations with Jenkins, Jira, or Slack, e-mail notifications, and test scheduling. That is where Endtest.io comes into the picture, as it addresses all these gaps to give you a complete package.

Endtest.io is simple to use and has a very intuitive user interface. So even junior QA Engineers can work with it without any prior experience. Endtest’s website is also surprisingly simple to use and offers a lot of helpful documentation that can guide you through any doubts. If you are still finding it hard to resolve an issue or if you need additional help, you can reach out to their super responsive customer service via email or chat to get swift replies. Since they are open to suggestions that can help better your workflow or their product, you can approach them with your ideas. In fact, we have already discussed an option to include special characters with their team.

As stated earlier, we can also use Endtest.io to automate regression tests. Similar to E2E testing, regression testing is also very important and it will ensure that a recent program or code modification hasn’t broken any current features of the product. So regression testing becomes a must when introducing new features, repairing errors, or dealing with performance difficulties.

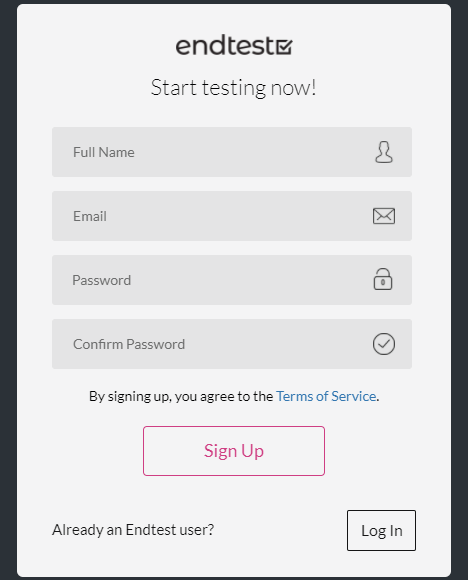

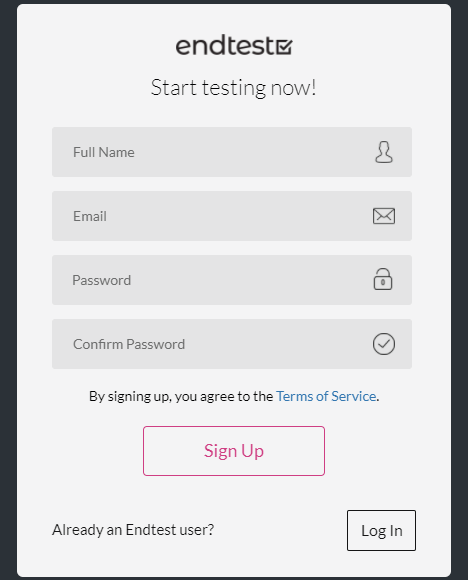

Getting Started with Endtest.io’s End-to-End testing

Now that we have seen the capabilities of Endtest.io, let’s find out how you can start using it. First and foremost, you would have to sign up for Endtest and then log in using valid credentials. Once your account creation is complete, you’d have to install the Endtest.io Chrome Extension (Web test recorder Codeless Automated Testing). So you don’t have to download any separate software for Endtest.io to work.

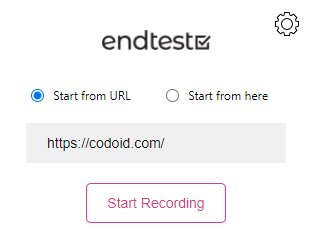

Recording Function

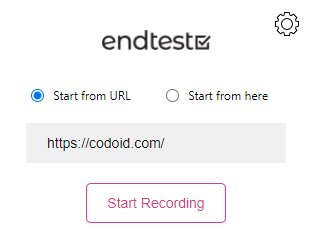

Once you open the Chrome extension, you’d have to select either the ‘Start from here’ or the ‘Start from URL’ and hit the ‘Start Recording’ button.

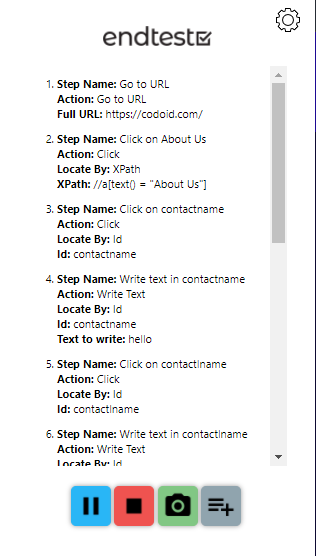

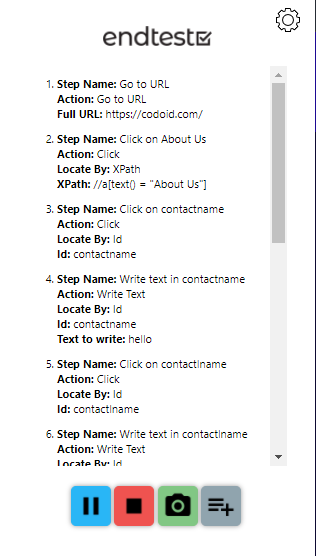

So Endtest.io will now record each and every step you take while performing the test scenario. If needed, you can even pause the recording at any given moment. Once you’ve finished your test scenario, head back to the Endtest.io Chrome Extension and click on the Stop recording button.

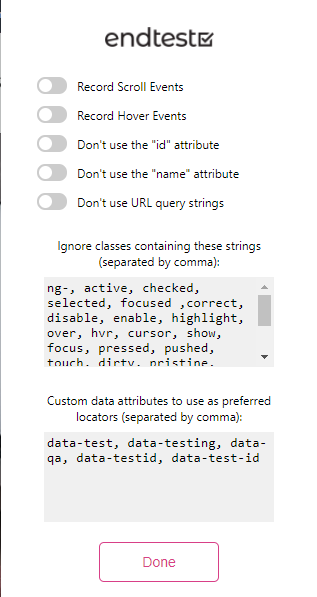

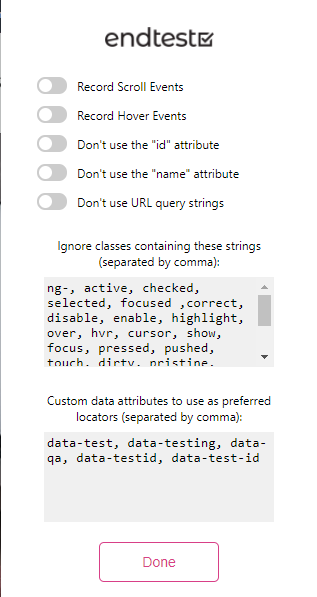

Endtest.io has some predefined settings from which we can choose different options based on the testing requirements and needs. These user-friendly options shown in the image make it even easier for us to use the recording feature with full accuracy.

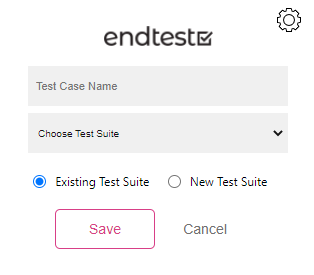

Test Case and Test Suite

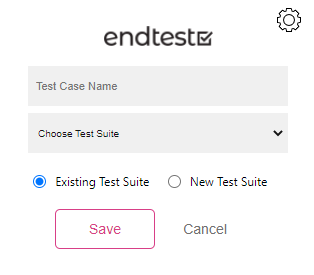

So once the recording part has been done, you will be able to assign a name to the test case you just recorded. If you want to add the test case to an existing test suite, you can do so. If not, you can also create a test suite and add the test case to that.

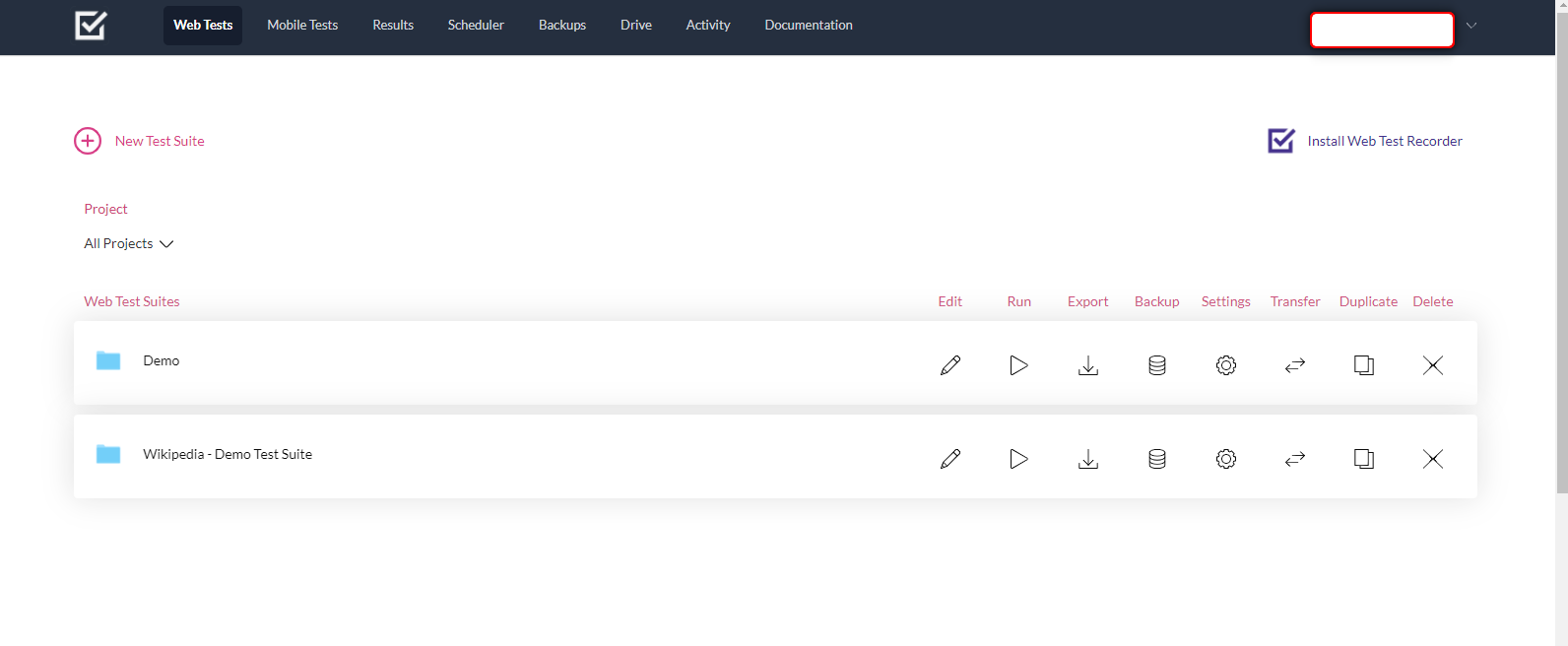

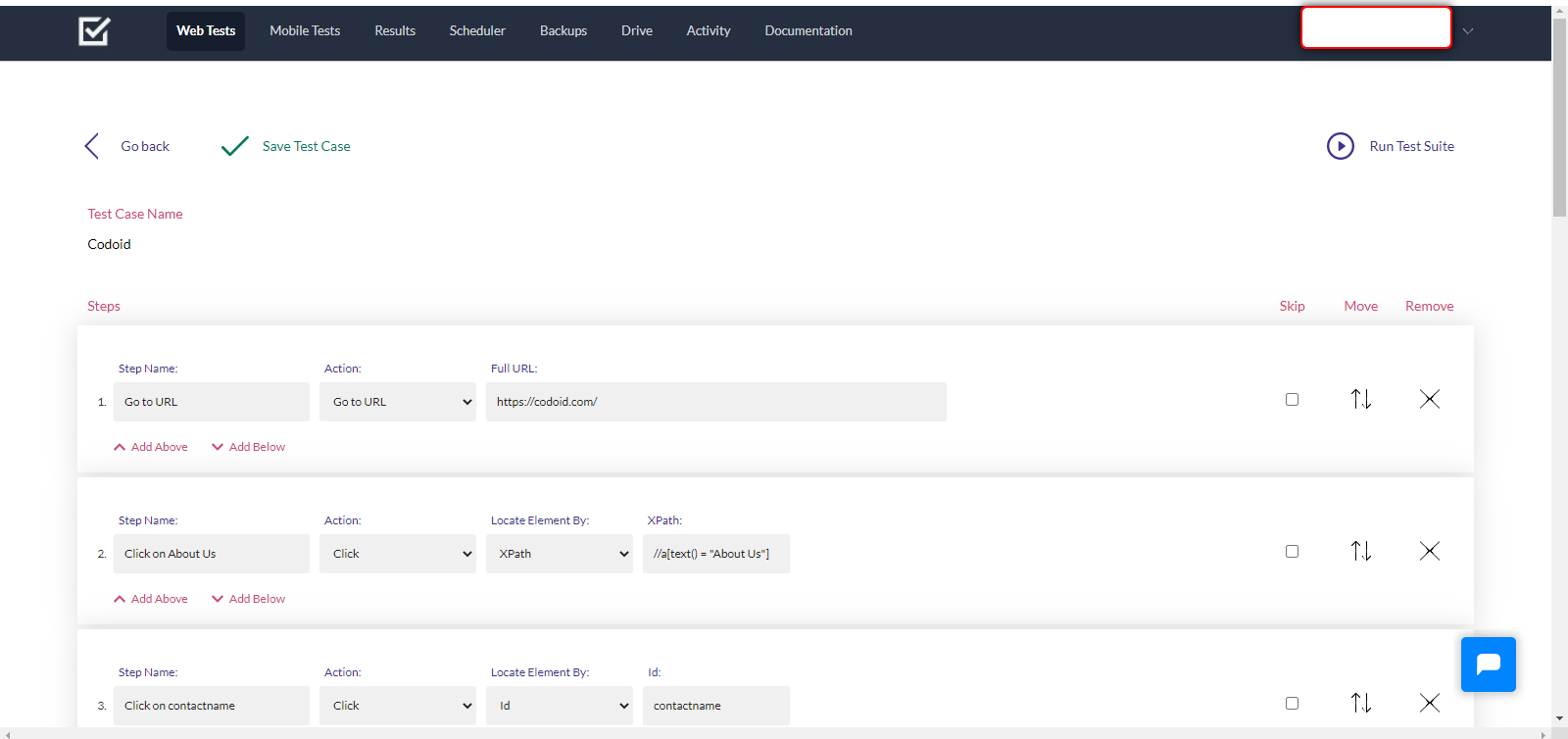

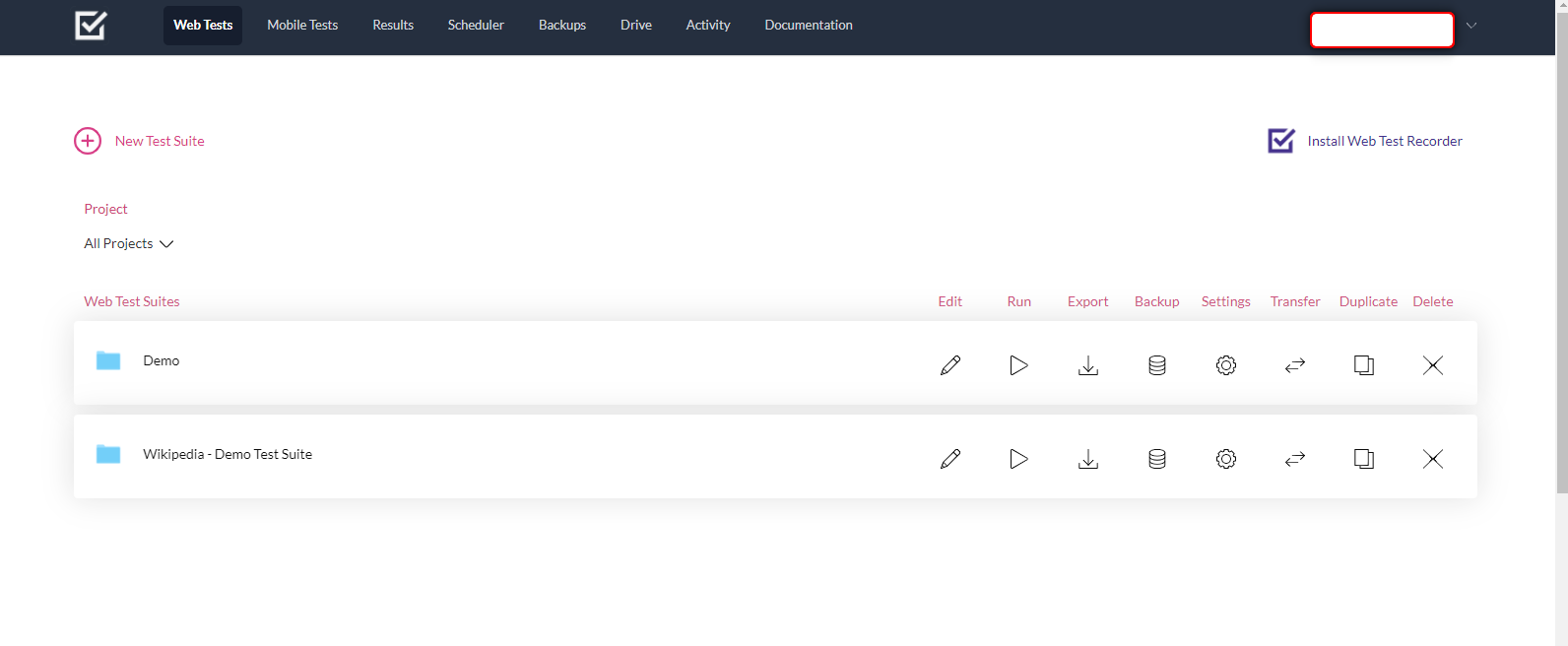

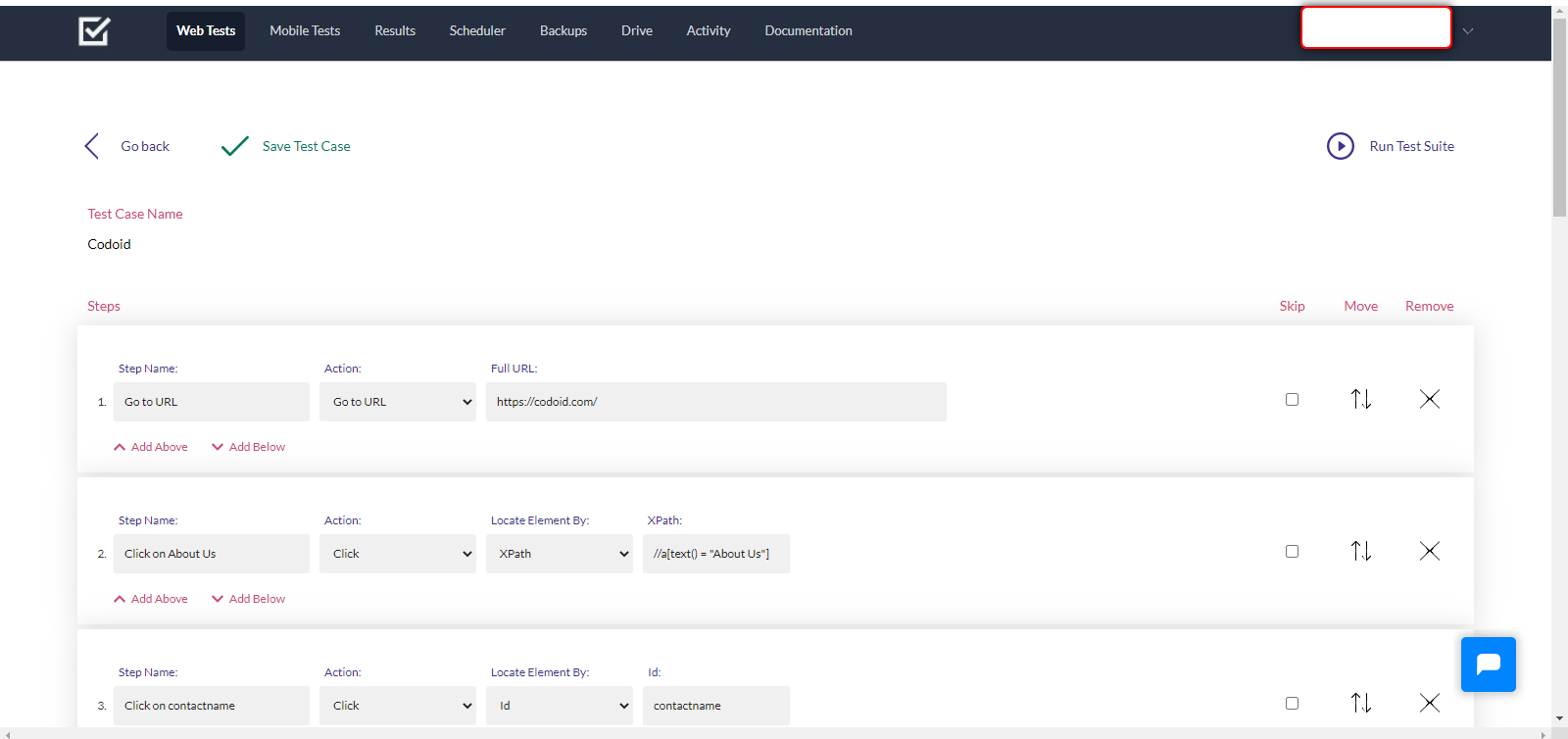

For the sake of explanation, we have named this test case as ‘Codoid’ and created a test suite by the name ‘Demo’. Once you press the ‘Save’ button, you will be taken to the Endtest.io site’s Web Tests page as shown below.

As seen in the image, there are various options such as edit, run, export, and so on. But since we’re not trying to do any of that with the test suite now, let’s just click on the Test Suite named Demo.

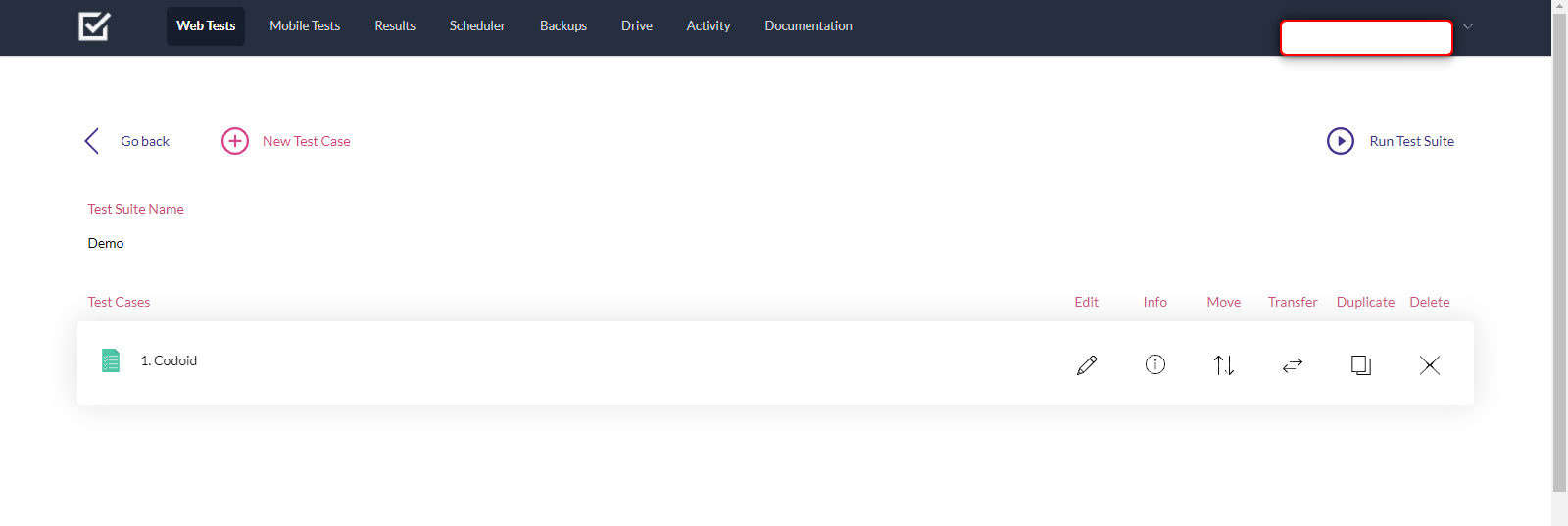

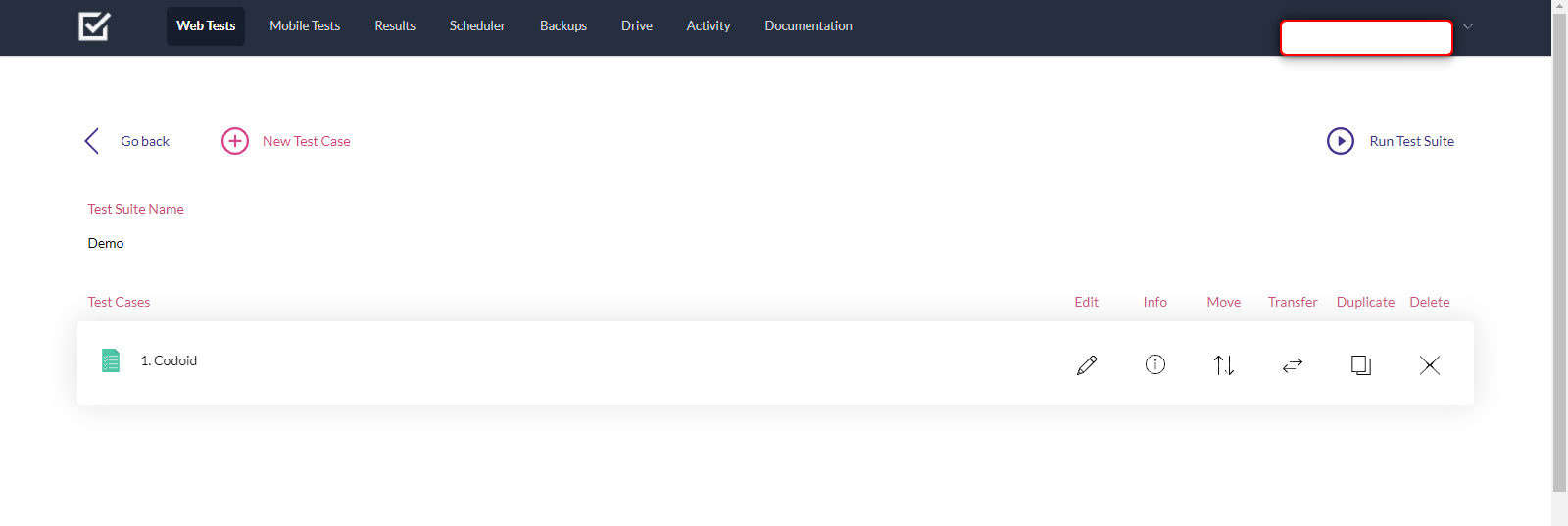

Inside you will find all the test cases that you have created under that particular Test Suite. In this case, we have created just one test case named ‘Codoid’. So let’s click on that to see the steps that were previously recorded.

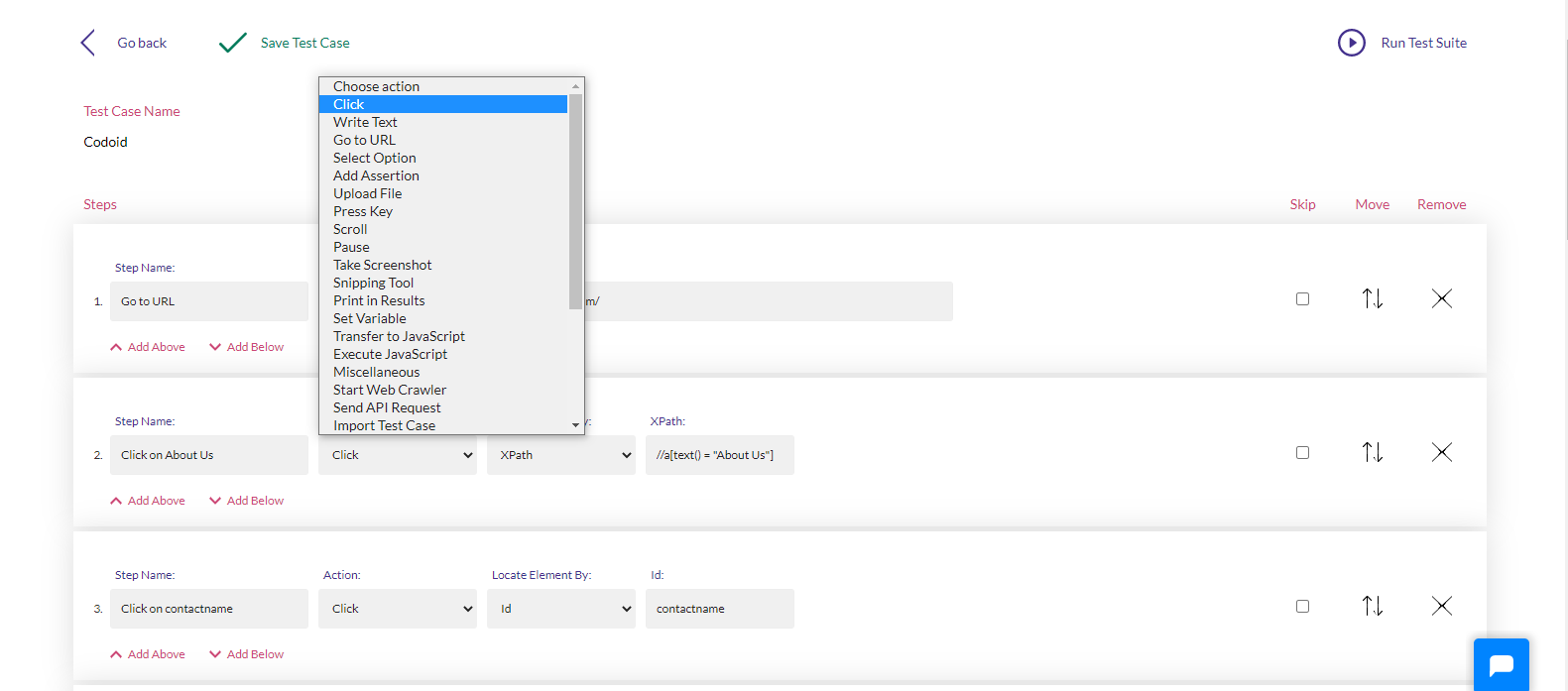

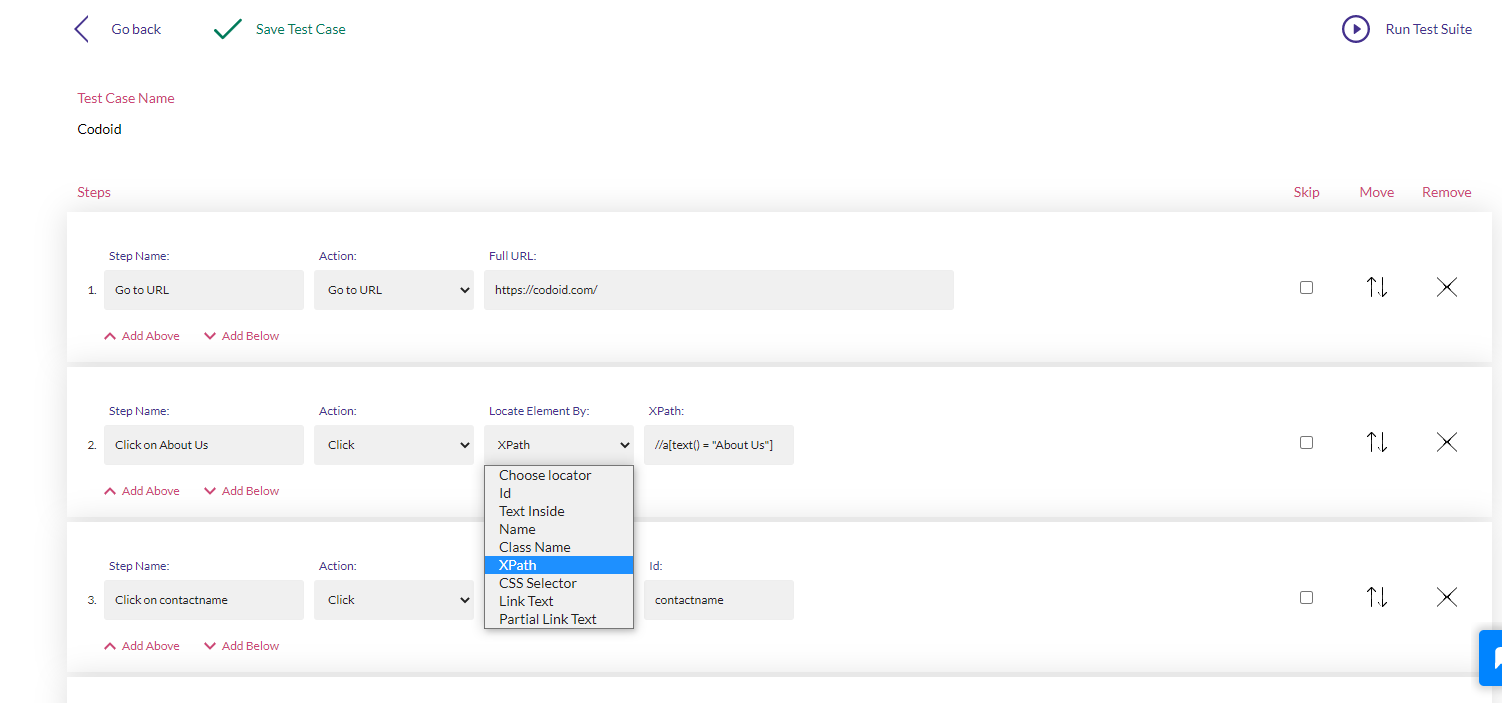

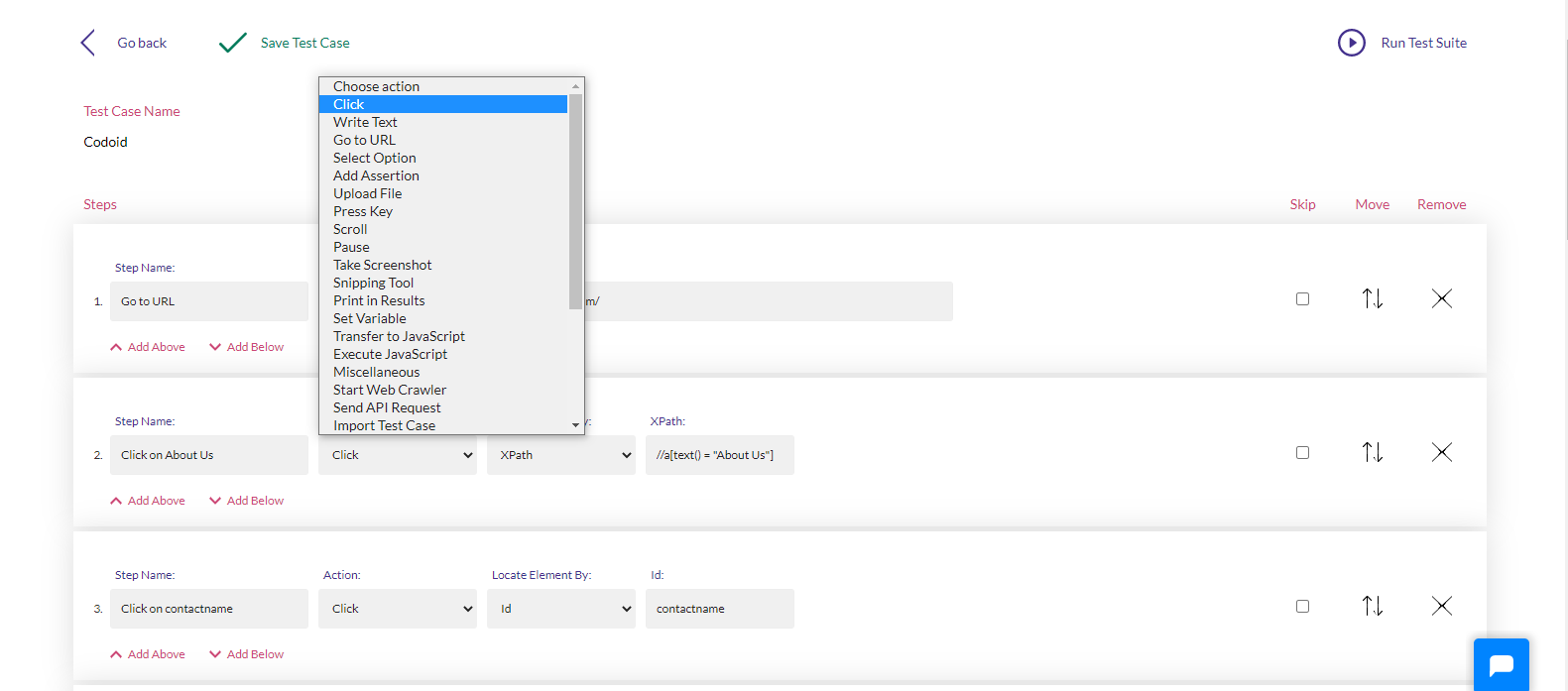

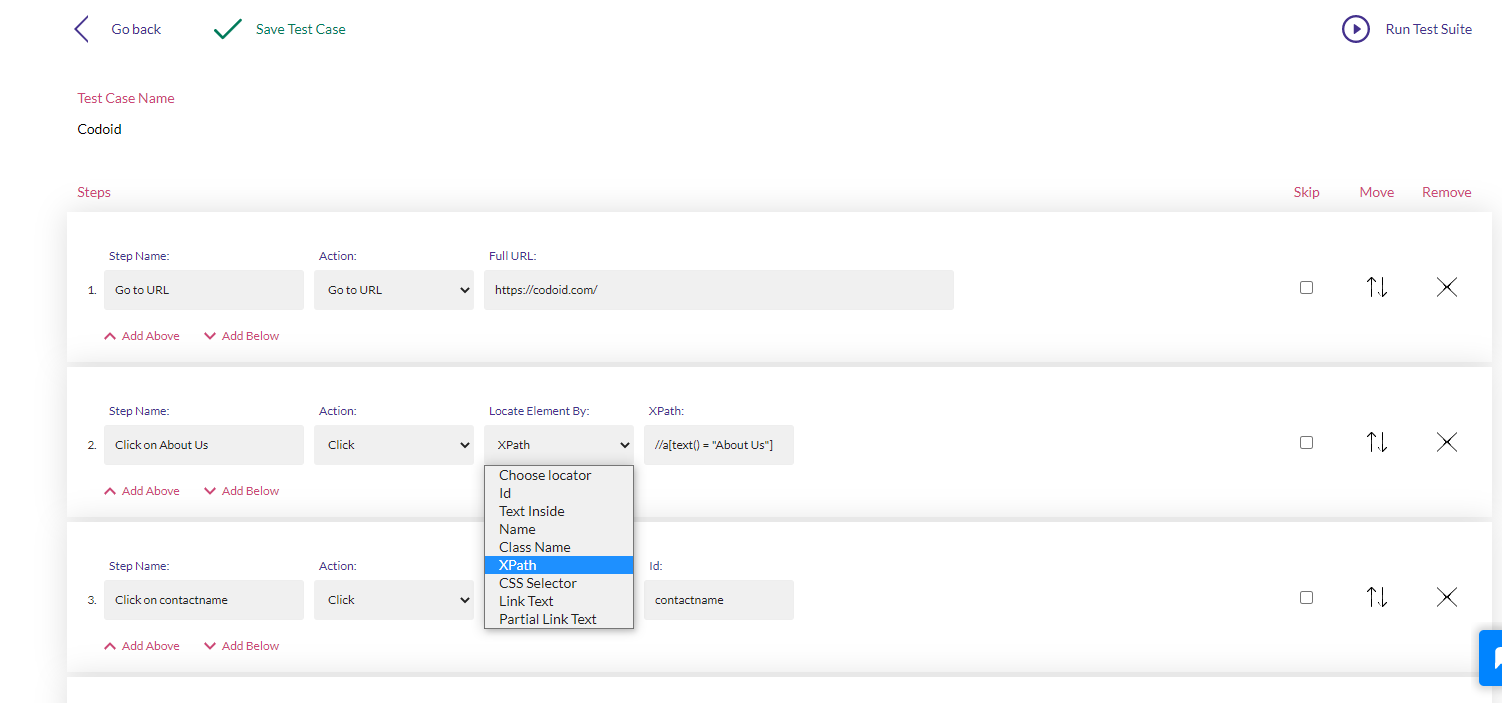

So it is evident here that the test case can be edited without any hassle as there are options to skip, move, and even remove a particular action which we had recorded earlier. In addition to such basic options, you can even edit the type of action that you recorded and choose the type of locating technique that you want to use.

Conclusion:

With all such easy editing options and its ability to easily generate all the possible locators for a particular function in the application, Endtest.io makes it easy to write, edit, and scale automation scripts for even complex applications. In addition to that, you will also be able to view detailed results of the execution, take screenshots, schedule the test execution, detect changes, and so much more. Here at Codoid Innovations, we are always focused on delivering true automation solutions that require no interference or supervision. Endtest.io is an automation tool that helps us achieve this target and enables us to provide our clients with top-notch automation testing services.

by admin | Dec 23, 2021 | Automation Testing, Blog, Latest Post |

As the name suggests, codeless automation testing is the process of performing automation tests without having to write any code. Codeless automation testing can be instrumental in implementing continuous testing, as many automation scripts fail due to the lack of proper coding. It will also enable you to focus more on test creation and analysis instead of worrying about getting the code to work, maintaining it, and scaling it when needed. So if you are fairly new to codeless automation testing, you will find this blog useful as we will be pointing out the advantages of codeless automation testing by comparing it with Selenium testing. So let’s get started.

How is Codeless Automation Testing Different from Selenium Testing?

Selenium is a tool that greatly simplified the automation testing process. It even allows software testers to record their testing and play it back using Selenium IDE to create basic automation. But there was no easy option to edit the created test cases without being strong in coding. So if you weren’t strong in coding, the only other option would be to rerecord the entire test. But now with the introduction of codeless automation testing, you can go beyond the record and playback technique. So the scope of usage is broadened as it even makes it possible to edit the test cases with basic HTML, CSS, and XPath knowledge. Owing to the minimal use of coding, tests can be easily understood by people without much programming knowledge as well. To top it all off, the setup process is so simple that you can set it up in no time.

Getting Started with Codeless Automation Testing

So it is evident that codeless solutions are a lot more powerful in comparison. But one has to keep in mind the fact that they will work effectively only if they are used appropriately. It is always a good idea to get started with simple tests that can be validated easily. For example, if you are testing an e-commerce product, start by seeing if you are able to add a product to the cart. Once you familiarise yourself, you can go a notch higher and try testing the purchase and return/exchange process. It wouldn’t be wise to use codeless solutions in products that use third-party integrations or have dynamic and unpredictable output, as it becomes very difficult to validate them.

Local and Cloud Options

You can either opt for local codeless solutions or cloud-based solutions. As one of the best automation testing companies, we always prefer cloud-based solutions as they offer more advantages. Collaboration is one of the main plus points as it will enable seamless sharing of test data and test scenarios. In addition to that, you will be able to scale your services better with the help of the many virtual machines and mobile devices available online. Since your infrastructure becomes more robust, your overall process quality will also improve.

The Future of Codeless Automation Testing

Though complete codeless automation isn’t yet possible, in the same way that not all manual tests can be automated, it is the natural next step that testers have to take. Repetitive tests were replaced with automation using scripts, and now repetitive automation coding is being replaced with codeless solutions that utilize machine learning and AI. But as it always has been, manual testing and scripted automated testing will still play a major role in software testing.

by admin | Dec 21, 2021 | Automation Testing, Blog, Latest Post |

In today’s world, success is heavily dependent on the pace at which we work. So automating repetitive work is one of the quickest ways to become more efficient. But if you find automation to be daunting, you might fall back on manually doing the same tasks over and over again and end up in an endless loop of wasted time. So if you are someone who uses Google Sheets regularly to create and maintain data, this blog will be a lifesaver for you. As a leading automation testing services company, we are focused on dedicating our time to our core service by automating repetitive tasks. So in this blog, we will be exploring how to achieve Google Sheet Automation and get the job done in no time. Without any further ado, let’s get started.

The pace isn’t the only advantage that comes with automation. Since repetitive tasks are prone to oversight and manual errors, we will be able to avoid such issues by implementing automation. Now that we have established what we are aiming to achieve, let’s see how to do it. We will be making use of the available Google APIs to read and add data in Google Sheets using Python. So you wouldn’t have to spend hours of your time extracting data and then copy-pasting it to other spreadsheets.

Prerequisites:

In order to achieve Google Sheet Automation, you’ll be needing a few prerequisites such as

- A Google account.

- A Google Cloud Platform project with the API enabled.

- The pip package management tool

- Python 2.6 or greater (recent gspread releases require Python 3)

How to Achieve Google Sheet Automation using Google API Services?

Here are the steps that need to be followed to start using the Google Sheets API.

i. Create a project on Google Cloud console

ii. Activate the Google Drive API

iii. Create credentials for the Google Drive API

iv. Activate the Google Sheets API

v. Install a few modules with pip

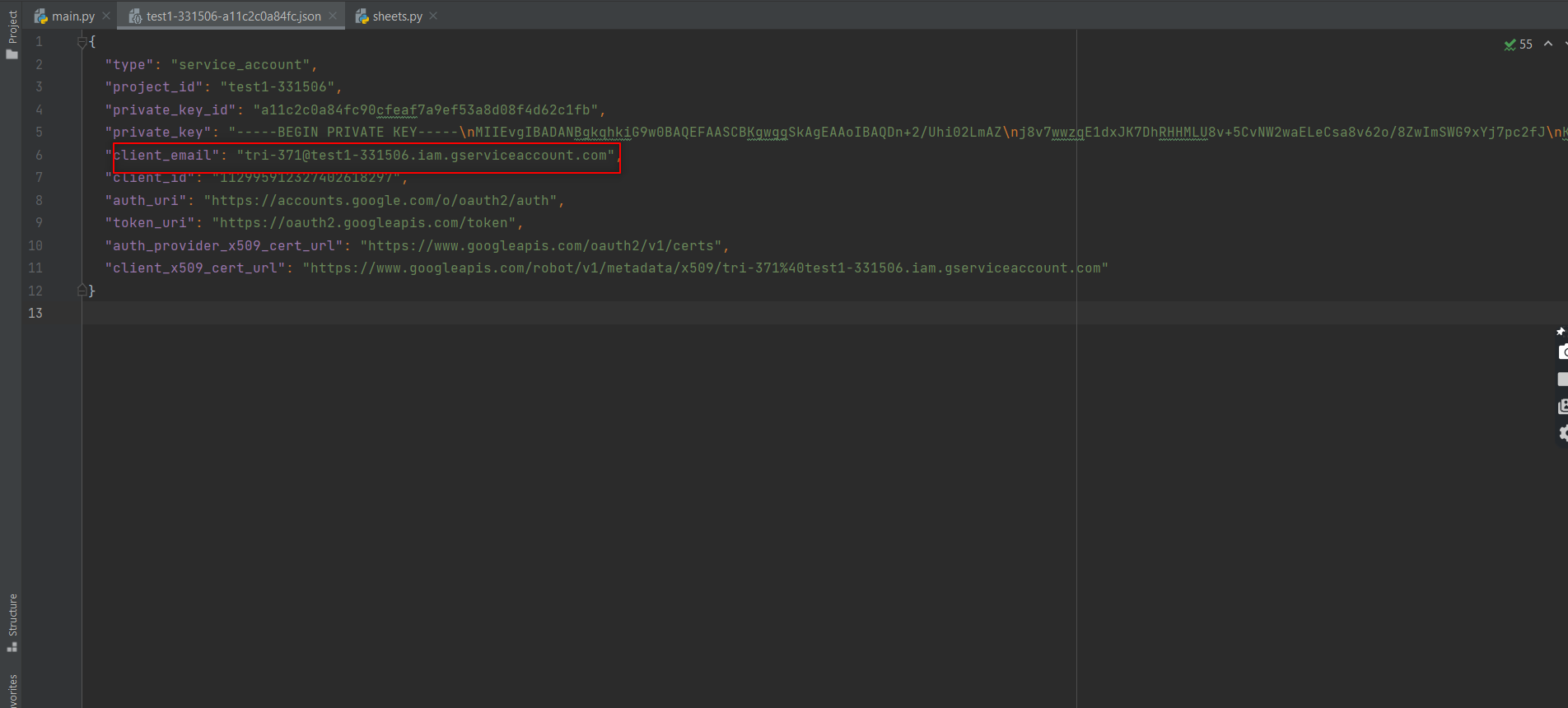

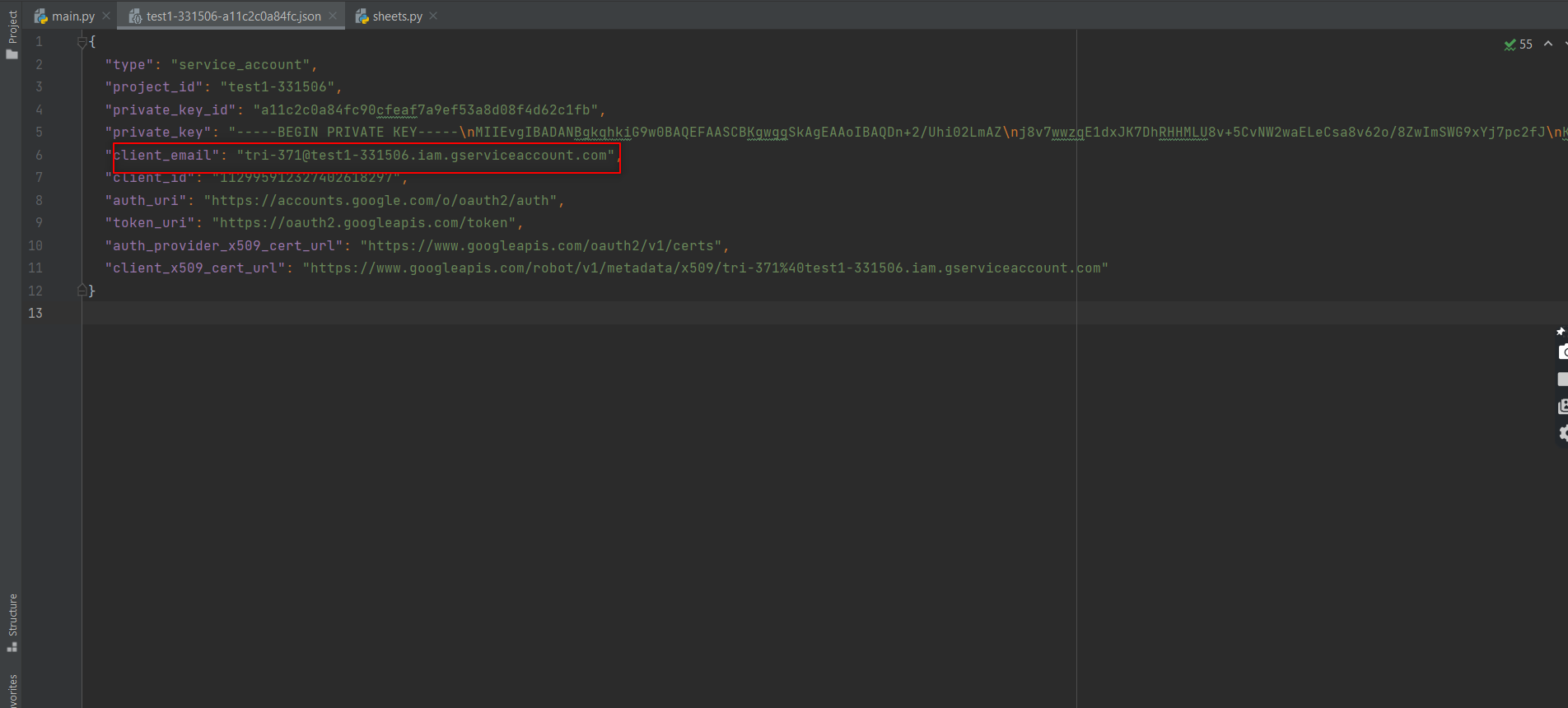

vi. Open the downloaded JSON file and get the client email

vii. Share the desired sheet with that email

viii. Connect to Google Sheet using the Python code

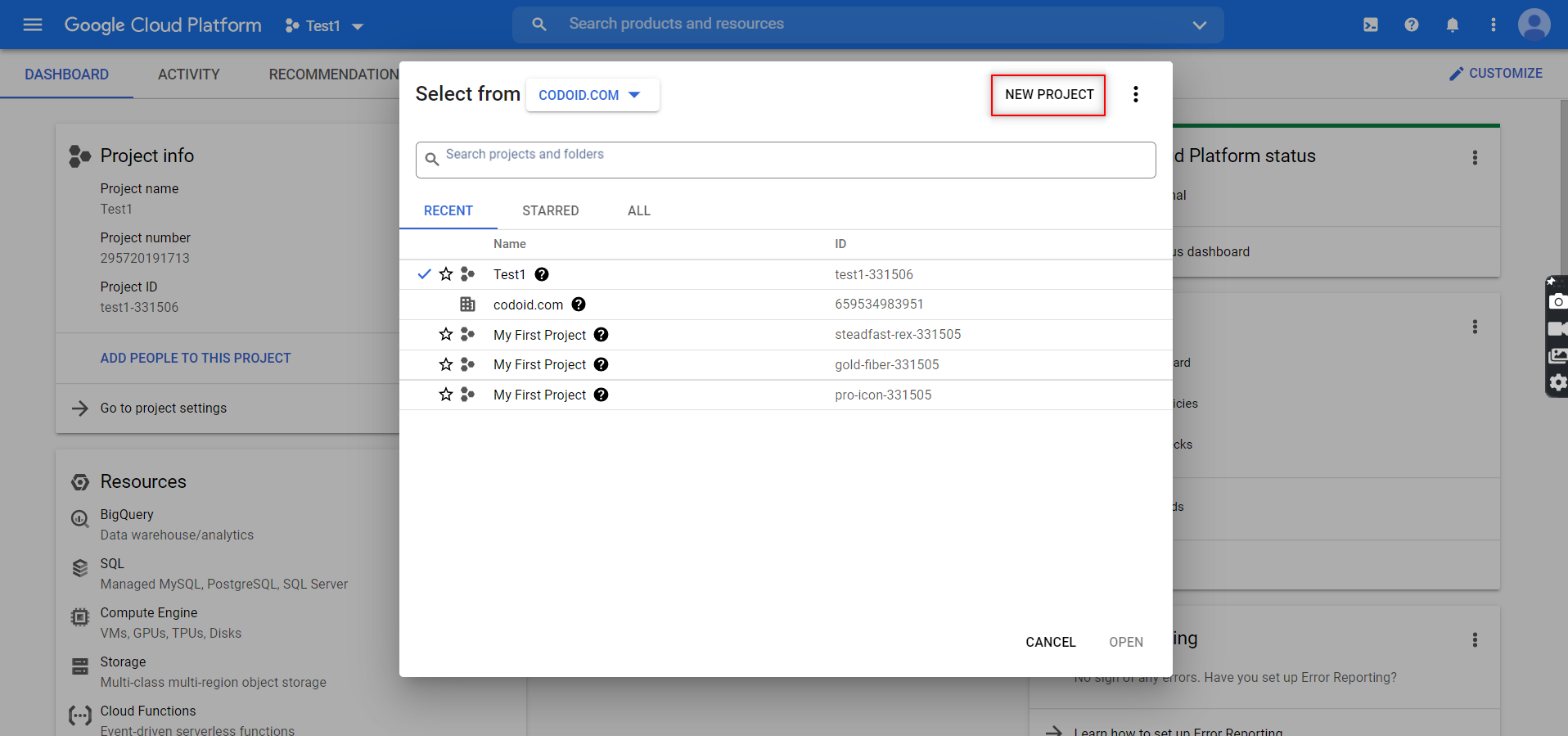

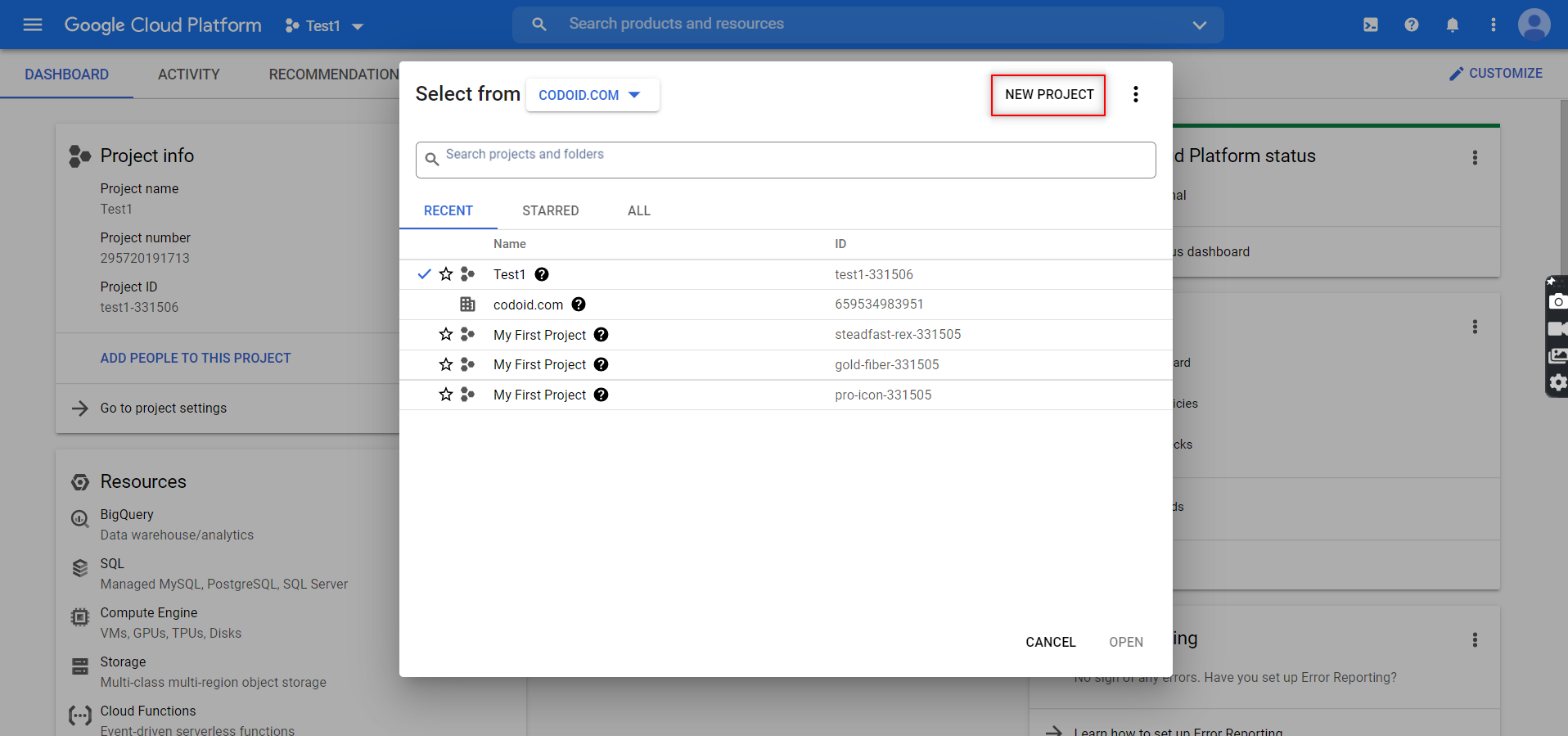

Create a project on Google cloud console

In order to read and update the data from Google Sheets in Python, we will have to create a Service Account. The reason behind this need is that it is a special form of account that can be used to make authorized API calls to Google Cloud Services. Almost everybody has a Google account today. In case you don’t, make sure to create one and then follow the steps below to create the service account.

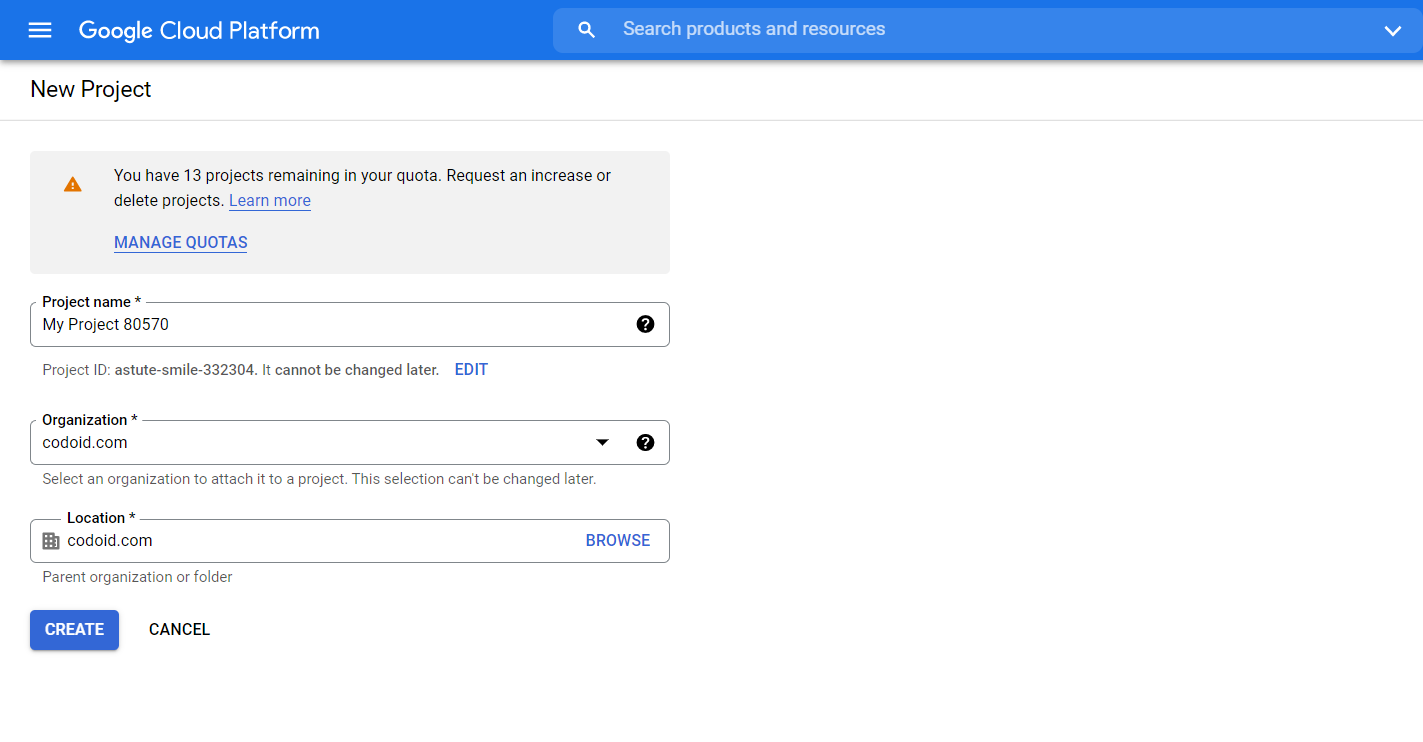

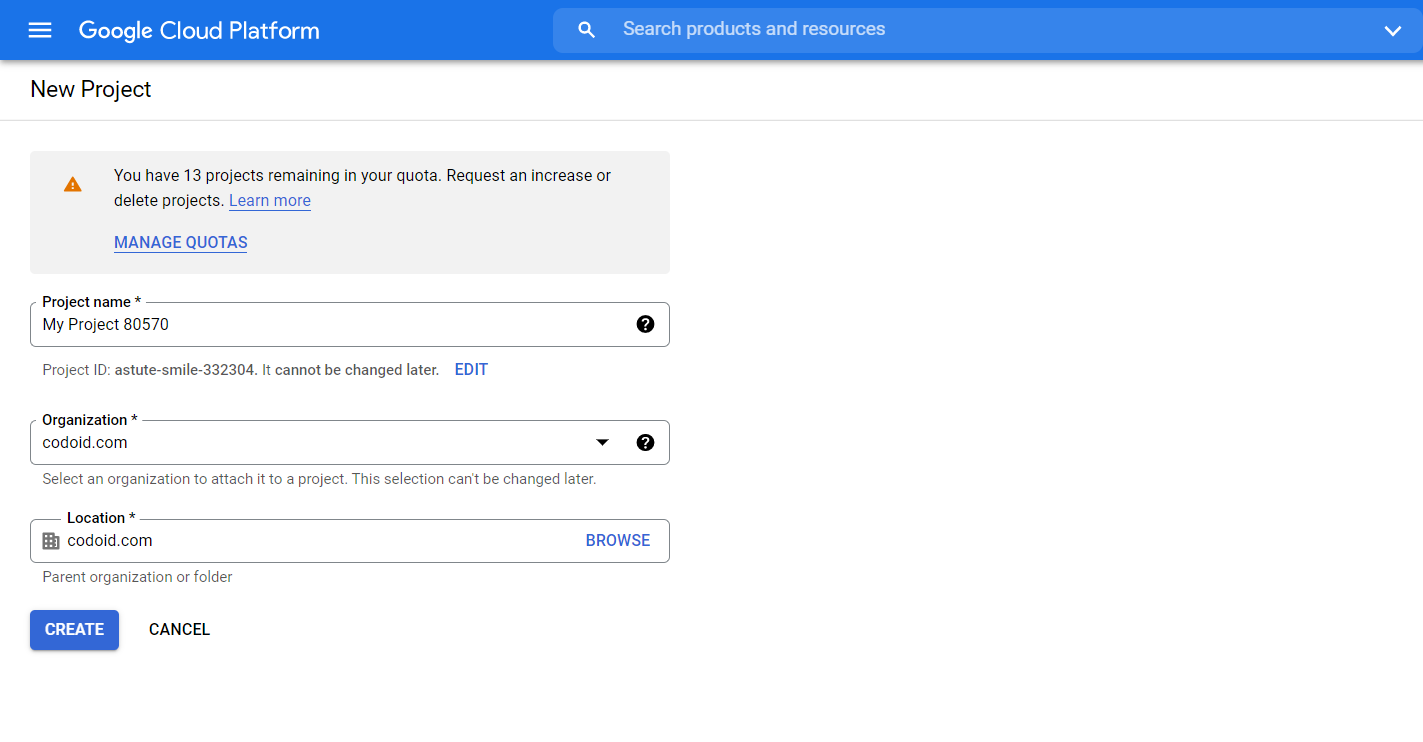

1. You will be able to create a new project by going to the developer’s console and clicking on the ‘New Project’ button.

2. You can then assign the project name and even enter the organization name if you prefer to. Once you are done, click on the ‘Create’ button.

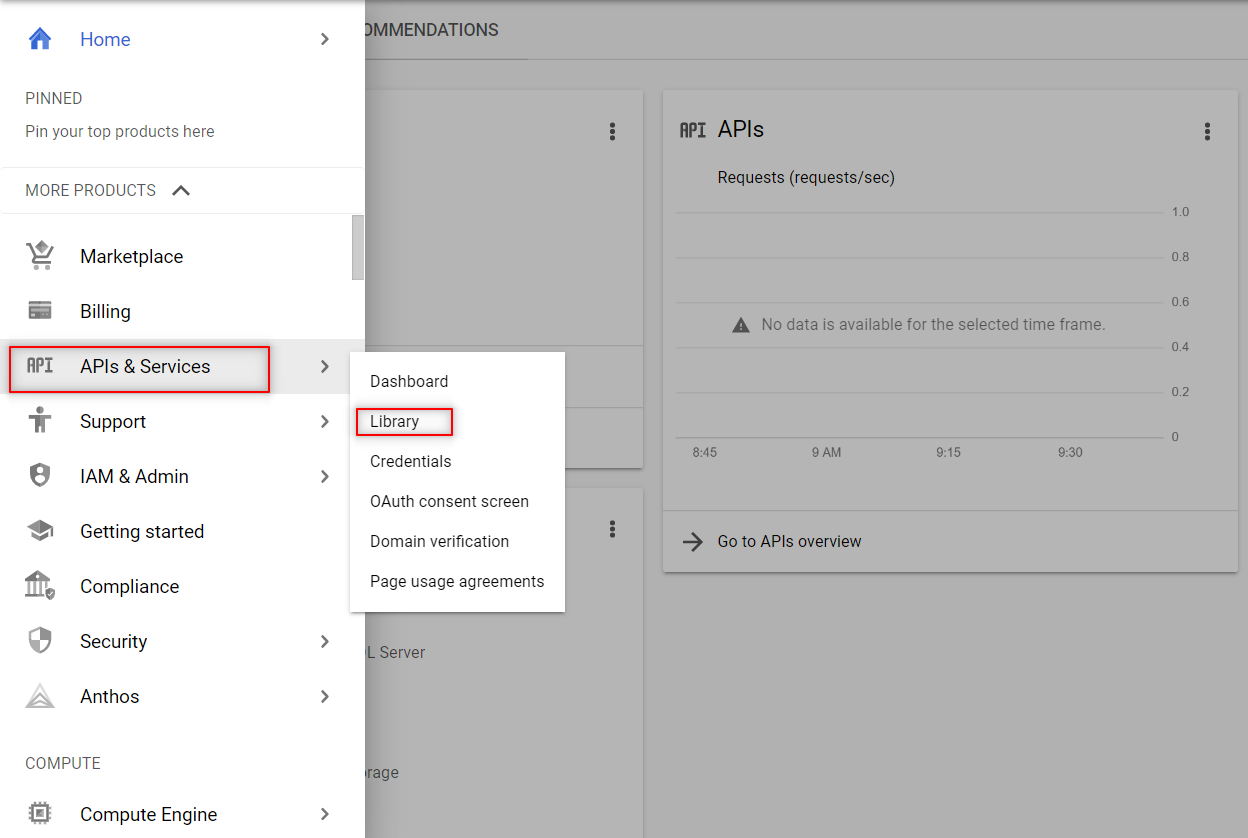

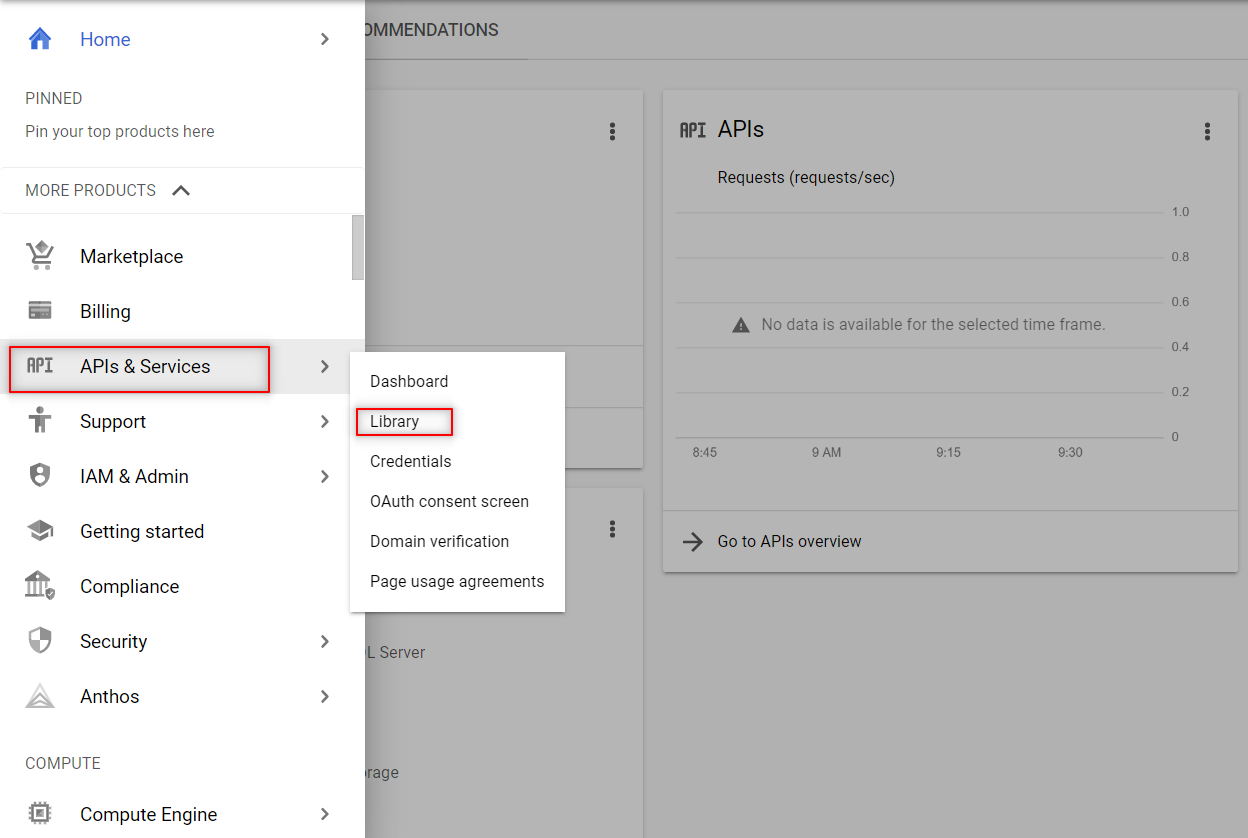

3. The next step after creating the project would be to enable the APIs that we require in this project. To access the different API options provided by Google, you have to click on Menu -> APIs & Services -> Library.

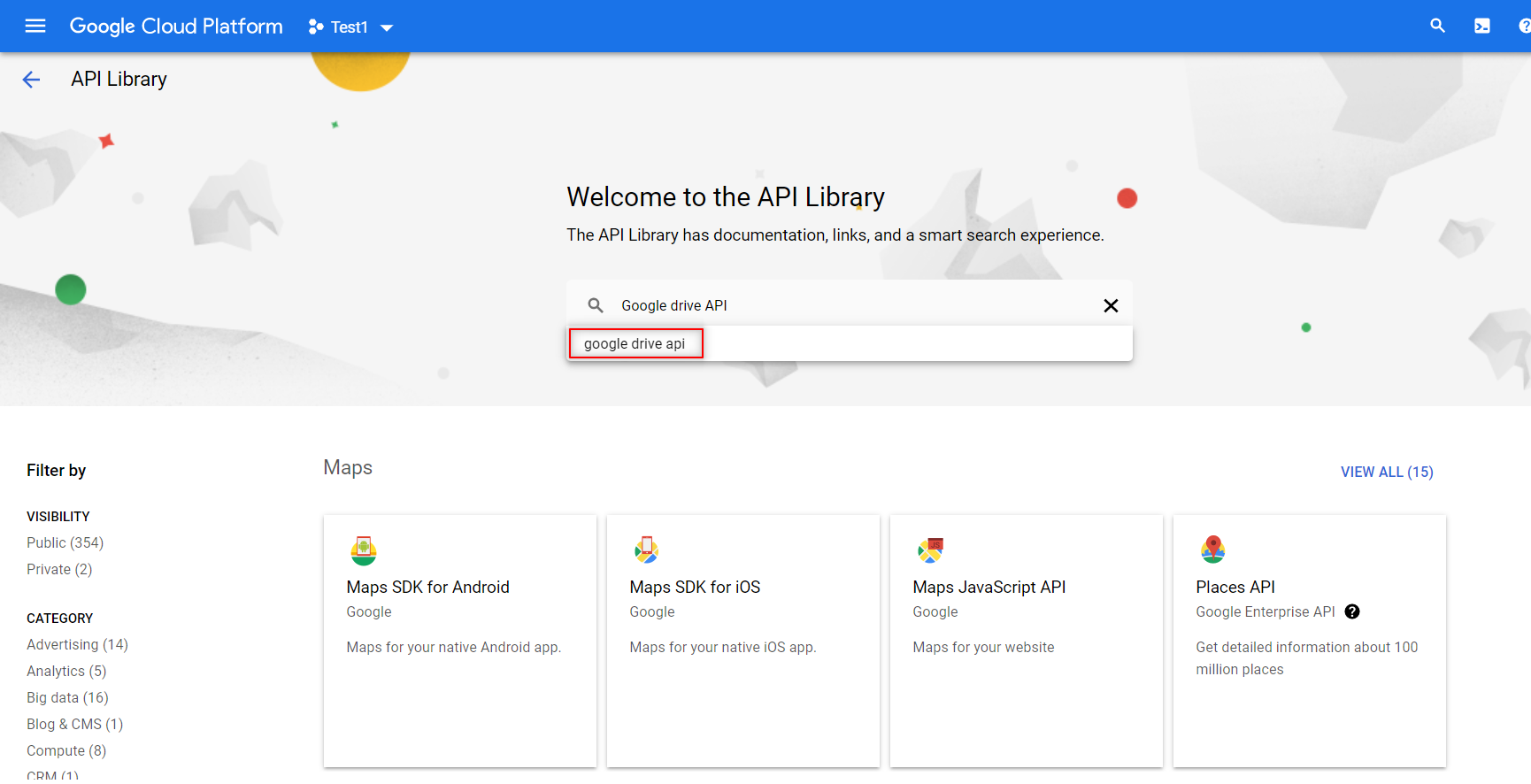

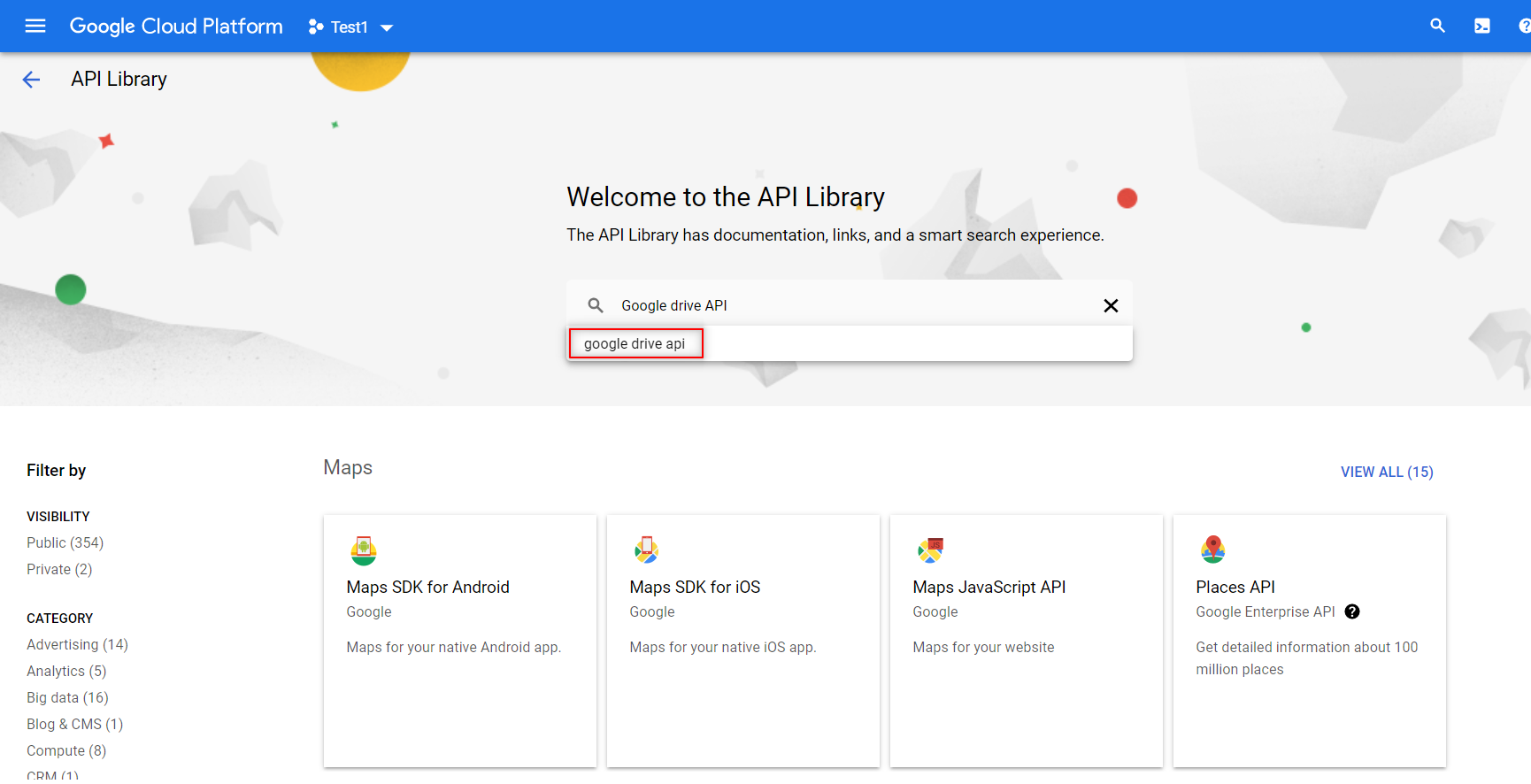

4. You have to search and enable the following two APIs from the library as shown below. You can enable them by just clicking on the enable button that appears.

- Google Sheets API

- Google Drive API

The Google Sheets API is the important one that will enable you to read and modify the data in Google Sheets.

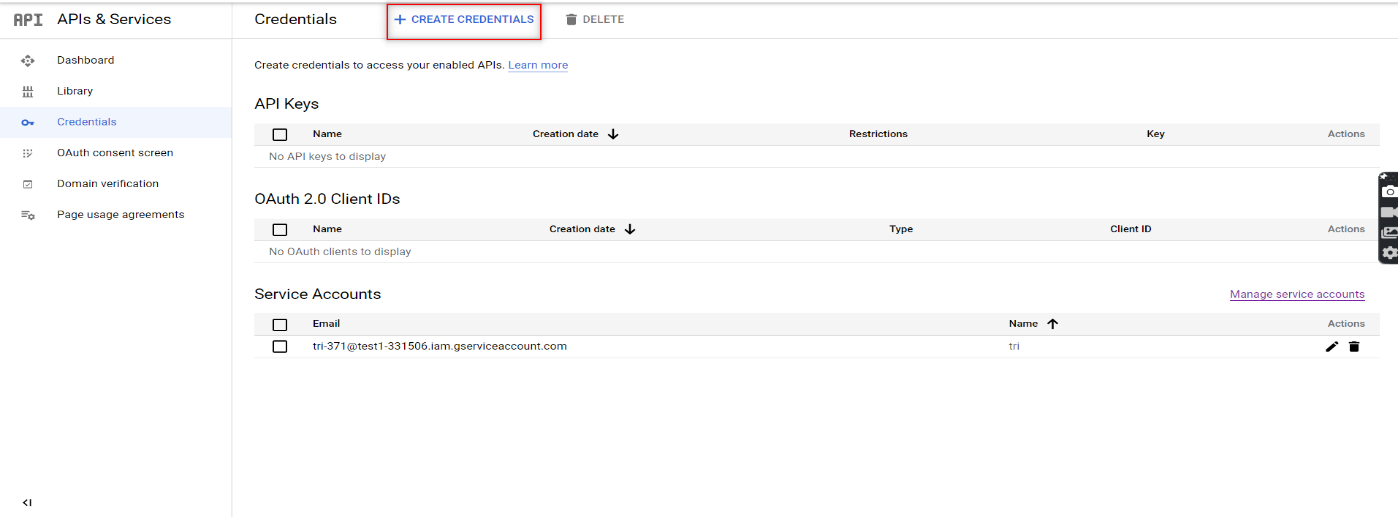

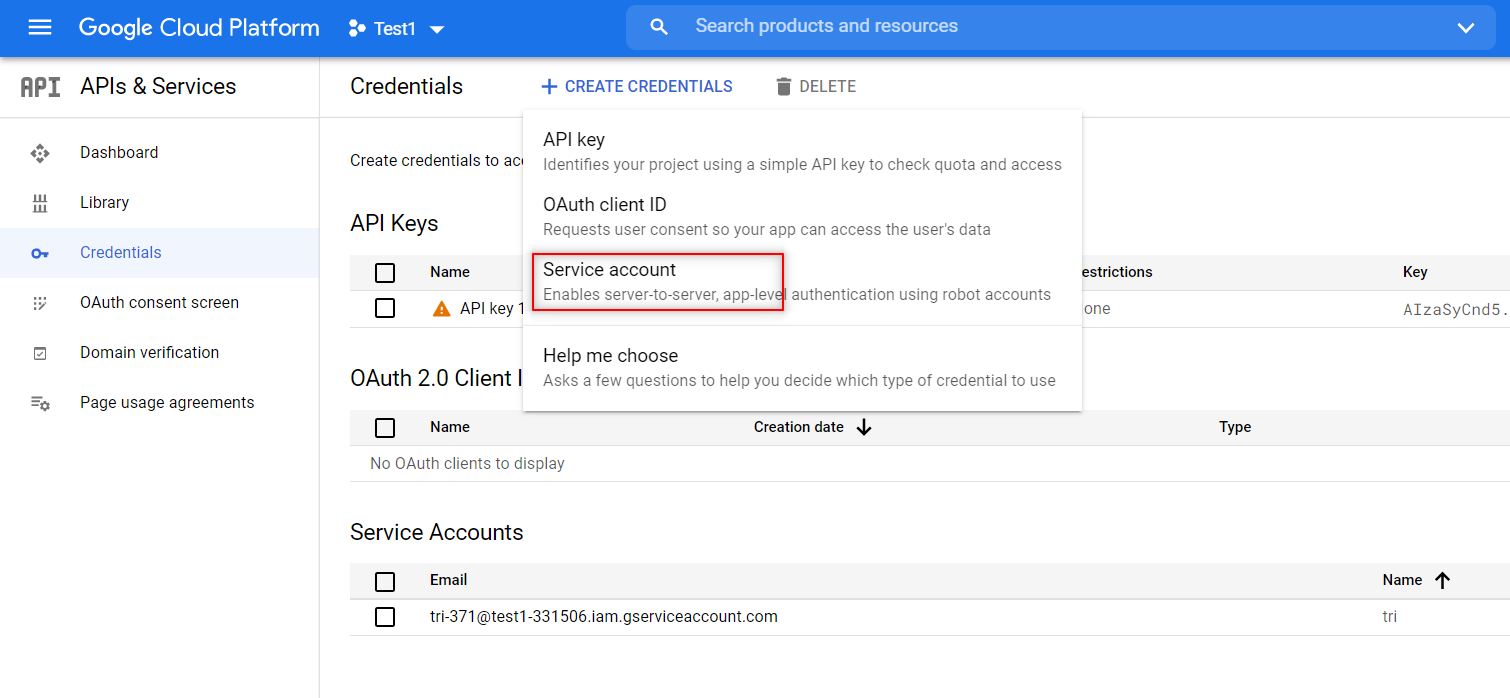

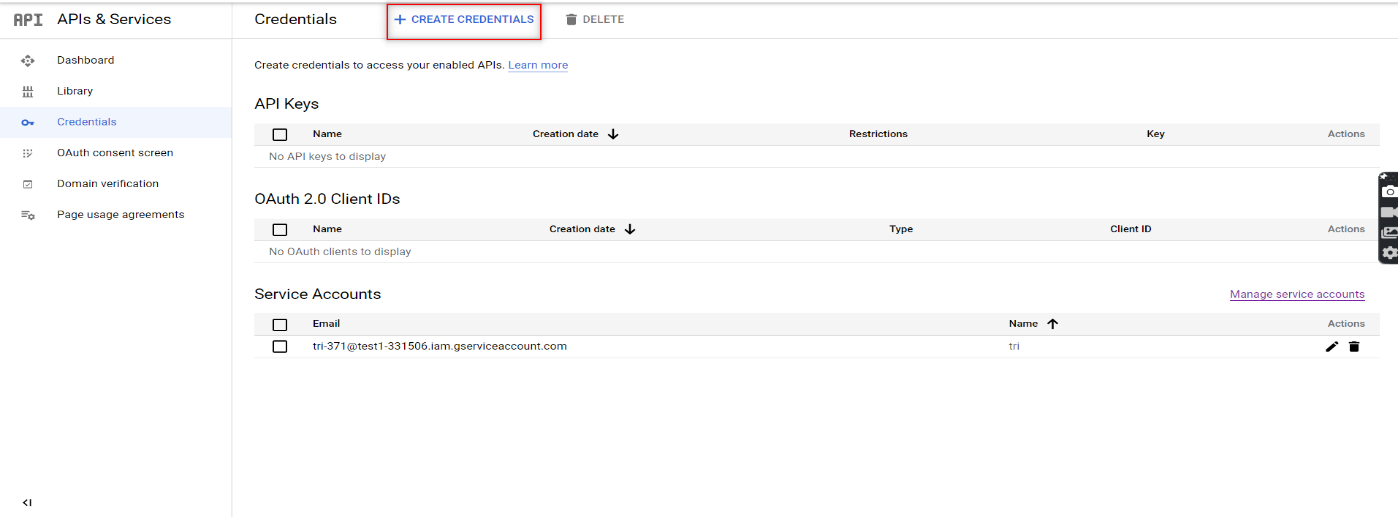

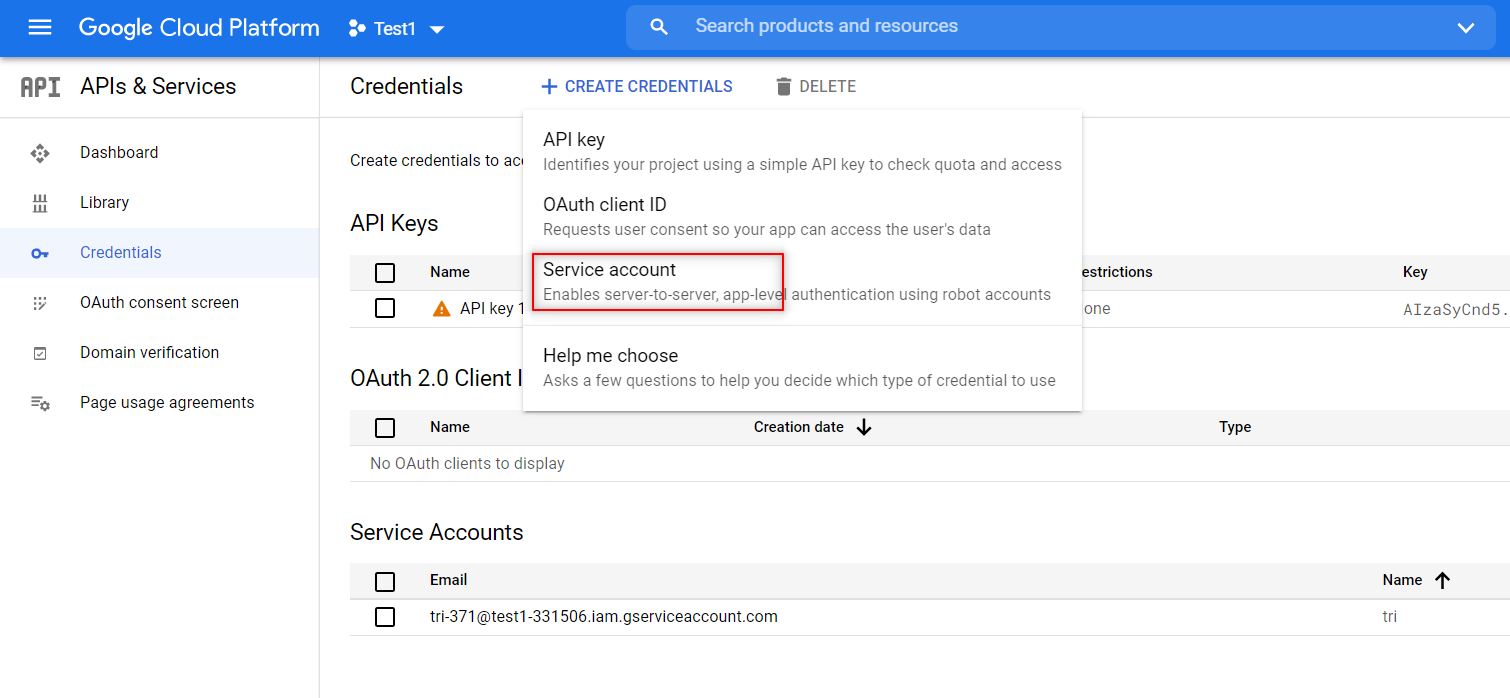

5. Now that you have enabled the required APIs, it’s time to create the credentials for the service account. You can do that by clicking the ‘Create Credentials’ button that can be found in the Credentials menu as shown in the image.

6. Once you click on that, a drop-down list will appear. Choose the ‘Service Account’ option from the list.

7. You would have to provide the Service Account details here in order to continue. That is why we had mentioned that you would have to create one prior to starting this process. Once you have provided the info, you can create the credentials by clicking on the ‘Create and Continue’ option.

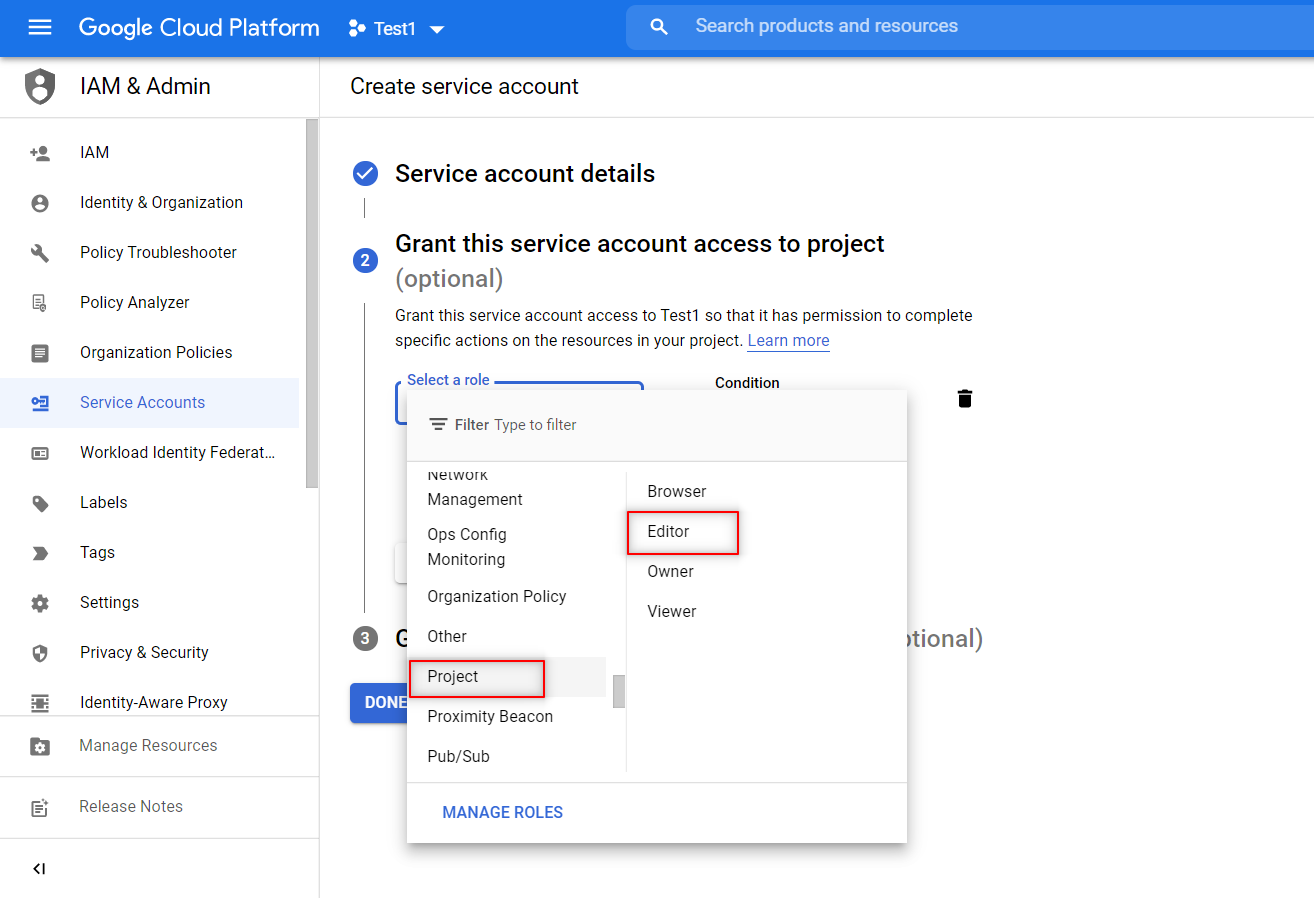

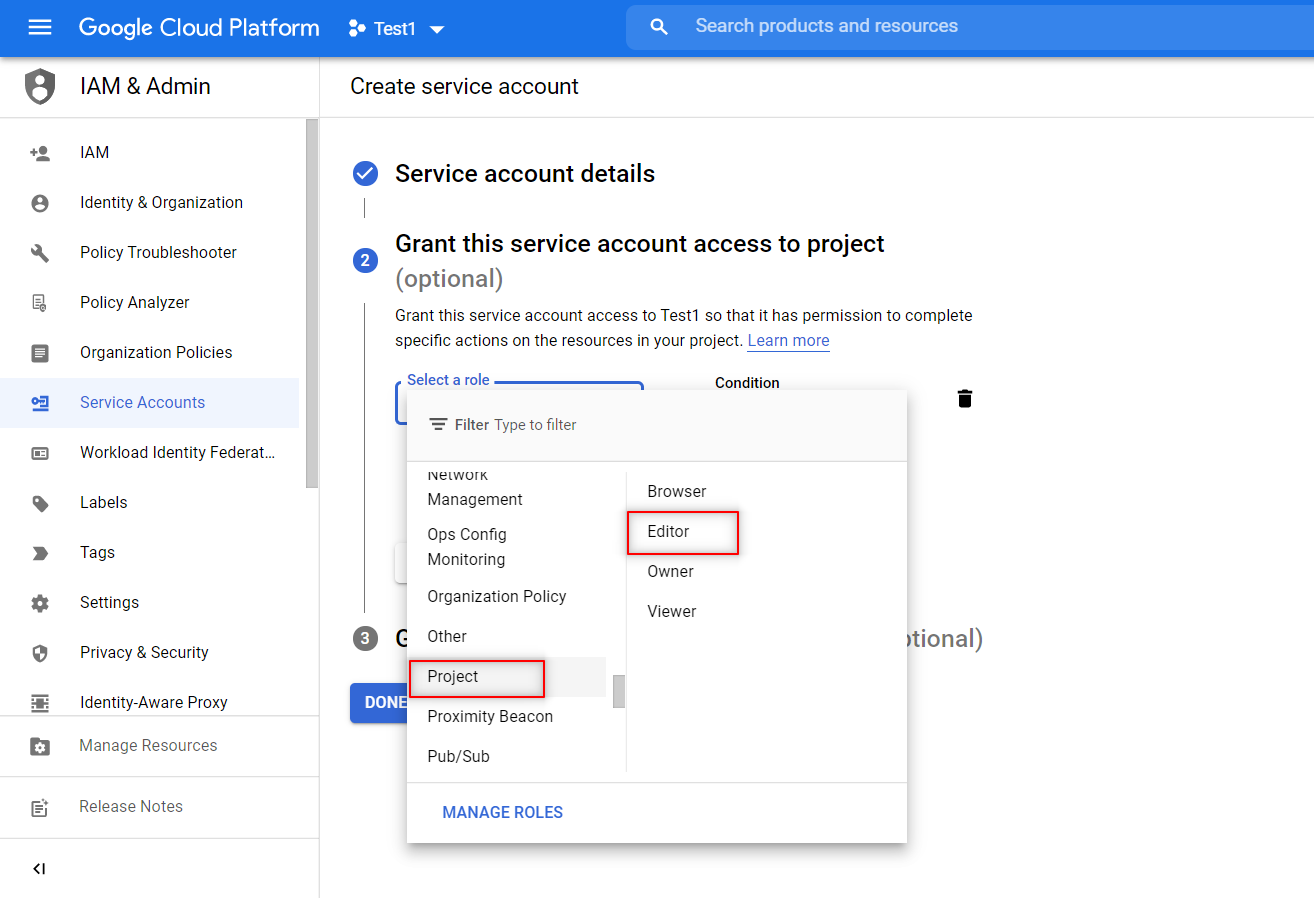

8. Similar to how we share Google spreadsheets with other collaborators by providing them various access permissions like edit or view only, we will have to provide access to our service account as well. Since we have to both read and write in the spreadsheets, you would have to give editing access rather than the view-only option.

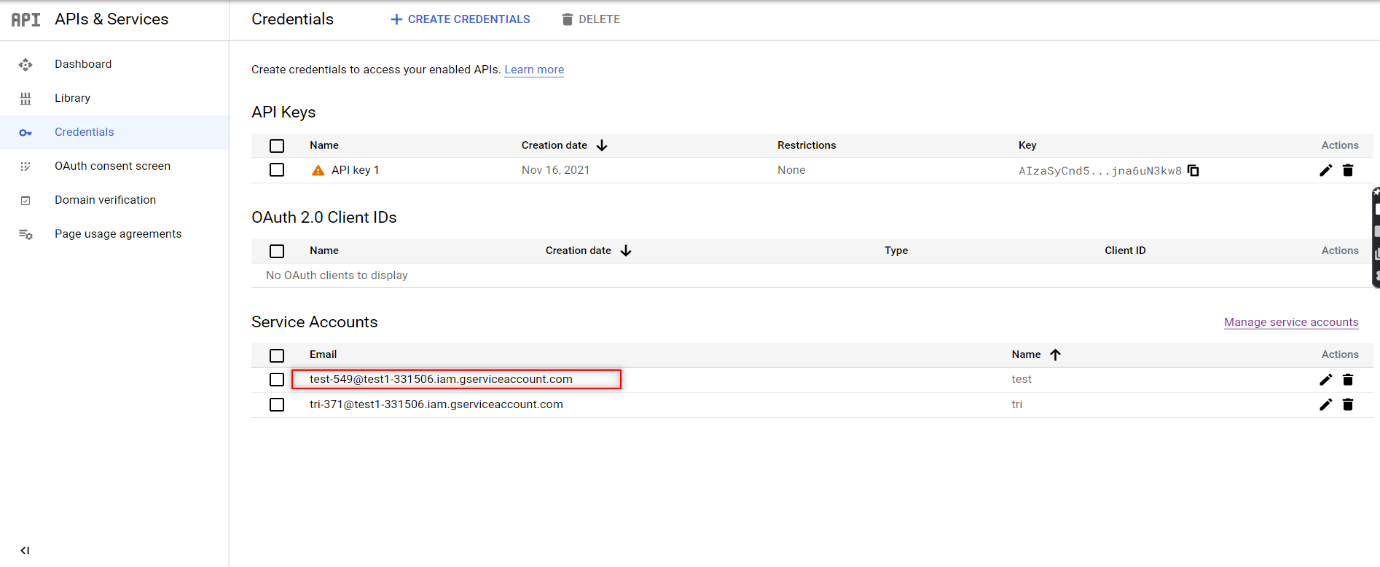

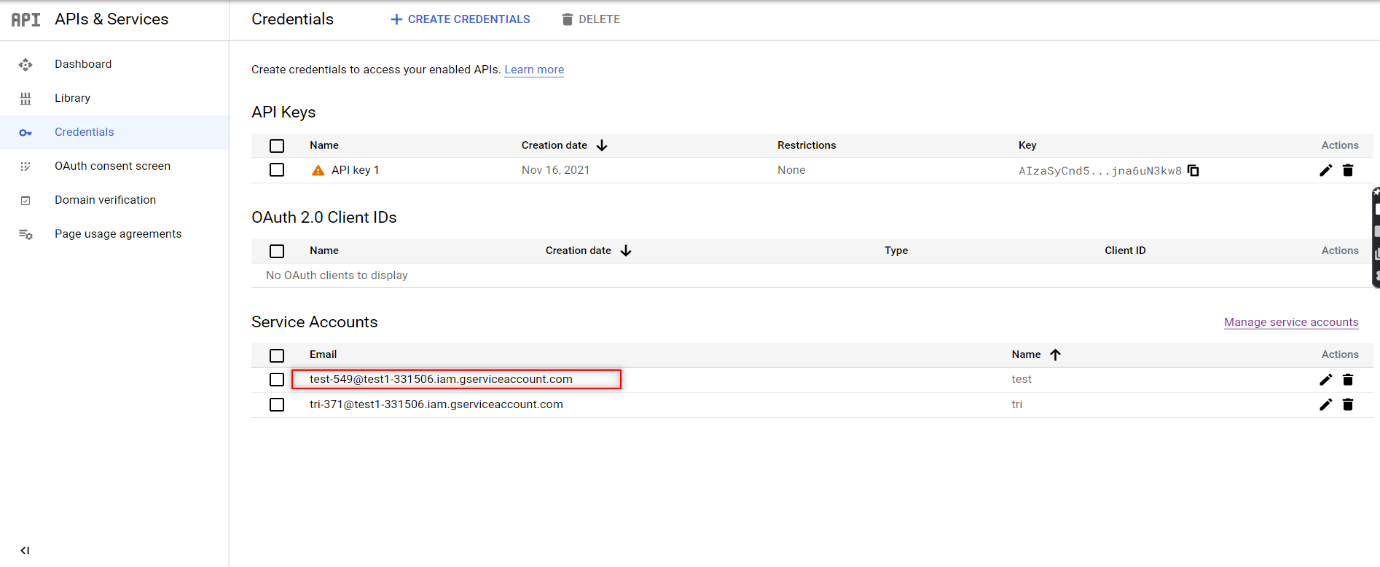

9. Once the credentials have been created, download the JSON file for the credentials. The JSON file will contain the keys that you will need to access the API. So our Google Service account is now ready to use.

Share the desired sheet with that email

Now that the service account credentials have been created, you have to give that account access to the spreadsheet. The address to use is the client email found in the downloaded JSON file.

Open the Google Sheet that you want to automate and click on the Share button to provide access to this client email. Now, you are all set to code and access the sheet using Python.
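If you would rather not hunt for the address by hand, a few lines of Python can read it from the key file. This is a minimal sketch that assumes the downloaded file is a standard service account key containing a `client_email` field; the function name is our own, and the path you pass in is whatever your downloaded file is called.

```python
import json


def read_client_email(key_path):
    """Return the service account address stored in a downloaded JSON key file."""
    with open(key_path, encoding="utf-8") as key_file:
        key_data = json.load(key_file)
    return key_data["client_email"]


# Example call, using the key file name that appears later in this post:
# print(read_client_email("test1-331506-a11c2c0a84fc.json"))
```

The printed address is the one to paste into the Share dialog of the sheet.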

Connect to Google Sheet using Python Code

First up, you have to open the downloaded JSON file in PyCharm. You can then create a Python file in the same project folder and start writing your script.

We then have to install two packages (gspread and oauth2client) with pip. To do that in PyCharm, open the terminal (or a command prompt) and use the below command

pip install gspread oauth2client

Now let’s take a look at the different segments of the python code one by one to understand it easily and successfully implement it to achieve Google Sheet Automation.

1. Importing the Libraries

We will need both the gspread and oauth2client packages to authorize and make API calls to Google Cloud Services.

import gspread

from oauth2client.service_account import ServiceAccountCredentials

from pprint import pprint

2. Define the scope of the application

Then, we will define the scope of the application and add the JSON file that has the credentials to access the API.

scope = ["https://spreadsheets.google.com/feeds",'https://www.googleapis.com/auth/spreadsheets',"https://www.googleapis.com/auth/drive.file",

"https://www.googleapis.com/auth/drive"]

3. Add credentials to the account

Once the scope has been defined, you have to add the credentials to the account.

creds = ServiceAccountCredentials.from_json_keyfile_name("test1-331506-a11c2c0a84fc.json", scope)

4. Authorize the client sheet

The next stage is authorizing the client sheet.

client = gspread.authorize(creds)

5. To open a Google Sheet

There is nothing that can be done without opening the Google Sheet in the first place.

sheet = client.open("Automation").sheet1

6. Get all records

Once the sheet is open, you can get all the data present in the sheet using the get_all_records function. It returns a list of dictionaries, one per row, with the values of the header row as keys.

data = sheet.get_all_records()

pprint(data)
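To show what you can do with that result, here is a small sketch that works on data shaped like the output of get_all_records(): one dictionary per data row, keyed by the header row. It makes no API call, and the column names and sample values are made up for illustration.

```python
# Made-up sample in the same shape as the value returned by get_all_records()
records = [
    {"Id": 1, "Name": "Asha", "Status": "Pass"},
    {"Id": 2, "Name": "Ben", "Status": "Fail"},
    {"Id": 3, "Name": "Chen", "Status": "Pass"},
]


def rows_where(records, column, value):
    """Return the rows whose given column equals the given value."""
    return [row for row in records if row.get(column) == value]


failed = rows_where(records, "Status", "Fail")
print([row["Name"] for row in failed])
```

In a real script you would pass the `data` variable from the step above instead of the sample list.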

7. To get a specific row

Though reading all the data is a great feature, that wouldn’t be needed every single time. So this is the code that you can use to get data from a specific row.

row = sheet.row_values(3) # Get a specific row

pprint(row)

8. To get a specific Column

Similarly, we will also be able to access data from a specific column.

col = sheet.col_values(2) # Get a specific column

pprint(col)

9. To Get the value of a specific cell

We can even be very precise and access data from a specific cell too.

cell = sheet.cell(1,2).value # Get the value of a specific cell

pprint(cell)
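Keep in mind that the row and column numbers here start at 1, so cell(1,2) refers to row 1, column 2, which is cell B1 in the spreadsheet. The helper below is a minimal sketch of that conversion written from scratch for illustration; gspread’s own utils module offers a comparable helper, so treat this one as a learning aid rather than something you need to ship.

```python
def rowcol_to_a1(row, col):
    """Convert 1-based (row, col) numbers to an A1-style label."""
    if row < 1 or col < 1:
        raise ValueError("row and col are 1-based and must be positive")
    letters = ""
    while col > 0:
        # Columns behave like a base-26 number without a zero digit
        col, remainder = divmod(col - 1, 26)
        letters = chr(ord("A") + remainder) + letters
    return f"{letters}{row}"


print(rowcol_to_a1(1, 2))    # B1
print(rowcol_to_a1(10, 27))  # AA10
```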

10. To insert the data into a sheet

We have seen how to read the data, now let’s see how we can insert data.

insertRow = [ 15, "Logesh"]

sheet.insert_row(insertRow, 15)

11. To delete a certain row

Not all data in a sheet will be needed forever, so you can even delete a row of data from your sheet.

sheet.delete_rows(14) # Delete row 14

12. To update one cell

If at all you want to change the data in an existing cell, you wouldn’t have to delete the content and then add the new one again. Instead, you can just update the content in the cell.

sheet.update_cell(2,4, "CHANGED") # Update one cell

13. To Get the number of rows in the sheet

Beyond reading and editing the content in the sheet, you can even get to know the number of rows in a sheet as it might be needed for your automation process. Note that row_count reports the size of the sheet’s grid, so it includes empty rows.

numRows = sheet.row_count # Get the number of rows in the sheet

pprint(numRows)

14. To get the length of the data

Likewise, you can get the number of records read from the sheet if you want to use that in your automation as well.

pprint(len(data)) # Number of records returned by get_all_records()

Source Code:

Since we have explained everything part by part, now it’ll be much easier for you to go through the source code and understand it clearly.

import gspread

from oauth2client.service_account import ServiceAccountCredentials

from pprint import pprint

scope = ["https://spreadsheets.google.com/feeds",'https://www.googleapis.com/auth/spreadsheets',"https://www.googleapis.com/auth/drive.file",

"https://www.googleapis.com/auth/drive"]

creds = ServiceAccountCredentials.from_json_keyfile_name("test1-331506-a11c2c0a84fc.json", scope)

client = gspread.authorize(creds)

sheet = client.open("Automation").sheet1

data = sheet.get_all_records()

pprint(data)

row = sheet.row_values(3) # Get a specific row

pprint(row)

col = sheet.col_values(2) # Get a specific column

pprint(col)

cell = sheet.cell(1,2).value # Get the value of a specific cell

pprint(cell)

insertRow = [ 15, "Logesh"]

sheet.insert_row(insertRow, 15) # Insert the list as a new row at index 15; existing rows shift down

sheet.delete_rows(14)

sheet.update_cell(2,4, "CHANGED") # Update one cell

numRows = sheet.row_count # Get the number of rows in the sheet

pprint(numRows)

pprint(len(data))

Conclusion:

We hope you now have a clear idea of how to achieve Google Sheet Automation as per your needs and make the most out of the tool to save your valuable time. As a test automation services provider, we understand that not everything can be automated, but if you approach any automation task with a negative mindset, you’ll never be able to unravel the solutions to overcome your obstacles. So follow and implement these methods with a positive mindset and you will definitely witness performance improvement at your end.