by Jacob | Mar 20, 2025 | Automation Testing, Blog, Latest Post |

Testbeats is a powerful test reporting and analytics platform that enhances automation testing and test execution monitoring by providing detailed insights, real-time alerts, and seamless integration with automation frameworks. When integrated with Playwright, Testbeats simplifies test result publishing, ensures instant notifications via communication tools like Slack, Microsoft Teams, and Google Chat, and offers structured reports for better decision-making. One of the key advantages of Testbeats is its ability to work seamlessly with CucumberJS, a behavior-driven development (BDD) framework that runs on Node.js using the Gherkin syntax. This makes it an ideal solution for teams looking to combine Playwright’s automation capabilities with structured and collaborative test execution. By using Testbeats, QA teams and developers can streamline their workflows, minimize debugging time, and enhance visibility into test outcomes, ultimately improving software reliability in agile and CI/CD environments.

This blog explores the key features of Testbeats, highlights its benefits, and demonstrates how it enhances Playwright test automation with real-time alerts, streamlined reporting, and comprehensive test analytics.

Key Features of Testbeats

- Automated Test Execution Tracking – Captures and organizes test execution data from multiple automation frameworks, ensuring a structured and systematic approach to test result management.

- Multi-Platform Integration – Seamlessly connects with various test automation frameworks, making it a versatile solution for teams using different testing tools.

- Customizable Notifications – Allows users to configure notifications based on test outcomes, ensuring relevant stakeholders receive updates as needed.

- Advanced Test Result Filtering – Enables filtering of test reports based on status, execution time, and test categories, simplifying test analysis.

- Historical Data and Trend Analysis – Maintains test execution history, helping teams track performance trends over time for better decision-making.

- Security & Role-Based Access Control – Provides secure access management, ensuring only authorized users can view or modify test results.

- Exportable Reports – Allows exporting test execution reports in various formats (CSV, JSON, PDF), making it easier to share insights across teams.

Highlights of Testbeats

1. Streamlined Test Reporting – Simplifies publishing and managing test results from various frameworks, enhancing collaboration and accessibility.

2. Real-Time Alerts – Sends instant notifications to Google Chat, Slack, and Microsoft Teams, keeping teams informed about test execution status.

3. Comprehensive Reporting – Provides in-depth test execution reports on the Testbeats portal, offering actionable insights and analytics.

4. Seamless CucumberJS Integration – Supports behavior-driven development (BDD) with CucumberJS, enabling efficient execution and structured test reporting.

By leveraging these features and highlights, Testbeats enhances automation workflows, improves test visibility, and ensures seamless communication within development and QA teams. Now, let’s dive into the integration setup and execution process.

Guide to Integrating Testbeats with Playwright

Prerequisites

Before proceeding with the integration, ensure that you have the following essential components set up:

- Node.js installed (v14 or later)

- Playwright installed in your project

- A Testbeats account

- API Key from Testbeats

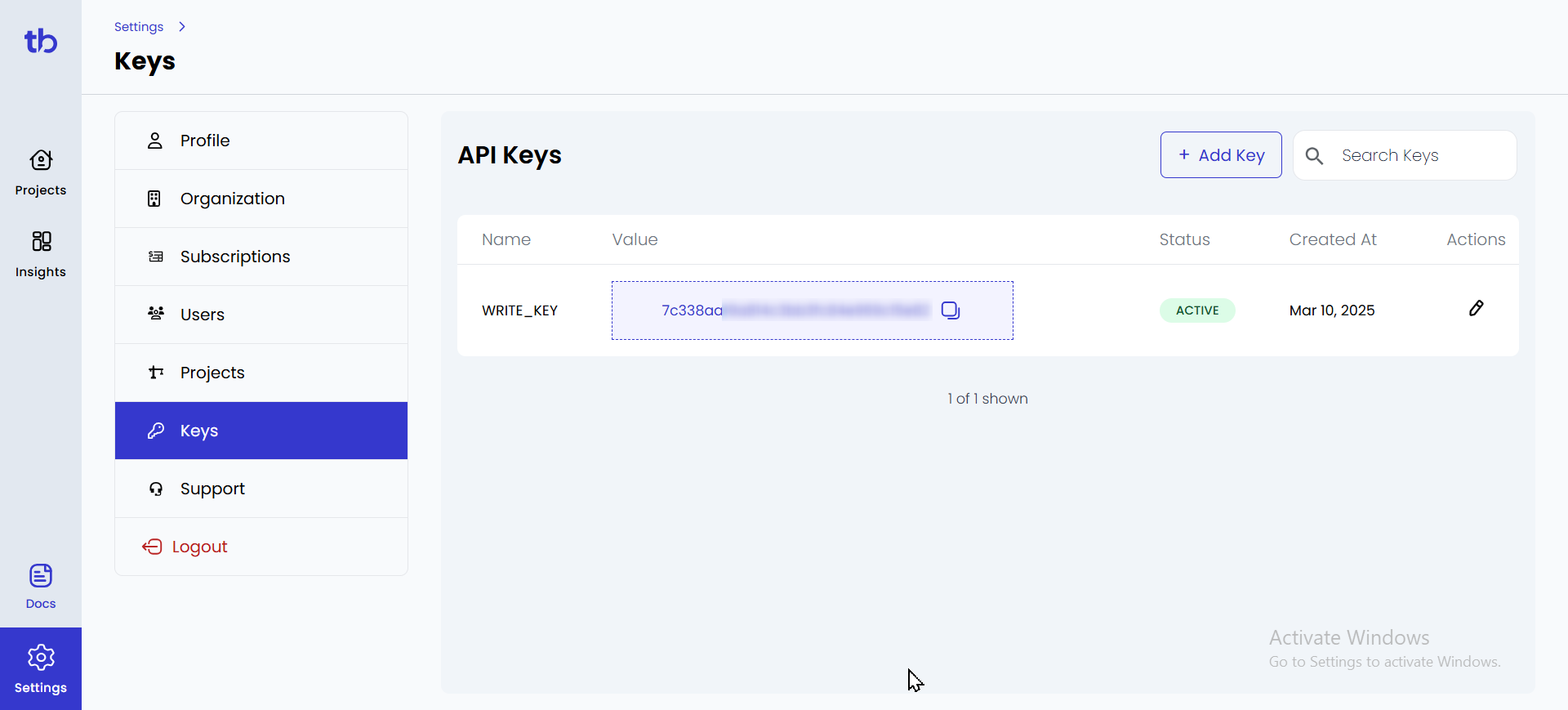

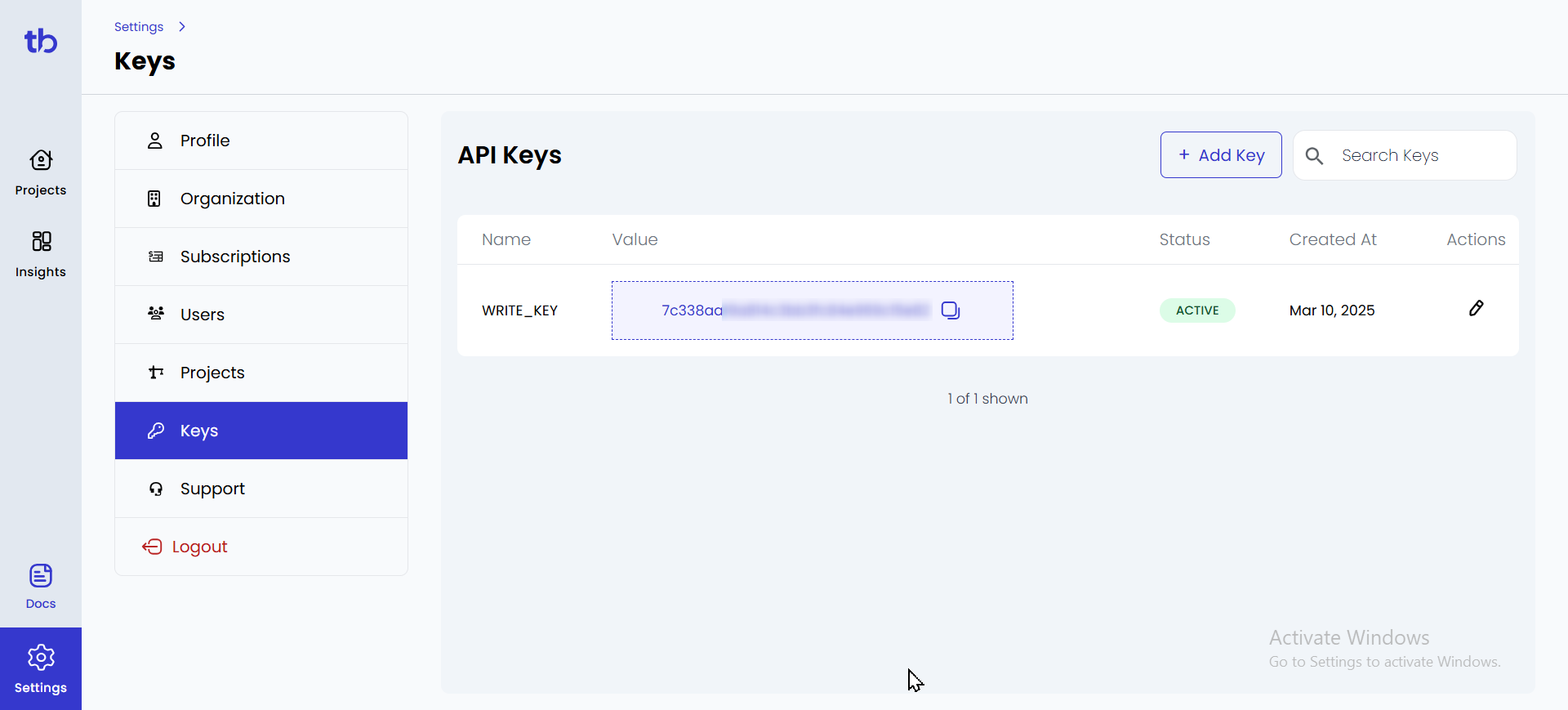

Step 1: Sign in to TestBeats

- Go to the TestBeats website and sign in with your credentials.

- Once signed in, create an organization.

- Navigate to Settings under the Profile section.

- In the Keys section, you will find your API Key — copy it for later use.

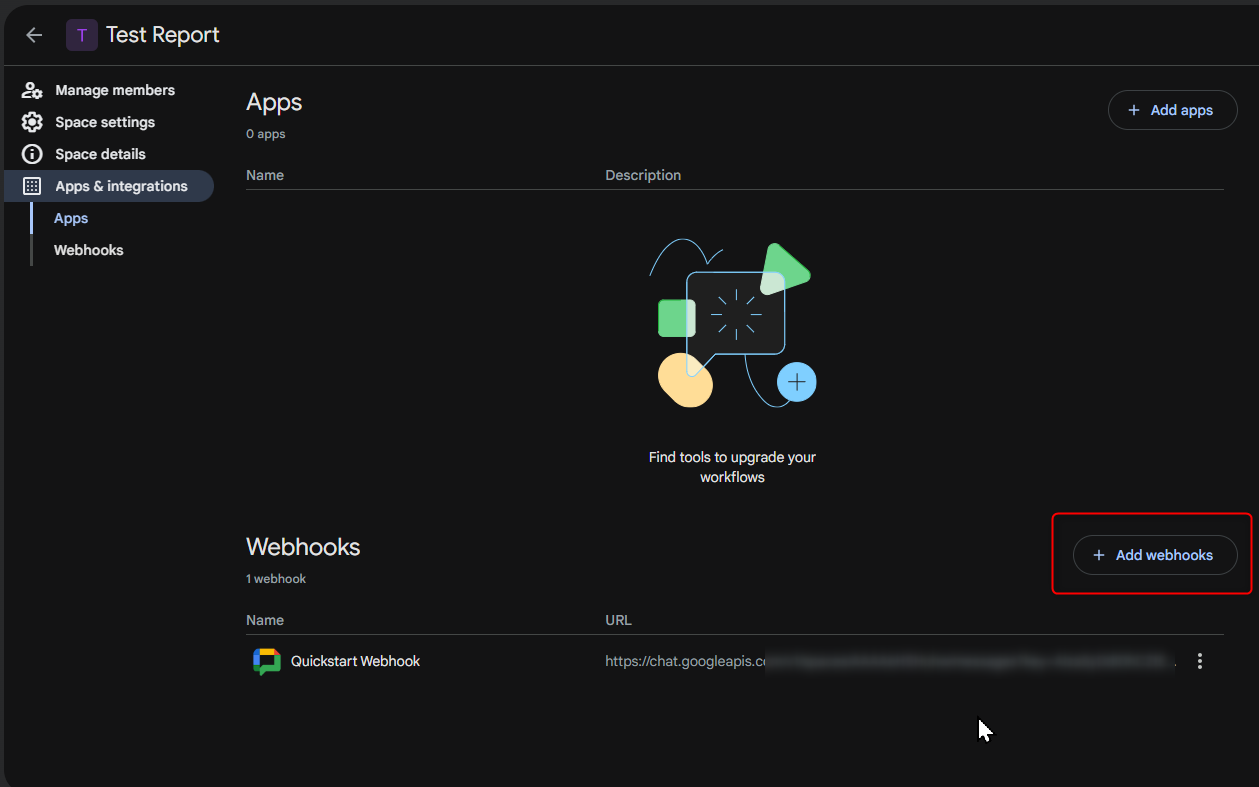

Step 2: Setting Up a Google Chat Webhook

Google Chat webhooks allow TestBeats to send test execution updates directly to a chat space.

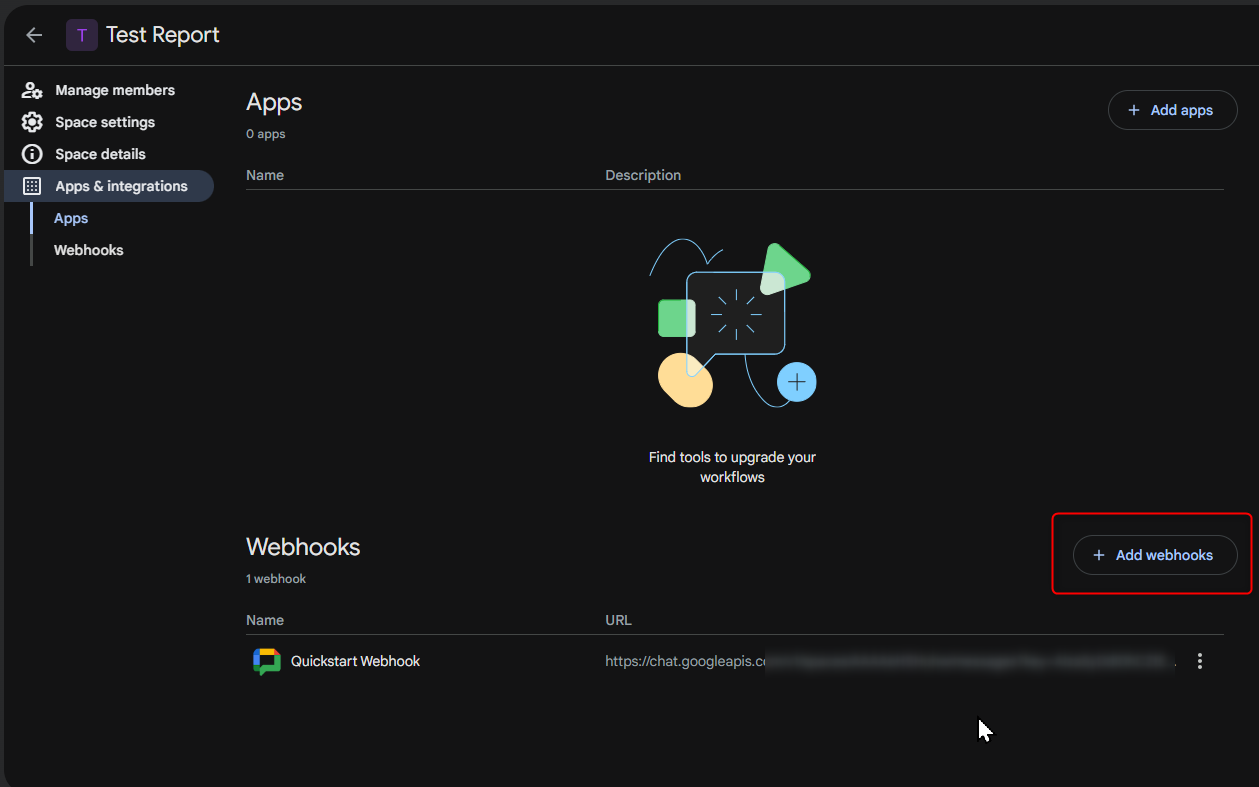

Create a Webhook in Google Chat

- Open Google Chat on the web and select the chat space where you want to receive notifications.

- Click on the space name and select Manage Webhooks.

- Click Add Webhook and provide a name (e.g., “Test Execution Alerts”).

- Google Chat will generate a Webhook URL. Copy this URL for later use.
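Before wiring the webhook into TestBeats, it can help to confirm that the URL works on its own. The snippet below is a minimal sketch, assuming Node.js 18 or later (for the built-in `fetch`) and the simple text message format that Google Chat incoming webhooks accept. The function names `buildChatRequest` and `sendChatMessage` are hypothetical helpers written for this illustration.

```javascript
// Builds the HTTP request for a Google Chat incoming webhook.
// The payload uses the simple {"text": "..."} message format.
function buildChatRequest(webhookUrl, text) {
  return {
    url: webhookUrl,
    options: {
      method: "POST",
      headers: { "Content-Type": "application/json; charset=UTF-8" },
      body: JSON.stringify({ text }),
    },
  };
}

// Sends the message using the global fetch available in Node.js 18+.
async function sendChatMessage(webhookUrl, text) {
  const { url, options } = buildChatRequest(webhookUrl, text);
  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`Webhook call failed with HTTP ${response.status}`);
  }
  return response.json();
}
```

Calling `sendChatMessage(yourWebhookUrl, "Webhook check")` with the URL copied in the last step should post a plain text message to the space. If it does not, the problem is in the webhook setup rather than in TestBeats.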

Step 3: Create a TestBeats Configuration File

In your Playwright Cucumber framework, create a configuration file named testbeats.config.json in the root directory.

Sample Configuration for Google Chat Webhook

{
  "api_key": "your_api_key",
  "targets": [
    {
      "name": "chat",
      "inputs": {
        "url": "your_google_chat_webhook_url",
        "title": "Test Execution Report",
        "only_failures": false
      }
    }
  ],
  "extensions": [
    { "name": "quick-chart-test-summary" },
    { "name": "ci-info" }
  ],
  "results": [
    {
      "type": "cucumber",
      "files": ["reports/cucumber-report.json"]
    }
  ]
}

Key Configuration Details:

- “api_key” – Your TestBeats API Key.

- “url” – Paste the Google Chat webhook URL here.

- “only_failures” – If set to true, only failed tests trigger notifications.

- “files” – Path to your Cucumber JSON report.
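Because the configuration file contains an API key and a webhook URL, some teams may prefer to generate it at run time rather than commit it. The following is a minimal sketch of that idea, assuming the secrets are supplied through environment variables named `TESTBEATS_API_KEY` and `GOOGLE_CHAT_WEBHOOK_URL`. Those names, and the helper `buildTestbeatsConfig`, are chosen here for illustration and are not defined by TestBeats. The returned object mirrors the sample configuration above.

```javascript
// Hypothetical helper: returns an object with the same shape as the
// sample testbeats.config.json in this guide.
function buildTestbeatsConfig({
  apiKey,
  webhookUrl,
  reportPath = "reports/cucumber-report.json",
}) {
  if (!apiKey || !webhookUrl) {
    throw new Error("Both apiKey and webhookUrl are required");
  }
  return {
    api_key: apiKey,
    targets: [
      {
        name: "chat",
        inputs: {
          url: webhookUrl,
          title: "Test Execution Report",
          only_failures: false,
        },
      },
    ],
    extensions: [{ name: "quick-chart-test-summary" }, { name: "ci-info" }],
    results: [{ type: "cucumber", files: [reportPath] }],
  };
}

// Prints the config as JSON only when both (assumed) variables are set.
const { TESTBEATS_API_KEY, GOOGLE_CHAT_WEBHOOK_URL } = process.env;
if (TESTBEATS_API_KEY && GOOGLE_CHAT_WEBHOOK_URL) {
  const config = buildTestbeatsConfig({
    apiKey: TESTBEATS_API_KEY,
    webhookUrl: GOOGLE_CHAT_WEBHOOK_URL,
  });
  console.log(JSON.stringify(config, null, 2));
}
```

Saved under a name of your choice, the script's output can be redirected into testbeats.config.json as a step before publishing.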

Step 4: Running and Publishing Test Results

1. Run Tests in Playwright

Execute your test scenarios with:

npx cucumber-js --tags "@smoke"

After execution, a cucumber-report.json file will be generated in the reports folder.
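To sanity-check the report before publishing, you can count scenario outcomes directly from the JSON. This is a rough sketch, assuming the common Cucumber JSON layout (an array of features, each with an `elements` array of scenarios whose `steps` carry a `result.status`). The function name `summarizeCucumberReport` and the rule that a scenario passes only when every step passed are choices made for this example.

```javascript
// Counts scenarios by outcome in a parsed Cucumber JSON report.
function summarizeCucumberReport(features) {
  const summary = { passed: 0, failed: 0, other: 0 };
  for (const feature of features) {
    for (const scenario of feature.elements ?? []) {
      // Some Cucumber implementations emit background entries; skip them.
      if (scenario.type === "background") continue;
      const statuses = (scenario.steps ?? []).map(
        (step) => step.result?.status
      );
      if (statuses.includes("failed")) {
        summary.failed += 1;
      } else if (statuses.length > 0 && statuses.every((s) => s === "passed")) {
        summary.passed += 1;
      } else {
        // Skipped, pending, undefined, or empty scenarios.
        summary.other += 1;
      }
    }
  }
  return summary;
}
```

Feeding it the parsed contents of reports/cucumber-report.json gives a quick count to compare against what later appears in Google Chat and the TestBeats portal.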

2. Publish Test Results to TestBeats

Send results to TestBeats and Google Chat using:

npx testbeats@latest publish -c testbeats.config.json

Now, your Google Chat space will receive real-time notifications about test execution!

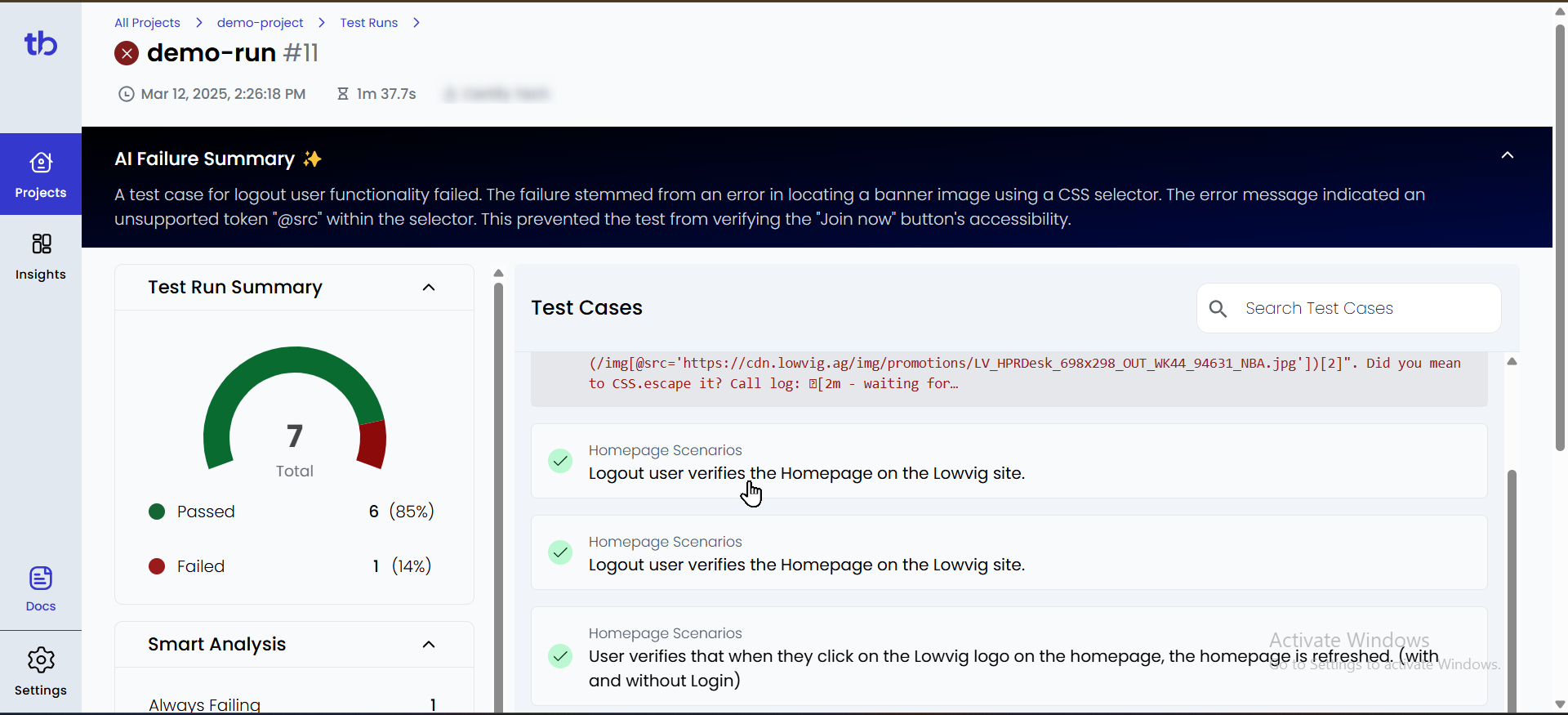

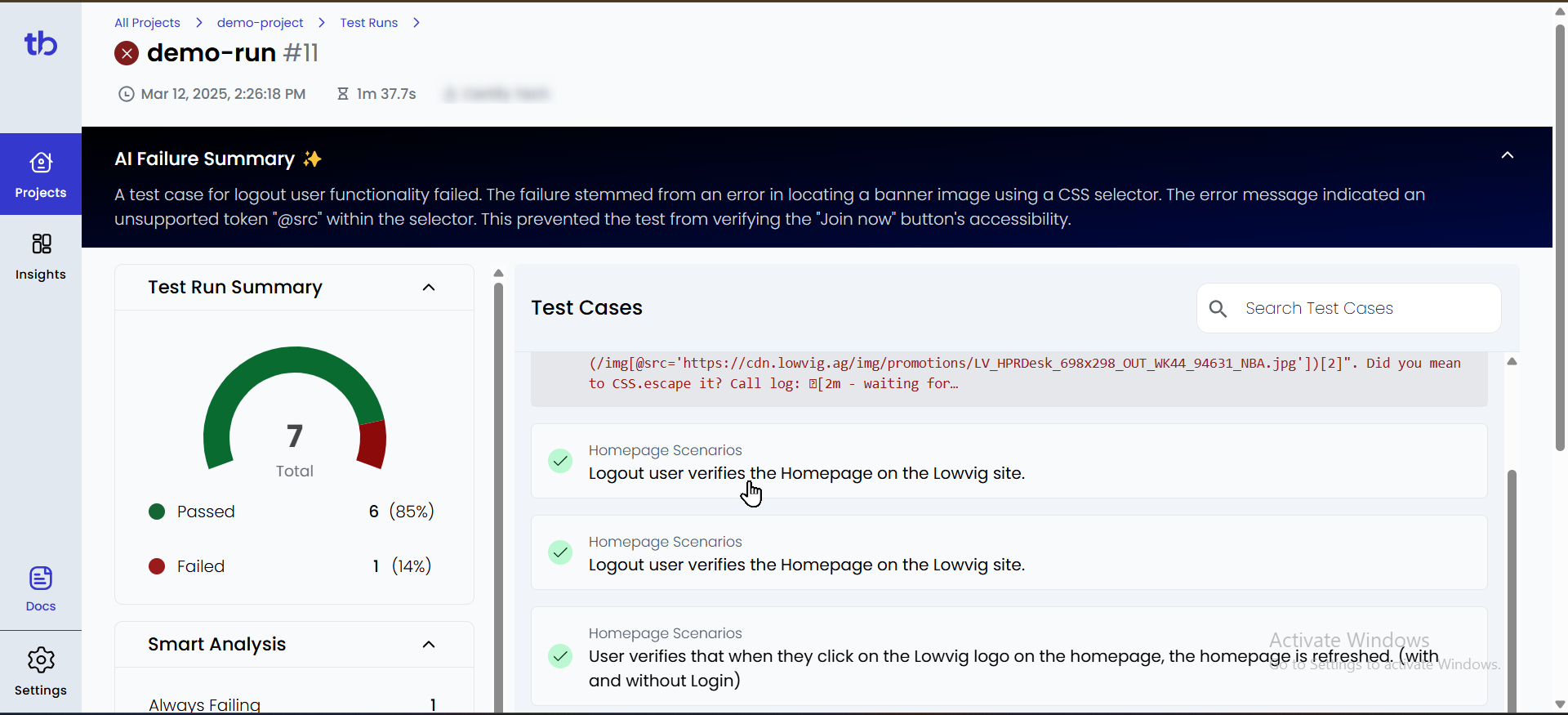

Step 5: Verify Test Reports in TestBeats

- Log in to TestBeats.

- Click on the “Projects” tab on the left.

- Select your project to view test execution details.

- Passed and failed tests will be displayed in the report.

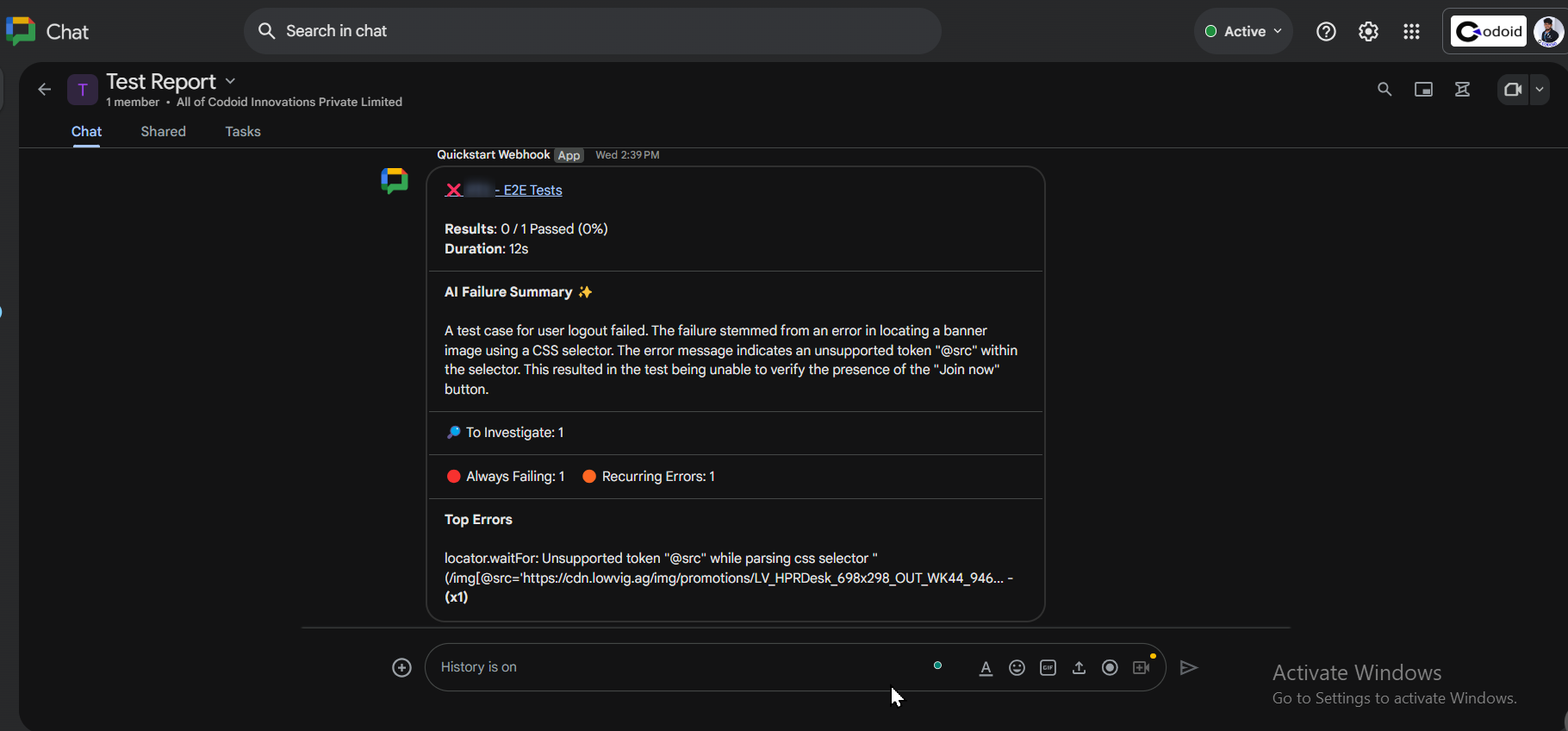

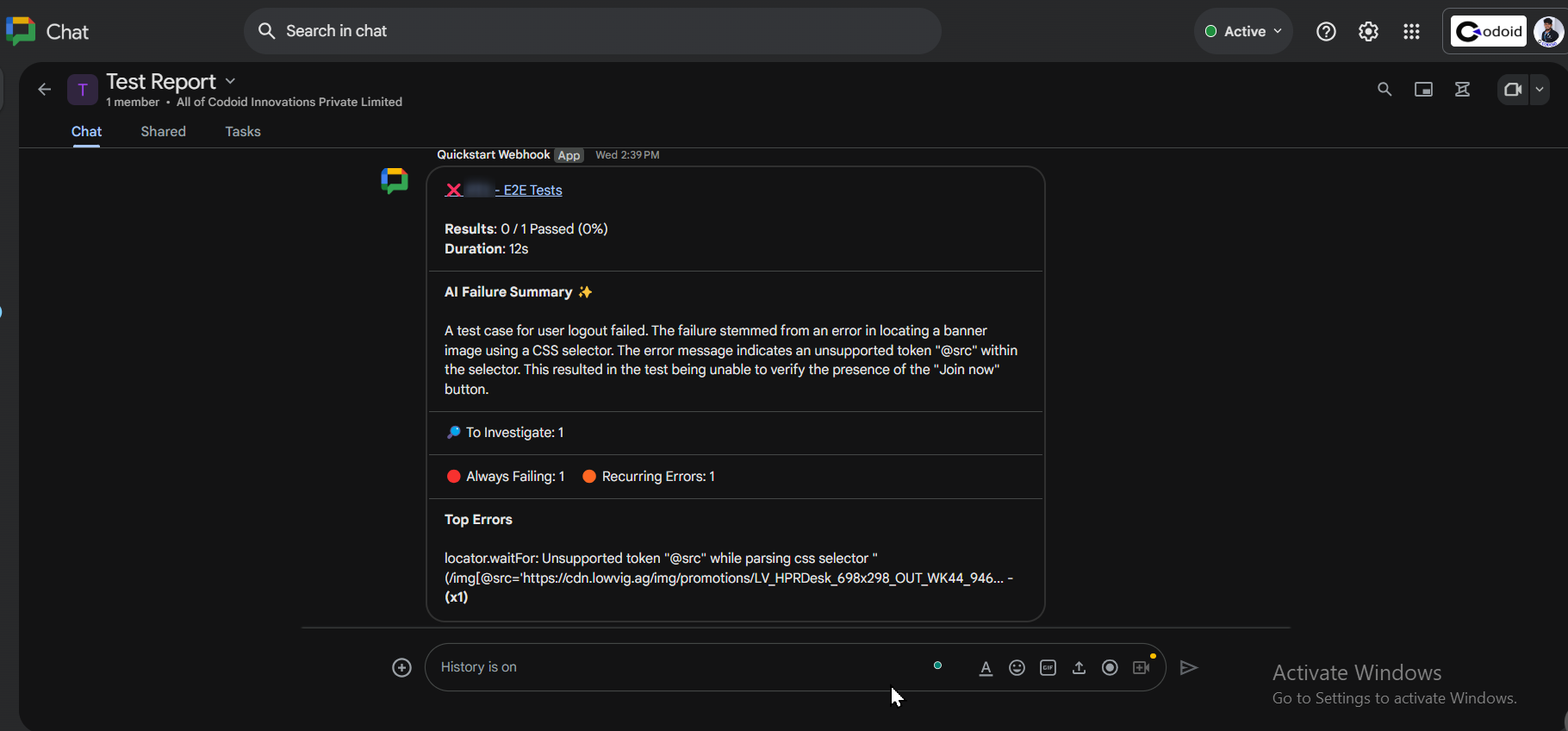

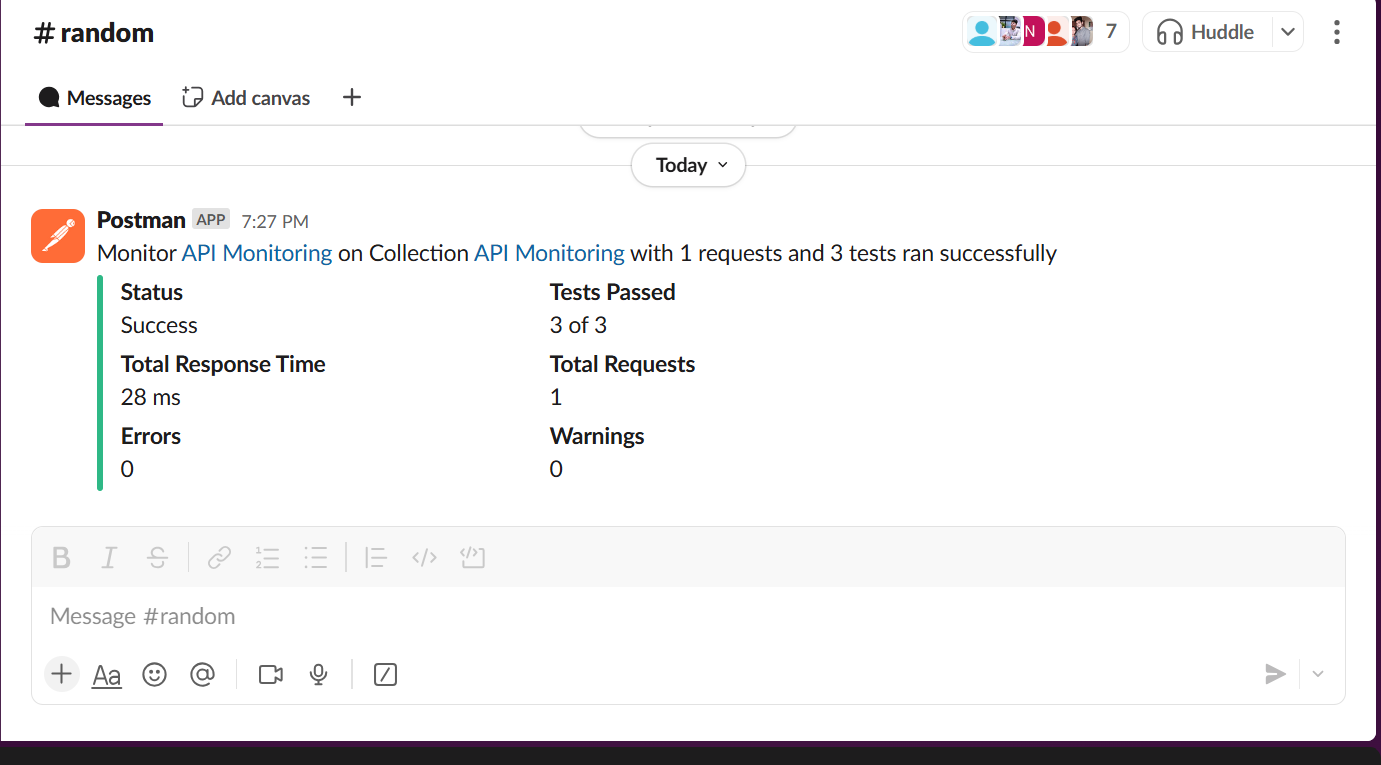

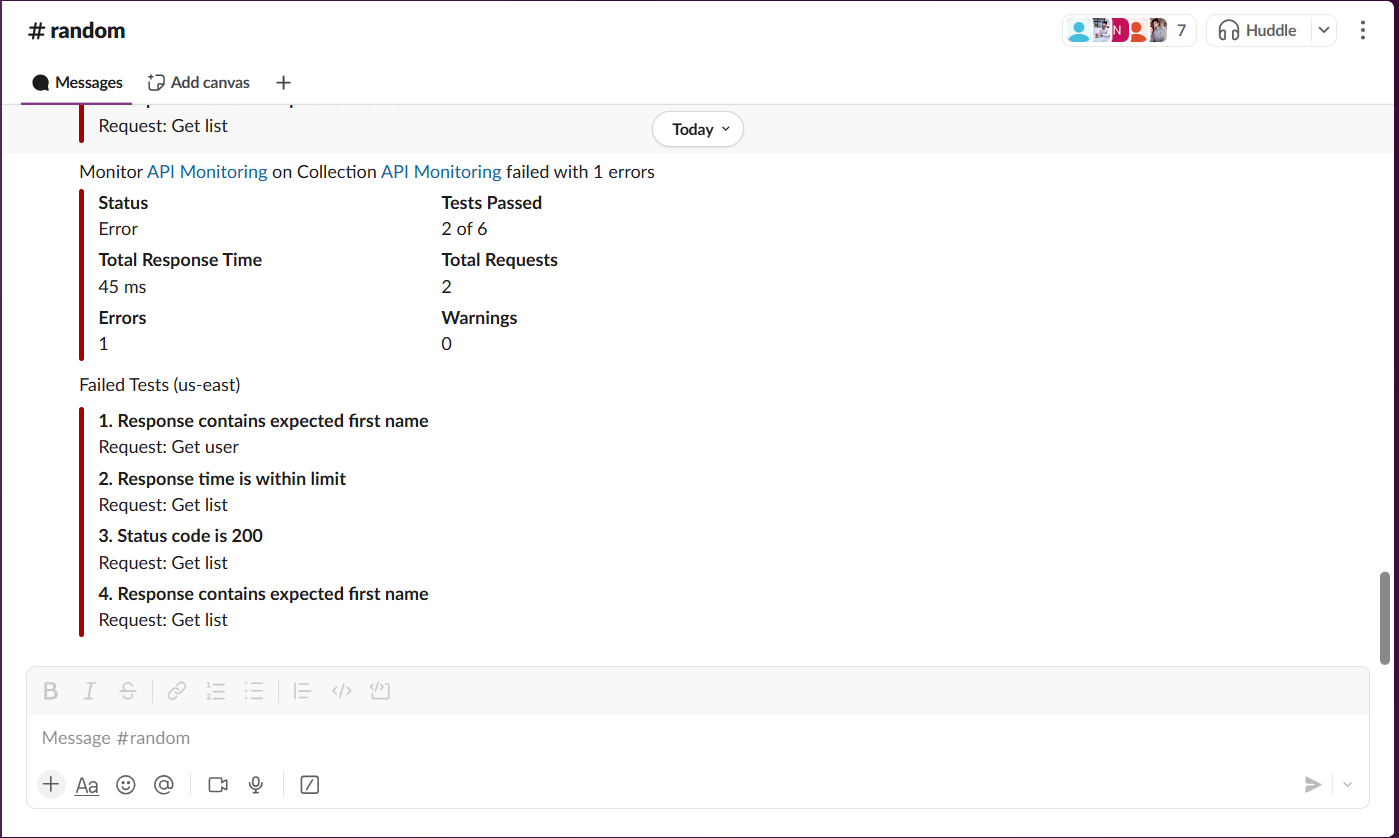

Example Notification in Google Chat

After execution, a message like this will appear in your Google Chat space:

Conclusion:

Integrating Playwright with Testbeats makes test automation more efficient by providing real-time alerts, structured test tracking, and detailed analytics. This setup improves collaboration, simplifies debugging, and helps teams quickly identify issues. Automated notifications via Google Chat or other tools keep stakeholders updated on test results, making it ideal for agile and CI/CD workflows. Codoid, a leading software testing company, specializes in automation, performance, and AI-driven testing. With expertise in Playwright, Selenium, and Cypress, Codoid offers end-to-end testing solutions, including API, mobile, and cloud-based testing, ensuring high-quality digital experiences.

Frequently Asked Questions

- What is Testbeats?

Testbeats is a test reporting and analytics platform that helps teams track and analyze test execution results, providing real-time insights and automated notifications.

- What types of reports does Testbeats generate?

Testbeats provides detailed test execution reports, including pass/fail rates, execution trends, failure analysis, and historical data for better decision-making.

- How does Testbeats improve collaboration?

By integrating with communication tools like Google Chat, Slack, and Microsoft Teams, Testbeats ensures real-time test result updates, helping teams stay informed and react faster to issues.

- Does Testbeats support frameworks other than Playwright?

Yes, Testbeats supports multiple testing frameworks, including Selenium, Cypress, and CucumberJS, making it a versatile reporting solution.

- Does Testbeats support CI/CD pipelines?

Yes, Testbeats can be integrated into CI/CD workflows to automate test reporting and enable real-time monitoring of test executions.

by Hannah Rivera | Mar 17, 2025 | API Testing, Blog, Latest Post |

The demand for robust and efficient API testing tools has never been higher. Teams are constantly seeking solutions that not only streamline their testing workflows but also integrate seamlessly with their existing development pipelines. Enter Bruno, a modern, open-source API client purpose-built for API testing and automation. Bruno distinguishes itself from traditional tools like Postman by offering a lightweight, local-first approach that prioritizes speed, security, and developer-friendly workflows. Designed to suit both individual testers and collaborative teams, Bruno brings simplicity and power to API automation by combining an intuitive interface with advanced features such as version control integration, JavaScript-based scripting, and command-line execution capabilities.

Unlike cloud-dependent platforms, Bruno emphasizes local-first architecture, meaning API collections and configurations are stored directly on your machine. This approach ensures data security and faster performance, enabling developers to easily sync test cases via Git or other version control systems. Additionally, Bruno offers flexible environment management and dynamic scripting to allow teams to build complex automated API workflows with minimal overhead. Bruno stands out as a compelling solution for organizations striving to modernize their API testing process and integrate automation into CI/CD pipelines. This guide explores Bruno’s setup, test case creation, scripting capabilities, environment configuration, and how it can enhance your API automation strategy.

Before you dive deep into API automation testing, we recommend checking out our detailed blog, which will give you valuable insights into how Bruno can optimize your API testing workflow.

Setting Up Bruno for API Automation

In the following sections, we’ll walk you through everything you need to get Bruno up and running for your API automation needs — from installation to creating your first automated test cases, configuring environment variables, and executing tests via CLI.

Whether you’re automating GET or POST requests, adding custom JavaScript assertions, or managing multiple environments, this guide will show you exactly how to harness Bruno’s capabilities to build a streamlined and efficient API testing workflow.

Install Bruno

- Download Bruno from its official site (bruno.io) or GitHub repository, depending on your OS.

- Follow the installation prompts to set up the tool on your computer.

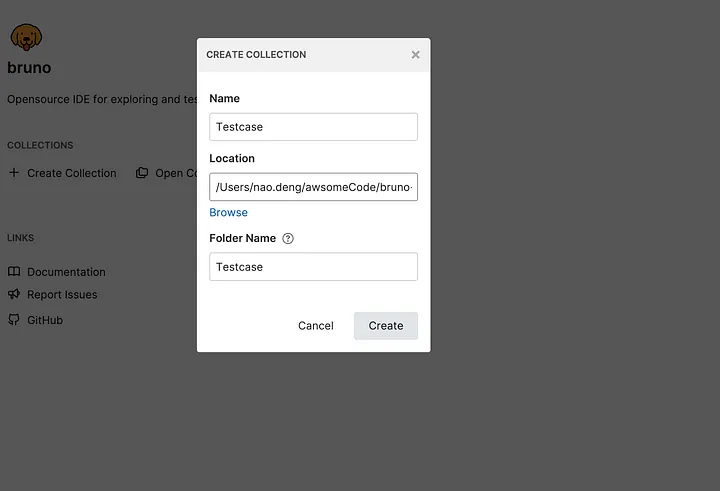

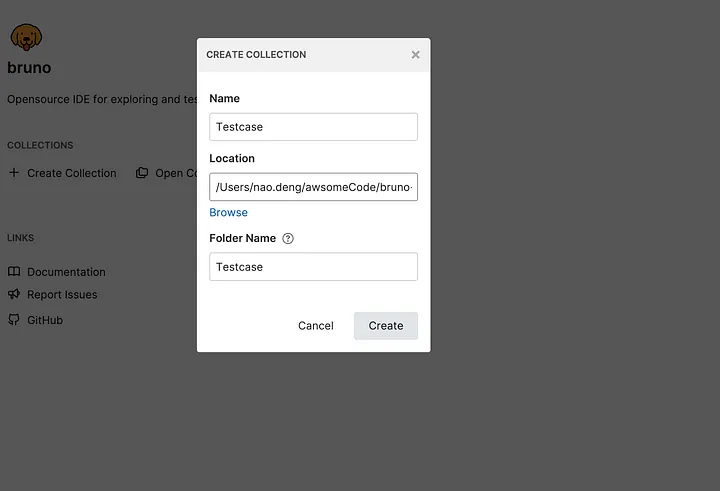

Creating a Test Case Directory

Begin by launching the Bruno application and setting up a directory for storing test cases.

- Run the Bruno application.

- Create a COLLECTION named Testcase.

- Select the project folder you created as the directory for this COLLECTION.

Writing and Executing Test Cases in Bruno

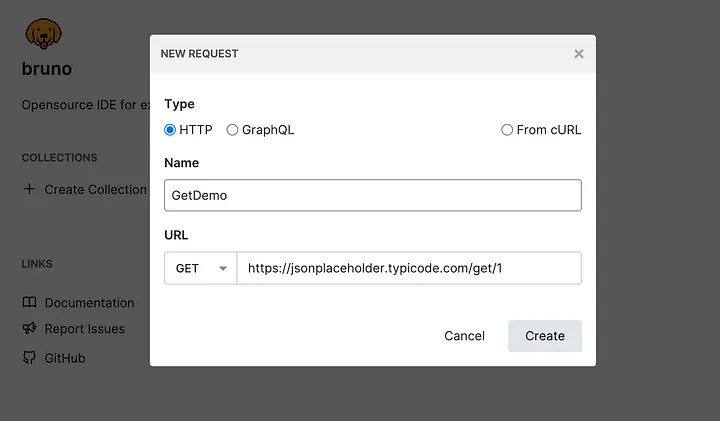

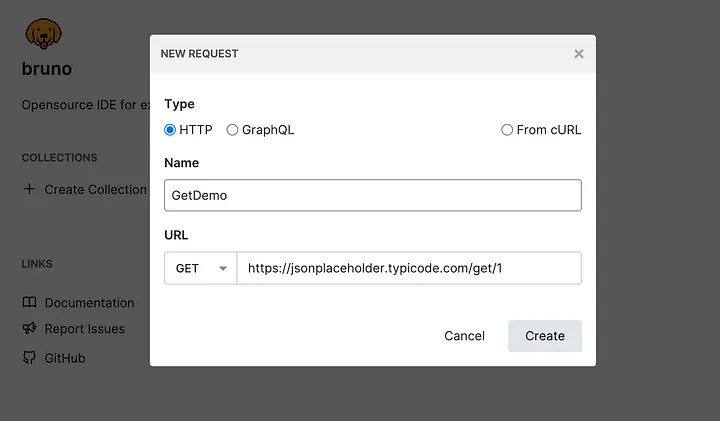

1. Creating a GET Request Test Case

- Click the ADD REQUEST button under the Testcase COLLECTION.

- Set the request type to GET.

- Name the request GetDemo and set the URL to:

https://jsonplaceholder.typicode.com/posts/1

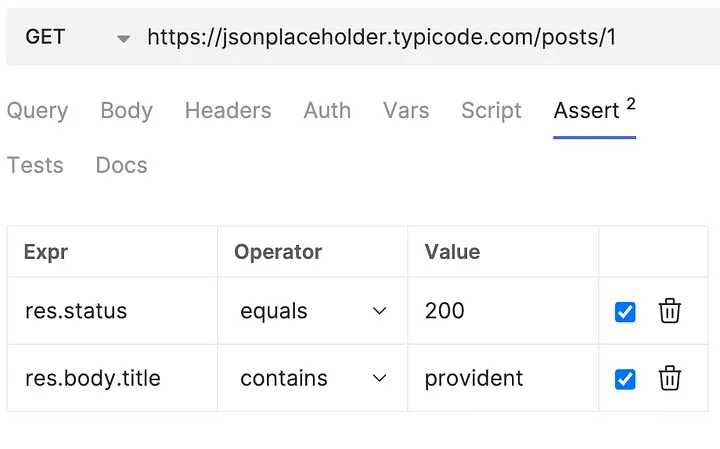

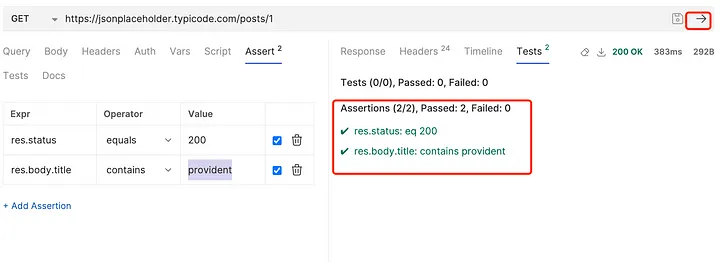

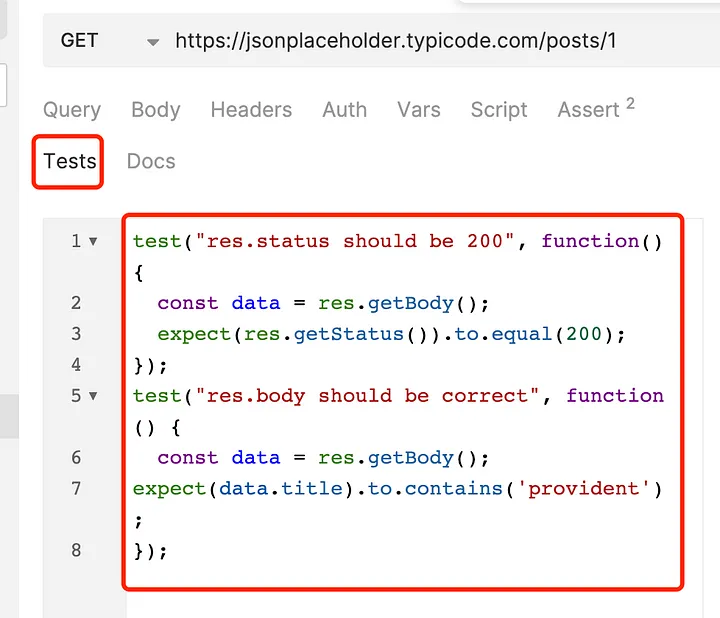

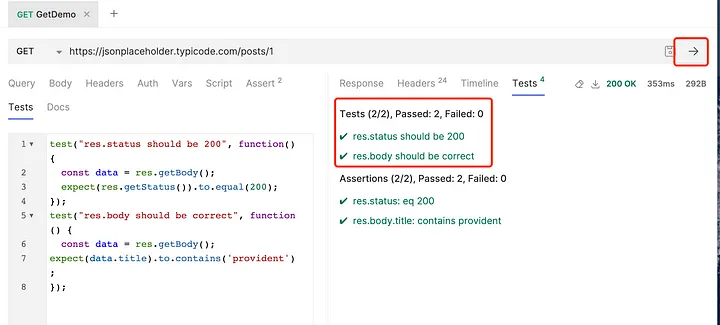

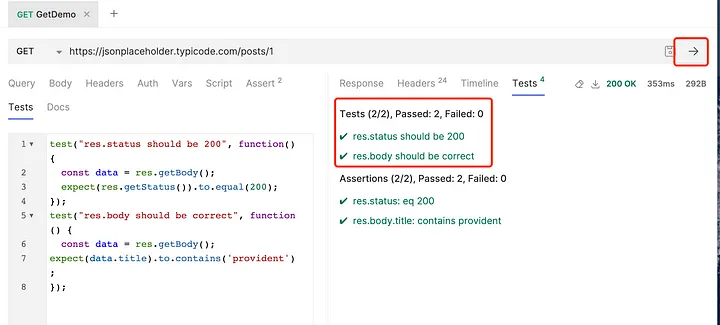

2. Adding Test Assertions Using Built-in Assert

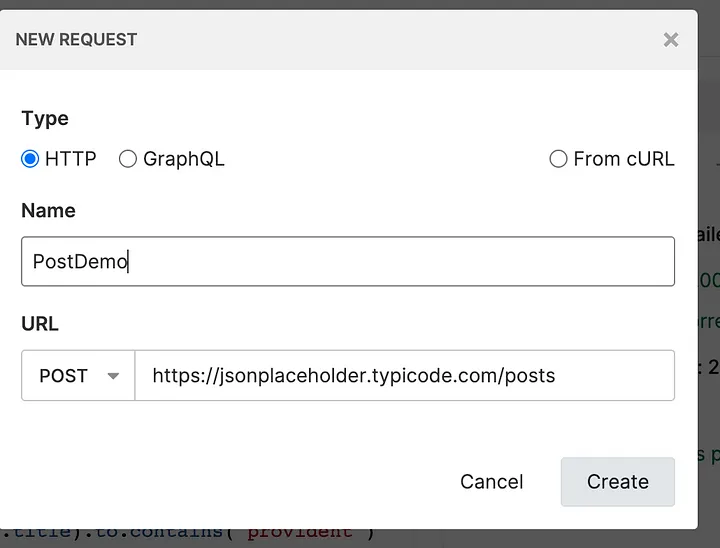

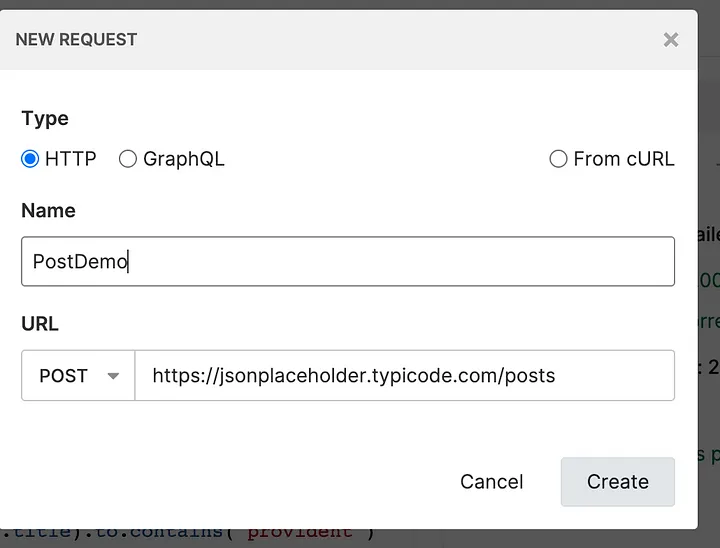

4. Creating a POST Request Test Case

- Click ADD REQUEST under the Testcase COLLECTION.

- Set the request type to POST.

- Name the request PostDemo and set the URL to:

https://jsonplaceholder.typicode.com/posts

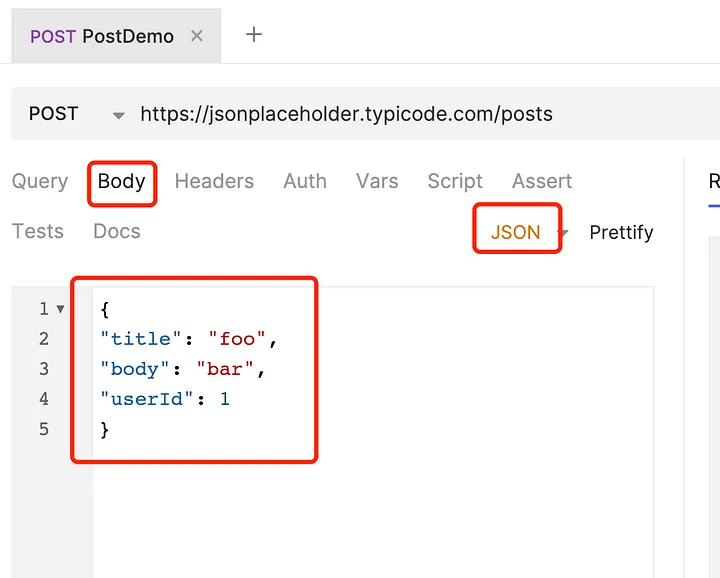

- Click Body and enter the following JSON data:

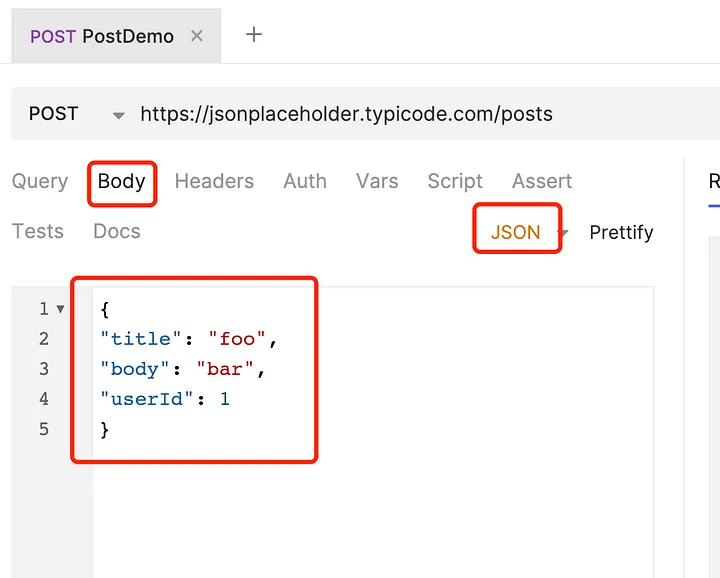

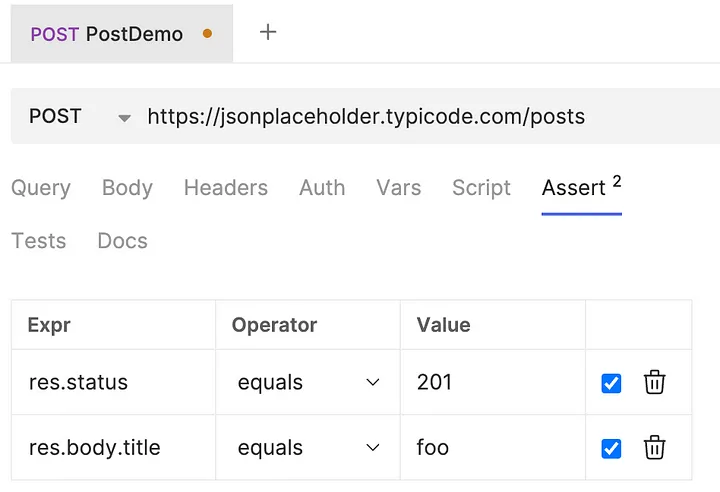

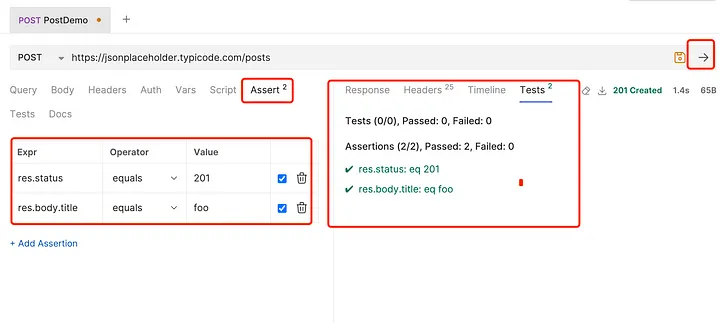

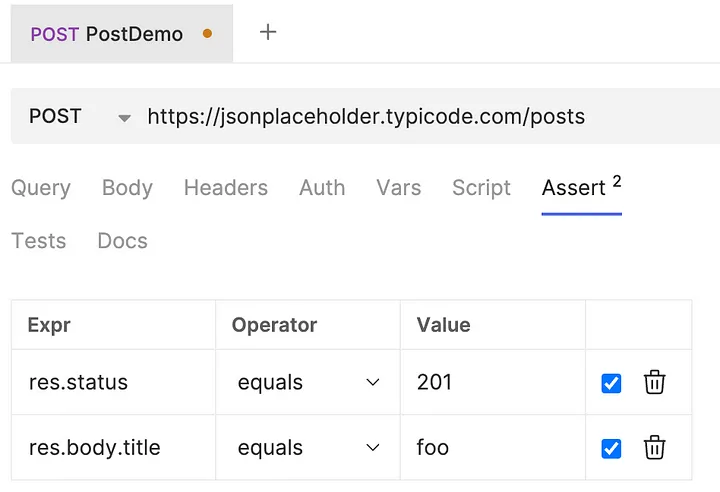

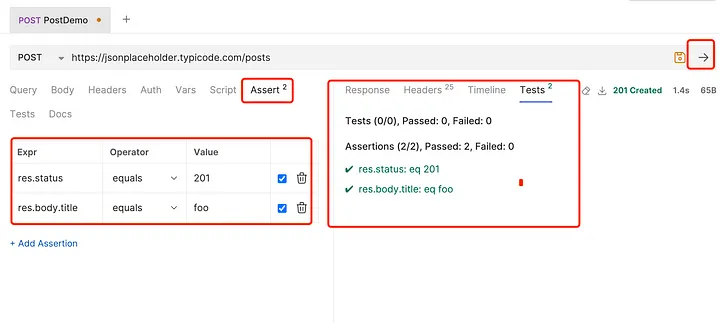
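For readers following along without the screenshots, here is a rough JavaScript equivalent of the PostDemo request. It is a sketch under stated assumptions: only the title value "foo" is implied by the assertions in the next step, while the `body` and `userId` values are illustrative placeholders. It also assumes Node.js 18 or later for the built-in `fetch`, and the function names are hypothetical.

```javascript
// Request body for PostDemo. Only title "foo" is implied by the assertions
// in this guide; body and userId are illustrative placeholder values.
const postDemoBody = { title: "foo", body: "bar", userId: 1 };

// Builds the request so the URL and payload can be inspected separately.
function buildPostDemoRequest(host = "https://jsonplaceholder.typicode.com") {
  return {
    url: `${host}/posts`,
    options: {
      method: "POST",
      headers: { "Content-Type": "application/json; charset=UTF-8" },
      body: JSON.stringify(postDemoBody),
    },
  };
}

// Sends the request with Node's global fetch (Node.js 18+) and returns
// the status code and parsed body, the two things the assertions inspect.
async function runPostDemo() {
  const { url, options } = buildPostDemoRequest();
  const response = await fetch(url, options);
  return { status: response.status, data: await response.json() };
}
```

Splitting the request builder from the network call keeps the payload easy to compare with what you typed into Bruno's Body tab.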

5. Adding Test Assertions Using Built-in Assert

- Click the Assert button under PostDemo.

- Add the following assertions:

- Response status code equals 201.

- The title in the response body equals “foo.”

- Click Run to execute the assertions.

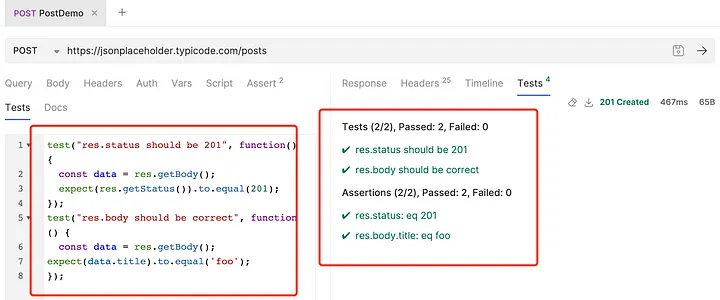

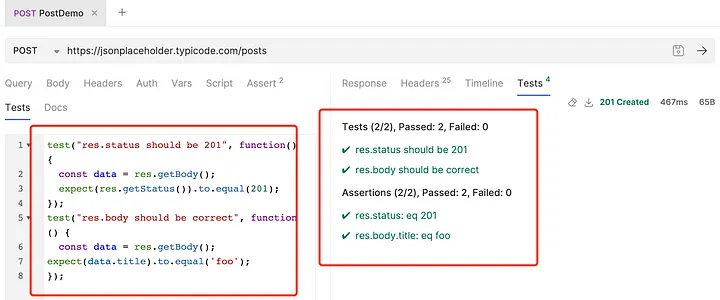

6. Writing Test Assertions Using JavaScript

- Click Tests under PostDemo.

- Add the following script:

test("res.status should be 201", function() {
  expect(res.getStatus()).to.equal(201);
});

test("res.body should be correct", function() {
  const data = res.getBody();
  expect(data.title).to.equal('foo');
});

- Click Run to validate assertions.

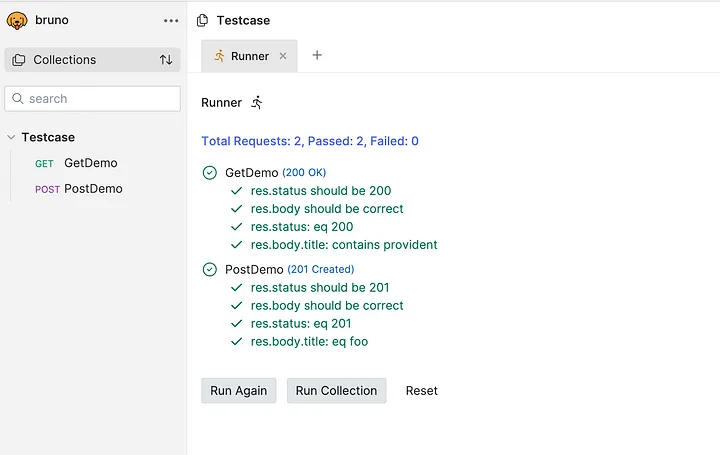

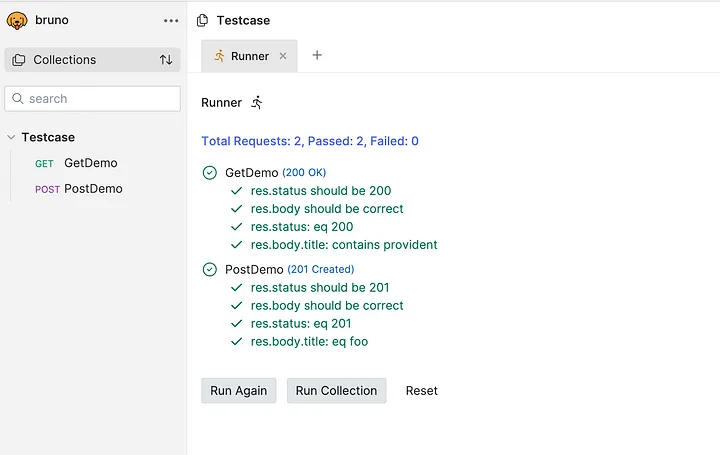

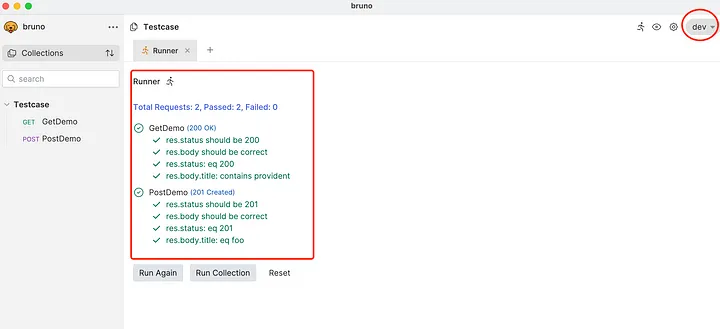

Executing Two Test Cases Locally

- Click the Run button under the Testcase COLLECTION to execute all test cases.

- Check and validate whether the results match the expected outcomes.

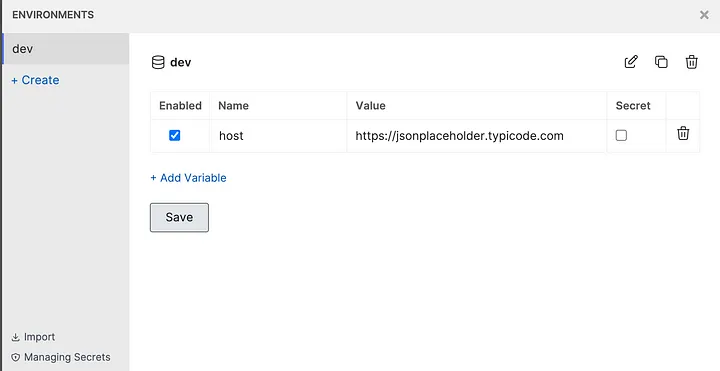

Configuring Environment Variables in Bruno for API Testing

When running API test cases in different environments, modifying request addresses manually for each test case can be tedious, especially when dealing with multiple test cases. Bruno, an API testing tool, simplifies this by providing environment variables, allowing us to configure request addresses dynamically. This way, instead of modifying each test case, we can simply switch environments.

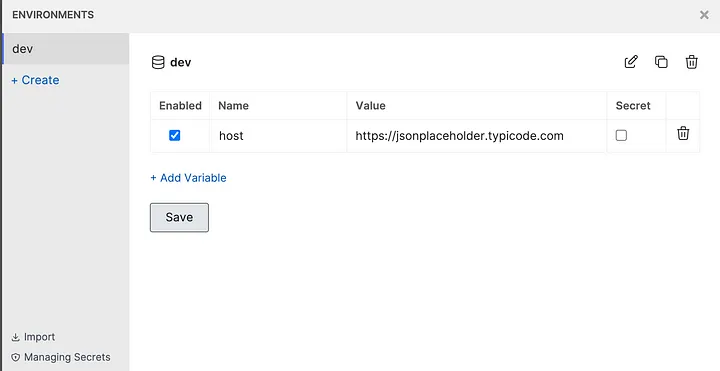

Creating Environment Variable Configuration Files in Bruno

Follow these steps to set up environment variables in Bruno:

1. Open the Environment Configuration Page:

- Click the Environments button under the Testcase COLLECTION to access the environment settings.

2. Create a New Environment:

- Click ADD ENVIRONMENT in the top-right corner.

- Enter a name for the environment (e.g., dev) and click SAVE to create the configuration file.

3. Add an Environment Variable:

- Select the newly created environment (dev) to open its configuration page.

- Click ADD VARIABLE in the top-right corner.

- Enter the variable name as host and set the value to https://jsonplaceholder.typicode.com.

- Click SAVE to apply the changes.

Using Environment Variables in Test Cases

Instead of hardcoding URLs in test cases, use {{host}} as a placeholder. Bruno will automatically replace it with the configured value from the selected environment, making it easy to switch between different testing environments.

By utilizing environment variables, you can streamline your API testing workflow, reducing manual effort and enhancing maintainability.


Modifying Test Cases to Use Environment Variables

1. Update the GetDemo Request:

- Click the GetDemo request under the Testcase COLLECTION to open its editing page.

- Modify the request address to {{host}}/posts/1.

- Click SAVE to apply the changes.

2. Update the PostDemo Request:

- Click the PostDemo request under the Testcase COLLECTION to open its editing page.

- Modify the request address to {{host}}/posts.

- Click SAVE to apply the changes.
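To make the substitution concrete, the snippet below shows the general idea behind `{{host}}` placeholders. It is an illustration only and is not Bruno's actual implementation. The `interpolate` function and the `dev` object are hypothetical names used for this sketch.

```javascript
// Illustrative only: replaces {{name}} placeholders with values from an
// environment object. Unknown names are left untouched so typos stay visible.
function interpolate(template, env) {
  return template.replace(/\{\{\s*([\w.-]+)\s*\}\}/g, (match, name) =>
    Object.prototype.hasOwnProperty.call(env, name) ? String(env[name]) : match
  );
}

const dev = { host: "https://jsonplaceholder.typicode.com" };
console.log(interpolate("{{host}}/posts/1", dev));
// → https://jsonplaceholder.typicode.com/posts/1
```

Switching environments then amounts to passing a different object, which is why the request definitions themselves never need to change.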

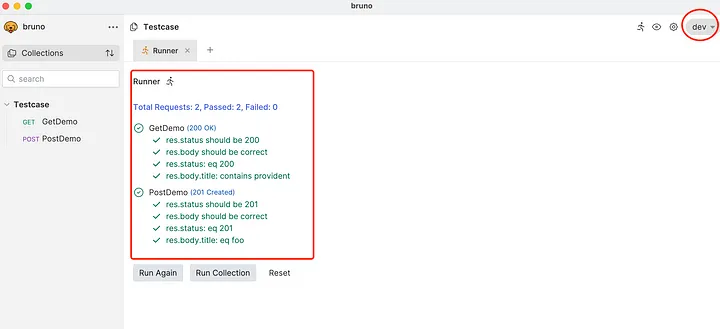

Debugging Environment Variables

- Click the Environments button under the Testcase COLLECTION and select the dev environment.

- Click the RUN button in the top-right corner to execute all test cases.

- Verify that the test results meet expectations.

Conclusion

Bruno is a lightweight and powerful tool designed for automating API testing with ease. Its local-first approach, Git-friendly structure, JavaScript-based scripting, and environment management make it ideal for building fast, secure, and reliable API tests. While Bruno streamlines automation, partnering with Codoid can help you take it further. As experts in API automation, Codoid provides end-to-end solutions to help you design, implement, and scale efficient testing frameworks integrated with your CI/CD pipelines. Reach out to Codoid today to enhance your API automation strategy and accelerate your software delivery.

Frequently Asked Questions

- How does Bruno API Automation work?

Bruno allows you to automate the testing of APIs locally by recording, running, and validating API requests and responses. It helps streamline the testing process and ensures more efficient workflows.

- Is Bruno API Automation suitable for large-scale projects?

Absolutely! Bruno's local-first approach and ability to scale with your testing needs make it suitable for both small and large-scale API testing projects.

- What makes Bruno different from other API automation tools?

Bruno stands out due to its local-first design, simplicity, and ease of use, making it an excellent choice for teams looking for a straightforward and scalable API testing solution.

- Is Bruno API Automation free to use?

Bruno offers a free version with basic features, allowing users to get started with API automation. There may also be premium features available for more advanced use cases.

- Does Bruno provide reporting features?

Yes, Bruno includes detailed reporting features that allow you to track test results, view error logs, and analyze performance metrics, helping you optimize your API testing process.

- Can Bruno be used for continuous integration (CI) and deployment (CD)?

Absolutely! Bruno can be integrated into CI/CD pipelines to automate the execution of API tests during different stages of development, ensuring continuous quality assurance.

- How secure is Bruno API Automation?

Bruno ensures secure API testing by providing options for encrypted communications and secure storage of sensitive data, giving you peace of mind while automating your API tests.

by Mollie Brown | Mar 14, 2025 | Automation Testing, Blog, Latest Post |

Ensuring the quality, reliability, and performance of applications is more critical than ever. As applications become more complex, manual testing alone is no longer sufficient to keep up with rapid release cycles. Automated testing has emerged as a game-changer, enabling teams to streamline their testing workflows, reduce manual effort, and improve test coverage while accelerating software delivery. Among the various automation tools available, TestComplete, developed by SmartBear, stands out as a feature-rich and versatile solution for automating tests across multiple platforms, including desktop, web, and mobile applications. It supports both scripted and scriptless automation, making it accessible to beginners and experienced testers alike.

Whether you are new to test automation or looking to enhance your skills, this step-by-step tutorial series will guide you through the essential functionalities of TestComplete and help you become proficient in leveraging its powerful features.

Key Features of TestComplete

- Cross-Platform Testing – Supports testing across desktop, web, and mobile applications.

- Multiple Scripting Languages – Allows test automation using Python, JavaScript, VBScript, JScript, and DelphiScript.

- Scriptless Test Automation – Provides keyword-driven and record-and-replay testing options for beginners.

- Advanced Object Recognition – Uses AI-based algorithms to identify UI elements, even when their properties change.

- Data-Driven Testing – Enables running tests with different data sets to improve test coverage.

- Seamless CI/CD Integration – Works with tools like Jenkins, Azure DevOps, and Git for continuous testing.

- Parallel and Distributed Testing – Runs tests simultaneously across multiple environments to save time.

Why Use TestComplete?

- User-Friendly Interface – Suitable for both beginners and experienced testers.

- Supports Multiple Technologies – Works with apps built on .NET, Java, Delphi, WPF, Angular, React, etc.

- Reduces Manual Effort – Automates repetitive tests, allowing teams to focus on critical testing areas.

- Improves Software Quality – Ensures applications are stable, reliable, and bug-free before release.

Getting Started with TestComplete

Starting your TestComplete journey is easy. You can get a free trial for 30 days. This lets you see what it can do before deciding. To get started, just visit the official SmartBear website for download and installation steps. Make sure to check the system requirements first to see if it works with your computer.

After installing, TestComplete will help you create your first testing project. Its simple design makes it easy to set up your testing space. This is true even for people who are new to software testing tools.

System Requirements and Installation Guide

Before you start installing TestComplete, it is important to check the system requirements. This helps ensure it will run smoothly and prevents any unexpected compatibility problems. You can find the detailed system requirements on the SmartBear website, but here is a quick summary:

- Operating System: Use Windows 10 or Windows Server 2016 or newer. Make sure the system architecture (32-bit or 64-bit) matches the version of TestComplete you want to install.

- Hardware: A dual-core processor with a clock speed of 2 GHz or more is best for good performance. You should have at least 2 GB of RAM, but 4 GB or more is better, especially for larger projects.

- Disk Space: You need at least 1 GB of free disk space to install TestComplete. It’s smart to have more space for project files and test materials.

Once you meet these system needs, the installation itself is usually easy. SmartBear offers guides on their website. Generally, all you need to do is download the installer that fits your system, run it as an administrator, agree to the license, choose where to install, and follow the instructions on the screen.

Setting Up Your First Test Environment

Follow these simple steps to set up your test environment and run your first test in TestComplete.

Install TestComplete

- Download and install TestComplete from the SmartBear website.

- Activate your license or start a free trial.

Prepare Your Testing Environment

- Make sure your application (web, desktop, or mobile) is ready for testing.

- Set up any test data if needed.

- If testing a web or mobile app, configure the required browser or emulator.

Check Plugin Availability

- After installation, open TestComplete.

- Go to File → Install Extensions and ensure that necessary plugins are enabled.

- For web automation, enable Web Testing Plugin.

- For mobile automation, enable Mobile Testing Plugin.

Plugins are essential for ensuring TestComplete can interact with the type of application you want to test.

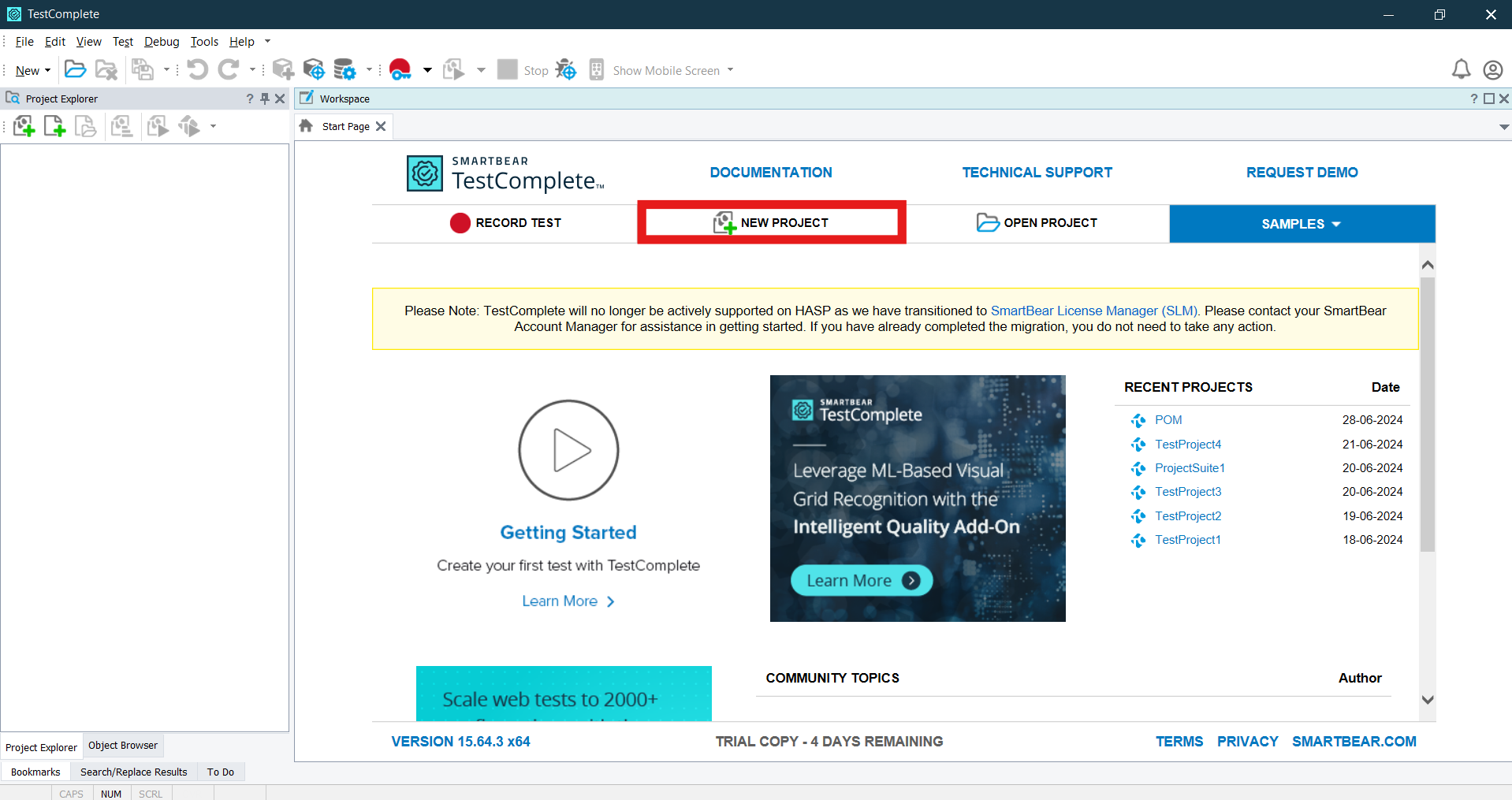

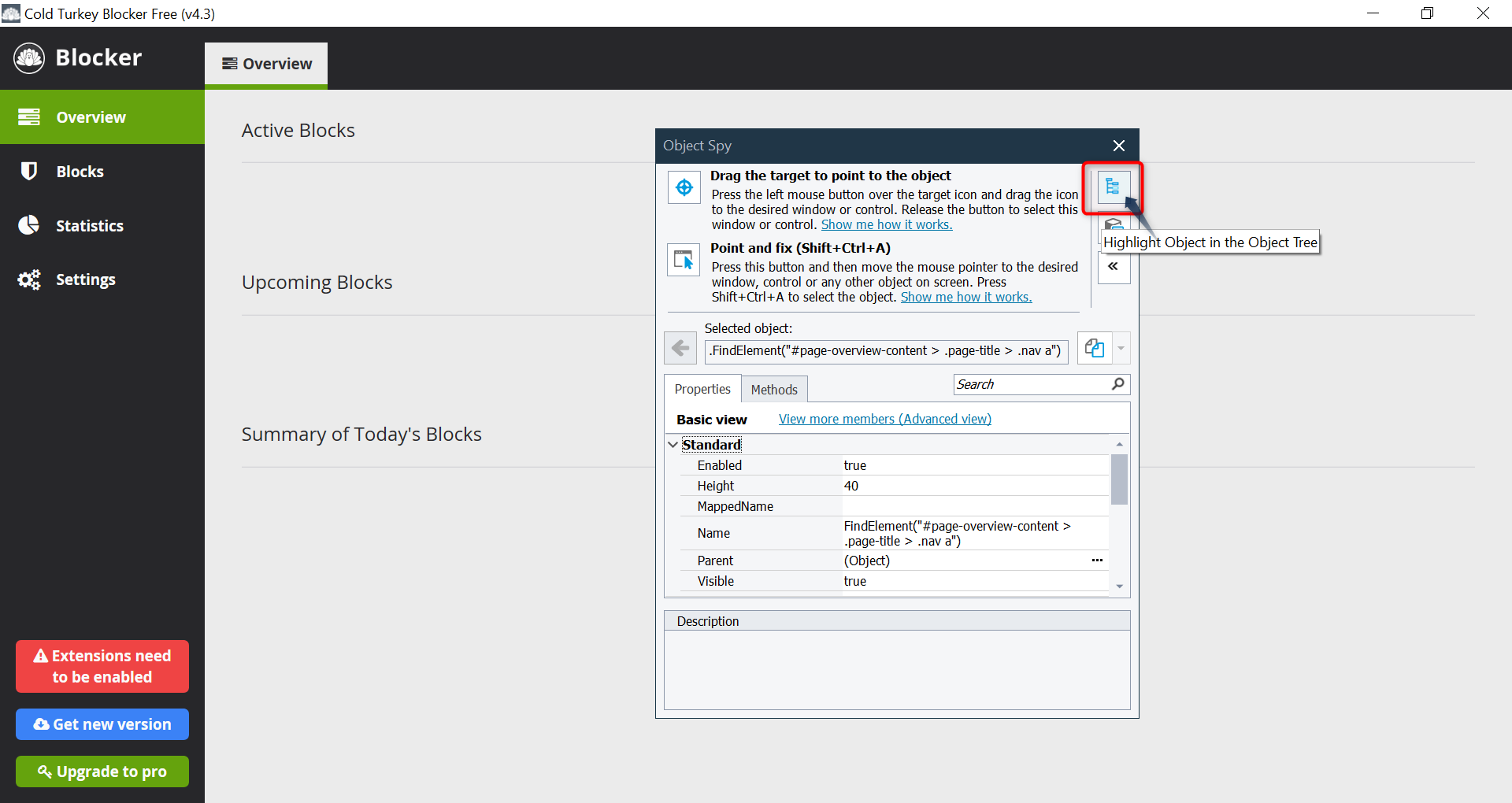

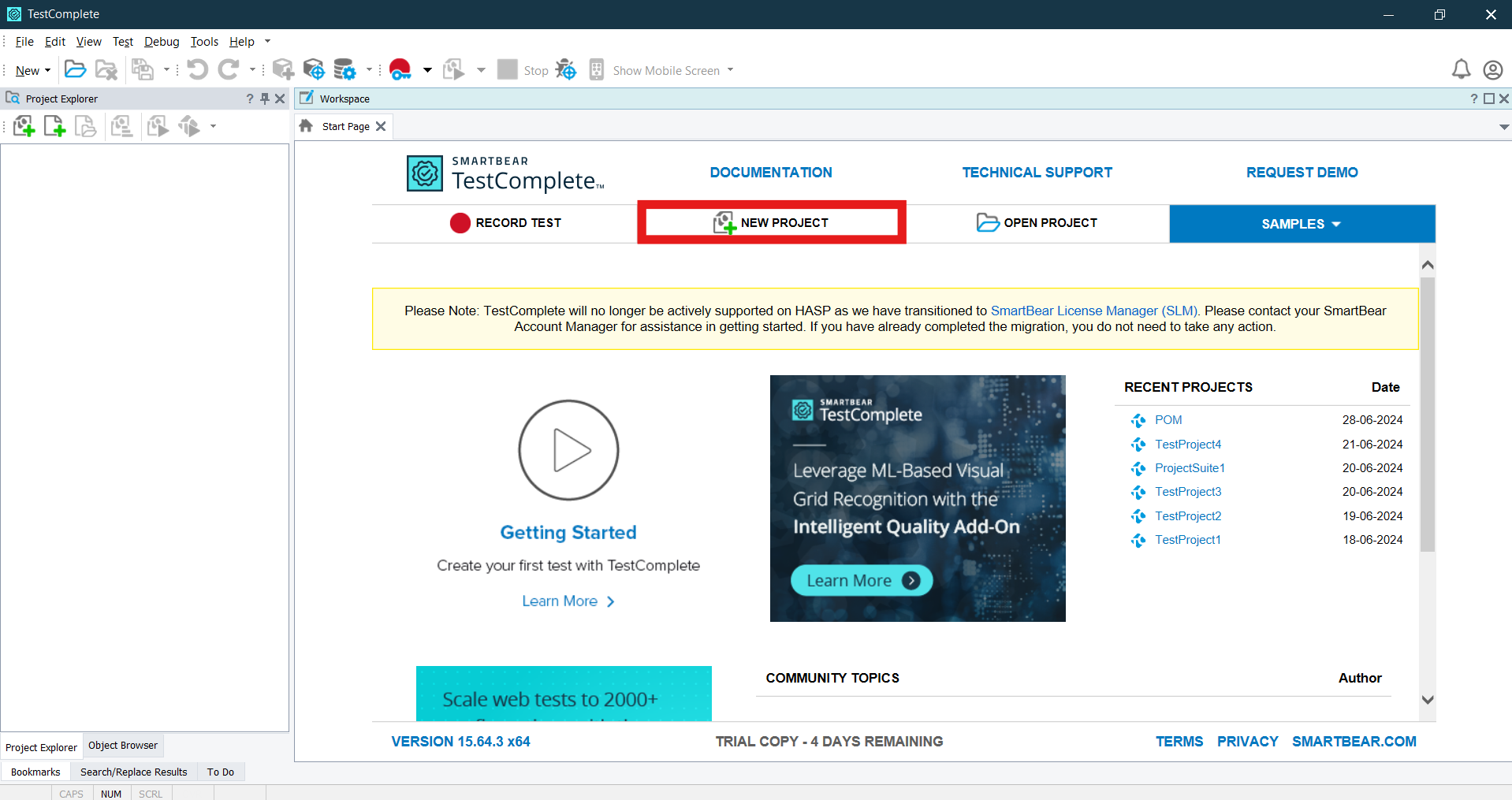

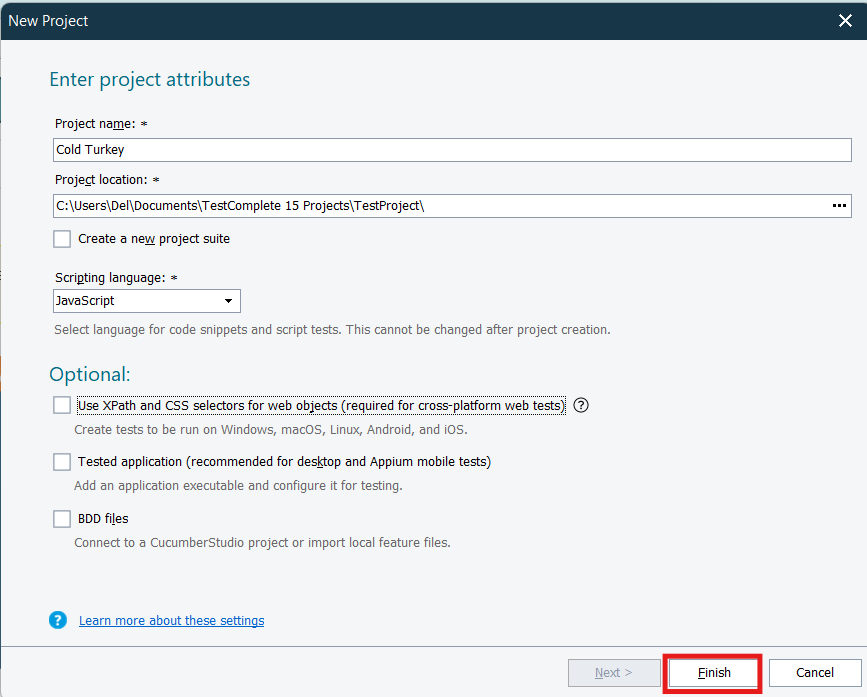

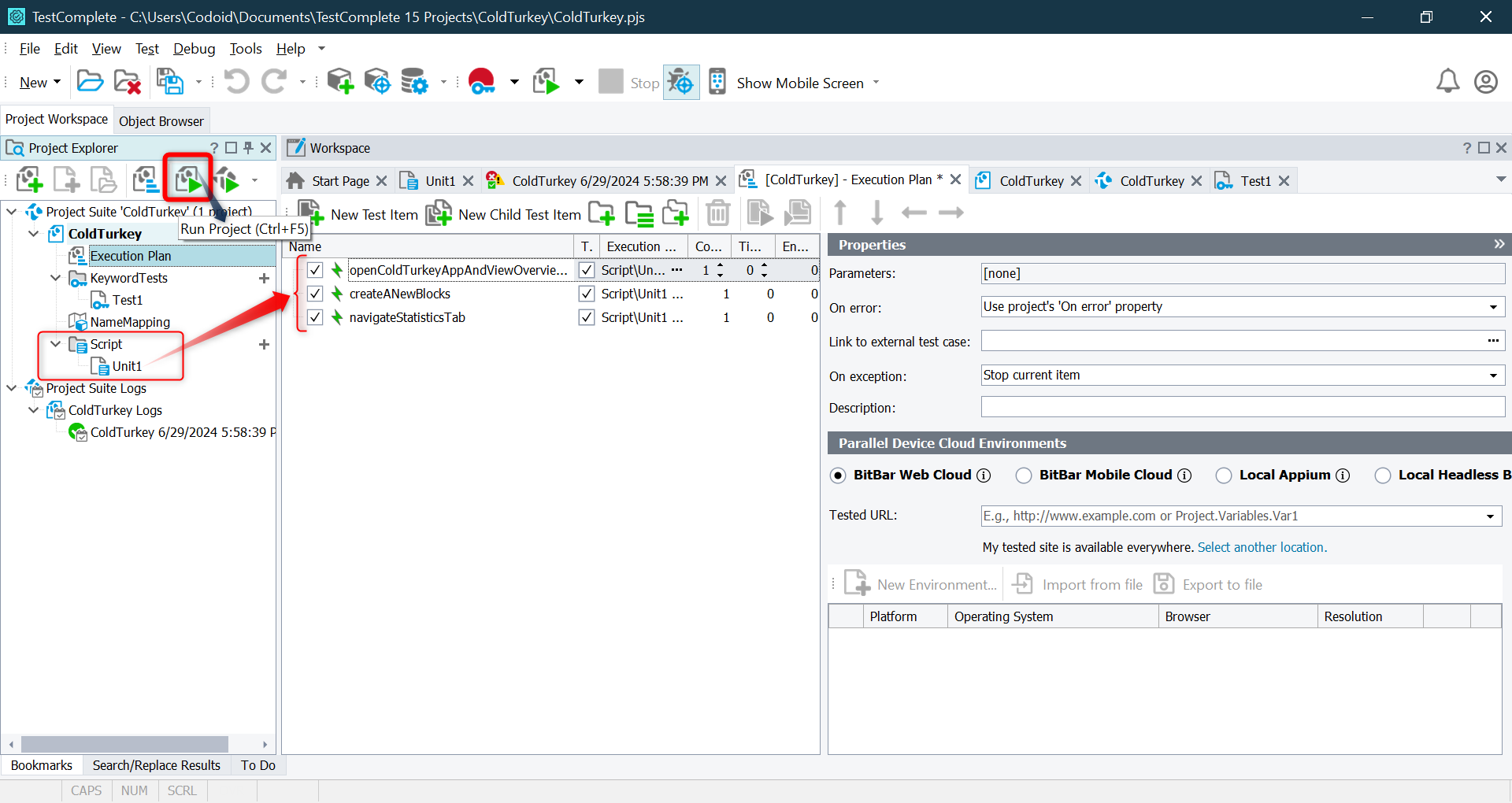

Create a New Project

- Open TestComplete and click “New Project”.

- On the Enter project attributes page of the wizard, specify the name, location, and scripting language of the project, as well as some additional settings:

- Project Name – Specifies the name of the project. TestComplete automatically adds the .mds extension to this name when creating the project file.

- Project Location – Specifies the folder where the project file will be created.

- Scripting Language – Select the scripting language for your project. The language cannot be changed after the project is created, so choose carefully. Options include JavaScript, Python, and VBScript.

- Use XPath and CSS selectors for web objects – This option must be enabled to create cross-platform web tests, that is, tests that can run in remote environments on web browsers that TestComplete does not support directly (such as Safari) and on other operating systems and platforms, such as Windows, Linux, Unix, macOS, Android, and iOS.

- Tested Application – Select this check box to add your desktop or mobile application to the tested application list of your new project. You can also add a tested application at any time later.

- BDD Files – Select this check box to import your BDD feature files into your project to automate them. You can also import files at any time after you create the project.

- Select the Application Type based on what you are testing:

- Desktop Application → For Windows-based applications.

- Web Application → For testing websites and web applications (supports Chrome, Edge, Firefox, etc.).

- Mobile Application → For testing Android and iOS apps (requires a connected device/emulator).

- Enter a Project Name and select a save location.

- Click “Create” to set up the project.

TestComplete will now generate project files, including test logs, name mappings, and test scripts.

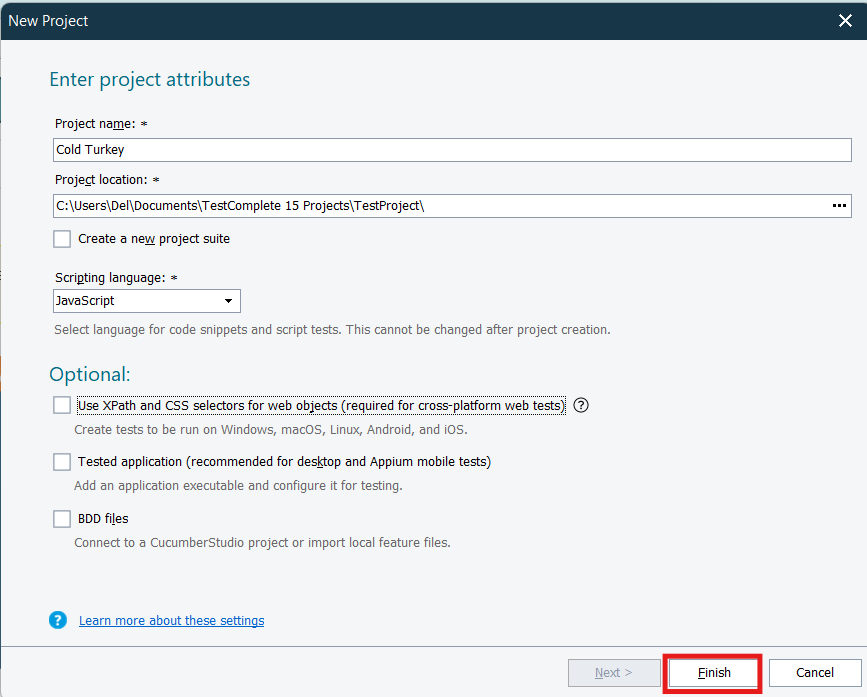

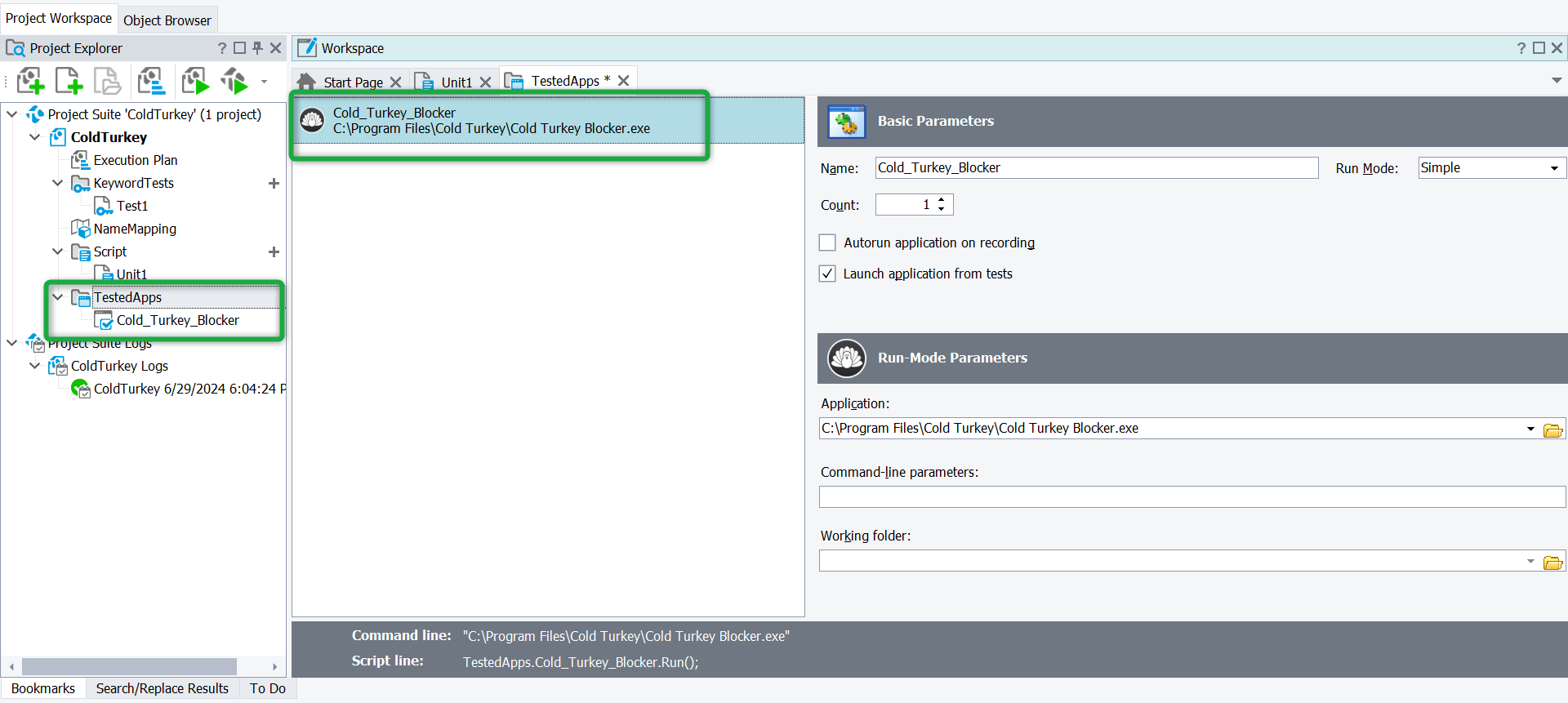

Adding the Application Under Test (AUT)

To automate tests, TestComplete needs to recognize the Application Under Test (AUT).

For Desktop Applications:

- Go to Project Explorer → Tested Applications.

- Click “Add”, then select “Add Application”.

- Browse and select the .exe file of your desktop application.

- Click OK to add it.

For Web Applications:

- Navigate to Tested Applications → Click “Add”.

- Enter the URL of the web application.

- Select the browser where the test will run (Chrome, Edge, Firefox, etc.).

- Click OK to save.

For Mobile Applications:

- Connect an Android/iOS device to your computer.

- In TestComplete, navigate to Mobile Devices → Connect Device.

- Select the application package or install the app on your device.

Now, TestComplete knows which application to launch and test.

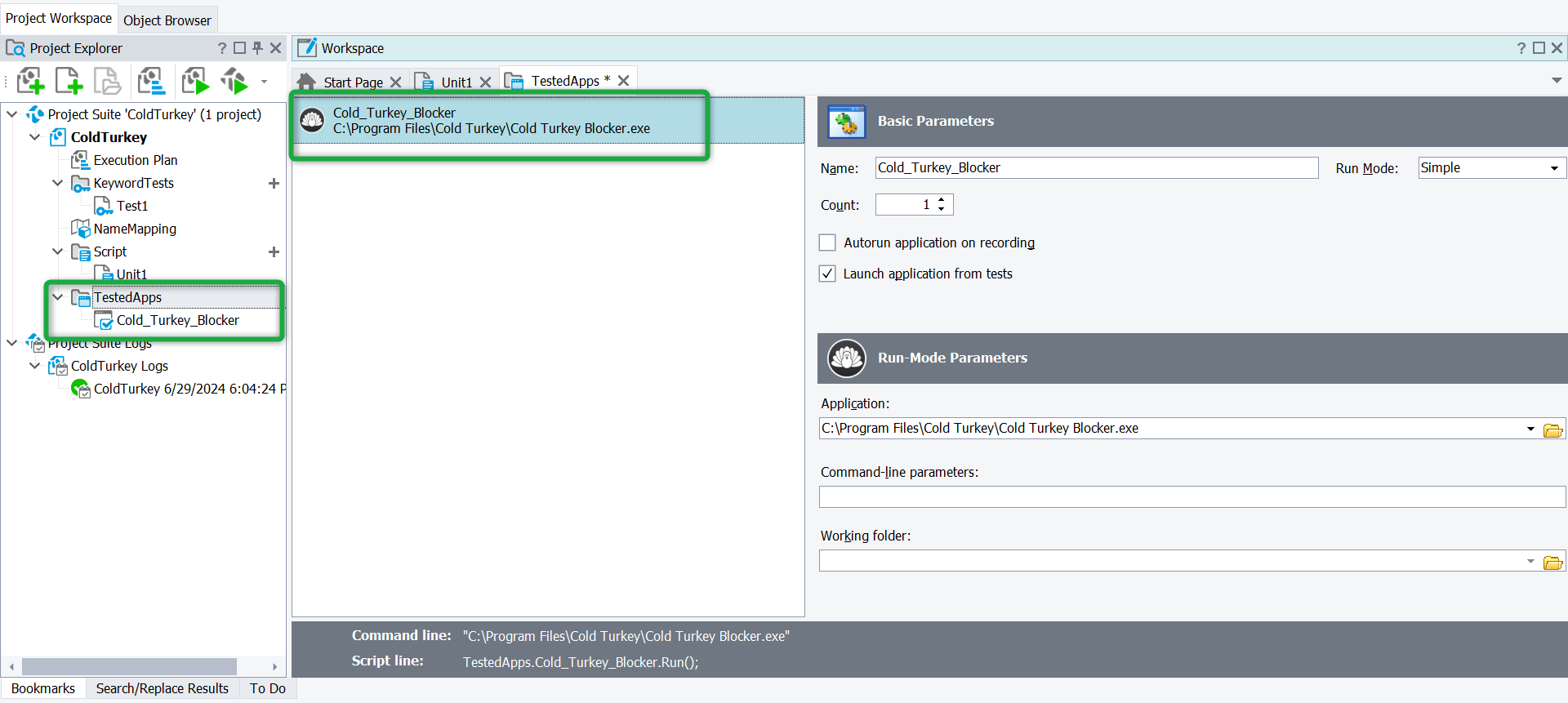

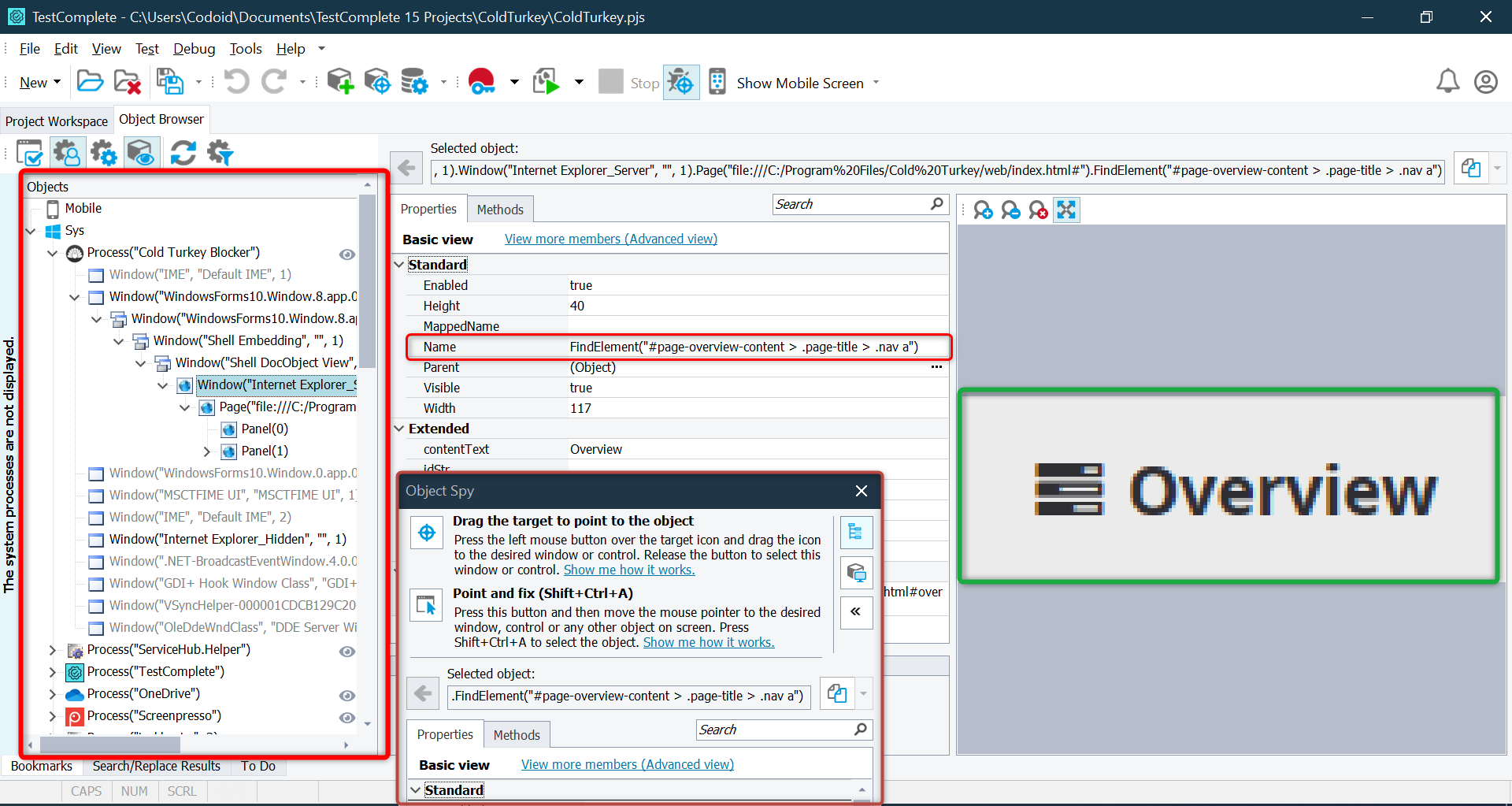

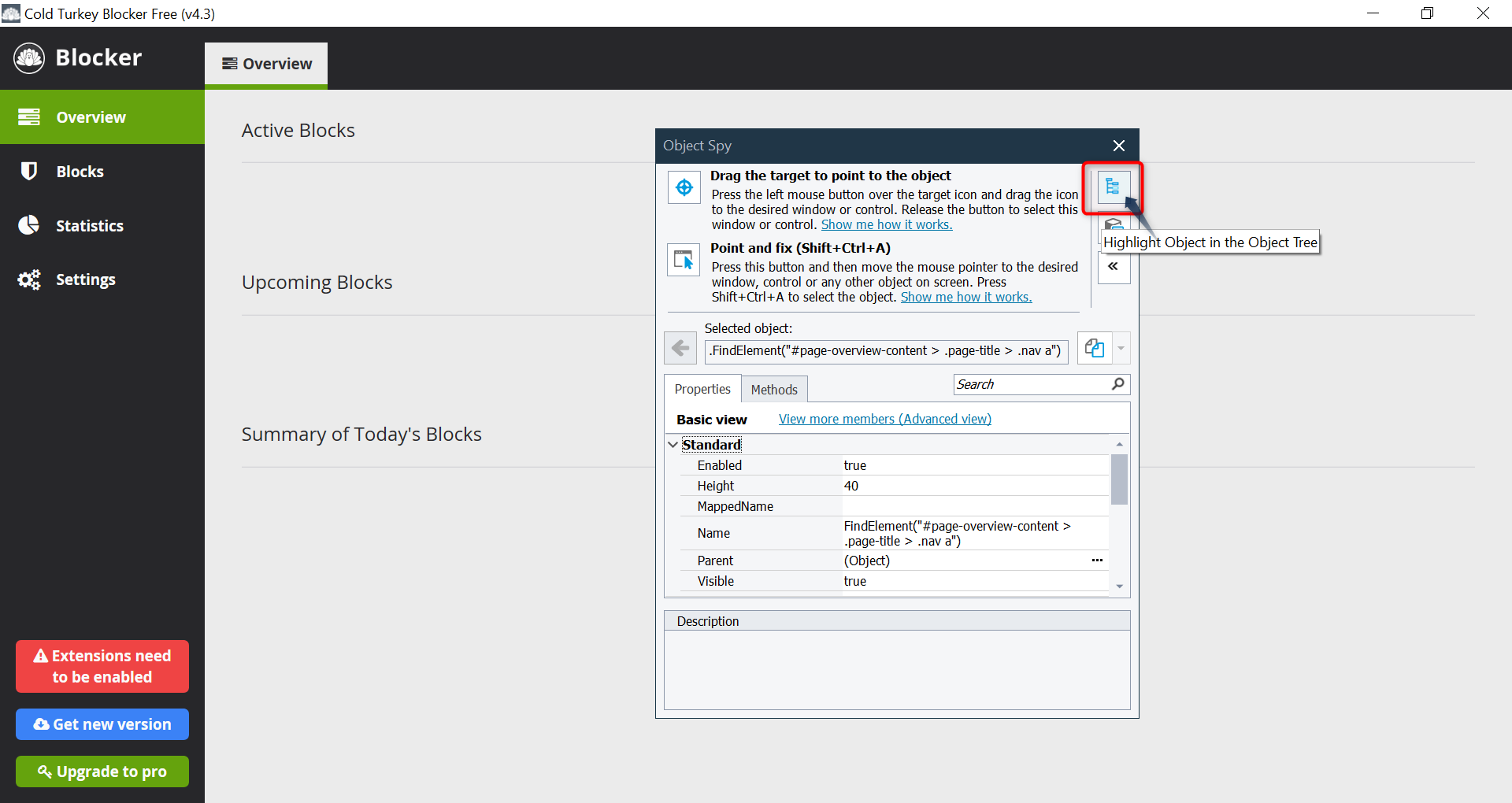

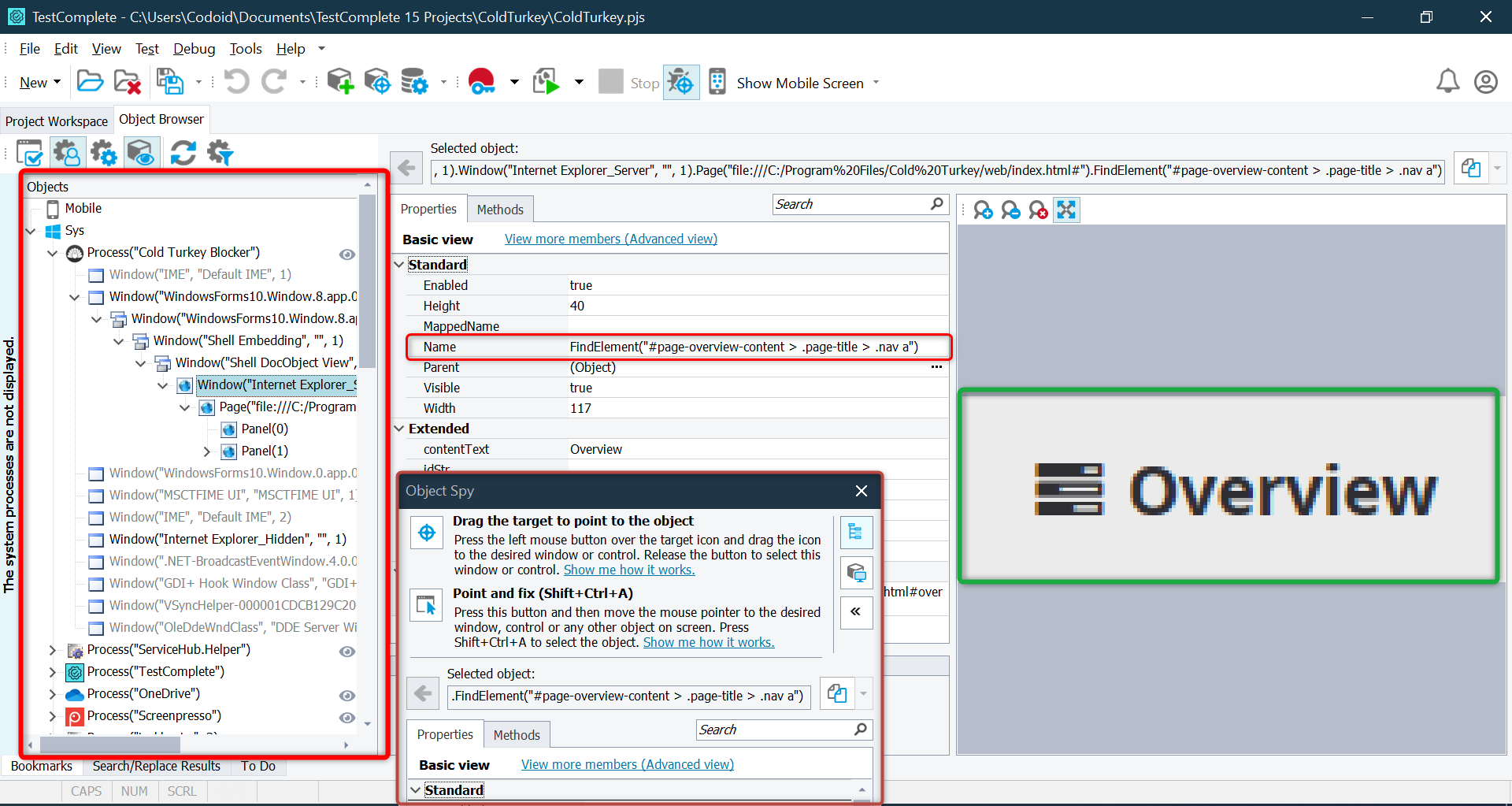

Understanding Object Spy & Object Browser

TestComplete interacts with applications by identifying UI elements like buttons, text fields, checkboxes, etc. It does this using:

Object Spy (To Identify UI Elements)

- Click Object Spy from the TestComplete toolbar.

- Drag the crosshair icon over the UI element you want to inspect.

- TestComplete will display:

- Element properties (ID, name, type, etc.)

- Available methods (Click, SetText, etc.)

- Click “Map Object” to save it for automation scripts.

Object Spy helps TestComplete recognize elements even if their location changes.

Object Browser (To View All UI Elements)

- Open View → Object Browser.

- Browse through the application’s UI hierarchy.

- Click any object to view its properties and available actions.

Object Browser is useful for debugging test failures and understanding UI structure.

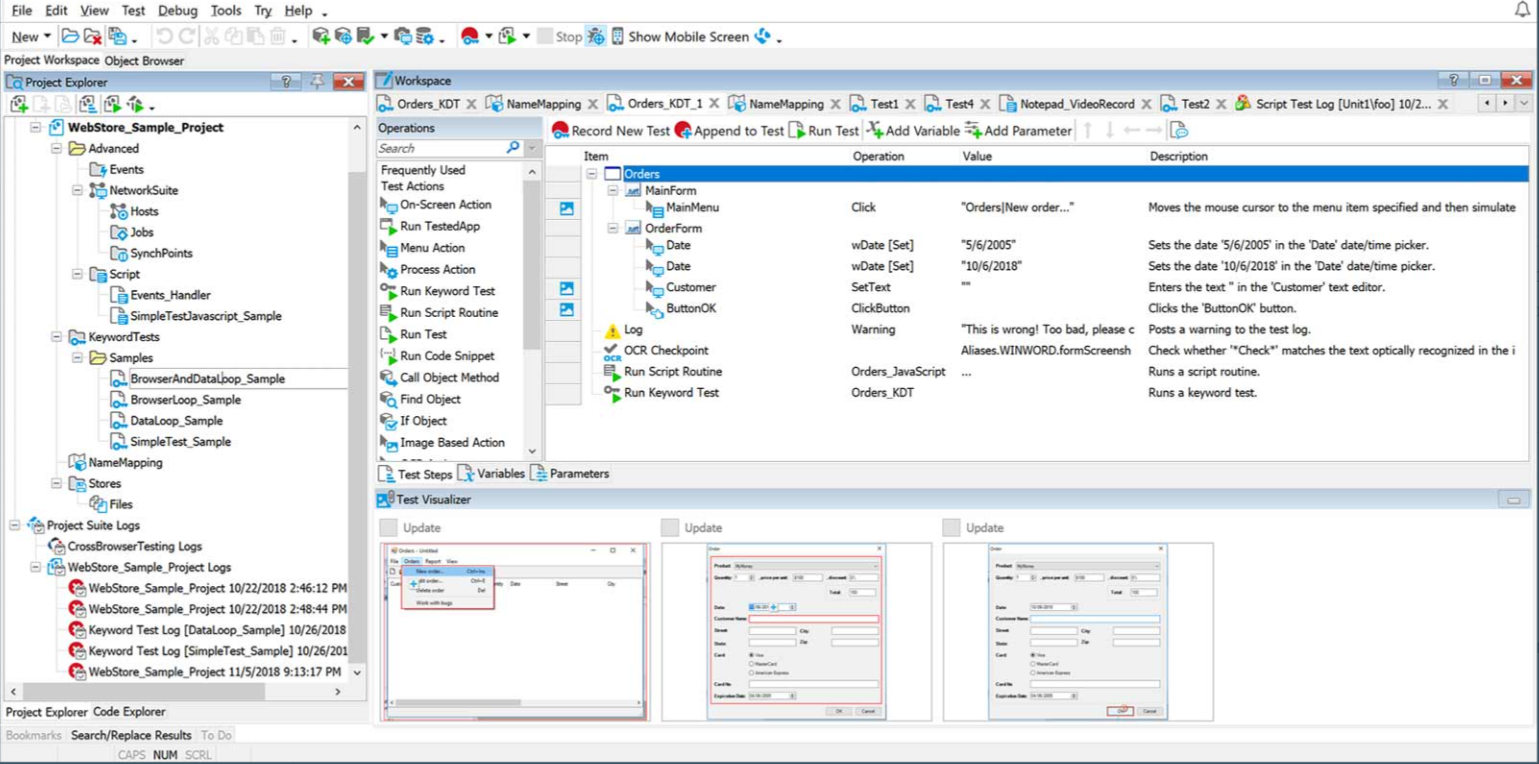

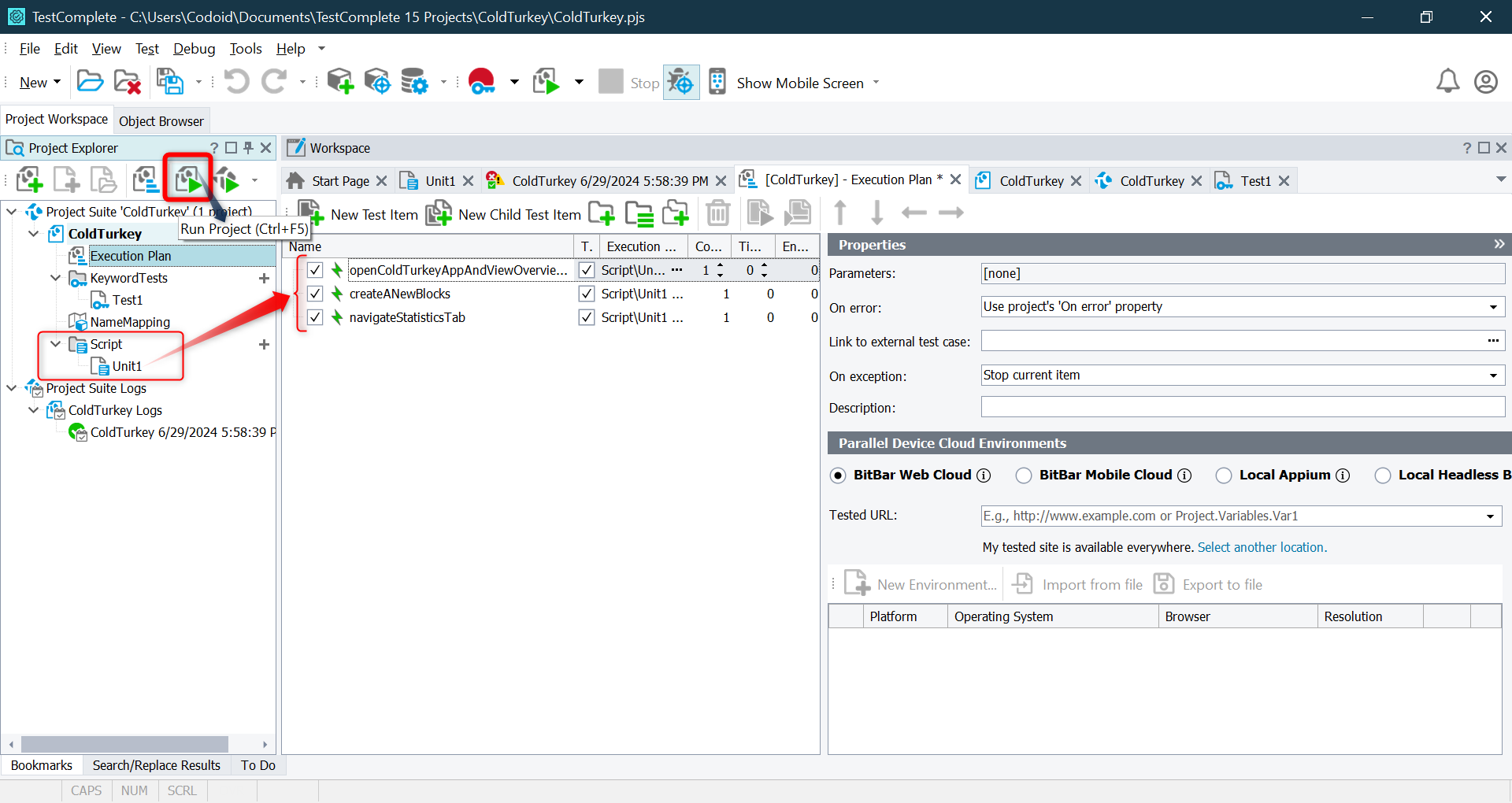

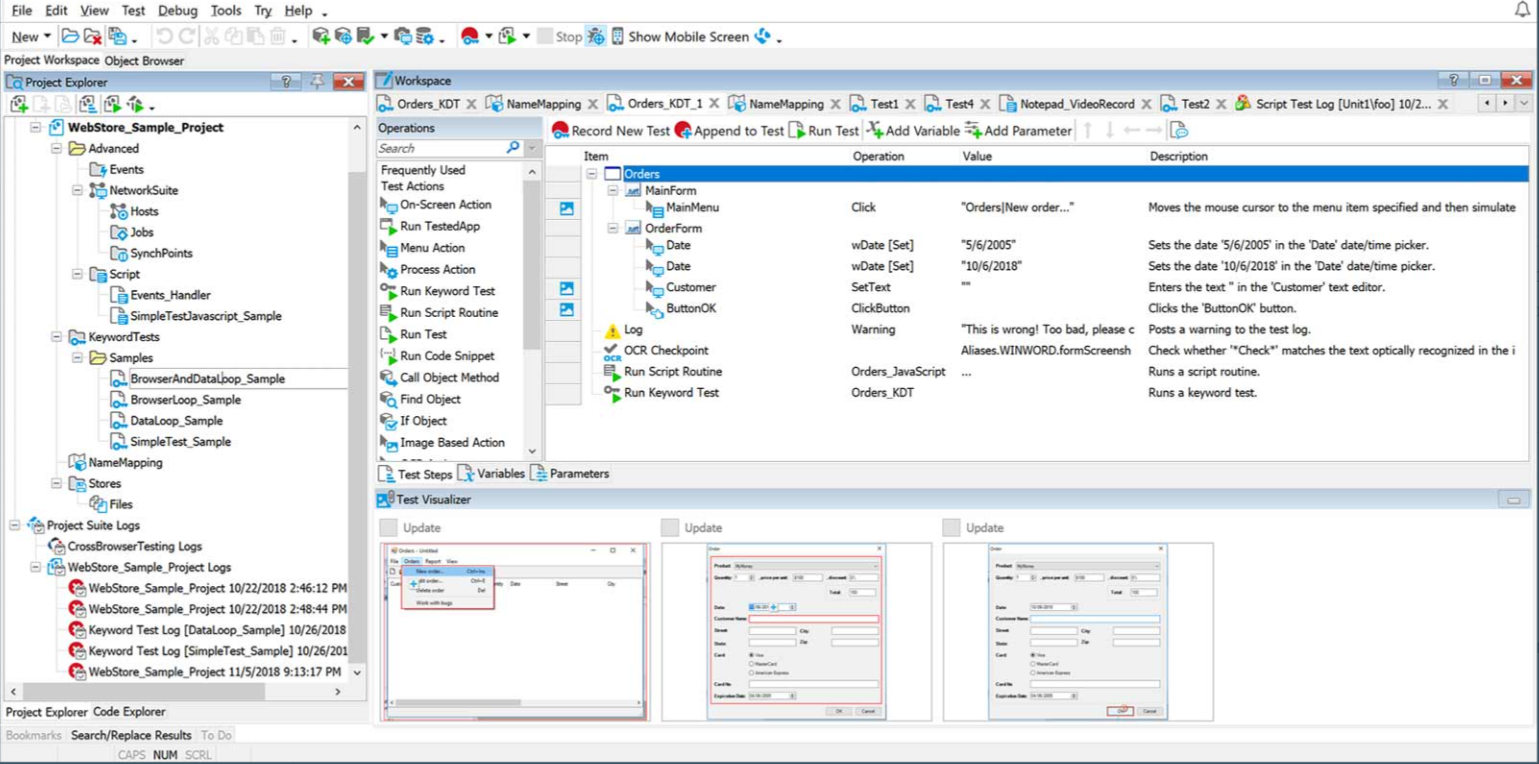

Creating a Test in TestComplete

TestComplete allows different ways to create automated tests.

Method 1: Record and Playback (No Coding Required)

- Click “Record” in the toolbar.

- Perform actions on your application (click buttons, enter text, etc.).

- Click “Stop” to save the recorded test.

- Click Run to execute the recorded test.

Great for beginners or those who want quick test automation without scripting!

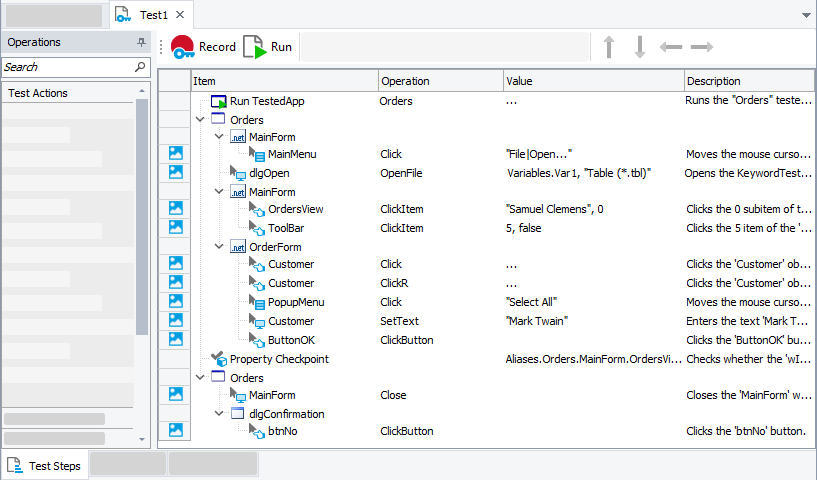

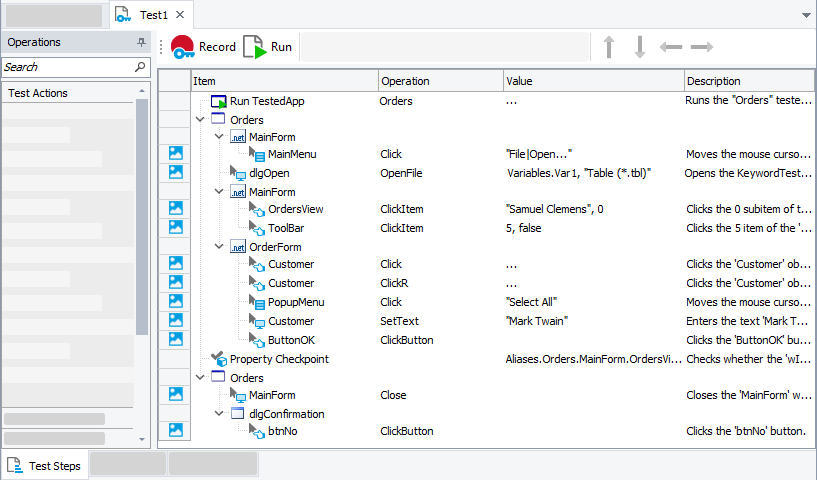

Method 2: Keyword-Driven Testing (Step-by-Step Actions)

- Open Keyword Test Editor.

- Add actions like Click, Input, Verify, etc. using a graphical interface.

- Arrange steps in order and save the test.

- Run the test and check results.

Ideal for testers who prefer a structured, visual test flow.

Method 3: Scripted Testing (Python, JavaScript, VBScript, etc.)

Best for advanced users who need flexibility and customization.
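As a taste of what a script routine can look like, here is a minimal sketch in JavaScript. It assumes a tested application named "notepad" has been added to the project's Tested Applications list (the name is hypothetical), and it relies on TestComplete's runtime objects `TestedApps`, `Sys`, and `Log`, so it is meant to run inside TestComplete rather than as a standalone script.

```javascript
// Hypothetical script routine for a TestComplete project (JavaScript).
// Assumes a tested application named "notepad" was added under
// Tested Applications; TestedApps, Sys, and Log are provided by TestComplete.
function launchSmokeTest() {
  // Start the application registered in the Tested Applications list.
  TestedApps.notepad.Run();

  // Wait up to 10 seconds for the process to appear.
  let appProcess = Sys.WaitProcess("notepad", 10000);

  if (appProcess.Exists) {
    // Record a passed checkpoint in the test log, then close the app.
    Log.Checkpoint("The application started.");
    appProcess.Terminate();
    return true;
  }

  // Record a failure in the test log.
  Log.Error("The application did not start within 10 seconds.");
  return false;
}
```

A routine like this only confirms that the application launches. Real tests would go on to interact with mapped objects and verify their properties.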

Running the Test

- Click Run to start the test execution.

- TestComplete will launch the application and perform actions based on the recorded/scripted steps.

- You can pause, stop, or debug the test at any point.

Running a test executes the automation script and interacts with the UI elements as per the defined steps.

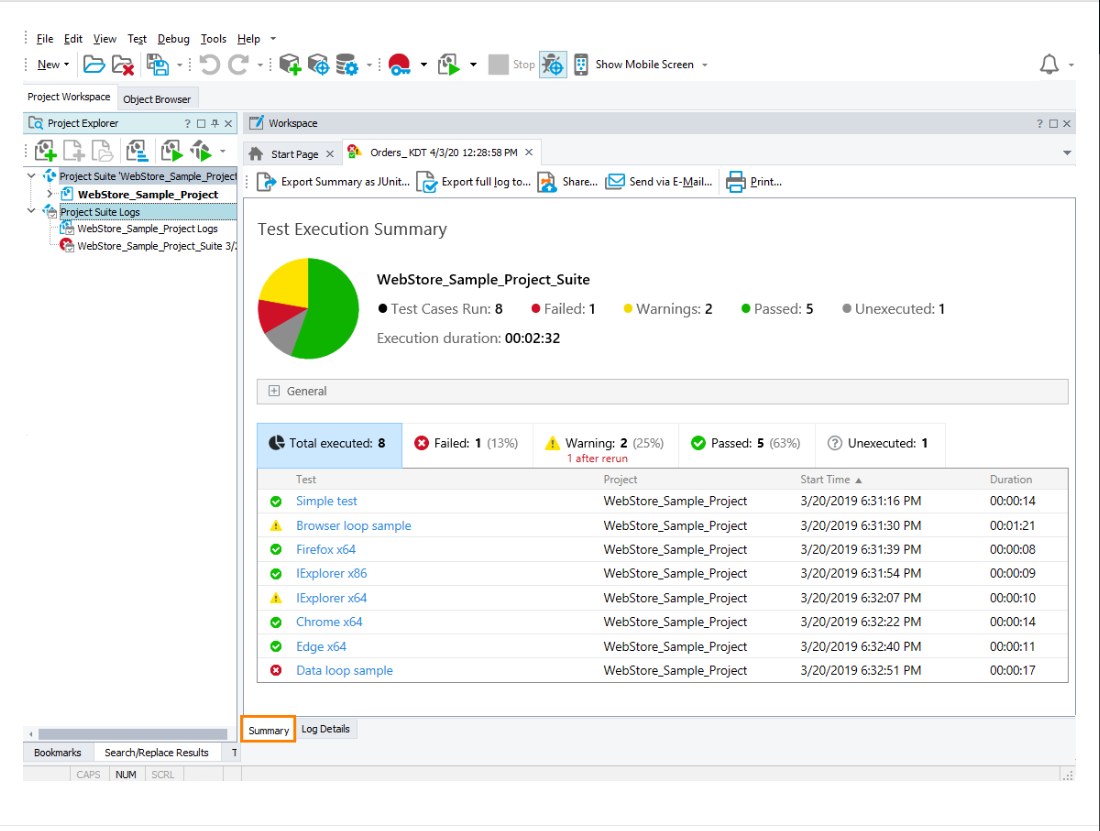

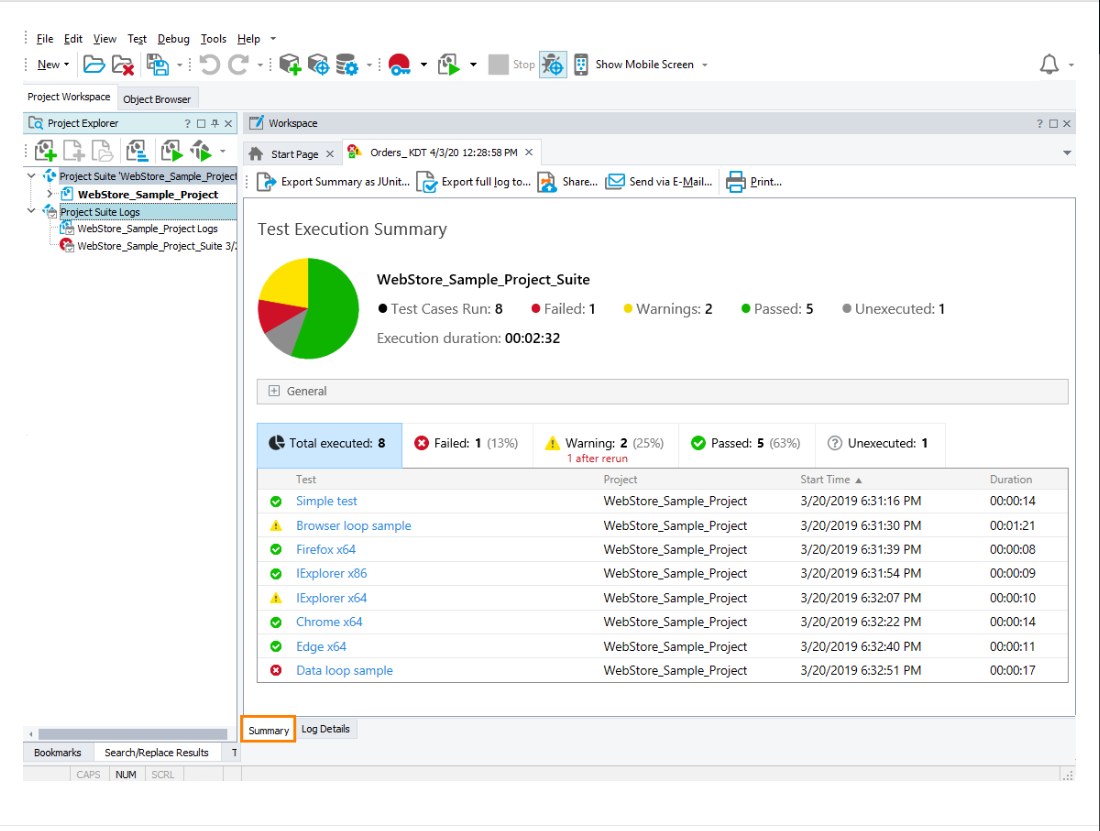

Viewing Execution Results

Once the test completes, TestComplete generates a Test Log that provides:

- ✅Pass/Fail Status – Displays if the test succeeded or failed.

- 📷Screenshots – Captures test execution steps.

- ⚠️Error Messages – Shows failure reasons (if any).

- 📊Execution Time & Performance Metrics – Helps analyze test speed.

Pros and Cons of TestComplete

Pros

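- Can target a wide range of platforms, including Windows desktop applications, web browsers on other operating systems such as macOS (through remote cross-platform web tests), and iOS and Android apps.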

- Allows for data-driven testing, enabling tests to be run with multiple data sets to ensure comprehensive coverage.

- Supports parallel execution of tests, speeding up the overall testing process.

- Generates detailed test reports and logs, helping testers analyze results and track issues efficiently.

- Can test web, desktop, and mobile applications.

Cons

- Mastering all the functionalities, especially advanced scripting, can take time.

- TestComplete can be a bit expensive compared to some other testing tools.

- It can be resource-intensive, requiring robust hardware for optimal performance, especially when running multiple tests in parallel.

- Despite advanced object recognition, there can still be issues with recognizing dynamic or complex UI elements, requiring manual adjustments.

Conclusion

Test automation is essential for ensuring software quality, increasing efficiency, and reducing manual effort. Among the many automation tools available, TestComplete, developed by SmartBear, is a powerful and flexible solution for testing desktop, web, and mobile applications. In this tutorial, we covered key aspects of using TestComplete, including installation, project setup, test creation, execution, and result analysis. We also explored how to add an Application Under Test (AUT), use Object Spy and Object Browser to identify UI elements, and implement different testing methods such as record-and-playback, keyword-driven testing, and scripting. Additionally, we discussed best practices like name mapping, test modularization, CI/CD integration, and data-driven testing to ensure stable and efficient automation.

As a leading software testing company, Codoid specializes in test automation, performance testing, and QA consulting. With extensive expertise in TestComplete and other advanced automation tools, Codoid helps businesses improve software quality, speed up testing cycles, and build strong automation strategies. Whether you’re new to automation or looking to enhance your existing test framework, Codoid offers expert guidance for achieving reliable and scalable automation solutions.

This blog provided an overview of TestComplete’s capabilities, but there’s much more to explore. Stay tuned for upcoming blogs, where we’ll dive deeper into advanced scripting, data-driven testing, CI/CD integration, and handling dynamic UI elements in TestComplete.

Frequently Asked Questions

-

Is TestComplete free to use?

TestComplete offers a free trial but requires a paid license for continued use. Pricing depends on the features and number of users. You can download the trial version from the SmartBear website.

-

Which platforms does TestComplete support?

TestComplete supports automation for Windows desktop applications, web applications (Chrome, Edge, Firefox), and mobile applications (Android & iOS).

-

Can I use TestComplete for cross-browser testing?

Yes, TestComplete allows you to automate cross-browser testing for websites on Chrome, Edge, and Firefox. It also supports XPath and CSS selectors for identifying web elements.

-

How does TestComplete compare to Selenium?

-TestComplete supports scripted and scriptless testing, while Selenium requires programming knowledge.

-TestComplete provides built-in object recognition and reporting, whereas Selenium needs third-party tools.

-Selenium is open-source and free, whereas TestComplete is a paid tool with professional support.

-

How do I export TestComplete test results?

TestComplete generates detailed test logs with screenshots, errors, and performance data. These reports can be exported as HTML files for documentation and analysis.

What industries use TestComplete for automation testing?

TestComplete is widely used in industries like finance, healthcare, retail, and technology for automating web, desktop, and mobile application testing.

by Chris Adams | Mar 10, 2025 | API Testing, Blog, Latest Post |

APIs (Application Programming Interfaces) play a crucial role in enabling seamless communication between different systems, applications, and services. From web and mobile applications to cloud-based solutions, businesses rely heavily on APIs to deliver a smooth and efficient user experience. However, with this growing dependence comes the need for continuous monitoring to ensure APIs function optimally at all times. API monitoring is the process of tracking API performance, availability, and reliability in real-time, while API testing verifies that APIs function correctly, return expected responses, and meet performance benchmarks. Together, they ensure that APIs work as expected, respond within acceptable timeframes, and do not experience unexpected downtime or failures. Without proper monitoring and testing, even minor API failures can lead to service disruptions, frustrated users, and revenue losses. By proactively keeping an eye on API performance, businesses can ensure that their applications run smoothly, enhance user satisfaction, and maintain a competitive edge.

In this blog, we will explore the key aspects of API monitoring, its benefits, and best practices for keeping APIs reliable and high-performing. Whether you’re a developer, product manager, or business owner, understanding the significance of API monitoring is essential for delivering a top-notch digital experience.

Why API Monitoring is Important

- Detects Downtime Early: Alerts teams when an API is down or experiencing issues.

- Improves Performance: Helps identify slow response times or bottlenecks.

- Ensures Reliability: Monitors API endpoints to maintain a seamless experience for users.

- Enhances Security: Detects unusual traffic patterns or unauthorized access attempts.

- Optimizes Third-Party API Usage: Ensures external APIs used in applications are functioning correctly.

Types of API Monitoring

- Availability Monitoring: Checks if the API is online and accessible.

- Performance Monitoring: Measures response times, latency, and throughput.

- Functional Monitoring: Tests API endpoints to ensure they return correct responses.

- Security Monitoring: Detects vulnerabilities, unauthorized access, and potential attacks.

- Synthetic Monitoring: Simulates user behavior to test API responses under different conditions.

- Real User Monitoring (RUM): Tracks actual user interactions with the API in real-time.

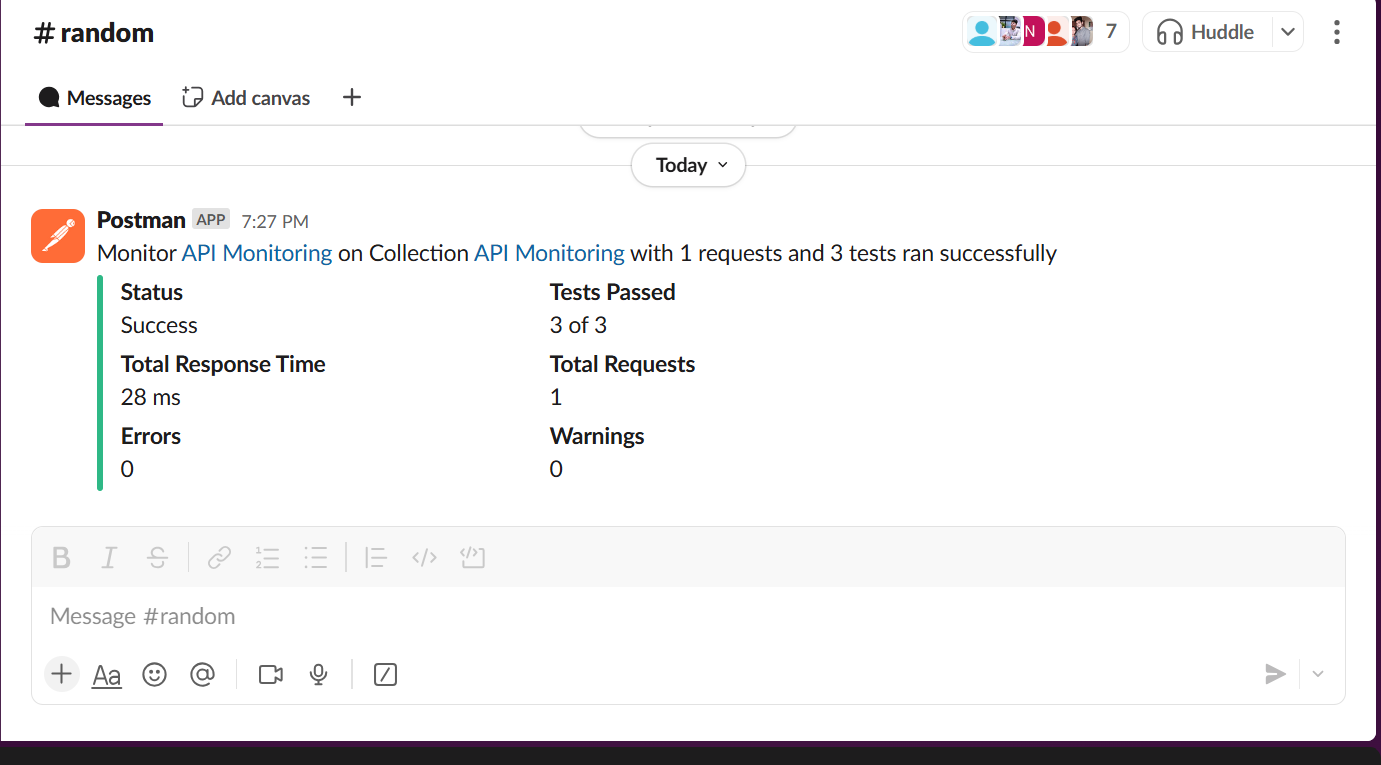

Now that we’ve covered the types of API monitoring, let’s set it up using Postman. In the next section, we’ll go through the steps to configure test scripts, automate checks, and set up alerts for smooth API monitoring.

Set Up API Monitoring in Postman – A Step-by-Step Guide

Postman provides built-in API monitoring to help developers and testers track API performance, uptime, and response times. By automating API checks at scheduled intervals, Postman ensures that APIs remain functional, fast, and reliable.

Follow this step-by-step guide to set up API monitoring in Postman.

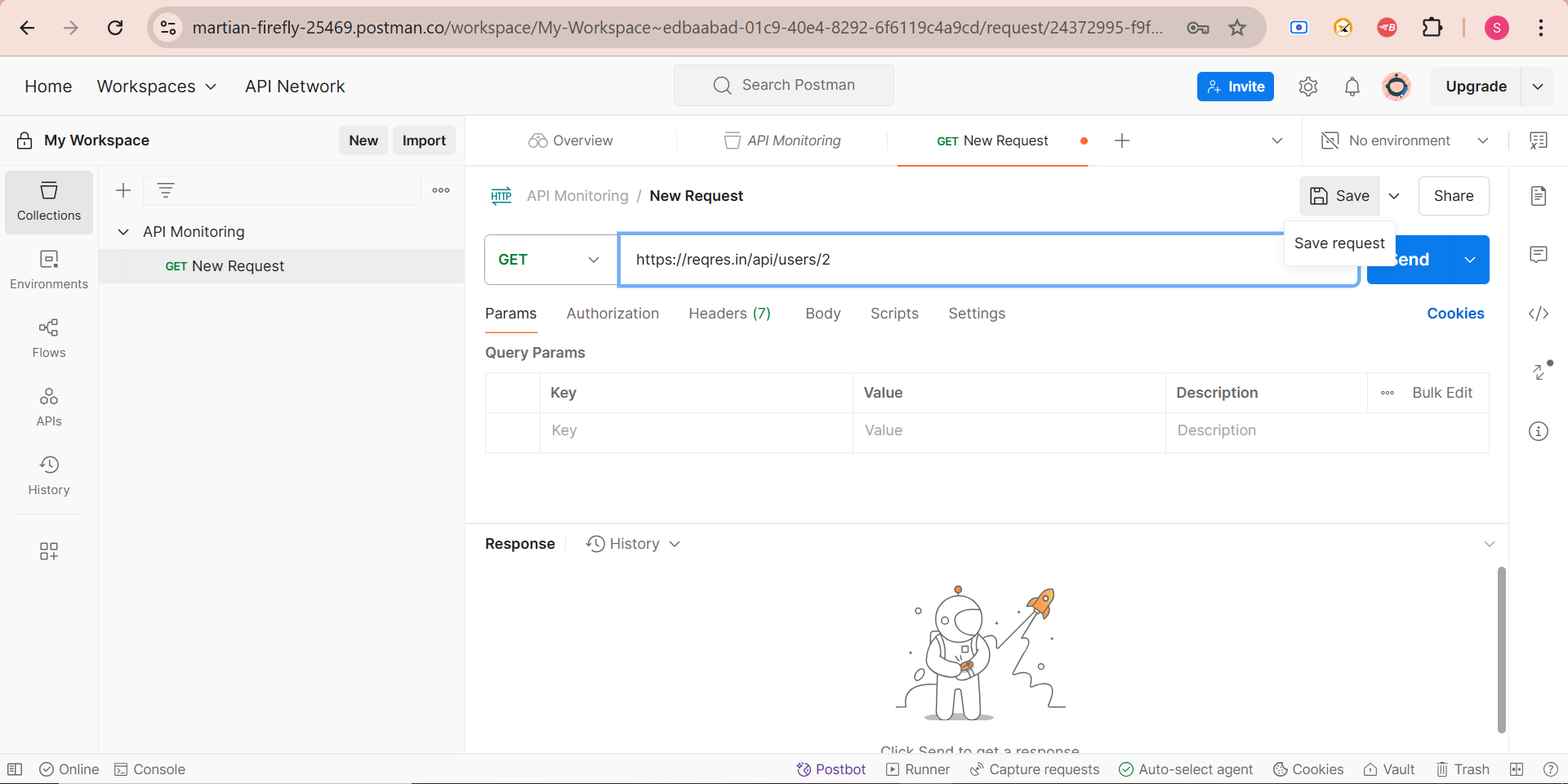

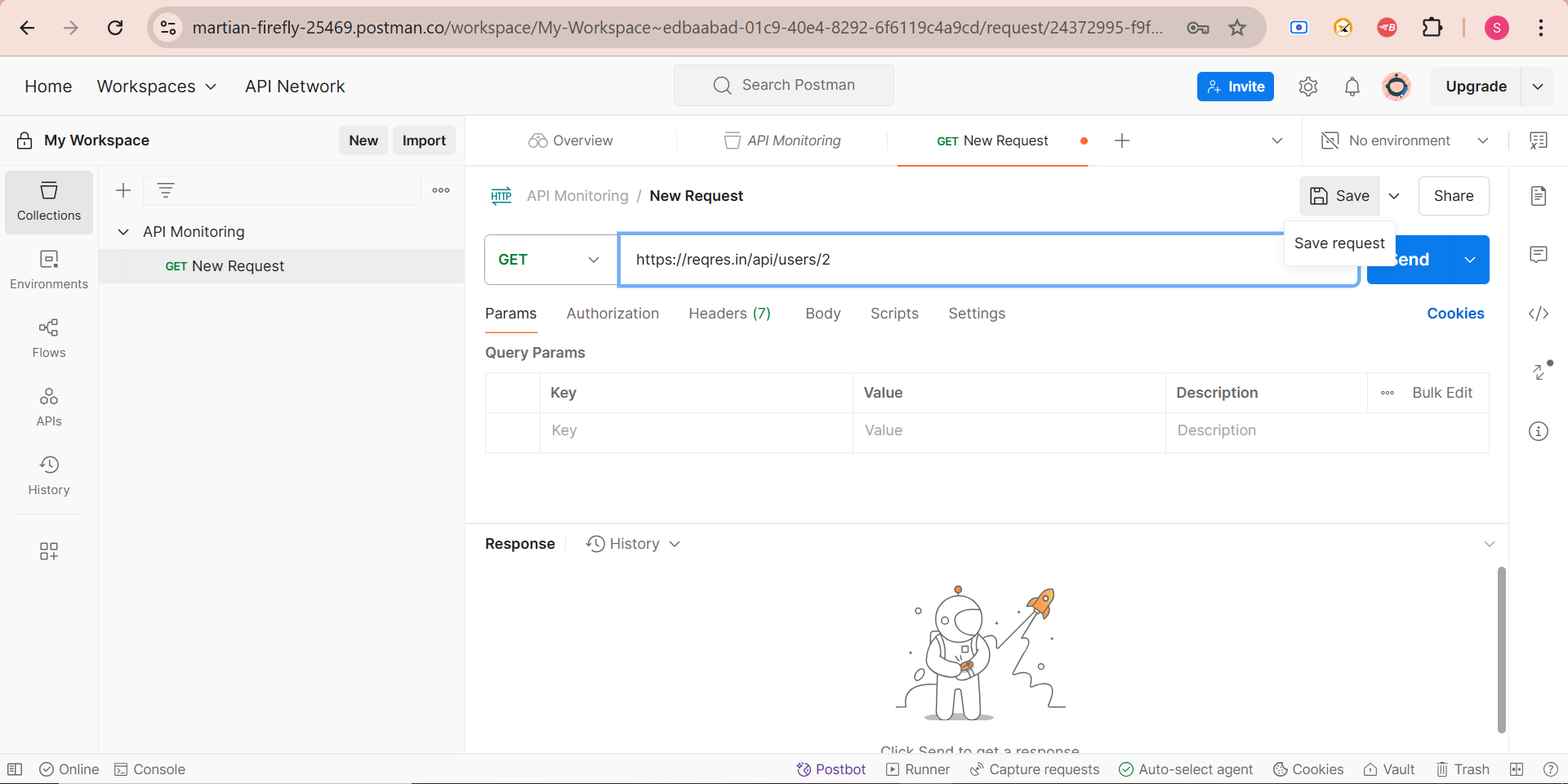

Step 1: Create a Postman Collection

A collection is a group of API requests that you want to monitor.

How to Create a Collection:

1. Open Postman and click on the “Collections” tab in the left sidebar.

2. Click “New Collection” and name it (e.g., “API Monitoring”).

3. Click “Add a request” and enter the API URL you want to monitor (e.g., https://api.example.com/users).

4. Select the request method (GET, POST, PUT, DELETE, etc.).

5. Click “Save” to store the request inside the collection.

Example:

- If you are monitoring a weather API, you might create a GET request like: https://api.weather.com/v1/location/{city}/forecas

- If you want to fetch a single user from the list: https://reqres.in/api/users/2

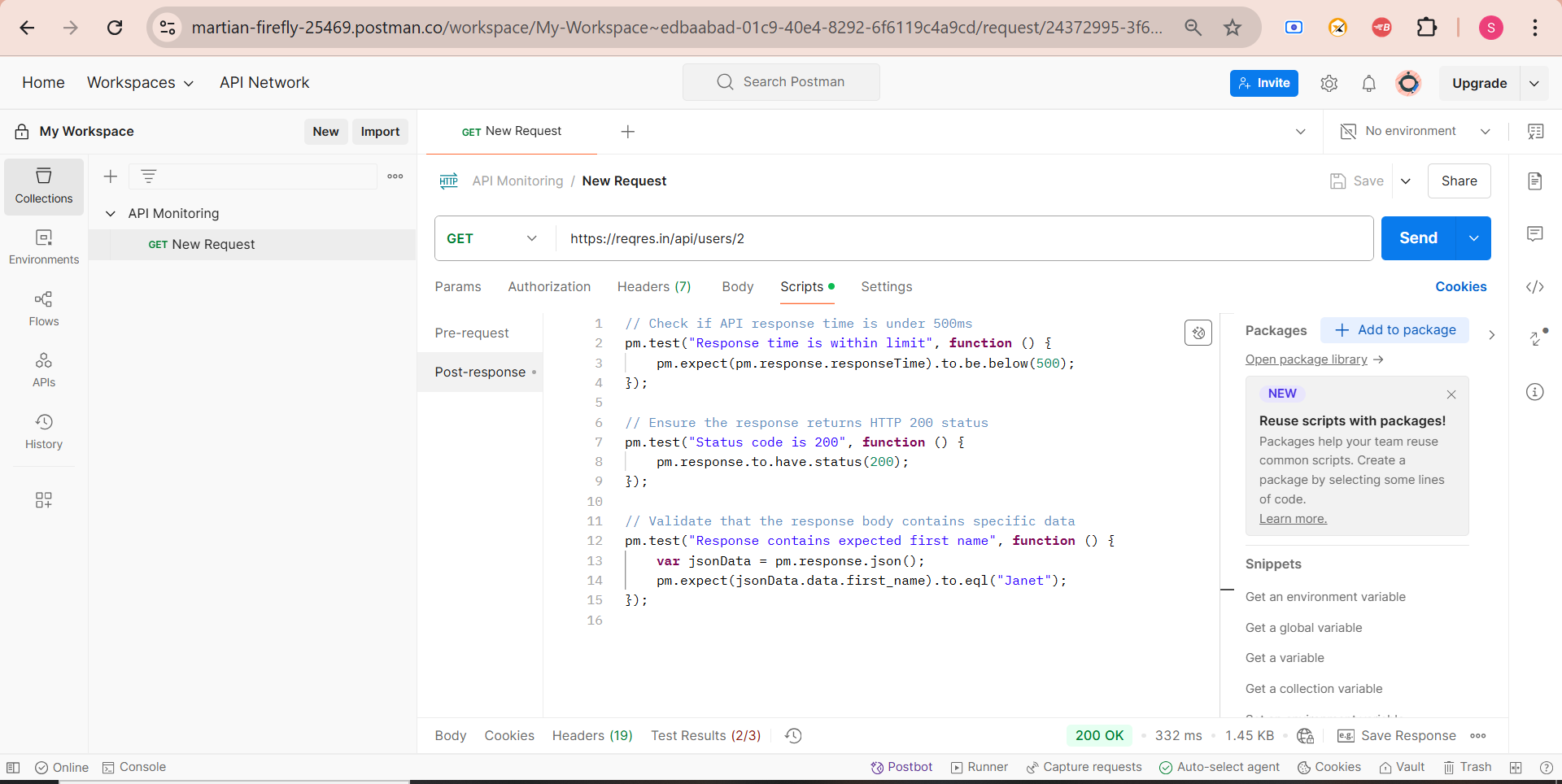

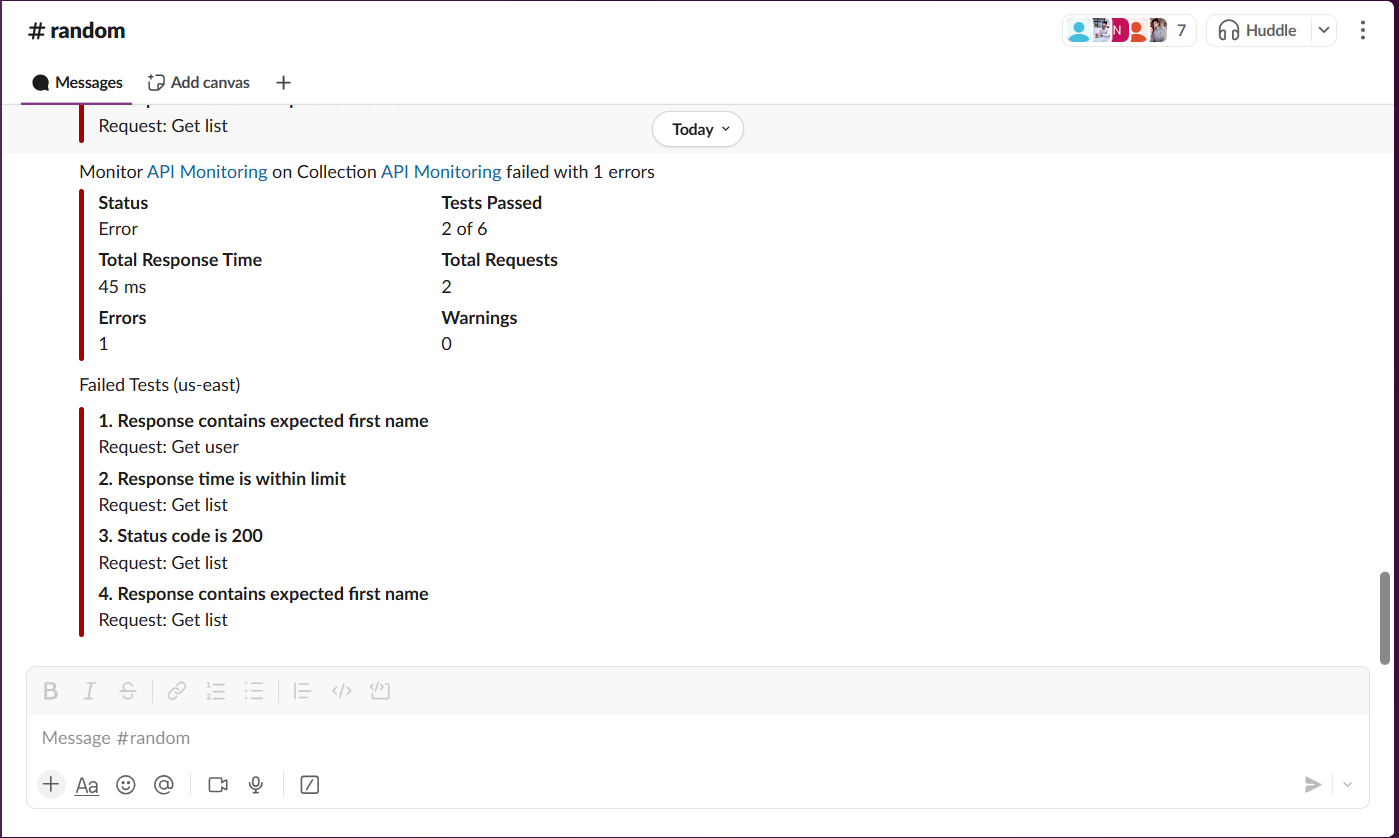

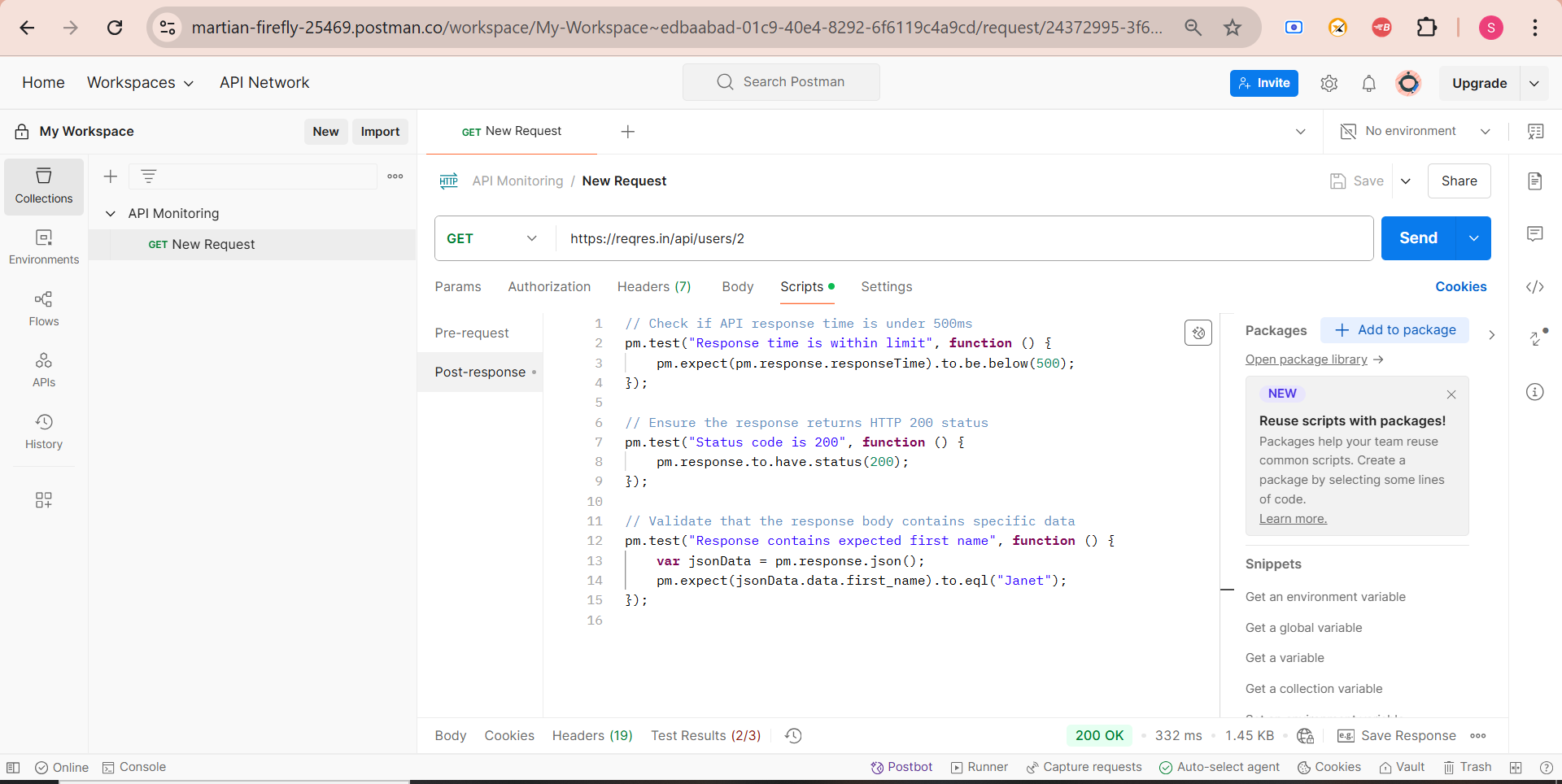
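If you prefer to keep the collection under version control, the same structure can be written as a JSON document and imported into Postman. The sketch below builds a minimal object in the Collection v2.1 format. The helper name `buildCollection` and the request names are illustrative assumptions, not something Postman requires.

```javascript
// Minimal sketch of a Postman collection (Collection Format v2.1).
// buildCollection and the request names below are illustrative only.
function buildCollection(collectionName, requests) {
  return {
    info: {
      name: collectionName,
      schema: "https://schema.getpostman.com/json/collection/v2.1.0/collection.json",
    },
    // Each item is one saved request inside the collection.
    item: requests.map((r) => ({
      name: r.name,
      request: { method: r.method, url: r.url },
    })),
  };
}

const collection = buildCollection("API Monitoring", [
  { name: "List users", method: "GET", url: "https://api.example.com/users" },
  { name: "Get single user", method: "GET", url: "https://reqres.in/api/users/2" },
]);

// Save this output as a .json file and load it with Postman's Import option.
console.log(JSON.stringify(collection, null, 2));
```

Building the collection in code is optional. The steps above, done through the Postman interface, produce the same result.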

Step 2: Add API Tests to Validate Responses

Postman allows you to write test scripts in JavaScript to validate API responses.

How to Add API Tests in Postman:

1. Open your saved API request from the collection.

2. Click on the “Tests” tab.

3. Enter the following test scripts to check API response time, status codes, and data validation.

Example Test Script:

// Check if API response time is under 500ms
pm.test("Response time is within limit", function () {
    pm.expect(pm.response.responseTime).to.be.below(500);
});

// Ensure the response returns HTTP 200 status
pm.test("Status code is 200", function () {
    pm.response.to.have.status(200);
});

// Validate that the response body contains specific data
pm.test("Response contains expected data", function () {
    var jsonData = pm.response.json();
    // pm.expect(jsonData.city).to.eql("New York");
    pm.expect(jsonData.data.first_name).to.eql("Janet");
});

4. Click “Save” to apply the tests to the request.

What These Tests Do:

- Response time check – Ensures API response is fast.

- Status code validation – Confirms API returns 200 OK.

- Data validation – Checks if the API response contains expected values.

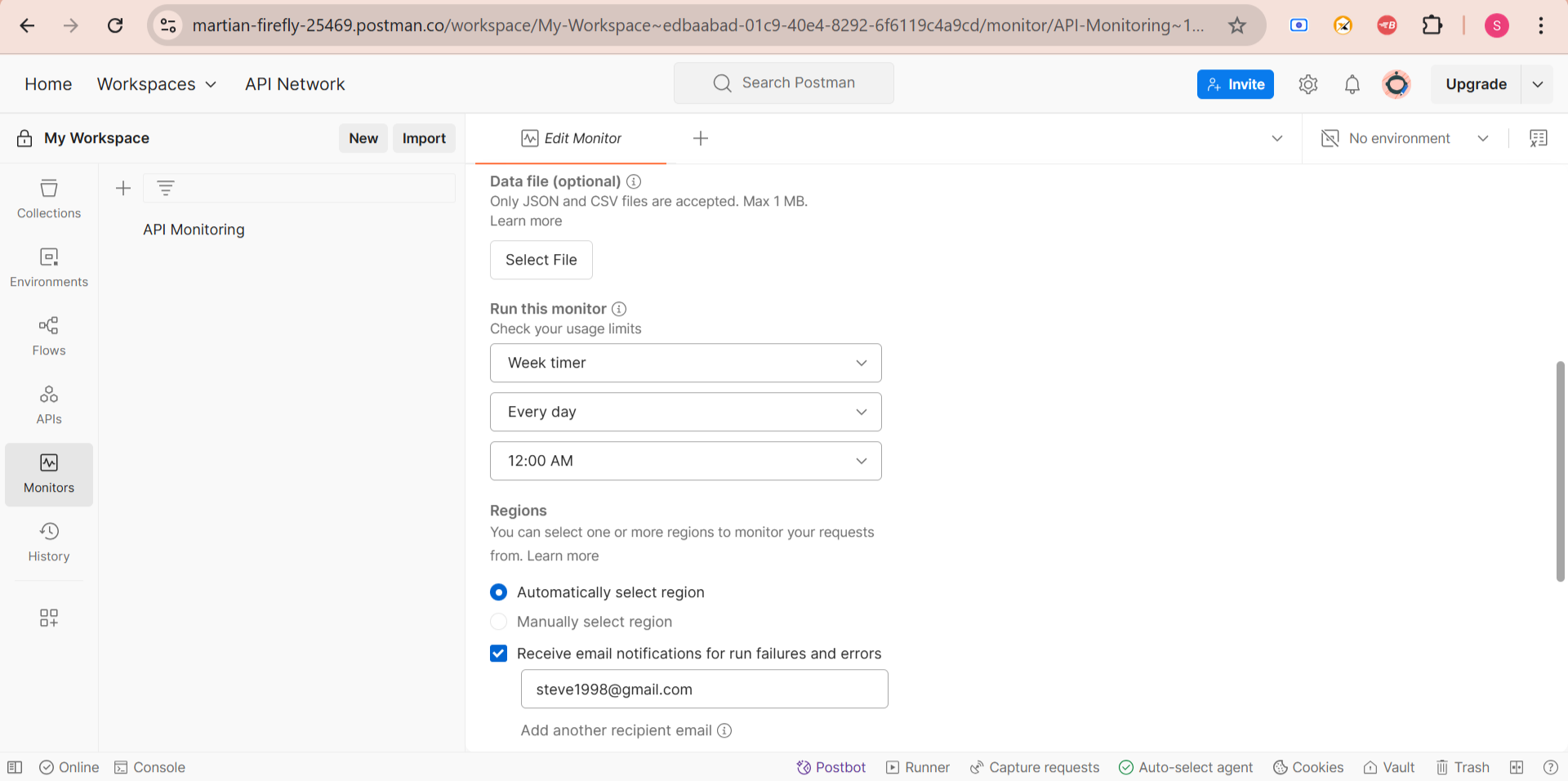

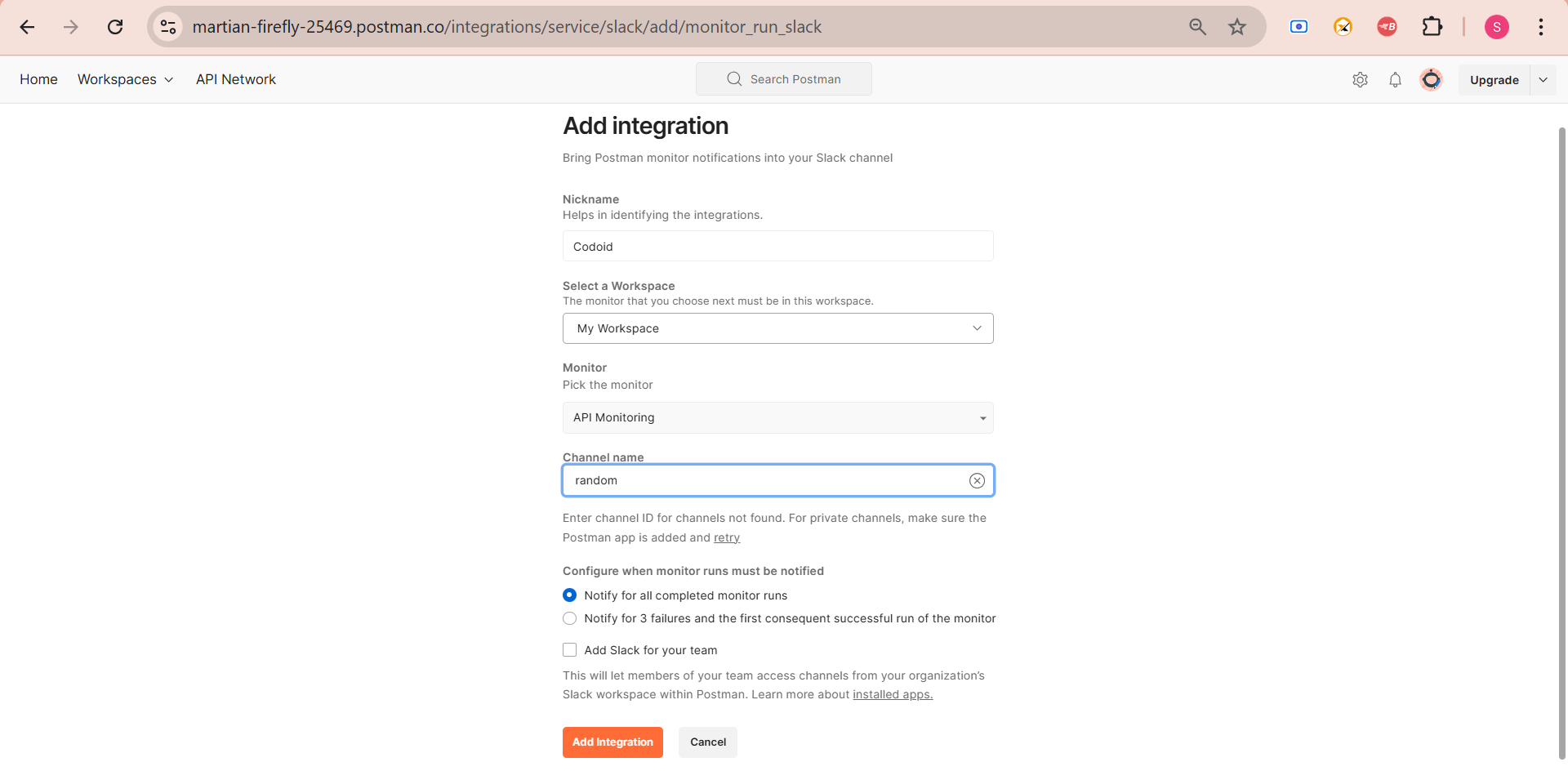

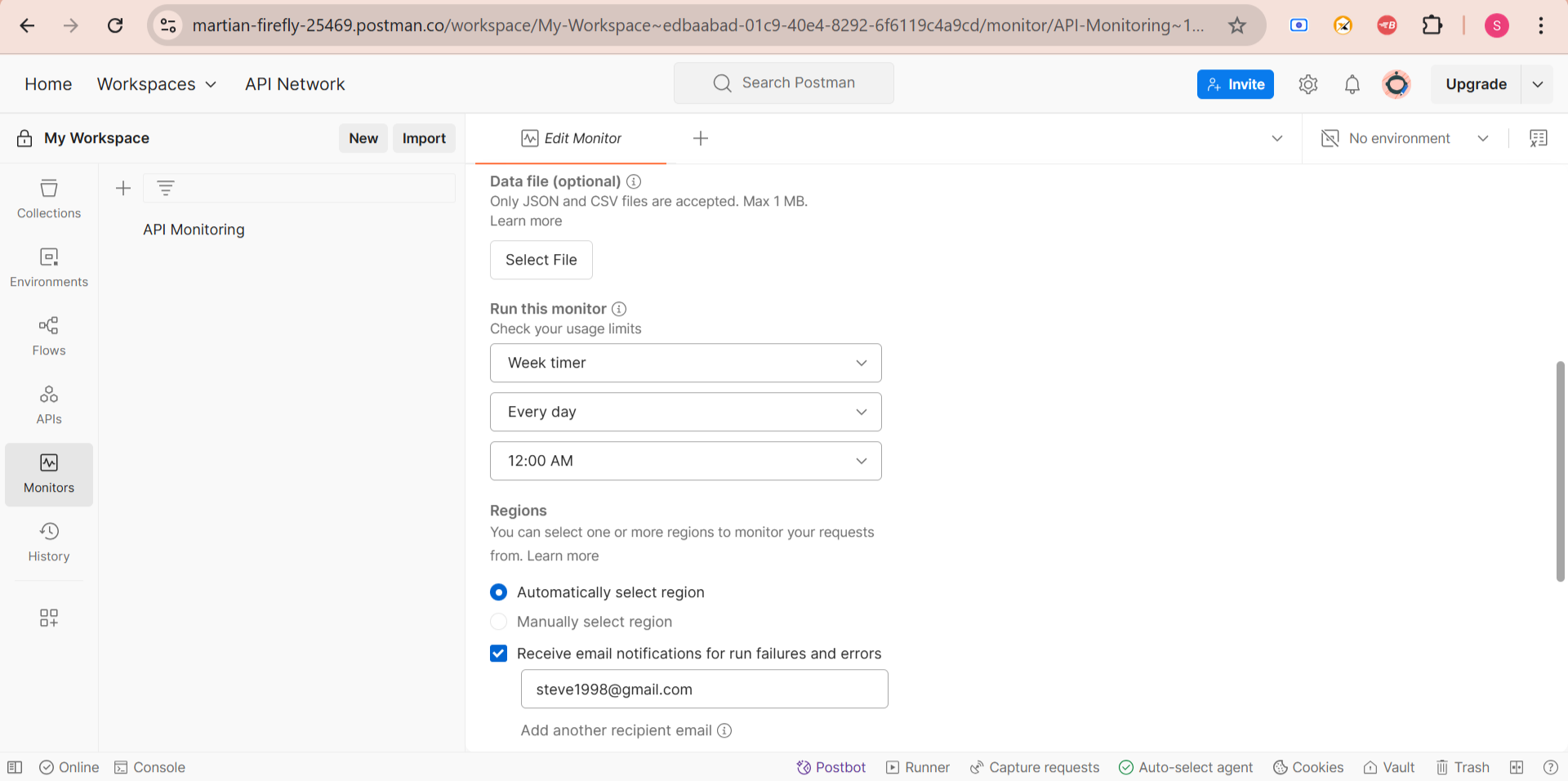
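As the number of checks grows, it can help to move the validation logic into a plain function so the thresholds live in one place and the logic can be tested outside Postman. The following is a sketch under that assumption: `evaluateResponse` and the `limits` object are hypothetical names, while `pm.test`, `pm.expect`, `pm.response.code`, `pm.response.responseTime`, and `pm.response.json()` are the Postman scripting calls.

```javascript
// Hypothetical helper: returns a list of readable failures for one response.
function evaluateResponse(response, limits) {
  const failures = [];
  if (response.status !== limits.expectedStatus) {
    failures.push("expected status " + limits.expectedStatus + ", got " + response.status);
  }
  if (response.responseTimeMs >= limits.maxResponseTimeMs) {
    failures.push("response took " + response.responseTimeMs + "ms");
  }
  // Guard against a body that has no "data" object before reading first_name.
  const body = response.body;
  const firstName = body && body.data ? body.data.first_name : undefined;
  if (firstName !== limits.expectedFirstName) {
    failures.push("first_name did not match the expected value");
  }
  return failures;
}

const limits = { expectedStatus: 200, maxResponseTimeMs: 500, expectedFirstName: "Janet" };

// Inside Postman the "pm" object exists; anywhere else this block is skipped.
if (typeof pm !== "undefined") {
  pm.test("Response passes all monitoring checks", function () {
    const failures = evaluateResponse(
      {
        status: pm.response.code,
        responseTimeMs: pm.response.responseTime,
        body: pm.response.json(),
      },
      limits
    );
    pm.expect(failures).to.eql([]);
  });
}
```

A single combined test reports every failed condition at once, at the cost of losing the per-check pass/fail lines that separate `pm.test` calls give you in the monitor results.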

Step 3: Configure Postman Monitor

Postman Monitors allow you to run API tests at scheduled intervals to check API health and performance.

How to Set Up a Monitor in Postman:

1. Click on the “Monitors” tab on the left sidebar.

2. Click “Create a Monitor” and select the collection you created earlier.

3. Set the monitoring frequency (e.g., every 5 minutes, hourly, or daily at 12 AM).

4. Choose a region for monitoring (e.g., US East, Europe, Asia) to check API performance from different locations.

5. Click “Create Monitor” to start tracking API behavior.

Example: A company that operates globally might set up monitors to run every 10 minutes from different locations to detect regional API performance issues.
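Frequency and region count multiply quickly, so it is worth estimating the load before saving a schedule. The sketch below is simple arithmetic and assumes that each selected region triggers its own run and that a month has 30 days. The function name and the figure of two requests per run are illustrative.

```javascript
// Rough estimate of how many monitor runs and requests a schedule produces.
// Assumes each selected region triggers its own run, and a 30-day month.
function estimateMonitorLoad({ intervalMinutes, regions, requestsPerRun, days = 30 }) {
  const runsPerDay = Math.floor((24 * 60) / intervalMinutes) * regions;
  const runsPerMonth = runsPerDay * days;
  return {
    runsPerDay,
    runsPerMonth,
    requestsPerMonth: runsPerMonth * requestsPerRun,
  };
}

// Every 10 minutes from three regions, with two requests in the collection.
const load = estimateMonitorLoad({ intervalMinutes: 10, regions: 3, requestsPerRun: 2 });
console.log(load); // runsPerDay: 432, runsPerMonth: 12960, requestsPerMonth: 25920
```

Comparing this estimate with the usage allowance of your Postman plan helps you pick an interval that is frequent enough to catch outages without exhausting the quota.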

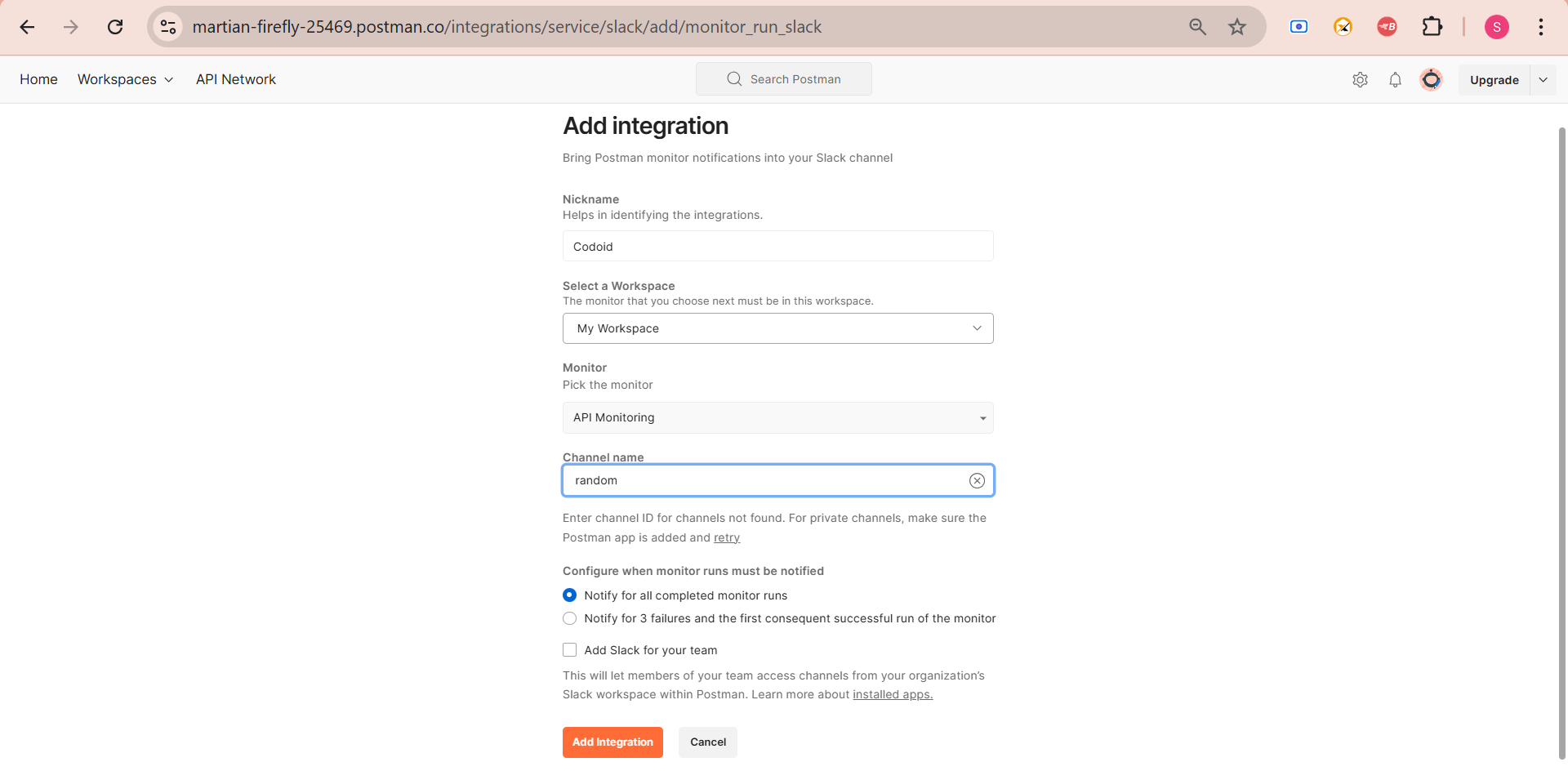

Step 4: Set Up Alerts for API Failures

To ensure quick response to API failures, Postman allows real-time notifications via email, Slack, and other integrations.

How to Set Up Alerts:

1. Open the Monitor settings in Postman.

2. Enable email notifications for failed tests.

3. Integrate Postman with Slack, Microsoft Teams, or PagerDuty for real-time alerts.

4. Use Postman Webhooks to send alerts to other monitoring systems.

Example: A fintech company might configure Slack alerts to notify developers immediately if their payment API fails.
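When alerts are forwarded through a custom webhook rather than the built-in integration, the message body has to be assembled by your own code. The sketch below builds a payload for a Slack incoming webhook, which accepts a JSON object with a `text` field. The shape of the `failure` object and all of its values are hypothetical.

```javascript
// Builds the JSON body for a Slack incoming webhook (Slack reads the "text" field).
// The "failure" object shape is a made-up example, not a Postman data format.
function buildSlackAlert(failure) {
  const lines = [
    "API monitor failed: " + failure.monitorName,
    "Request: " + failure.method + " " + failure.url,
    "Failed test: " + failure.testName,
    "Status: " + failure.status + ", response time: " + failure.responseTimeMs + "ms",
  ];
  return { text: lines.join("\n") };
}

const payload = buildSlackAlert({
  monitorName: "Payments API",
  method: "POST",
  url: "https://api.example.com/payments",
  testName: "Status code is 200",
  status: 503,
  responseTimeMs: 1240,
});

console.log(JSON.stringify(payload));
```

To deliver the alert, send this payload as an HTTP POST with a `Content-Type: application/json` header to the webhook URL that Slack generates for your channel.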

Step 5: View API Monitoring Reports & Logs

Postman provides detailed execution history and logs to help you analyze API performance over time.

How to View Reports in Postman:

1. Click on the “Monitors” tab.

2. Select your API monitor to view logs.

3. Analyze:

- Success vs. failure rate of API calls.

- Average response time trends over time.

- Location-based API performance (if different regions were configured).

4. Export logs for debugging or reporting.

Example: A retail company might analyze logs to detect slow API response times during peak shopping hours and optimize their backend services.
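Once logs are exported, the success rate and average response time per region can be computed with a few lines of code. This is a minimal sketch: the record fields (`region`, `passed`, `responseTimeMs`) are an assumed shape, so map them to whatever your exported data actually contains.

```javascript
// Summarizes run records per region. The record shape is an assumption.
function summarizeRuns(runs) {
  const byRegion = {};
  for (const run of runs) {
    if (!byRegion[run.region]) {
      byRegion[run.region] = { total: 0, passed: 0, totalMs: 0 };
    }
    const stats = byRegion[run.region];
    stats.total += 1;
    if (run.passed) stats.passed += 1;
    stats.totalMs += run.responseTimeMs;
  }
  return Object.entries(byRegion).map(([region, s]) => ({
    region,
    successRate: s.passed / s.total,
    avgResponseTimeMs: s.totalMs / s.total,
  }));
}

const summary = summarizeRuns([
  { region: "us-east", passed: true, responseTimeMs: 120 },
  { region: "us-east", passed: true, responseTimeMs: 180 },
  { region: "eu-west", passed: true, responseTimeMs: 300 },
  { region: "eu-west", passed: false, responseTimeMs: 500 },
]);

console.log(summary);
```

A region whose average response time is consistently higher than the others is a good starting point when investigating location-specific slowdowns.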

Implementing API Monitoring Strategies

Implementing an effective API monitoring strategy involves setting up tools, defining key metrics, and ensuring proactive issue detection and resolution. Here’s a step-by-step approach:

1. Define API Monitoring Goals

Before implementing API monitoring, clarify the objectives:

- Ensure high availability (uptime monitoring).

- Improve performance (latency tracking).

- Validate functionality (response correctness).

- Detect security threats (unauthorized access or data leaks).

- Monitor third-party API dependencies (SLA compliance).

2. Identify Key API Metrics to Monitor

Track important API performance indicators, such as:

Availability Metrics

- Uptime/Downtime (Percentage of time API is available)

- Error Rate (5xx, 4xx errors)

Performance Metrics

- Response Time (Latency in milliseconds)

- Throughput (Requests per second)

- Rate Limiting Issues (Throttling by API providers)

Functional Metrics

- Payload Validation (Ensuring expected response structure)

- Endpoint Coverage (Monitoring all critical API endpoints)

Security Metrics

- Unauthorized Access Attempts

- Data Breach Indicators (Unusual data retrieval patterns)
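Several of these metrics can be derived from the same set of request samples. The sketch below computes throughput, client and server error rates, and 95th-percentile latency using the nearest-rank method. The sample shape (`status`, `latencyMs`) and the generated data are assumptions for illustration.

```javascript
// Nearest-rank percentile: the smallest value with at least p% of samples at or below it.
function percentile(values, p) {
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.max(Math.ceil((p / 100) * sorted.length), 1);
  return sorted[rank - 1];
}

// Derives basic availability and performance metrics from request samples.
function computeMetrics(samples, windowSeconds) {
  const total = samples.length;
  const clientErrors = samples.filter((s) => s.status >= 400 && s.status < 500).length;
  const serverErrors = samples.filter((s) => s.status >= 500).length;
  return {
    throughputRps: total / windowSeconds,
    clientErrorRate: clientErrors / total,
    serverErrorRate: serverErrors / total,
    p95LatencyMs: percentile(samples.map((s) => s.latencyMs), 95),
  };
}

// Ten made-up samples collected over five seconds: eight 200s, one 404, one 503.
const samples = Array.from({ length: 10 }, (_, i) => ({
  status: i === 8 ? 404 : i === 9 ? 503 : 200,
  latencyMs: (i + 1) * 100,
}));

const metrics = computeMetrics(samples, 5);
console.log(metrics);
```

Reporting 4xx and 5xx rates separately is a deliberate choice: client errors often point to bad requests or expired credentials, while server errors usually indicate a problem in the API itself.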

3. Implement Different Types of Monitoring

A. Real-Time Monitoring

- Continuously check API health and trigger alerts if it fails.

- Use tools like Prometheus + Grafana for real-time metrics.

B. Synthetic API Testing

- Simulate real-world API calls and verify responses.

- Use Postman or Runscope to automate synthetic tests.

C. Log Analysis & Error Tracking

- Collect API logs and analyze patterns for failures.

- Use ELK Stack (Elasticsearch, Logstash, Kibana) or Datadog.

D. Load & Stress Testing

- Simulate heavy traffic to ensure APIs can handle peak loads.

- Use JMeter or k6 to test API scalability.

4. Set Up Automated Alerts & Notifications

- Use Slack, PagerDuty, or email alerts for incident notifications.

- Define thresholds (e.g., response time > 500ms, error rate > 2%).

- Use Prometheus AlertManager or Datadog Alerts for automation.
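The example thresholds above translate directly into a small rule check. This sketch is tool-agnostic and only shows the comparison logic. The function and field names are hypothetical, and a real setup would express the same rules in the alerting tool's own configuration.

```javascript
// Thresholds taken from the example above: response time > 500ms, error rate > 2%.
const thresholds = { maxResponseTimeMs: 500, maxErrorRate: 0.02 };

// Returns a message for every threshold the current metrics exceed.
function findBreaches(current, limits) {
  const breaches = [];
  if (current.responseTimeMs > limits.maxResponseTimeMs) {
    breaches.push("response time " + current.responseTimeMs + "ms exceeds " + limits.maxResponseTimeMs + "ms");
  }
  if (current.errorRate > limits.maxErrorRate) {
    breaches.push("error rate " + (current.errorRate * 100).toFixed(1) + "% exceeds " + (limits.maxErrorRate * 100).toFixed(1) + "%");
  }
  return breaches;
}

const breaches = findBreaches({ responseTimeMs: 620, errorRate: 0.05 }, thresholds);
breaches.forEach((message) => console.log("ALERT: " + message));
```

An empty result means no notification is needed, which keeps alert channels quiet while the API is healthy.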

5. Integrate with CI/CD Pipelines

- Add API tests in Jenkins, GitHub Actions, or GitLab CI/CD.

- Run functional and performance tests during deployments.

- Prevent faulty API updates from going live.
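One common way to run Postman collections in a pipeline is Newman, Postman's command-line collection runner, which is not covered elsewhere in this post. The sketch below assumes a report produced by Newman's JSON reporter, where assertion counters sit under `run.stats.assertions` and failed assertions are listed under `run.failures`. The sample report is trimmed and hand-written for illustration.

```javascript
// Decides whether a deployment should be blocked, based on a Newman JSON report.
// Assumes the JSON reporter layout: run.stats.assertions and run.failures.
function evaluateReport(report) {
  const assertions = report.run.stats.assertions;
  const failures = report.run.failures || [];
  return {
    total: assertions.total,
    failed: assertions.failed,
    shouldBlockDeploy: assertions.failed > 0 || failures.length > 0,
  };
}

// Trimmed, hand-written report used only for illustration.
const sampleReport = {
  run: {
    stats: { assertions: { total: 6, failed: 1 } },
    failures: [{ error: { message: "expected 503 to equal 200" } }],
  },
};

const gate = evaluateReport(sampleReport);
console.log(gate);
// In a real pipeline step you could set: process.exitCode = gate.shouldBlockDeploy ? 1 : 0;
```

Newman already exits with a non-zero code when assertions fail, so a custom gate like this is mainly useful when you want extra rules, such as tolerating failures in non-critical requests.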

6. Ensure API Security & Compliance

- Implement Rate Limiting & Authentication Checks.

- Monitor API for malicious requests (SQL injection, XSS, etc.).

- Ensure compliance with GDPR, HIPAA, or other regulations.

7. Regularly Review and Optimize Monitoring

- Conduct monthly API performance reviews.

- Adjust alert thresholds based on historical trends.

- Improve monitoring coverage for new API endpoints.

Conclusion

API monitoring helps prevent issues before they impact users. By using the right tools and strategies, businesses can minimize downtime, improve efficiency, and provide seamless digital experiences. To achieve robust API monitoring, expert guidance can make a significant difference. Codoid, a leading software testing company, provides comprehensive API testing and monitoring solutions, ensuring APIs function optimally under various conditions.

Frequently Asked Questions

Why is API monitoring important?

API monitoring helps detect downtime early, improves performance, ensures reliability, enhances security, and optimizes third-party API usage.

How can I set up API monitoring in Postman?

You can create a Postman Collection, add test scripts, configure Postman Monitor, set up alerts, and analyze reports to track API performance.

How does API monitoring improve security?

API monitoring detects unusual traffic patterns, unauthorized access attempts, and potential vulnerabilities, ensuring a secure API environment.

How do I set up alerts for API failures?

Alerts can be configured in Postman via email, Slack, Microsoft Teams, or PagerDuty to notify teams in real-time about API issues.

What are best practices for API monitoring?

- Define clear monitoring goals.

- Use different types of monitoring (real-time, synthetic, security).

- Set up automated alerts for quick response.

- Conduct load and stress testing.

- Regularly review and optimize monitoring settings.

by Charlotte Johnson | Mar 6, 2025 | Software Testing, Blog, Latest Post |

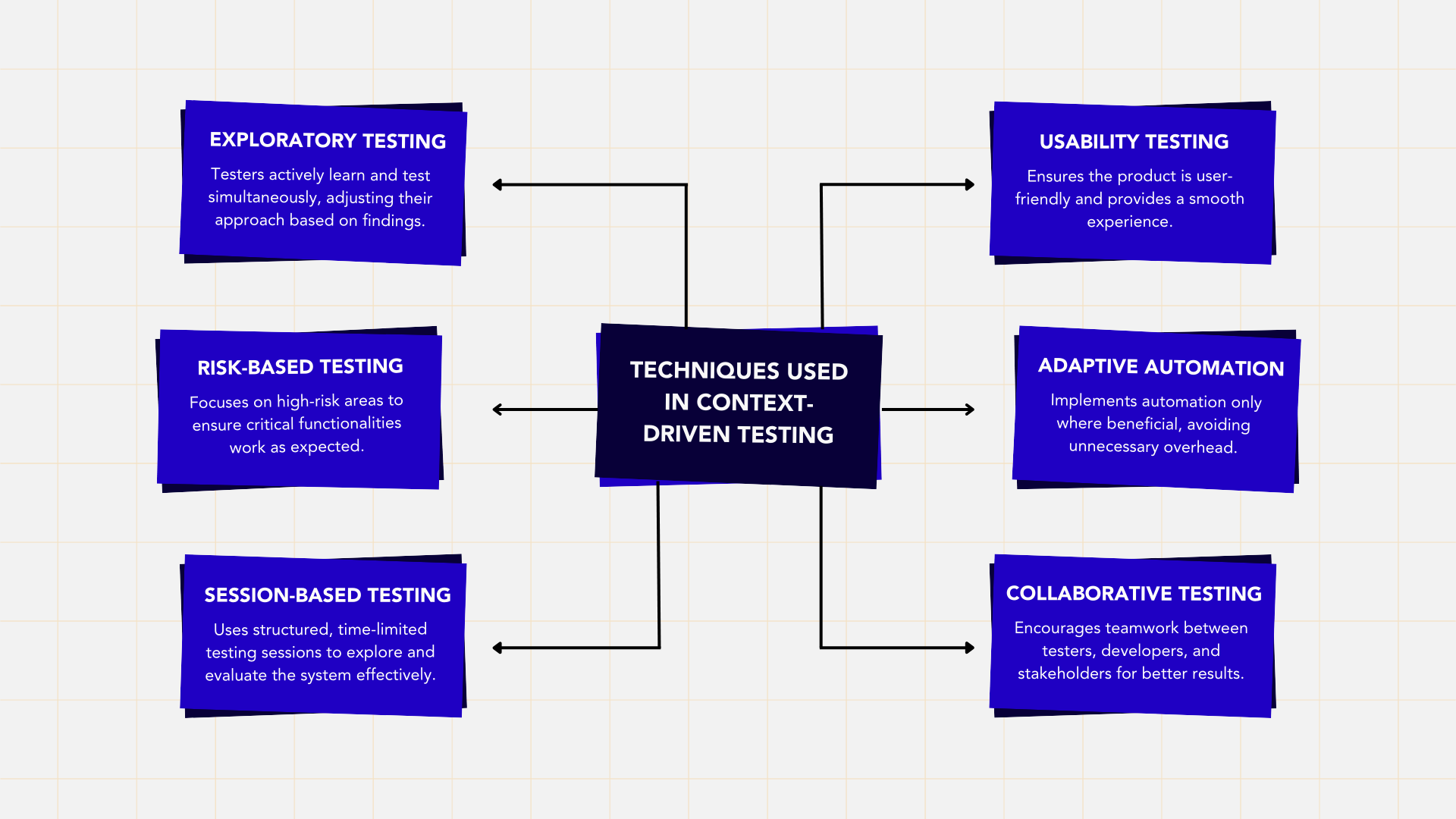

Many traditional software testing methods follow strict rules, assuming that the same approach works for every project. However, every software project is different, with unique challenges, requirements, and constraints. Context-Driven Testing (CDT) is a flexible testing approach that adapts strategies based on the specific needs of a project. Instead of following fixed best practices, CDT encourages testers to think critically and adjust their methods based on project goals, team skills, budget, timelines, and technical limitations. This approach was introduced by Cem Kaner, James Bach, and Bret Pettichord, who emphasized that there are no universal testing rules, only practices that work well in a given context. CDT is particularly useful in agile projects, startups, and rapidly changing environments where requirements often shift. It allows testers to adapt in real time, ensuring testing remains relevant and effective. Unlike traditional methods that focus only on whether the software meets requirements, CDT ensures the product actually solves real problems for users. By promoting flexibility, collaboration, and problem-solving, Context-Driven Testing helps teams create high-quality software that meets both business and user expectations. It is a practical, efficient, and intelligent approach to testing in today’s fast-paced software development world.

The Evolution of Context-Driven Testing in Software Development

Software testing has evolved from rigid, standardized processes to more flexible and adaptive approaches. Context-driven testing (CDT) emerged as a response to traditional frameworks that struggled to handle the unique needs of different projects.

Early Testing: A Fixed Approach

Initially, software testing followed strictly defined processes with heavy documentation and structured test cases. Waterfall models required extensive upfront planning, making it difficult to adapt to changes. These methods often led to:

- Lack of flexibility in dynamic projects

- Inefficient use of resources, focusing on documentation over actual testing

- Misalignment with business needs, causing ineffective testing outcomes

The Shift Toward Agile and Exploratory Testing

With the rise of Agile development, testing became more iterative and collaborative, allowing testers to:

- Think critically instead of following rigid scripts

- Adapt quickly to changes in project requirements

- Prioritize business value over just functional correctness

However, exploratory testing lacked a structured decision-making framework, leading to the need for Context-Driven Testing.

The Birth of Context-Driven Testing

CDT was introduced by Cem Kaner, James Bach, and Bret Pettichord as a flexible, situational approach to testing. It focuses on:

- Tailoring testing methods based on project context

- Encouraging collaboration between testers, developers, and stakeholders

- Adapting continuously as projects evolve

This made CDT highly effective for Agile, DevOps, and fast-paced development environments.

CDT in Modern Software Development

Today, CDT remains crucial in handling complex software systems such as AI-driven applications and IoT devices. It continues to evolve by:

- Integrating AI-based testing for smarter test coverage

- Working with DevOps for continuous, real-time testing

- Focusing on risk-based testing to address critical system areas

By adapting to real-world challenges, CDT ensures efficient, relevant, and high-impact testing in today’s fast-changing technology landscape.

The Seven Key Principles of Context-Driven Testing

1. The value of any practice depends on its context.

2. There are good practices in context, but there are no best practices.

3. People, working together, are the most important part of any project’s context.

4. Projects unfold over time in ways that are often not predictable.

5. The product is a solution. If the problem isn’t solved, the product doesn’t work.

6. Good software testing is a challenging intellectual process.

7. Only through judgment and skill, exercised cooperatively throughout the entire project, are we able to do the right things at the right times to effectively test our products.

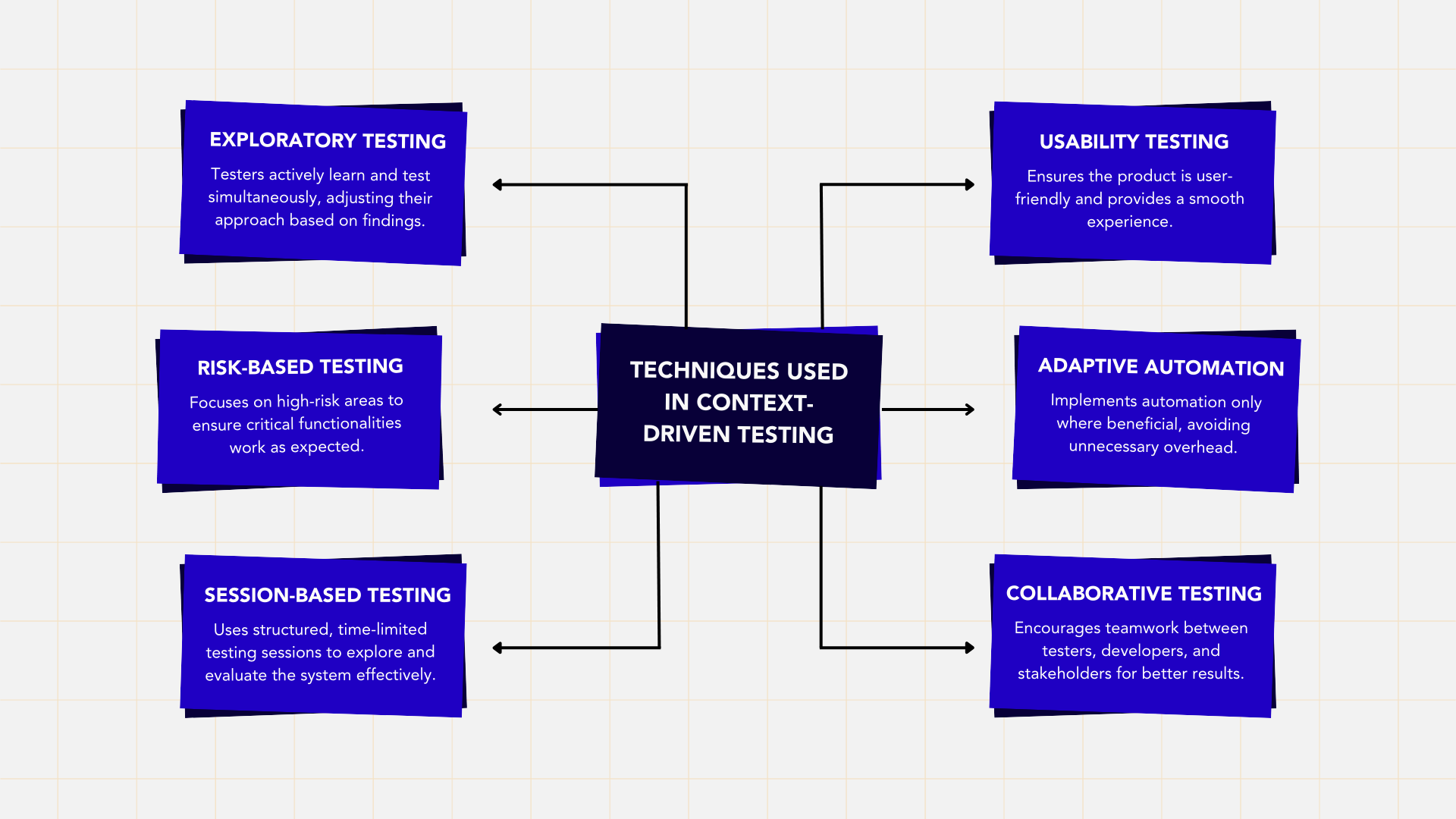

Step-by-Step Guide to Adopting Context-Driven Testing

Adopting Context-Driven Testing (CDT) requires a flexible mindset and a willingness to adapt testing strategies based on project needs. Unlike rigid frameworks, CDT focuses on real-world scenarios, team collaboration, and continuous learning. Here’s how to implement it effectively:

- Understand the Project Context – Identify key business goals, technical constraints, and potential risks to tailor the testing approach.

- Choose the Right Testing Techniques – Use exploratory testing, risk-based testing, or session-based testing depending on project requirements.

- Encourage Tester Autonomy – Allow testers to make informed decisions and think critically rather than strictly following predefined scripts.

- Collaborate with Teams – Work closely with developers, business analysts, and stakeholders to align testing efforts with real user needs.

- Continuously Adapt – Modify testing strategies as the project evolves, focusing on areas with the highest impact.

By following these steps, teams can ensure effective, relevant, and high-quality testing that aligns with real-world project demands.
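Risk-based testing, one of the techniques named above, is often supported by a simple scoring exercise. The sketch below ranks product areas by a score of likelihood multiplied by impact, each rated from 1 (low) to 5 (high). The areas and ratings are made-up examples, and in a context-driven team the numbers would come from discussion with stakeholders rather than from a fixed formula.

```javascript
// Illustrative risk scoring: riskScore = likelihood x impact, each rated 1 to 5.
// Areas and ratings are invented for the example.
function prioritizeByRisk(areas) {
  return areas
    .map((area) => ({ ...area, riskScore: area.likelihood * area.impact }))
    .sort((a, b) => b.riskScore - a.riskScore);
}

const ranked = prioritizeByRisk([
  { name: "Payment processing", likelihood: 3, impact: 5 },
  { name: "Profile page styling", likelihood: 2, impact: 1 },
  { name: "Login and session handling", likelihood: 4, impact: 5 },
]);

ranked.forEach((area) => console.log(area.riskScore + "  " + area.name));
```

The ranking is a conversation starter, not a verdict. Testers are still expected to revisit the ratings as the project and its context change.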

Case Studies: Context-Driven Testing in Action

These case studies demonstrate how Context-Driven Testing (CDT) adapts to different industries and project needs by applying flexible, risk-based, and user-focused testing methods. Unlike rigid testing frameworks, CDT helps teams prioritize critical aspects, optimize testing efforts, and adapt to evolving requirements, ensuring high-quality software that meets real-world demands.

Case Study 1: Ensuring Security in Online Banking

Client: A financial institution launching an online banking platform.

Challenge: Ensuring strict security and compliance due to financial regulations.

How CDT Helps:

Banking applications deal with sensitive financial data, making security and compliance top priorities. CDT allows testers to focus on high-risk areas, choosing testing techniques that best suit security needs instead of following a generic testing plan.

Context-Driven Approach:

- Security Testing: Identified vulnerabilities like SQL injection, unauthorized access, and session hijacking through exploratory security testing.

- Compliance Testing: Ensured the platform met industry regulations (e.g., PCI-DSS, GDPR) by adapting testing to legal requirements.

- Load Testing: Simulated peak transaction loads to check performance under heavy usage.

- Exploratory Testing: Assessed UI/UX usability, identifying any issues affecting the user experience.

Outcome: A secure, compliant, and user-friendly banking platform that meets regulatory requirements while providing a smooth customer experience.

Case Study 2: Handling High Traffic for an E-Commerce Platform

Client: A startup preparing for a Black Friday sale.

Challenge: Ensuring the website can handle high traffic volumes without performance failures.

How CDT Helps:

E-commerce businesses face seasonal traffic spikes, which can lead to website crashes and lost sales. CDT helps by prioritizing performance and scalability testing while considering time and budget constraints.

Context-Driven Approach:

- Performance Testing: Simulated real-time Black Friday traffic to test site stability under heavy loads.

- Cloud-Based Load Testing: Used cost-effective cloud testing tools to manage high-traffic scenarios within budget.

- Collaboration with Developers: Worked closely with developers to identify and resolve bottlenecks affecting website performance.

Outcome: A stable, high-performing e-commerce website capable of handling increased user traffic without downtime, maximizing sales during peak shopping events.

Case Study 3: Testing an IoT-Based Smart Home Device

Client: A company launching a smart thermostat with WiFi and Bluetooth connectivity.

Challenge: Ensuring seamless connectivity, ease of use, and durability in real-world conditions.

How CDT Helps:

Unlike standard software applications, IoT devices operate in varied environments with different network conditions. CDT allows testers to focus on real-world usage scenarios, adapting testing based on device behavior and user expectations.

Context-Driven Approach:

- Usability Testing: Ensured non-technical users could set up and configure the device easily.

- Network Testing: Evaluated WiFi and Bluetooth stability under different network conditions.

- Environmental Testing: Tested durability by simulating temperature and humidity variations.

- Real-World Scenario Testing: Assessed performance outside lab conditions, ensuring the device functions as expected in actual homes.

Outcome: A user-friendly, reliable smart home device tested under real-world conditions, ensuring smooth operation for end users.

Advantages of Context-Driven Testing

- Adaptability: Adjusts to project-specific needs rather than following rigid processes.

- Focus on Business Goals: Ensures testing efforts align with what matters most to the business.

- Encourages Critical Thinking: Testers make informed decisions rather than blindly executing test cases.

- Effective Resource Utilization: Saves time and effort by prioritizing relevant tests.

- Higher Quality Feedback: Testing aligns with real-world usage rather than theoretical best practices.

- Increased Collaboration: Encourages better communication between testers, developers, and stakeholders.

Challenges of Context-Driven Testing

- Requires Skilled Testers: Testers must have deep analytical skills and domain knowledge.

- Difficult to Standardize: Organizations that prefer fixed processes may find it hard to implement.

- Needs Strong Communication: Collaboration is key, as the approach depends on aligning with stakeholders.

- Potential Pushback from Management: Some organizations prefer strict guidelines and may resist a flexible approach.

Best Practices for Context-Driven Testing Success

To effectively implement Context-Driven Testing (CDT), teams must embrace flexibility, critical thinking, and collaboration. Here are some best practices to ensure success:

- Understand the Project Context – Identify business goals, user needs, technical limitations, and risks before choosing a testing approach.

- Choose Testing Techniques Wisely – Use exploratory, risk-based, or session-based testing based on project requirements.

- Encourage Tester Independence – Allow testers to think critically, explore, and adapt instead of just following predefined scripts.

- Promote Collaboration – Engage developers, business analysts, and stakeholders to align testing with business needs.

- Be Open to Change – Adjust testing strategies as requirements evolve and new challenges arise.

- Balance Manual and Automated Testing – Automate only where valuable, focusing on repetitive or high-risk areas.

- Measure and Improve Continuously – Track testing effectiveness, gather feedback, and refine the process for better results.

Conclusion

Context-Driven Testing (CDT) is a flexible, adaptive, and real-world-focused approach that ensures testing aligns with the unique needs of each project. Unlike rigid, predefined testing methods, CDT allows testers to think critically, collaborate effectively, and adjust strategies based on evolving project requirements. This makes it especially valuable in Agile, DevOps, and rapidly changing development environments. For businesses looking to apply CDT effectively, Codoid offers expert testing services, including exploratory, automation, performance, and usability testing. Their customized approach helps teams build high-quality, user-friendly software while adapting to project challenges.

Frequently Asked Questions

What Makes Context-Driven Testing Different from Traditional Testing Approaches?

Context-driven testing is about adjusting to the specific needs of a project instead of sticking to set methods. It is different from the traditional way of testing. This approach values flexibility and creativity, helping to meet specific needs well. By using this tailored method, it improves test coverage and makes sure testing work closely matches the project goals.

How Do You Determine the Context for a Testing Project?

To understand the project context for testing, you need to look at project requirements, the needs of stakeholders, and current systems. Think about things like how big the project is, its timeline, and any risks involved. These factors will help you adjust your testing plan. Using development tools can also help make sure your testing fits well with the project context.

Can Context-Driven Testing Be Automated?

Context-driven testing cannot be fully automated because it relies on flexibility and human insight. Still, automated tools can handle certain tasks, such as regression testing, which frees testers for manual work where understanding the details of a situation is important.

How Does Context-Driven Testing Fit into DevOps Practices?

Context-driven testing works well with DevOps practices by adjusting to the changing development environment. It focuses on being flexible, getting quick feedback, and working together, which are important in continuous delivery. By customizing testing for each project, it improves software quality and speeds up deployment cycles.

What Are the First Steps in Transitioning to Context-Driven Testing?

To switch to context-driven testing, you need to know the project requirements very well. Adjust your test strategies to meet these needs. Work closely with stakeholders to ensure everyone is on the same page with testing. Include ways to gather feedback for ongoing improvement and flexibility. Use tools that fit in well with adaptable testing methods.

by Anika Chakraborty | Mar 5, 2025 | Accessibility Testing, Blog, Latest Post |

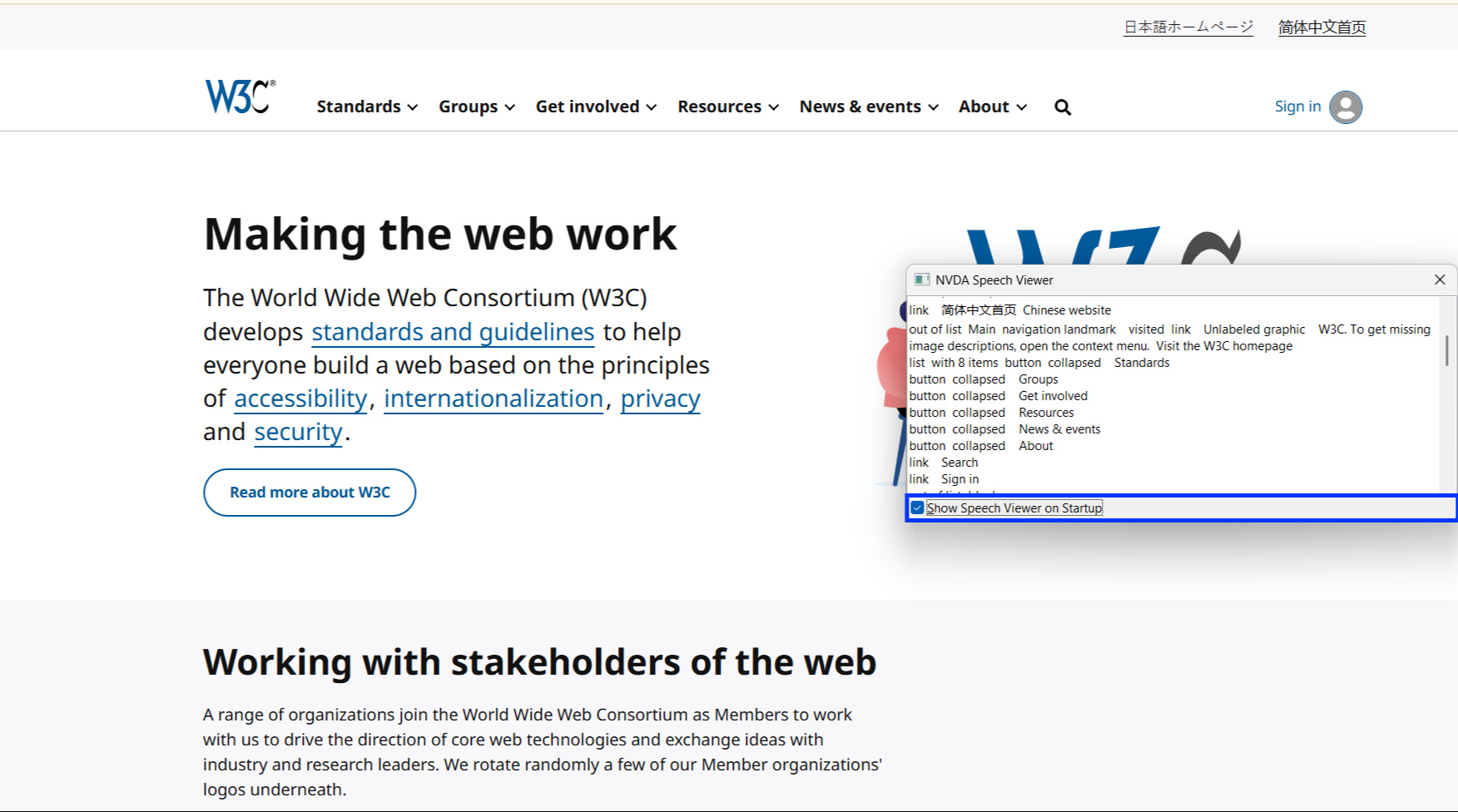

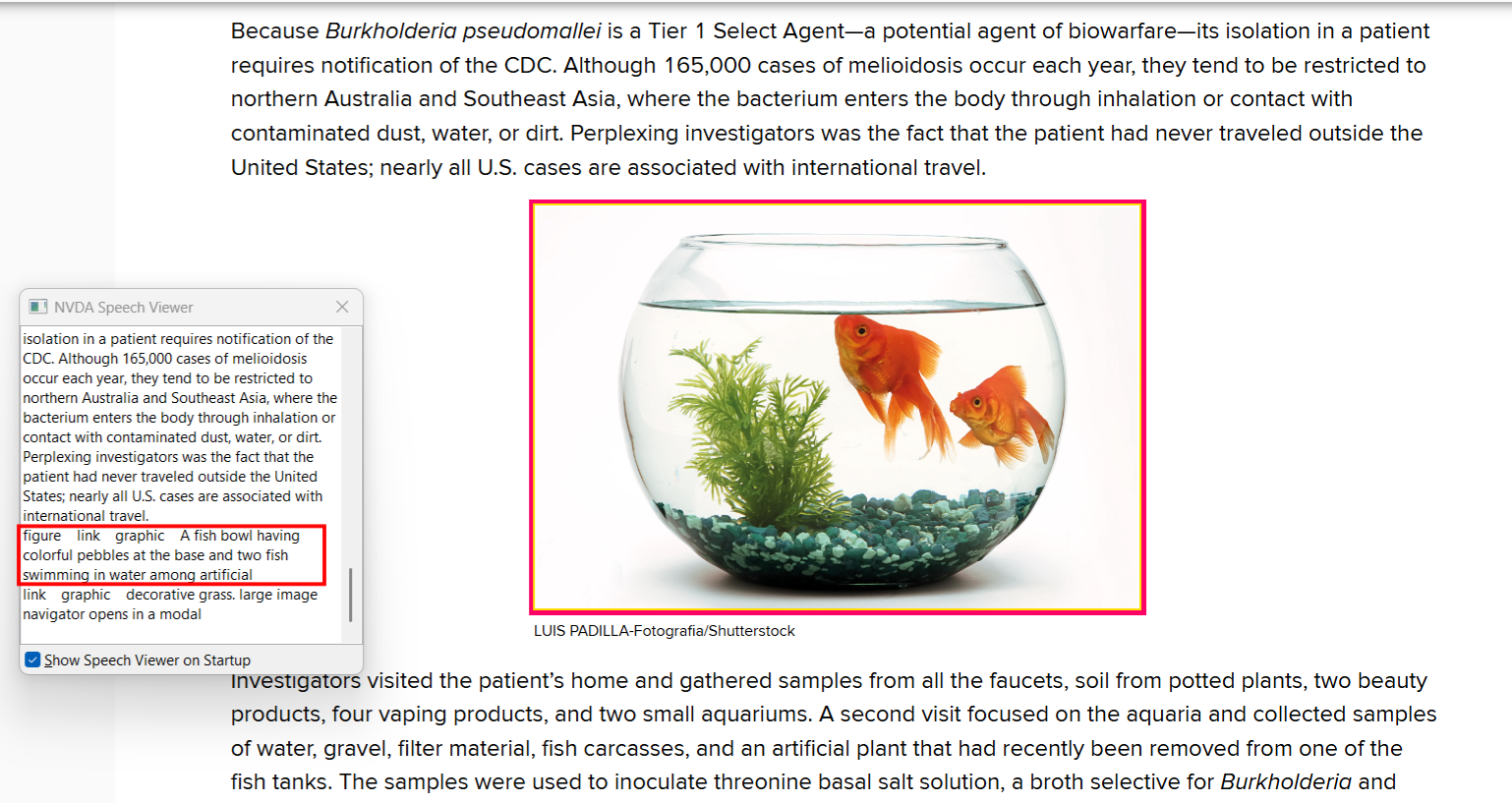

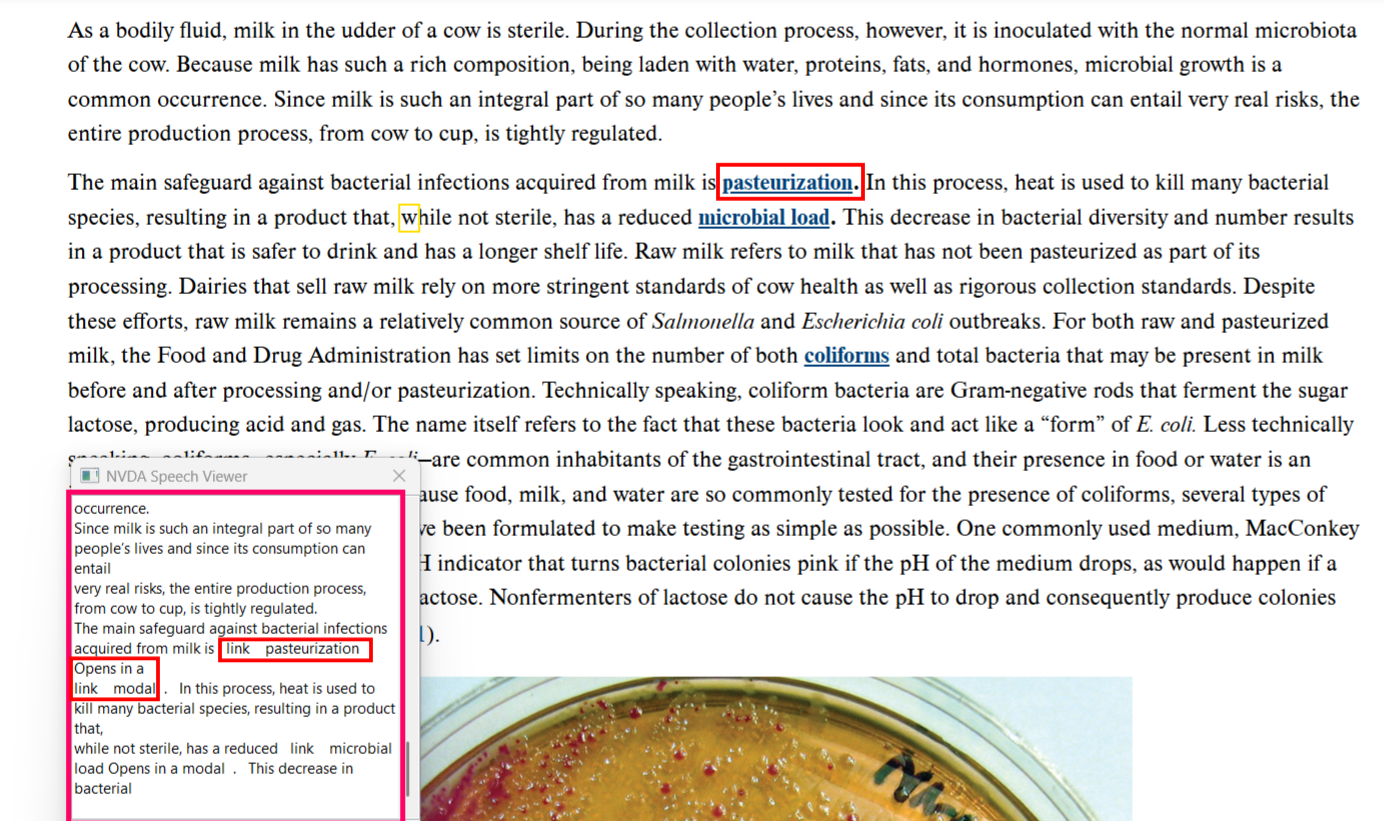

NVDA (NonVisual Desktop Access) is a powerful screen reader designed to assist individuals with visual impairments in navigating and interacting with digital content. It enables users to access Windows-based applications, websites, documents, and emails by converting on-screen text into speech and Braille output. With support for multiple languages and Braille displays, NVDA provides accessibility across various digital platforms. It also offers customizable keyboard shortcuts and speech synthesis options, allowing users to tailor their experience based on individual needs. In addition to its role in daily accessibility, NVDA is an essential tool for accessibility testing, helping organizations evaluate whether their digital products meet key accessibility standards such as WCAG, Section 508, ADA, and EN 301 549. By simulating how visually impaired users interact with websites and applications, testers can identify and fix accessibility barriers, ensuring an inclusive digital experience. This blog will guide you through how to use NVDA effectively, covering installation, basic navigation, and advanced features like web browsing, document reading, application accessibility, and accessibility testing. Whether you’re a beginner or an experienced user, this tutorial will help you maximize NVDA’s capabilities for seamless digital access.

Why Choose NVDA for Accessibility Testing?

NVDA is widely used by many visually impaired users due to its reliability, accessibility, and powerful features. As one of the most popular screen readers, it plays a crucial role in accessibility testing, ensuring that websites and applications are compatible with real-world usage.

As part of the testing process, NVDA is utilized to evaluate accessibility and verify compliance with WCAG and other accessibility standards. Its features make it an essential tool for testers in identifying and addressing accessibility barriers.

- Free & Open-Source – Available at no cost, making it accessible to everyone.

- Multi-Language Support – Supports various languages and voice options for diverse users.

- Braille Compatibility – Works with external braille displays, expanding accessibility.

- Keyboard Navigation – Enables seamless interaction using hotkeys, crucial for users relying on keyboard controls.

- Website & App Testing – Helps validate accessibility compliance and usability.

- Continuous Updates – Regular improvements enhance performance and functionality.

- Lightweight & Fast – Runs efficiently on low-end devices, making it widely accessible.

Since many disabled users depend on NVDA, it should be included in accessibility testing, along with other screen readers like JAWS, VoiceOver, and Narrator. Its use ensures that digital products are accessible, user-friendly, and inclusive for all.

How to Install NVDA (Step-by-Step):

1. Download NVDA: Get the installer from the official NV Access website.

2. Open the Installer: Locate the downloaded .exe file in your downloads folder.

3. Confirm Installation: Click “Yes” in the pop-up dialog box that appears.

4. Choose Installation Options: Select your preferred installation options (such as installing for all users or just for yourself).

5. Start Installation: Click Install to begin the process.

6. Complete Installation: Once the installation is complete, click Finish. You may be given an option to launch NVDA immediately.

7. Restart: Restart your computer if prompted to ensure smooth functionality.

How to Perform NVDA Testing

1. Check the Navigation

- Check if all interactive elements (buttons, links, forms) receive focus.

- Ensure the focus moves in a logical order and does not jump randomly.

2. Verify Headings Structure

- Ensure headings are labeled correctly (H1, H2, H3, etc.).

- Use the H key to navigate through headings efficiently.

3. Test Readability & Content Order

- Use the Down Arrow key to check if content is read in a logical sequence.

- Navigate backward using the Up Arrow key to ensure text flows naturally.

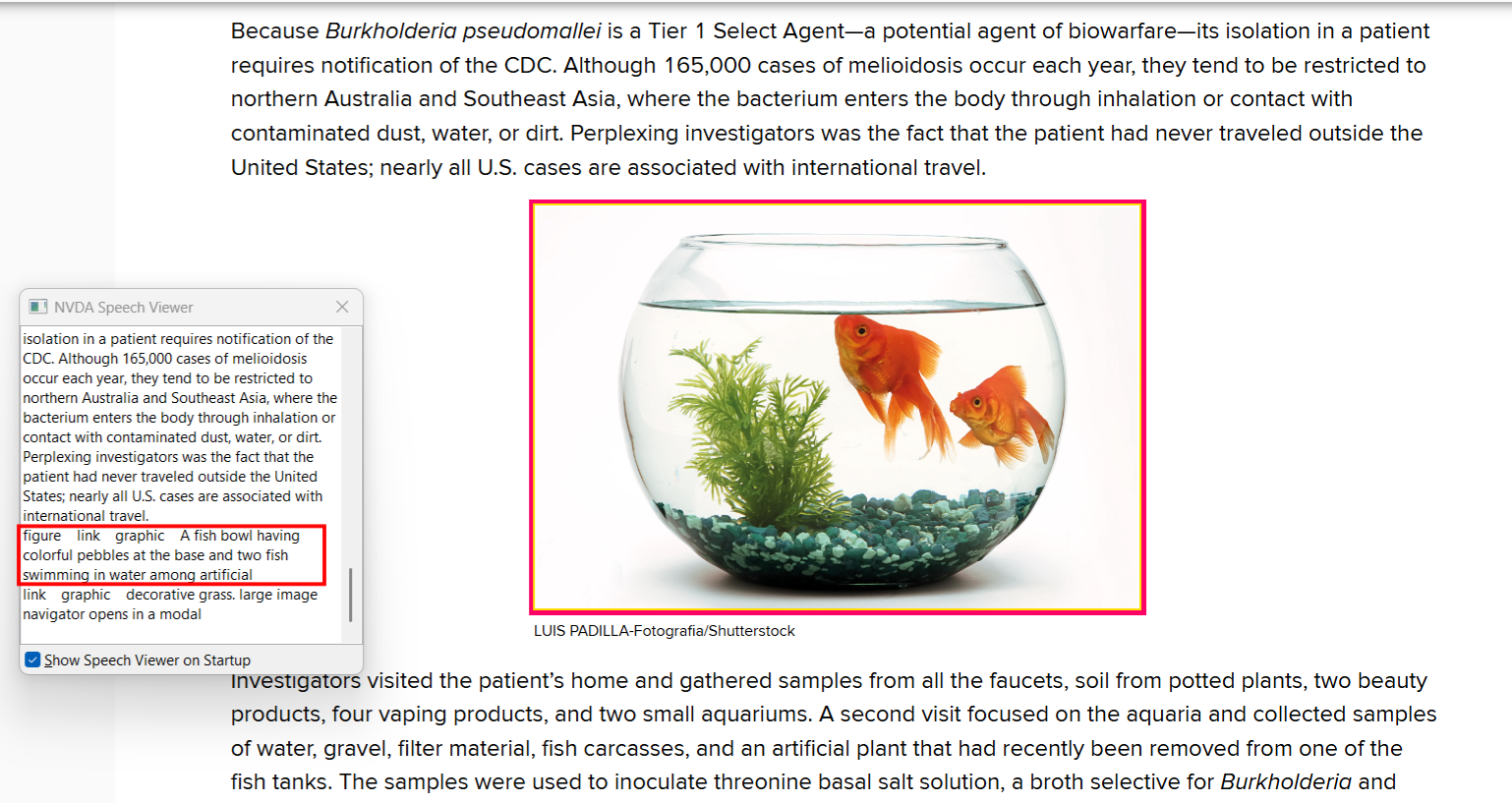

4. Check Alt Text for Images

- Ensure all images have meaningful alt text that describes their content.

- NVDA should correctly announce the image descriptions.

5. Validate Forms

- Ensure form fields have appropriate labels.

- Check that NVDA reads out each form element correctly.

- Test checkboxes, radio buttons, and combo boxes for accessibility.

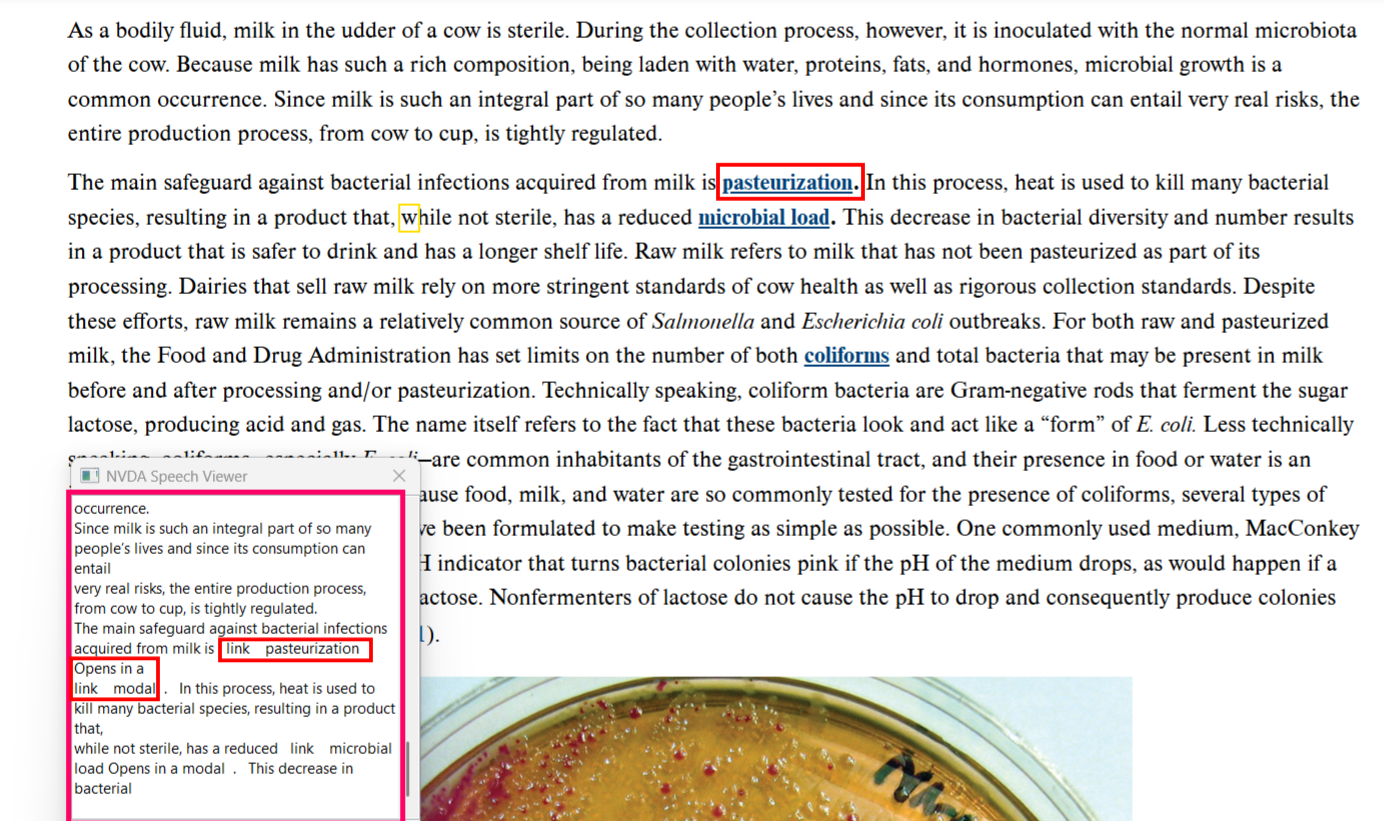

6. Verify Links & Buttons

- Replace generic text like “Click Here” with descriptive links (e.g., “Download Guide”).

- Ensure buttons are labeled clearly and announced properly by NVDA.

7. Test Multimedia Accessibility

- Ensure videos include captions or transcripts for better accessibility.

- Avoid auto-playing videos without user control.

- Provide alternative text for non-text content such as charts or infographics.
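Some of the checks above can be pre-screened with a script before you listen to the page with NVDA. The sketch below works on plain data that is assumed to have been extracted from the page already: heading levels in document order, link texts, and image records. The function names, the list of generic phrases, and the input shapes are all illustrative assumptions.

```javascript
// Phrases treated as non-descriptive link text (an illustrative list, extend as needed).
const GENERIC_LINK_TEXT = new Set(["click here", "here", "read more", "more", "link"]);

// Flags places where the heading level jumps by more than one (e.g. H2 followed by H4).
function findSkippedHeadings(levels) {
  const issues = [];
  for (let i = 1; i < levels.length; i++) {
    if (levels[i] > levels[i - 1] + 1) {
      issues.push("H" + levels[i - 1] + " is followed by H" + levels[i]);
    }
  }
  return issues;
}

// Returns link texts that give no hint about the link's destination.
function findGenericLinks(linkTexts) {
  return linkTexts.filter((text) => GENERIC_LINK_TEXT.has(text.trim().toLowerCase()));
}

// An empty alt ("") is valid for decorative images, so only a missing alt is flagged.
function findImagesMissingAlt(images) {
  return images.filter((img) => typeof img.alt !== "string").map((img) => img.src);
}

console.log(findSkippedHeadings([1, 2, 4, 2, 3]));
console.log(findGenericLinks(["Click Here", "Download Guide"]));
console.log(findImagesMissingAlt([
  { src: "chart.png", alt: "Sales by quarter" },
  { src: "banner.png" },
  { src: "divider.png", alt: "" },
]));
```

A script like this only catches structural problems. Whether the alt text is meaningful or the reading order makes sense still has to be judged by navigating the page with NVDA.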

Basic NVDA Commands:

| S. No | Action | Shortcut |
| --- | --- | --- |
| 1 | Turn NVDA on | Ctrl + Alt + N |
| 2 | Turn NVDA off | Insert + Q |
| 3 | Stop reading | Ctrl |
| 4 | Start reading continuously | Insert + Down Arrow |
| 5 | Read next item | Down Arrow |
| 6 | Activate link or button | Enter or Spacebar |
| 7 | Open NVDA menu | Insert + N |

Navigation Commands:

| S. No | Action | Shortcut |
| --- | --- | --- |
| 1 | Move to next heading | H |
| 2 | Move to previous heading | Shift + H |
| 3 | Move to next link | K |
| 4 | Move to previous link | Shift + K |
| 5 | Move to next unvisited link | U |
| 6 | Move to next visited link | V |
| 7 | Move to next table | T |
| 8 | Move to next list | L |

Table Navigation:

| S. No | Action | Shortcut |
| --- | --- | --- |
| 1 | Move between cells inside a table | Ctrl + Alt + Arrow keys |

Text Reading:

| S. No | Action | Shortcut |
| --- | --- | --- |
| 1 | Read previous word | Ctrl + Left Arrow |
| 2 | Read next word | Ctrl + Right Arrow |
| 3 | Read character by character | Left/Right Arrow |

Form Navigation:

| S. No | Action | Shortcut |
| --- | --- | --- |
| 1 | Move to next form field | F |
| 2 | Move to previous form field | Shift + F |
| 3 | Move to next checkbox | X |
| 4 | Move to previous checkbox | Shift + X |
| 5 | Move to next radio button | R |

Avoid Visual Reliance with NVDA

- Bold or color changes should not be the only way to highlight important text. Use semantic HTML tags like ‘strong’ or ‘em’ so the emphasis exists in the markup and not only in the styling.

- CAPTCHAs should have audio alternatives for visually impaired users.

- Ensure hover effects or animations are accessible and not essential for navigation.

Troubleshooting Common NVDA Issues

When using NVDA for accessibility testing or daily tasks, some common issues may arise. Below are frequent problems and their solutions:

NVDA is not starting

- Restart the system and check for conflicting applications.

- If the issue persists, reinstall NVDA.

No speech output

- Ensure the volume is turned up.

- Check NVDA settings and select the correct speech synthesizer.

Text is being read incorrectly

- Verify that the website or application has proper ARIA labels and semantic HTML.

- Test with another screen reader to confirm the issue.

Keyboard shortcuts are not working

- Ensure NVDA is not in sleep mode.

- Restart NVDA and check shortcut settings.

Dynamic content is not being read

- Enable “Report dynamic content changes” in NVDA settings, and check that the page marks the updating area as an ARIA live region.

- Refresh the page manually if necessary.

Performance is slow or laggy

- Close unnecessary background applications.

- Adjust NVDA settings for better performance and restart the system.

Resolving these issues promptly keeps NVDA reliable for accessibility testing and helps ensure a smooth experience for users who rely on screen readers.

Conclusion

NVDA (NonVisual Desktop Access) is a highly effective screen reader that empowers visually impaired users to navigate and interact with digital content effortlessly. With its text-to-speech conversion, Braille display support, and customizable keyboard shortcuts, NVDA enhances accessibility across various applications, including web browsing, document editing, and software operations. Its continuous updates and broad compatibility make it a reliable solution for both individuals and organizations seeking to create inclusive digital experiences.

At Codoid, we recognize the importance of accessibility in modern software development. Our accessibility testing services ensure that digital platforms comply with accessibility standards such as WCAG and Section 508, making them user-friendly for individuals with disabilities. By leveraging tools like NVDA, we help businesses enhance their software’s usability, ensuring equal access for all users.

Frequently Asked Questions

- How can I switch between different speech synthesizers in NVDA?

To change speech synthesizers in NVDA, press NVDA+N. This will open the NVDA menu. Go to Preferences and then Settings, and click on Speech. In the Synthesizer area, you can pick your preferred synthesizer from the drop-down menu. You can also change the speech rate and other voice settings in the same window.

- Can NVDA be used on mobile devices or tablets?

NVDA is built for the Windows operating system. It does not run on Android or iOS phones and tablets.

- What are some must-have add-ons for NVDA users?

The most useful add-ons vary from person to person. Popular choices are add-ons that help with navigating websites, provide better support for specific applications, or add features that make everyday use more comfortable.

- How do I update NVDA, and how often should I do it?

To update NVDA, visit the NV Access website and download the latest version. Install each new release when it becomes available to get bug fixes, new features, and better performance.

- What should I do if NVDA is not working with a specific application?

If you have problems with compatibility, try running the app in administrator mode. You can also check for updates. Another option is to look at online forums or the app developer's website. They might have information about known issues or solutions.